Our customers want to make sure their users have the best experience running their application on AWS. To make this happen, you need to monitor and fix software problems as quickly as possible. This gets challenging as the volume of data that must be quickly detected, analyzed, and stored keeps growing. In this post, we walk you through an automated process to aggregate and monitor logging-application data in near-real time, so you can remediate application issues faster.

This post shows how to unify and centralize logs across different computing platforms. With this solution, you can unify logs from Amazon Elastic Compute Cloud (Amazon EC2), Amazon Elastic Container Service (Amazon ECS), Amazon Elastic Kubernetes Service (Amazon EKS), Amazon Kinesis Data Firehose, and AWS Lambda using agents, log routers, and extensions. We use Amazon OpenSearch Service (successor to Amazon Elasticsearch Service) with OpenSearch Dashboards to visualize and analyze the logs collected across these platforms and gain application insights. You can deploy the solution using the AWS Cloud Development Kit (AWS CDK) scripts provided as part of the solution.

Customer benefits

A unified aggregated log system provides the following benefits:

- A single point of access to all the logs across different computing platforms

- Help defining and standardizing the transformations of logs before they get delivered to downstream systems like Amazon Simple Storage Service (Amazon S3), Amazon OpenSearch Service, Amazon Redshift, and other services

- The ability to use Amazon OpenSearch Service to quickly index, and OpenSearch Dashboards to search and visualize, logs from routers, applications, and other devices

Solution overview

In this post, we use the following services to demonstrate log aggregation across different compute platforms:

- Amazon EC2 – A web service that provides secure, resizable compute capacity in the cloud. It’s designed to make web-scale cloud computing easier for developers.

- Amazon ECS – A web service that makes it easy to run, scale, and manage Docker containers on AWS, designed to make the Docker experience easier for developers.

- Amazon EKS – A managed service that makes it easy to run Kubernetes on AWS without installing and operating your own Kubernetes control plane.

- Kinesis Data Firehose – A fully managed service that makes it easy to stream data to Amazon S3, Amazon Redshift, or Amazon OpenSearch Service.

- Lambda – A compute service that lets you run code without provisioning or managing servers.

- Amazon OpenSearch Service – A fully managed service that makes it easy for you to perform interactive log analytics, real-time application monitoring, website search, and more.

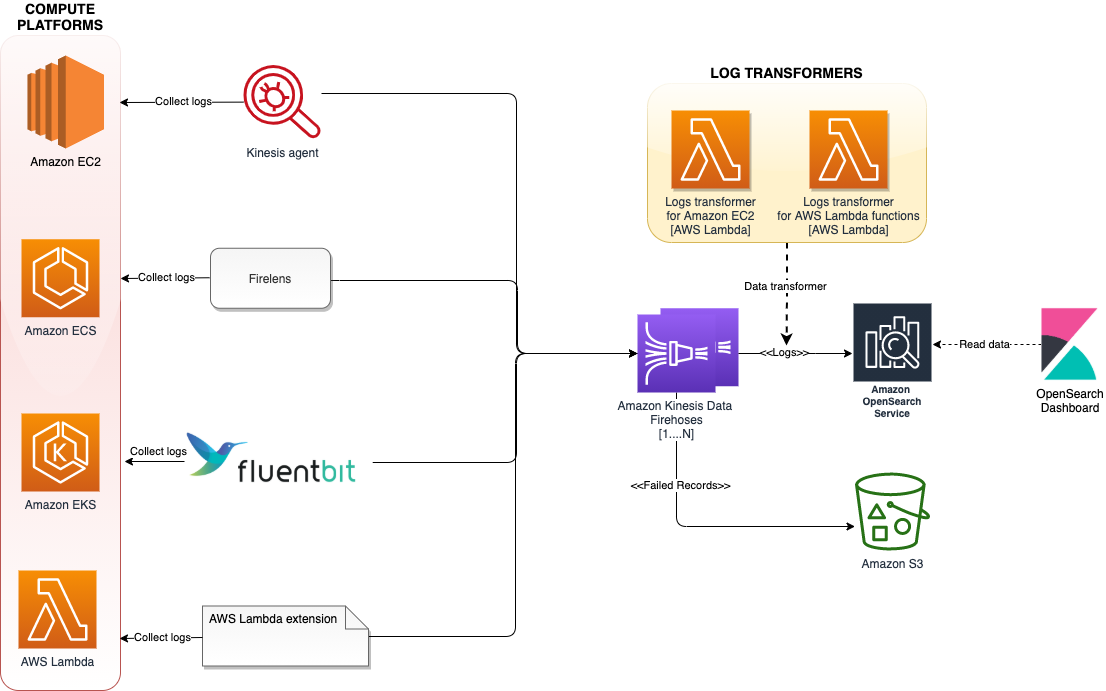

The next diagram exhibits the structure of our answer.

The architecture uses several log aggregation tools, such as log agents, log routers, and Lambda extensions, to collect logs from multiple compute platforms and deliver them to Kinesis Data Firehose. Kinesis Data Firehose streams the logs to Amazon OpenSearch Service. Log records that fail to be persisted in Amazon OpenSearch Service are written to Amazon S3. To scale this architecture, each compute platform streams its logs to a separate Firehose delivery stream, which writes to its own index that is rotated every 24 hours.
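To make the per-platform layout concrete, the following Python sketch builds the Amazon OpenSearch Service destination settings for one delivery stream per platform, in the shape boto3's Firehose create_delivery_stream expects. It is not the solution's CDK code. The ARNs, the lambda-logs-delivery-stream name, and the <platform>-logs index names are hypothetical placeholders. Setting IndexRotationPeriod to OneDay gives the daily rotation. Setting S3BackupMode to FailedDocumentsOnly sends only the records that fail indexing to Amazon S3.

```python
"""Sketch: one Firehose delivery stream per compute platform, rotated daily.

Assumptions (not taken from the solution's CDK code): the ARNs, the
lambda-logs-delivery-stream name, and the "<platform>-logs" index names are
hypothetical placeholders.
"""
from datetime import date

PLATFORMS = ["ec2", "ecs", "eks", "lambda"]


def opensearch_destination(platform: str, role_arn: str, domain_arn: str, bucket_arn: str) -> dict:
    """Build an AmazonopensearchserviceDestinationConfiguration dict for boto3."""
    return {
        "RoleARN": role_arn,
        "DomainARN": domain_arn,
        # One index per compute platform, for example "ec2-logs".
        "IndexName": f"{platform}-logs",
        # Firehose appends a date to the index name and starts a new index daily.
        "IndexRotationPeriod": "OneDay",
        # Only records that fail to be indexed are backed up to S3.
        "S3BackupMode": "FailedDocumentsOnly",
        "S3Configuration": {"RoleARN": role_arn, "BucketARN": bucket_arn},
    }


def expected_index_name(platform: str, day: date) -> str:
    """Index name written with OneDay rotation (IndexName-YYYY-MM-DD)."""
    return f"{platform}-logs-{day:%Y-%m-%d}"


def create_streams(role_arn: str, domain_arn: str, bucket_arn: str) -> None:
    """Create one delivery stream per platform (requires AWS credentials)."""
    import boto3  # imported lazily so the helpers above run without boto3

    firehose = boto3.client("firehose")
    for platform in PLATFORMS:
        firehose.create_delivery_stream(
            DeliveryStreamName=f"{platform}-logs-delivery-stream",
            DeliveryStreamType="DirectPut",
            AmazonopensearchserviceDestinationConfiguration=opensearch_destination(
                platform, role_arn, domain_arn, bucket_arn
            ),
        )
```

With daily rotation, Firehose appends the date to the index name (for example, ec2-logs-2022-03-07). This is why the indexes appear partitioned by date in OpenSearch Dashboards. Calling create_streams requires IAM roles that allow Firehose to write to the domain and the bucket. The two helper functions run without AWS access.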

The following sections demonstrate how the solution is implemented on each of these computing platforms.

Amazon EC2

The Kinesis agent collects and streams logs from the applications running on EC2 instances to Kinesis Data Firehose. The agent is a standalone Java software application that offers an easy way to collect and send data to Kinesis Data Firehose. The agent continuously monitors files and sends logs to the Firehose delivery stream.

The AWS CDK script provided as part of this solution deploys a simple PHP application that generates logs under the /etc/httpd/logs directory on the EC2 instance. The Kinesis agent is configured via /etc/aws-kinesis/agent.json to collect data from access_logs and error_logs, and to stream them periodically to Kinesis Data Firehose (ec2-logs-delivery-stream).
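The following Python sketch shows what such an agent.json could contain, built as a dict and printed as JSON. The Region (us-east-1) and the access_log*/error_log* file patterns are assumptions based on Apache's default log file names. Check the solution's startup script for the actual values.

```python
"""Sketch of /etc/aws-kinesis/agent.json for the EC2 instance.

Assumptions: us-east-1 as the Region, and Apache's default access_log and
error_log file names under /etc/httpd/logs. Adjust both to your environment.
"""
import json


def kinesis_agent_config(region, delivery_stream, log_dir="/etc/httpd/logs"):
    """Return the Kinesis agent configuration as a dict."""
    return {
        # Regional Firehose endpoint the agent sends records to.
        "firehose.endpoint": f"firehose.{region}.amazonaws.com",
        # One flow per monitored file pattern; both feed the same delivery stream.
        "flows": [
            {"filePattern": f"{log_dir}/access_log*", "deliveryStream": delivery_stream},
            {"filePattern": f"{log_dir}/error_log*", "deliveryStream": delivery_stream},
        ],
    }


if __name__ == "__main__":
    # Print the file contents; on the instance, redirect this to /etc/aws-kinesis/agent.json.
    print(json.dumps(kinesis_agent_config("us-east-1", "ec2-logs-delivery-stream"), indent=2))
```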

Because Amazon OpenSearch Service expects data in JSON format, you can add a call to a Lambda function that transforms the log data to JSON within Kinesis Data Firehose before it’s streamed to Amazon OpenSearch Service. The following is a sample input for the data transformer:

The following is our output:

We can enhance the Lambda function to extract the timestamp, HTTP, and browser information from the log data, and store them as separate attributes in the JSON document.
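The following Python transformation function is a minimal sketch of that enhancement. It assumes the access log uses Apache's combined log format. The function follows the Kinesis Data Firehose record-transformation contract: base64-encoded data comes in, and each record goes back out with the same recordId, a result, and re-encoded data. Along the way it parses each line into separate attributes. The field names are illustrative. Lines that don't match, such as error_log entries, are kept whole under a message attribute.

```python
"""Sketch of a Firehose data-transformation Lambda for httpd access logs.

Assumptions: each Firehose record holds one line in Apache "combined" log
format; the output field names are illustrative, not the solution's.
"""
import base64
import json
import re

# host ident user [timestamp] "method path protocol" status size "referer" "user-agent"
COMBINED_LOG = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<timestamp>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) (?P<protocol>[^"]+)" '
    r'(?P<status>\d{3}) (?P<size>\S+) "(?P<referer>[^"]*)" "(?P<user_agent>[^"]*)"'
)


def to_document(line):
    """Turn one log line into a JSON-ready dict; keep unparsed lines as 'message'."""
    match = COMBINED_LOG.match(line)
    if match is None:
        return {"message": line}
    doc = match.groupdict()
    doc["status"] = int(doc["status"])  # numeric status makes range queries easy
    return doc


def lambda_handler(event, context):
    """Firehose passes base64-encoded records and expects the same recordIds back."""
    output = []
    for record in event["records"]:
        line = base64.b64decode(record["data"]).decode("utf-8").rstrip("\n")
        doc = json.dumps(to_document(line)) + "\n"
        output.append({
            "recordId": record["recordId"],
            "result": "Ok",
            "data": base64.b64encode(doc.encode("utf-8")).decode("utf-8"),
        })
    return {"records": output}
```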

Amazon ECS

In the case of Amazon ECS, we use FireLens to send logs directly to Kinesis Data Firehose. FireLens is a container log router for Amazon ECS and AWS Fargate that gives you the extensibility to use the breadth of services at AWS or partner solutions for log analytics and storage.

The architecture hosts FireLens as a sidecar, which collects logs from the main container running an httpd application and sends them to Kinesis Data Firehose, which streams them to Amazon OpenSearch Service. The AWS CDK script provided as part of this solution deploys an httpd container hosted behind an Application Load Balancer. The httpd logs are pushed to Kinesis Data Firehose (ecs-logs-delivery-stream) through the FireLens log router.
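For reference, the following Python sketch builds the two container definitions a FireLens setup needs. The first is a Fluent Bit log router container marked with firelensConfiguration. The second is an httpd container whose awsfirelens log driver forwards its output through the kinesis_firehose Fluent Bit output plugin. The image tags, container names, and Region are assumptions, not values taken from the solution's CDK code.

```python
"""Sketch of ECS container definitions for a FireLens sidecar.

Assumptions: image tags, container names, and the Region are placeholders;
the solution's CDK code builds the equivalent task definition for you.
"""


def firelens_container_definitions(region, delivery_stream):
    log_router = {
        "name": "log_router",
        "image": "public.ecr.aws/aws-observability/aws-for-fluent-bit:stable",
        "essential": True,
        # Marks this container as the FireLens log router, backed by Fluent Bit.
        "firelensConfiguration": {"type": "fluentbit"},
    }
    app = {
        "name": "httpd",
        "image": "httpd:2.4",
        "essential": True,
        "portMappings": [{"containerPort": 80}],
        # httpd's stdout/stderr go to the sidecar, which forwards them to Firehose.
        "logConfiguration": {
            "logDriver": "awsfirelens",
            "options": {
                "Name": "kinesis_firehose",
                "region": region,
                "delivery_stream": delivery_stream,
            },
        },
    }
    return [log_router, app]
```

You could pass the returned list as containerDefinitions to the ECS RegisterTaskDefinition API (for example, through boto3's register_task_definition). You would also need the networking, CPU, memory, and IAM role settings your launch type requires, and the task role must allow writing to the delivery stream.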

Amazon EKS

With the recent announcement of Fluent Bit support for Amazon EKS, you no longer need to run a sidecar to route container logs from Amazon EKS pods running on Fargate. With the new built-in logging support, you can select a destination of your choice to send the records to. Amazon EKS on Fargate uses a version of Fluent Bit for AWS, an upstream conformant distribution of Fluent Bit managed by AWS.

The AWS CDK script provided as part of this solution deploys an NGINX container hosted behind an internal Application Load Balancer. The NGINX container logs are pushed to Kinesis Data Firehose (eks-logs-delivery-stream) through the Fluent Bit plugin.
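Fargate's built-in log router is configured through a ConfigMap named aws-logging in a namespace named aws-observability. The following Python sketch renders both objects using the kinesis_firehose Fluent Bit output. The Region is an assumption. The Fargate pod execution role also needs permission to write to the delivery stream.

```python
"""Sketch of the aws-observability namespace and aws-logging ConfigMap that
enable Fargate's built-in Fluent Bit log router for Amazon EKS.

Assumption: us-east-1 as the Region; the solution's CDK code applies an
equivalent manifest for you.
"""
import json


def fluent_bit_output(region, delivery_stream):
    # Fargate reads Fluent Bit [OUTPUT] sections from the output.conf key.
    return (
        "[OUTPUT]\n"
        "    Name kinesis_firehose\n"
        "    Match *\n"
        f"    region {region}\n"
        f"    delivery_stream {delivery_stream}\n"
    )


def logging_manifests(region, delivery_stream):
    namespace = {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": "aws-observability",
            # This label turns on Fargate logging.
            "labels": {"aws-observability": "enabled"},
        },
    }
    config_map = {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": "aws-logging", "namespace": "aws-observability"},
        "data": {"output.conf": fluent_bit_output(region, delivery_stream)},
    }
    return [namespace, config_map]


if __name__ == "__main__":
    # Wrap both objects in a v1 List so they can be applied as one document.
    items = logging_manifests("us-east-1", "eks-logs-delivery-stream")
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

You could save the printed List to a file and apply it with kubectl apply -f.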

Lambda

For Lambda functions, you can send logs directly to Kinesis Data Firehose using the Lambda extension. You can also deny the function permission to write the records to Amazon CloudWatch, for example by removing the CloudWatch Logs permissions from its execution role.

After deployment, the workflow is as follows:

- On startup, the extension subscribes to receive logs for the platform and function events. A local HTTP server, started inside the external extension, receives the logs.

- The extension buffers the log events in a synchronized queue and writes them to Kinesis Data Firehose via PUT records.

- The logs are sent to downstream systems.

- The logs are sent to Amazon OpenSearch Service.

The Firehose delivery stream name is specified as an environment variable (AWS_KINESIS_STREAM_NAME).
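The buffering step can be sketched in Python as follows. This is a simplified illustration, not the extension shipped with the solution. It assumes events arrive as the JSON arrays that the Lambda Logs API posts to the extension's local HTTP server, and it omits both the server and the Logs API subscription. The firehose_client argument is expected to behave like a boto3 Firehose client.

```python
"""Sketch of the extension's buffer-and-flush step.

Assumptions: events arrive as the JSON arrays the Lambda Logs API posts to the
extension's local HTTP server (objects with "time", "type", and "record");
the HTTP server and the Logs API subscription are omitted.
"""
import json
import os
import queue

MAX_BATCH = 500  # PutRecordBatch accepts at most 500 records per call


class FirehoseLogBuffer:
    def __init__(self, firehose_client, stream_name=None):
        self.client = firehose_client
        # The delivery stream name comes from the function's environment.
        self.stream_name = stream_name or os.environ["AWS_KINESIS_STREAM_NAME"]
        self.events = queue.Queue()  # thread-safe: filled by the HTTP handler

    def add(self, batch):
        """Called with each JSON array received from the Logs API."""
        for event in batch:
            self.events.put(event)

    def flush(self):
        """Drain the queue, send records to Firehose in batches, return count sent."""
        records = []
        while True:
            try:
                event = self.events.get_nowait()
            except queue.Empty:
                break
            # Newline-delimit records so they stay separable downstream.
            records.append({"Data": (json.dumps(event) + "\n").encode("utf-8")})
        for start in range(0, len(records), MAX_BATCH):
            self.client.put_record_batch(
                DeliveryStreamName=self.stream_name,
                Records=records[start:start + MAX_BATCH],
            )
        return len(records)
```

A production version should also check FailedPutCount in each PutRecordBatch response and retry the failed records. It should also keep each batch under the 4 MiB per-request limit.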

For this solution, because we’re only focusing on collecting the run logs of the Lambda function, the data transformer of the Kinesis Data Firehose delivery stream filters the records so that only those of type function ("type":"function") are sent to Amazon OpenSearch Service.
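Here is a minimal Python sketch of such a filter. It assumes each Firehose record carries one Logs API event serialized as JSON. Records whose type is function pass through, and platform events are marked Dropped. Anything that isn't valid JSON is marked ProcessingFailed, so Firehose delivers it to the S3 error output instead of the index.

```python
"""Sketch of the Lambda-logs transformer: keep only "function" records.

Assumption: each Firehose record contains one Logs API event serialized as
JSON, e.g. {"time": ..., "type": "function", "record": ...}.
"""
import base64
import json


def lambda_handler(event, context):
    output = []
    for record in event["records"]:
        try:
            log_event = json.loads(base64.b64decode(record["data"]))
        except ValueError:
            # Bad base64, bad UTF-8, or not JSON: let Firehose route it to S3.
            output.append({"recordId": record["recordId"], "result": "ProcessingFailed", "data": record["data"]})
            continue
        # Platform events (platform.start, platform.report, ...) are dropped.
        keep = isinstance(log_event, dict) and log_event.get("type") == "function"
        output.append({
            "recordId": record["recordId"],
            "result": "Ok" if keep else "Dropped",
            "data": record["data"],
        })
    return {"records": output}
```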

The following is a sample input for the data transformer:

Prerequisites

To implement this solution, you need the following prerequisites:

Build the code

Check out the AWS CDK code by running the following command:

Build the Lambda extension by running the following command:

Make sure to replace the default AWS Region specified in the value of the firehose.endpoint attribute inside lib/computes/ec2/ec2-startup.sh.
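If you prefer to script that edit, the following Python sketch rewrites every Firehose endpoint in the startup script to your Region. It assumes the endpoint appears in the form firehose.<region>.amazonaws.com. Check the file before running it.

```python
"""Sketch: point firehose.endpoint in the EC2 startup script at your Region.

Assumption: the script contains the endpoint as firehose.<region>.amazonaws.com.
"""
import re
import sys

ENDPOINT = re.compile(r"firehose\.[a-z0-9-]+\.amazonaws\.com")


def set_firehose_region(text, region):
    """Return text with every Firehose endpoint rewritten to the given Region."""
    return ENDPOINT.sub(f"firehose.{region}.amazonaws.com", text)


if __name__ == "__main__" and len(sys.argv) == 2:
    # Run from the repository root with the target Region as the only argument.
    path = "lib/computes/ec2/ec2-startup.sh"
    with open(path) as f:
        updated = set_firehose_region(f.read(), sys.argv[1])
    with open(path, "w") as f:
        f.write(updated)
```

For example, saved under a hypothetical name such as set_region.py, running python set_region.py eu-west-1 from the repository root updates the file in place.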

Build the code by running the following command:

Deploy the code

If you’re running AWS CDK for the first time, run the following command to bootstrap the AWS CDK environment (provide your AWS account ID and AWS Region):

You only need to bootstrap the AWS CDK one time (skip this step if you have already done this).

Run the following command to deploy the code:

You get the following output:

AWS CDK takes care of building the required infrastructure, deploying the sample application, and collecting logs from the different sources into Amazon OpenSearch Service.

The following is some of the key information about the stack:

- ec2ipaddress – The public IP address of the EC2 instance, deployed with the sample PHP application

- ecsloadbalancerurl – The URL of the Amazon ECS load balancer, deployed with the httpd application

- eksclusterClusterNameCE21A0DB – The Amazon EKS cluster name, deployed with the NGINX application

- samplelambdafunction – The sample Lambda function using the Lambda extension to send logs to Kinesis Data Firehose

- opensearch-domain-arn – The ARN of the Amazon OpenSearch Service domain
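If you need these values later, the following Python sketch reads them from AWS CloudFormation with boto3's describe_stacks. STACK_NAME is a placeholder. The exact OutputKey strings may differ slightly from the names printed by cdk deploy.

```python
"""Sketch: read the stack outputs listed above with boto3.

Assumption: STACK_NAME is a hypothetical placeholder; use the stack name
printed by cdk deploy.
"""

STACK_NAME = "your-stack-name"  # hypothetical placeholder


def outputs_to_dict(stack):
    """Map OutputKey -> OutputValue for one entry of describe_stacks()["Stacks"]."""
    return {o["OutputKey"]: o["OutputValue"] for o in stack.get("Outputs", [])}


def fetch_outputs(stack_name=STACK_NAME):
    import boto3  # requires AWS credentials and a configured Region

    response = boto3.client("cloudformation").describe_stacks(StackName=stack_name)
    return outputs_to_dict(response["Stacks"][0])
```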

Generate logs

To visualize the logs, you first need to generate some sample logs.

- To generate Lambda logs, invoke the function using the following AWS CLI command (run it a few times):

Make sure to replace samplelambdafunction with the actual Lambda function name. Update the file path based on the underlying operating system.

The function should return "StatusCode": 200, with the following output:

- Run the following command a couple of times to generate Amazon EC2 logs:

Make sure to replace ec2ipaddress with the public IP address of the EC2 instance.

- Run the following command a couple of times to generate Amazon ECS logs:

Make sure to replace ecsloadbalancerurl with the URL of the Application Load Balancer.

Because we deployed the NGINX application behind an internal load balancer, you don’t need to send it any traffic: the load balancer’s health checks against the application are sufficient to generate the Amazon EKS access logs.
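As an alternative to the CLI commands, the following Python sketch generates the same kinds of logs. It assumes you pass in a boto3 Lambda client plus the function name and URLs from the stack outputs. The JSON payload is an arbitrary placeholder. The client and URL opener are injectable, so the functions can be exercised without AWS.

```python
"""Sketch: generate sample Lambda, EC2, and ECS logs from Python.

Assumptions: function name and URLs come from the stack outputs; the invoke
payload is an arbitrary placeholder.
"""
import json
import urllib.request


def invoke_lambda(lambda_client, function_name, times=3):
    """Invoke the sample function several times; return each StatusCode."""
    codes = []
    for _ in range(times):
        response = lambda_client.invoke(
            FunctionName=function_name,
            Payload=json.dumps({"message": "hello"}).encode("utf-8"),
        )
        codes.append(response["StatusCode"])
    return codes


def hit_url(url, times=3, opener=urllib.request.urlopen):
    """Send GET requests so the web server writes access-log lines."""
    statuses = []
    for _ in range(times):
        with opener(url, timeout=10) as resp:
            statuses.append(resp.status)
    return statuses
```

For example, call invoke_lambda(boto3.client("lambda"), "<function name>") and hit_url("http://<ec2ipaddress>/"). Note that urlopen raises HTTPError for 4xx and 5xx responses, so hit_url only returns statuses for successful requests.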

Visualize the logs

To visualise the logs, full the next steps:

- On the Amazon OpenSearch Service console, select the hyperlink offered for the OpenSearch Dashboard 7URL.

- Configure entry to the OpenSearch Dashboard.

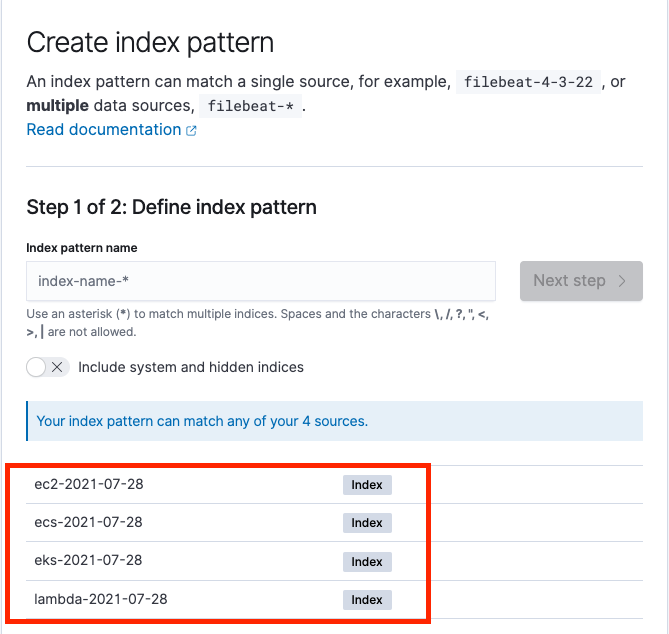

- Underneath OpenSearch Dashboard, on the Uncover menu, begin creating a brand new index sample for every compute log.

We are able to see separate indexes for every compute log partitioned by date, as within the following screenshot.

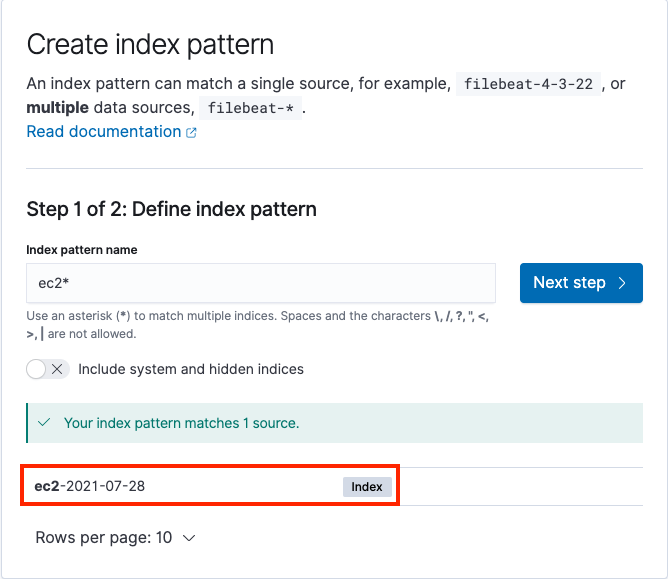

The next screenshot exhibits the method to create index patterns for Amazon EC2 logs.

After you create the index sample, we will begin analyzing the logs utilizing the Uncover menu underneath OpenSearch Dashboard within the navigation pane. This device gives a single searchable and unified interface for all of the information with varied compute platforms. We are able to swap between completely different logs utilizing the Change index sample submenu.

Clean up

Run the following command from the root directory to delete the stack:

Conclusion

In this post, we showed how to unify and centralize logs across different compute platforms using Kinesis Data Firehose and Amazon OpenSearch Service. This approach lets you analyze logs quickly and identify the root cause of failures using a single platform, rather than a different platform for each service.

If you have feedback about this post, submit your comments in the comments section.

Resources

For more information, see the following resources:

About the authors

Hari Ohm Prasath is a Senior Modernization Architect at AWS, helping customers with their modernization journey to become cloud native. Hari loves to code and actively contributes to open source initiatives. You can find him on Medium, GitHub & Twitter @hariohmprasath.

Ballu Singh is a Principal Solutions Architect at AWS. He lives in the San Francisco Bay Area and helps customers architect and optimize applications on AWS. In his spare time, he enjoys reading and spending time with his family.
