
Detection engineers and threat hunters understand that targeting adversary behaviors is an essential part of an effective detection strategy (think Pyramid of Pain). Yet, inherent in focusing analytics on adversary behaviors is that malicious behavior will often enough overlap with benign behavior in your environment, especially as adversaries try to blend in and increasingly live off the land. Imagine you're preparing to deploy a behavioral analytic to complement your detection strategy. Doing so could include custom development, trying out a new Sigma rule, or new behavioral detection content from your security information and event management (SIEM) vendor. Perhaps you're considering automating a previous hunt, but unfortunately you find that the target behavior is common in your environment.

Is this a bad detection opportunity? Not necessarily. What can you do to make the analytic outputs manageable and not overwhelm the alert queue? It's often said that you must tune the analytic for your environment to reduce the false positive rate. But can you do it without sacrificing analytic coverage? In this post, I discuss a process for tuning and related work you can do to make such analytics more viable in your environment. I also briefly discuss correlation, an alternative and complementary means to manage noisy analytic outputs.

Tuning the Analytic

As you're developing and testing the analytic, you're inevitably assessing the following key questions, the answers to which ultimately dictate the need for tuning:

- Does the analytic correctly identify the target behavior and its variations?

- Does the analytic identify other behavior different from what was intended?

- How common is the behavior in your environment?

Here, let's assume the analytic is accurate and fairly robust in order to focus on the last question. Given these assumptions, let's depart from the colloquial use of the term false positive and instead use benign positive. This term refers to benign true positive events in which the analytic correctly identifies the target behavior, but the behavior reflects benign activity.

If the behavior basically never happens, or happens only occasionally, then the number of outputs will typically be manageable. You might accept those small numbers and move on to documenting and deploying the analytic. However, in this post, the target behavior is common in your environment, which means you must tune the analytic to prevent overwhelming the alert queue and to maximize the potential signal of its outputs. At this point, the basic objective of tuning is to reduce the number of results produced by the analytic. There are generally two ways to do this:

- Filter out the noise of benign positives (our focus here).

- Adjust the specificity of the analytic.

While not the focus of this post, let's briefly discuss adjusting the specificity of the analytic. Adjusting specificity means narrowing the view of the analytic, which involves adjusting its telemetry source, logical scope, and/or environmental scope. However, there are coverage tradeoffs associated with doing this. While there is always a balance to be struck due to resource constraints, in general it's better (for detection robustness and durability) to cast a wide net; that is, choose telemetry sources and construct analytics that broadly identify the target behavior across the broadest swath of your environment. Essentially, you're choosing to accept a larger number of possible results in order to avoid false negatives (i.e., completely missing potentially malicious instances of the target behavior). Therefore, it's preferable to focus tuning efforts first on filtering out benign positives rather than on adjusting specificity, if feasible.

Filtering Out Benign Positives

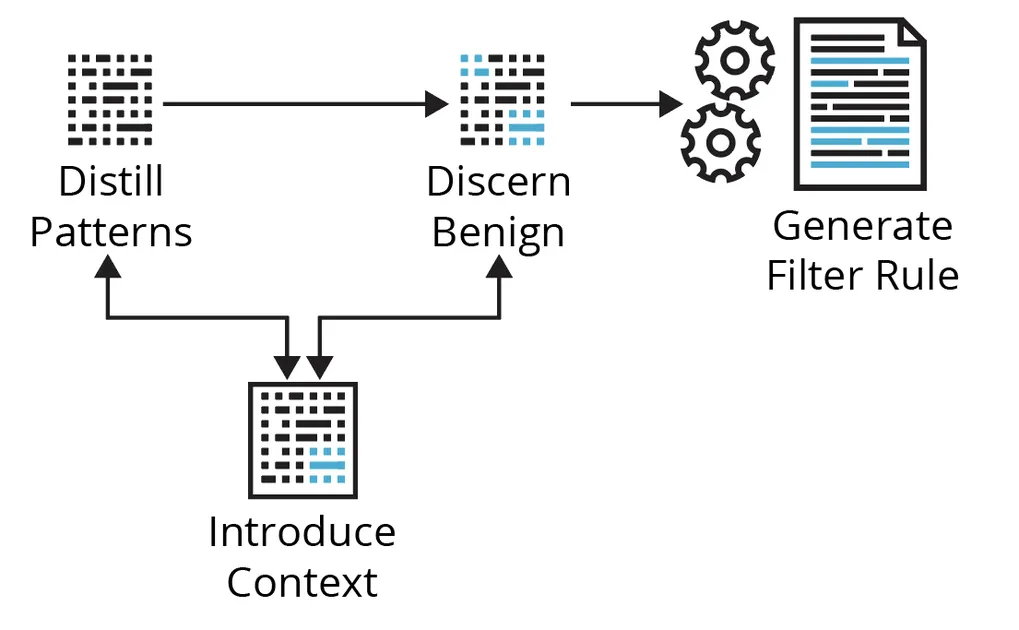

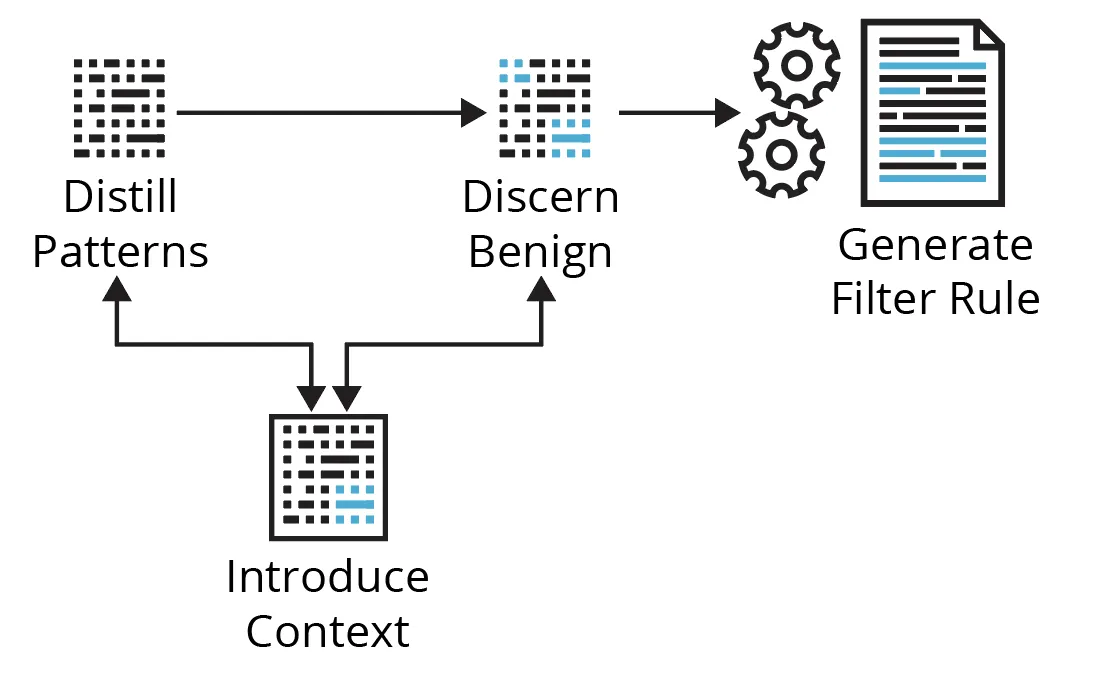

Running the analytic over the last, say, week of production telemetry, you're presented with a table of numerous results. Now what? Figure 1 shows the cyclical process we'll walk through using a couple of examples targeting the Kerberoasting and Non-Standard Port techniques.

Figure 1: A Basic Process for Filtering Out Benign Positives

Distill Patterns

Dealing with numerous analytic results doesn't necessarily mean you have to track down each one individually or have a filter for each result; the sheer volume makes that impractical. Hundreds of results can potentially be distilled to a few filters, depending on the available context. Here, you're looking to explore the data to get a sense of the top entities involved, the variety of relevant contextual values (context cardinality), how often these change (context velocity), and which relevant fields may be summarized. Start with the entities or values associated with the most results; that is, try to address the largest chunks of related events first.
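The stacking described above can be sketched in a few lines of Python. This is a minimal illustration, assuming results arrive as a list of dicts with a `day` field; the field values are hypothetical, and the velocity measure (the share of the latest day's values never seen on earlier days) is just one simple proxy chosen for this example, not a standard metric.

```python
from collections import Counter

def top_values(events, field, n=3):
    """Stack (count) the values of one field, most common first."""
    return Counter(e[field] for e in events).most_common(n)

def cardinality(events, field):
    """Context cardinality: the number of distinct values seen for a field."""
    return len({e[field] for e in events})

def velocity(events, field):
    """Rough context velocity: the fraction of the latest day's distinct
    values that never appeared on an earlier day (0.0 means stable)."""
    days = sorted({e["day"] for e in events})
    if len(days) < 2:
        return 0.0
    latest = days[-1]
    earlier = {e[field] for e in events if e["day"] != latest}
    recent = {e[field] for e in events if e["day"] == latest}
    return len(recent - earlier) / len(recent)

# Hypothetical Kerberos service ticket results (ISO date strings sort correctly).
events = [
    {"day": "2024-01-01", "AccountName": "alice", "ServiceName": "legacy-svc01"},
    {"day": "2024-01-01", "AccountName": "bob", "ServiceName": "legacy-svc01"},
    {"day": "2024-01-01", "AccountName": "erin", "ServiceName": "legacy-svc02"},
    {"day": "2024-01-02", "AccountName": "carol", "ServiceName": "legacy-svc01"},
    {"day": "2024-01-02", "AccountName": "dave", "ServiceName": "legacy-svc02"},
]

for field in ("AccountName", "ServiceName"):
    print(field, cardinality(events, field), velocity(events, field), top_values(events, field))
```

In this toy data, ServiceName has low cardinality and zero velocity while AccountName turns over completely from one day to the next, so ServiceName is the better candidate to build a filter around.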

Examples

- Kerberoasting: Say this Sigma rule returns results with many different AccountNames and ClientAddresses (high context cardinality), but most results are associated with relatively few ServiceNames (of certain legacy devices; low context cardinality) and TicketOptions. You expand the search to the last 30 days and find the ServiceNames and TicketOptions are much the same (low context velocity), but other relevant fields have more and/or different values (high context velocity). You'd focus on these ServiceNames and/or TicketOptions, confirm it's expected/known activity, then address a big chunk of the results with a single filter against those ServiceNames.

- Non-Standard Port: In this example, you find there is high cardinality and high velocity in almost every event/network flow field, except for the service/application label, which indicates that only SSL/TLS is being used on non-standard ports. Again, you expand the search and notice a lot of different source IPs that could be summarized by a single Classless Inter-Domain Routing (CIDR) block, thus abstracting the source IP into a piece of low-cardinality, low-velocity context. You'd focus on this apparent subnet, trying to understand what it is and any associated controls around it, confirm it's expected and/or known activity, then filter accordingly.
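For the Non-Standard Port example, Python's standard `ipaddress` module can find the smallest single CIDR block covering a set of observed source IPs. This is a sketch with made-up addresses; it only suggests a candidate subnet, which you would still confirm against IPAM or the network owners.

```python
import ipaddress

def covering_cidr(ips):
    """Return the smallest single CIDR block containing every given IP."""
    addrs = [ipaddress.ip_address(ip) for ip in ips]
    lo, hi = min(addrs), max(addrs)
    # Start with a host-sized network around the lowest address and widen
    # the prefix one bit at a time until it also contains the highest one.
    net = ipaddress.ip_network(f"{lo}/{lo.max_prefixlen}")
    while hi not in net:
        net = net.supernet()
    return net

observed = ["10.2.16.5", "10.2.17.200", "10.2.19.8", "10.2.18.33"]  # made-up source IPs
block = covering_cidr(observed)
print(block, block.num_addresses)  # 10.2.16.0/22 1024
```

Because the result is the smallest block spanning the lowest and highest address, IPs that straddle a boundary (for example, 10.2.15.255 and 10.2.16.0) produce a much larger /19, so it is worth comparing `num_addresses` with what you actually observed before trusting the summary.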

Fortunately, there are usually patterns in the data that you can focus on. You generally want to target context with low cardinality and low velocity because it affects the long-term effectiveness of your filters. If you can help it, you don't want to be constantly updating your filter rules because they rely on context that changes too often. However, sometimes there are many high-cardinality, high-velocity fields, and nothing quite stands out from basic stacking, counting, or summarizing. What if you can't narrow the results as is? There are too many results to investigate each one individually. Is this just a bad detection opportunity? Not yet.

Discern Benign

The main concern in this activity is quickly gathering sufficient context to disposition analytic outputs with an acceptable level of confidence. Context is any data or information that meaningfully contributes to understanding and/or interpreting the circumstances/conditions in which an event/alert occurs, to discern behavior as benign, malicious, or suspicious/unknown. Table 1 below describes the most common types of context that you will have or seek to gather.

Table 1: Common Types of Context

| Type | Description | Typical Sources | Example(s) |
|---|---|---|---|
| Event | basic properties/parameters of the event that help define it | raw telemetry, log fields | process creation fields, network flow fields, process network connection fields, Kerberos service ticket request fields |
| Environmental | data/information about the monitored environment or assets in the monitored environment | CMDB/ASM/IPAM, ticket system, documentation, the brains of other analysts, admins, engineers, system/network owners | business processes, network architecture, routing, proxies, NAT, policies, approved change requests, services used/exposed, known vulnerabilities, asset ownership, hardware, software, criticality, location, enclave, etc. |
| Entity | data/information about the entities (e.g., identity, source/destination host, process, file) involved in the event | IdP/IAM, EDR, CMDB/ASM/IPAM, third-party APIs | enriching a public IP address with geolocation, ASN info, passive DNS, open ports/protocols/services, certificate information; enriching an identity with description, type, role, privileges, department, location, etc. |
| Historical | how often the event happens; how often the event happens with certain characteristics or entities; and/or how often there is a relationship between select entities involved in the event | baselines | profiling the last 90 days of DNS requests per top-level domain (TLD); profiling the last 90 days of HTTP on non-standard ports; profiling process lineage |
| Threat | attack (sub-)technique(s); example procedure(s); likely attack stage; specific and/or type of threat actor/malware/tool known to exhibit the behavior; reputation, scoring, etc. | threat intelligence platform (TIP), MITRE ATT&CK, threat intelligence APIs, documentation | reputation/detection scores, Sysmon-modular annotations; ADS example |
| Analytic | how and why this event was raised; any relevant values produced/derived by the analytic itself; the analytic logic, known/common benign example(s); recommended follow-on actions; scoring, etc. | analytic processing, documentation, runbooks | `"event": { "processing": { "time_since_flow_start": "0:04:08.641718", "duration": 0.97 }, "reason": "SEEN_BUT_RARELY_OCCURRING", "consistency_score": 95 }` |
| Correlation | data/information from related events/alerts (discussed below in Aggregate the Signal) | SIEM/SOAR, custom correlation layer | risk-based alerting, correlation rules |
| Open-source | data/information generally available via Internet search engines | Internet | vendor documentation stating what service names they use; what other people have seen regarding TCP/2323 |

Upon initial review, you have the event context, but you typically end up looking for environmental, entity, and/or historical context to ideally answer (1) which identities and software caused this activity, and (2) is it legitimate? That is, you're looking for information about the provenance, expectations, controls, assets, and history regarding the observed activity. Yet that context may not be available, or may be too slow to acquire. What if you can't tell from the event context? How else might you tell whether these events are benign? Is this just a bad detection opportunity? Not yet. It depends on your options for gathering additional context and the speed of those options.

Introduce Context

If there aren't obvious patterns and/or the available context is insufficient, you can work to introduce patterns/context via automated enrichments and baselines. Enrichments may come from internal or external data sources and are usually automated lookups based on some entity in the event (e.g., identity, source/destination host, process, file, etc.). Even if enrichment opportunities are scarce, you can always introduce historical context by building baselines using the data you're already collecting.

With the multitude of monitoring and detection recommendations using terms such as new, rare, unexpected, unusual, uncommon, abnormal, anomalous, never been seen before, unexpected patterns and metadata, does not commonly occur, etc., you'll need to be building and maintaining baselines anyway. No one else can do these for you; baselines will always be specific to your environment, which is both a challenge and an advantage for defenders.

Kerberoasting

Unless you have programmatically accessible and up-to-date internal data sources to enrich the AccountName (identity), ServiceName/ServiceID (identity), and/or ClientAddress (source host; typically RFC1918), there's not much enrichment to do except, perhaps, to translate TicketOptions, TicketEncryptionType, and FailureCode to friendly names/values. However, you can baseline these events. For example, you might track the following over a rolling 90-day period:

- % days seen per ServiceName per AccountName → identify new/rare/common user-service relationships

- mean and mode of distinct ServiceNames per AccountName per time period → identify an unusual number of services for which a user makes service ticket requests
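A minimal Python sketch of these two baselines, assuming the events have already been limited to the rolling window and carry a `day` field. The per-day interval and the small window in the usage lines are assumptions for illustration, and the mean and mode only count days on which an account made at least one request.

```python
from collections import defaultdict
from statistics import mean, mode

def pct_days_seen(events, window_days):
    """% of days in the window on which each (AccountName, ServiceName) pair appeared."""
    days_by_pair = defaultdict(set)
    for e in events:
        days_by_pair[(e["AccountName"], e["ServiceName"])].add(e["day"])
    return {pair: 100 * len(days) / window_days for pair, days in days_by_pair.items()}

def distinct_services_stats(events):
    """Mean and mode of distinct ServiceNames per AccountName per day,
    counting only days on which the account made at least one request."""
    services = defaultdict(set)  # (AccountName, day) -> distinct ServiceNames
    for e in events:
        services[(e["AccountName"], e["day"])].add(e["ServiceName"])
    counts = defaultdict(list)  # AccountName -> daily distinct-service counts
    for (account, _day), names in services.items():
        counts[account].append(len(names))
    return {acct: (mean(c), mode(c)) for acct, c in counts.items()}

# Hypothetical events; in practice this would be 90 days of service ticket requests.
events = [
    {"day": "2024-01-01", "AccountName": "alice", "ServiceName": "legacy-svc01"},
    {"day": "2024-01-01", "AccountName": "alice", "ServiceName": "legacy-svc02"},
    {"day": "2024-01-02", "AccountName": "alice", "ServiceName": "legacy-svc01"},
    {"day": "2024-01-03", "AccountName": "alice", "ServiceName": "legacy-svc01"},
    {"day": "2024-01-02", "AccountName": "bob", "ServiceName": "legacy-svc01"},
]
print(pct_days_seen(events, window_days=4))
print(distinct_services_stats(events))
```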

You could expand the search (only to develop a baseline metric) to all relevant TicketEncryptionTypes and additionally track:

- % days seen per TicketEncryptionType per ServiceName → identify new/rare/common service-encryption type relationships

- % days seen per TicketOptions per AccountName → identify new/rare/common user-ticket options relationships

- % days seen per TicketOptions per ServiceName → identify new/rare/common service-ticket options relationships
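The friendly-name translation mentioned earlier also makes these encryption-type and ticket-options baselines easier to read. A minimal sketch follows, assuming the hex values from the TicketEncryptionType and TicketOptions fields of Windows event 4769. Both mappings are deliberately partial, FailureCode is not covered, and the values should be checked against Microsoft's event 4769 documentation and RFC 4120 before relying on them.

```python
# Subset of Kerberos encryption type codes seen in the TicketEncryptionType
# field of Windows event 4769 (not an exhaustive list).
ENC_TYPES = {
    0x1: "DES-CBC-CRC",
    0x3: "DES-CBC-MD5",
    0x11: "AES128-CTS-HMAC-SHA1-96",
    0x12: "AES256-CTS-HMAC-SHA1-96",
    0x17: "RC4-HMAC",
    0x18: "RC4-HMAC-EXP",
}

# Subset of KDC option flags. Kerberos numbers these bits from the most
# significant end, so bit n corresponds to the mask 1 << (31 - n).
TICKET_OPTION_BITS = {
    1: "forwardable",
    2: "forwarded",
    3: "proxiable",
    4: "proxy",
    8: "renewable",
    15: "canonicalize",
    27: "renewable-ok",
    28: "enc-tkt-in-skey",
    30: "renew",
    31: "validate",
}

def _as_int(value):
    """Accept either an int or a hex string such as '0x17'."""
    return int(value, 16) if isinstance(value, str) else value

def friendly_enc_type(value):
    code = _as_int(value)
    return ENC_TYPES.get(code, f"unknown (0x{code:x})")

def decode_ticket_options(value):
    mask = _as_int(value)
    return [name for bit, name in sorted(TICKET_OPTION_BITS.items())
            if mask & (1 << (31 - bit))]

print(friendly_enc_type("0x17"))            # RC4-HMAC
print(decode_ticket_options("0x40810000"))  # ['forwardable', 'renewable', 'canonicalize']
```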

Non-Standard Port

Enriching the destination IP addresses (all public) is a good place to start, because there are many free and commercial data sources (already codified and programmatically accessible via APIs) regarding Internet-accessible assets. You enrich analytic results with geolocation, ASN, passive DNS, hosted ports, protocols, and services, certificate information, major-cloud provider information, etc. You now find that all of the connections are going to a few different netblocks owned by a single ASN, and they all correspond to a single cloud provider's public IP ranges for a compute service in two different regions. Moreover, passive DNS indicates a number of development-related subdomains, all on a familiar parent domain. Certificate information is consistent over time (which suggests something about testing) and has familiar organizational identifiers.
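One way to add the cloud-provider context is to match destination IPs against a provider's published ranges. The sketch below assumes a document shaped like AWS's published `ip-ranges.json` (a `prefixes` list with `ip_prefix`, `region`, and `service` keys). The inline prefixes are RFC 5737 documentation ranges, not real AWS space; the `dip` field name is hypothetical; and the output fields simply mirror the `major_csp` and `cloud_*` fields in the example filter rules. Geolocation, ASN, passive DNS, and certificate lookups would come from other sources and are not shown.

```python
import ipaddress
import json

# Illustrative excerpt shaped like AWS's published ip-ranges.json.
# These prefixes are RFC 5737 documentation ranges, NOT real AWS address space.
SAMPLE_RANGES = json.loads("""
{"prefixes": [
  {"ip_prefix": "198.51.100.0/24", "region": "us-west-2", "service": "EC2"},
  {"ip_prefix": "203.0.113.0/25", "region": "us-west-1", "service": "EC2"},
  {"ip_prefix": "192.0.2.0/24", "region": "us-east-1", "service": "S3"}
]}
""")

def build_index(ranges_doc, provider="aws"):
    """Parse each published prefix once so lookups do not re-parse strings."""
    return [(ipaddress.ip_network(p["ip_prefix"]), provider, p["service"], p["region"])
            for p in ranges_doc["prefixes"]]

def enrich_dest_ip(event, index):
    """Return a copy of the event with cloud fields added for its destination IP."""
    dip = ipaddress.ip_address(event["dip"])
    enriched = dict(event, major_csp=False)
    matches = [entry for entry in index if dip in entry[0]]
    if matches:
        # Prefer the most specific (longest) matching prefix.
        _net, provider, service, region = max(matches, key=lambda m: m[0].prefixlen)
        enriched.update(major_csp=True, cloud_provider=provider,
                        cloud_service=service, cloud_region=region)
    return enriched

index = build_index(SAMPLE_RANGES)
print(enrich_dest_ip({"sip": "10.2.16.5", "dip": "198.51.100.7"}, index))
```

In the real file, a single IP often falls inside both a broad `AMAZON` prefix and a narrower service prefix such as `EC2`; preferring the longest match is a simplification, and a linear scan is only reasonable for small range lists.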

Newness is easily derived: the relationship is either historically there or it isn't. However, you'll need to determine and set a threshold in order to say what is considered rare and what is considered common. Having some codified and programmatically accessible internal data sources available would not only add potentially valuable context but also expand the options for baseline relationships and metrics. The art and science of baselining involves determining thresholds and which baseline relationships/metrics will provide you with meaningful signal.
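Given percent-days-seen values like those in the Kerberoasting baselines, the new/rare/common decision reduces to a membership check plus a threshold. In this sketch the 10% cutoff is an arbitrary assumption, standing in for whatever threshold you settle on for your environment.

```python
def classify_relationship(key, pct_seen, rare_below=10.0):
    """Label a baseline relationship as 'new', 'rare', or 'common'.

    pct_seen maps relationship keys (e.g., (AccountName, ServiceName)) to the
    % of days seen in the baseline window. rare_below is an assumed threshold
    that you would tune for your environment.
    """
    if key not in pct_seen:
        return "new"  # never observed during the baseline window
    return "rare" if pct_seen[key] < rare_below else "common"

# Hypothetical baseline values.
baseline = {("alice", "legacy-svc01"): 75.0, ("bob", "legacy-svc01"): 5.0}
for key in [("alice", "legacy-svc01"), ("bob", "legacy-svc01"), ("mallory", "legacy-svc01")]:
    print(key, classify_relationship(key, baseline))
```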

Overall, with some additional engineering and analysis work, you're in a much better position to distill patterns, discern which events are (probably) benign, and make some filtering decisions. Moreover, whether you build automated enrichments and/or baseline checks into the analytic pipeline or build runbooks to gather this context at the point of triage, this work feeds directly into supporting detection documentation and enhances the overall speed and quality of triage.

Generate Filter Rule

You want to apply filters smartly without having to manage too many rules, but you want to do so without creating rules that are too broad (which risks filtering out malicious events, too). With filter/allow list rules, rather than being overly broad, it's better to lean toward a more precise description of the benign activity, even if that means creating and managing a few more rules.

Kerberoasting

The baseline information helps you understand that those few ServiceNames do in fact have a normal and consistent history of occurring with the other relevant entities/properties of the events shown in the results. You determine these are OK to filter out, and you do so with a single, simple filter against those ServiceNames.

Non-Standard Port

Enrichments have provided valuable context to help discern benign activity and, importantly, have also enabled the abstraction of the destination IP, a high-cardinality, high-velocity field, from many different, changing values to a few broader, more static values described by ASN, cloud, and certificate information. Given this context, you determine these connections are probably benign and move to filter them out. See Table 2 below for example filter rules, where app=443 indicates SSL/TLS and major_csp=true indicates the destination IP of the event is in one of the published public IP ranges of a major cloud service provider:

| Type | Filter Rule | Reason |
|---|---|---|
| Too broad | sip=10.2.16.0/22; app=443; asn=16509; major_csp=true | You don't want to allow all non-standard port encrypted connections from the subnet to all cloud provider public IP ranges in the entire ASN. |
| Still too broad | sip=10.2.16.0/22; app=443; asn=16509; major_csp=true; cloud_provider=aws; cloud_service=EC2; cloud_region=us-west-1,us-west-2 | You don't know the nature of the internal subnet. You don't want to allow all non-standard port encrypted traffic to be able to hit just any EC2 IPs across two entire regions. Cloud IP usage changes as different customers spin up/down resources. |
| Best option | sip=10.2.16.0/22; app=443; asn=16509; major_csp=true; cloud_provider=aws; cloud_service=EC2; cloud_region=us-west-1,us-west-2; cert_subject_dn='L=Earth\|O=Your Org\|OU=DevTest\|CN=dev.your.org' | It is specific to the observed testing activity for your org, but broad enough that it shouldn't change much. You'll still know about any other non-standard port traffic that doesn't match all of these characteristics. |

An important corollary here is that the filtering mechanism/allow list must be applied in the right place and be flexible enough to handle the context that sufficiently describes the benign activity. A simple filter on ServiceNames relies only on data in the raw events and can be implemented as an extra condition in the analytic itself. On the other hand, the Non-Standard Port filter rule relies on data from the raw events as well as enrichments, in which case those enrichments must have been performed and be available in the data before the filtering mechanism is applied. It's not always sufficient to filter out benign positives using only fields available in the raw events. There are various ways you could account for these filtering scenarios. The capabilities of your detection and response pipeline, and the way it's engineered, will impact your ability to tune effectively at scale.
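A minimal Python sketch of that ordering concern, assuming events are dicts and allow-list rules are field-to-value mappings (a made-up representation, not any particular SIEM's rule syntax). Because a rule never matches a missing field, the enrichment-dependent rule only takes effect once the enrichment step has added its fields; until then, the event is not filtered and still reaches the queue.

```python
import ipaddress

CIDR_FIELDS = {"sip"}  # fields whose rule value is a CIDR block rather than a literal

def field_matches(event, field, expected):
    value = event.get(field)
    if value is None:
        # A missing field (e.g., an enrichment that has not run yet) never
        # matches, so the event is NOT filtered and still reaches the queue.
        return False
    if field in CIDR_FIELDS:
        return ipaddress.ip_address(value) in ipaddress.ip_network(expected)
    if isinstance(expected, (list, tuple, set, frozenset)):
        return value in expected
    return value == expected

def is_allowlisted(event, rules):
    """True if every condition of at least one rule matches the event."""
    return any(all(field_matches(event, f, v) for f, v in rule.items()) for rule in rules)

RULES = [
    # Raw-field rule; could equally be an extra condition in the analytic itself.
    {"ServiceName": {"legacy-svc01", "legacy-svc02"}},  # hypothetical names
    # Enrichment-dependent rule modeled on the "Best option" row of Table 2.
    {"sip": "10.2.16.0/22", "app": 443, "asn": 16509, "major_csp": True,
     "cloud_provider": "aws", "cloud_service": "EC2",
     "cloud_region": ["us-west-1", "us-west-2"],
     "cert_subject_dn": "L=Earth|O=Your Org|OU=DevTest|CN=dev.your.org"},
]

raw = {"sip": "10.2.17.9", "app": 443, "asn": 16509,
       "cert_subject_dn": "L=Earth|O=Your Org|OU=DevTest|CN=dev.your.org"}
enriched = dict(raw, major_csp=True, cloud_provider="aws",
                cloud_service="EC2", cloud_region="us-west-2")
print(is_allowlisted(raw, RULES))       # False: enrichment fields are missing
print(is_allowlisted(enriched, RULES))  # True
```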

Aggregate the Signal

So far, I've talked about a process for tuning a single analytic. Now, let's briefly discuss a correlation layer, which operates across all analytic outputs. Sometimes an identified behavior just isn't a strong enough signal in isolation; it may only become a strong signal in relation to other behaviors identified by other analytics. Correlating the outputs from multiple analytics can tip the signal enough to meaningfully populate the alert queue as well as provide valuable additional context.

Correlation is often entity-based, such as aggregating analytic outputs based on a shared entity like an identity, host, or process. These correlated alerts are typically prioritized via scoring, where you assign a risk score to each analytic output. In turn, correlated alerts will have an aggregate score that is usually the sum, or some normalized value, of the scores of the associated analytic outputs. You would sort correlated alerts by the aggregate score, where higher scores indicate entities with the most, or most severe, analytic findings.
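A minimal sketch of entity-based correlation using the summed score described above. It assumes each analytic output already carries a normalized `entity` field and a `risk_score`; the analytic names, hosts, scores, and alert threshold are all hypothetical.

```python
from collections import defaultdict

def correlate(outputs, entity_field="entity", alert_threshold=0):
    """Group analytic outputs by a shared entity, sum their risk scores, and
    return correlated alerts at or above the threshold, highest score first."""
    groups = defaultdict(list)
    for out in outputs:
        groups[out[entity_field]].append(out)
    correlated = [
        {"entity": entity,
         "aggregate_score": sum(o["risk_score"] for o in outs),
         "analytics": sorted({o["analytic"] for o in outs})}
        for entity, outs in groups.items()
    ]
    ranked = sorted(correlated, key=lambda c: c["aggregate_score"], reverse=True)
    return [c for c in ranked if c["aggregate_score"] >= alert_threshold]

# Hypothetical outputs already normalized to a shared entity (here, a host).
outputs = [
    {"entity": "ws-01", "analytic": "kerberoasting", "risk_score": 10},
    {"entity": "ws-01", "analytic": "non_standard_port", "risk_score": 15},
    {"entity": "ws-02", "analytic": "non_standard_port", "risk_score": 15},
]
for alert in correlate(outputs, alert_threshold=20):
    print(alert)  # only ws-01 (10 + 15 = 25) clears the threshold
```

The threshold is what keeps a noisy analytic's individual outputs out of the main queue: ws-02's single output is retained as context but does not surface on its own.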

The outputs from your analytic don't necessarily have to go directly to the main alert queue. Not every analytic output needs to be triaged. Perhaps the analytic's value lies primarily in providing additional signal/context in relation to other analytic outputs. Because correlated alerts bubble up to analysts only when there is a strong enough signal across multiple associated analytic outputs, correlation serves as an alternative and complementary means to make the number of outputs from a noisy analytic less of a nuisance and overall outputs more manageable.

Improving Availability and Speed of Relevant Context

It all comes down to context and the need to gather sufficient context quickly. Speed matters. Prior to operational deployment, the more quickly and confidently you can disposition analytic outputs, the more outputs you can deal with, the faster and better the tuning, the higher the potential signal of future analytic outputs, and the sooner you'll have a viable analytic in place working for you. After deployment, the more quickly and confidently you can disposition analytic outputs, the faster and better the triage, and the sooner appropriate responses can be pursued. In other words, the speed of gathering sufficient context directly impacts your mean time to detect and mean time to respond. Conversely, barriers to quickly gathering sufficient context are barriers to tuning/triage; barriers to viable, effective, and scalable deployment of proactive/behavioral security analytics; and barriers to early warning and risk reduction. Consequently, anything you can do to improve the availability and/or speed of gathering relevant context is a worthwhile effort for your detection program. These things include:

- building and maintaining relevant baselines

- building and maintaining a correlation layer

- investing in automation by getting more contextual information (especially internal entity and environmental context) that is codified, made programmatically accessible, and integrated

- building relationships and tightening up security reporting/feedback loops with relevant stakeholders, a holistic people, process, and technology effort; consider something akin to these automated security bot use cases

- building relationships with security engineering and admins so they're more willing to assist in tweaking the signal

- supporting data engineering, infrastructure, and processing for automated enrichments, baseline checks, and maintenance

- tweaking configurations for detection, e.g., deception engineering, this example with ticket events, etc.

- tweaking business processes for detection, e.g., hooks into certain approved change requests, admins always doing a little extra special thing to let you know it's really them, etc.

Summary

Analytics targeting adversary behaviors will often enough require tuning for your environment because they identify both benign and malicious instances of that behavior. Just because a behavior may be common in your environment doesn't necessarily mean it's a bad detection opportunity or not worth the analytic effort. One of the primary ways of dealing with such analytic outputs, without sacrificing coverage, is by using context (often more than is contained in the raw events) and flexible filtering to tune out benign positives. I advocate for detection engineers to perform most of this work, essentially conducting a data study and some pre-operational triage of their own analytic results. This work generally involves a cycle of evaluating analytic results to distill patterns, discerning benign behavior, introducing context as necessary, and finally filtering out benign events. We used a couple of basic examples to show how that cycle might play out.

If the immediate context is insufficient to distill patterns and/or discern benign behavior, detection engineers can almost always supplement it with automated enrichments and/or baselines. Automated enrichments are more common for external, Internet-accessible assets and may be harder to come by for internal entities, but baselines can typically be built using the data you're already collecting. Plus, historical/entity-based context is some of the most useful context to have.

In seeking to produce viable, quality analytics, detection engineers should exhaust, or at least try, these options before dismissing an analytic effort or sacrificing its coverage. It's extra work, but doing this work not only improves pre-operational tuning but also pays dividends after operational deployment, as analysts triage alerts/leads using the extra context and well-documented research. Analysts are then in a better position not only to identify and escalate findings but also to provide tuning feedback. Besides, tuning is a continuous process and a two-pronged effort between detection engineers and analysts, if only because threats and environments are not static.

The other primary way of dealing with such analytic outputs, again without sacrificing coverage, is by incorporating a correlation layer into your detection pipeline. Correlation is also more work because it adds another layer of processing, and you have to score analytic outputs. Scoring can be tricky because there are many things to consider, such as how risky each analytic output is in the grand scheme of things, if/how you should weight and/or boost scores to account for various circumstances (e.g., asset criticality, time), how you should normalize scores, whether you should calculate scores across multiple entities and which one takes precedence, etc. Nevertheless, the benefits of correlation make it a worthwhile effort and a great option to help prioritize across all analytic outputs. It also effectively diminishes the problem of noisier analytics, since not every analytic output is meant to be triaged.
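To make a couple of those scoring considerations concrete, here is one possible way to weight an entity's summed score by asset criticality and time. This is a sketch, not a recommended scheme: only two of the considerations above are modeled, the criticality weights and 24-hour half-life are arbitrary assumptions, and capping is a deliberately crude form of normalization.

```python
# Assumed multipliers; pick values that reflect your own asset inventory.
CRITICALITY_WEIGHT = {"low": 0.5, "medium": 1.0, "high": 2.0}

def weighted_entity_score(outputs, criticality, half_life_hours=24.0, cap=100.0):
    """Score one entity's analytic outputs with time decay and a criticality boost.

    Each output's risk_score is halved for every `half_life_hours` of age,
    the decayed sum is multiplied by the asset's criticality weight, and the
    result is capped so scores stay on a bounded scale.
    """
    total = 0.0
    for o in outputs:
        total += o["risk_score"] * 0.5 ** (o["age_hours"] / half_life_hours)
    return min(total * CRITICALITY_WEIGHT[criticality], cap)

outputs = [{"risk_score": 10, "age_hours": 0}, {"risk_score": 20, "age_hours": 24}]
print(weighted_entity_score(outputs, "medium"))  # 20.0 (10 + 20 * 0.5)
print(weighted_entity_score(outputs, "high"))    # 40.0
```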

If you need help doing any of these things, or would like to discuss your detection engineering journey, please contact us.
