[ad_1]

You may question Software Load Balancer (ALB) entry logs for numerous functions, reminiscent of analyzing site visitors distribution and patterns. You can too simply use Amazon Athena to create a desk and question in opposition to the ALB entry logs on Amazon Easy Storage Service (Amazon S3). (For extra info, see How do I analyze my Software Load Balancer entry logs utilizing Amazon Athena? and Querying Software Load Balancer Logs.) All queries are run in opposition to the entire desk as a result of it doesn’t outline any partitions. You probably have a number of years of ALB logs, you could need to use a partitioned desk as a substitute for higher question efficiency and value management. In reality, partitioning knowledge is likely one of the High 10 efficiency tuning suggestions for Athena.

Nonetheless, as a result of ALB log recordsdata aren’t saved in a Hive-style prefix (reminiscent of /12 months=2021/), the method of making hundreds of partitions utilizing ALTER TABLE ADD PARTITION in Athena is cumbersome. This publish reveals a approach to create and schedule an AWS Glue crawler with a Grok customized classifier that infers the schema of all ALB log recordsdata beneath the required Amazon S3 prefix and populates the partition metadata (12 months, month, and day) robotically to the AWS Glue Information Catalog.

Stipulations

To comply with together with this publish, full the next conditions:

- Allow entry logging of the ALBs, and have the recordsdata already ingested within the specified S3 bucket.

- Arrange the Athena question consequence location. For extra info, see Working with Question Outcomes, Output Recordsdata, and Question Historical past.

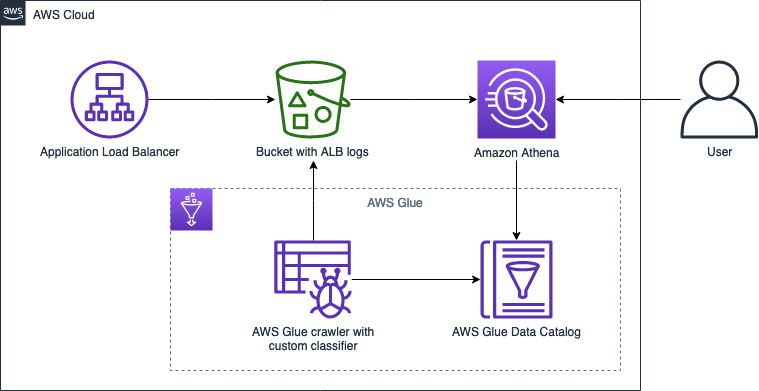

Resolution overview

The next diagram illustrates the answer structure.

To implement this resolution, we full the next steps:

- Put together the Grok sample for our ALB logs, and cross-check with a Grok debugger.

- Create an AWS Glue crawler with a Grok customized classifier.

- Run the crawler to organize a desk with partitions within the Information Catalog.

- Analyze the partitioned knowledge utilizing Athena and evaluate question velocity vs. a non-partitioned desk.

Put together the Grok sample for our ALB logs

As a preliminary step, find the entry log recordsdata on the Amazon S3 console, and manually examine the recordsdata to watch the format and syntax. To permit an AWS Glue crawler to acknowledge the sample, we have to use a Grok sample to match in opposition to an expression and map particular elements into the corresponding fields. Roughly 100 pattern Grok patterns can be found within the Logstash Plugins GitHub, and we will write our personal customized sample if it’s not listed.

The next the essential syntax format for a Grok sample %{PATTERN:FieldName}

The next is an instance of an ALB entry log:

To map the primary area, the Grok sample would possibly seem like the next code:

The sample contains the next elements:

DATAmaps to.*?kindis the column titlesis the whitespace character

To map the second area, the Grok sample would possibly seem like the next:

This sample has the next components:

TIMESTAMP_ISO8601maps to%{YEAR}-%{MONTHNUM}-%{MONTHDAY}[T ]%{HOUR}:?%{MINUTE}(?::?%{SECOND})?%{ISO8601_TIMEZONE}?timeis the column titlesis the whitespace character

When writing Grok patterns, we also needs to contemplate nook instances. For instance, the next code is a traditional case:

However when contemplating the opportunity of null worth, we must always substitute the sample with the next:

When our Grok sample is prepared, we will take a look at the Grok sample with pattern enter utilizing a third-party Grok debugger. The next sample is an efficient begin, however all the time keep in mind to check it with the precise ALB logs.

Take into account that once you copy the Grok sample out of your browser, in some instances there are additional areas ultimately of the strains. Ensure to take away these additional areas.

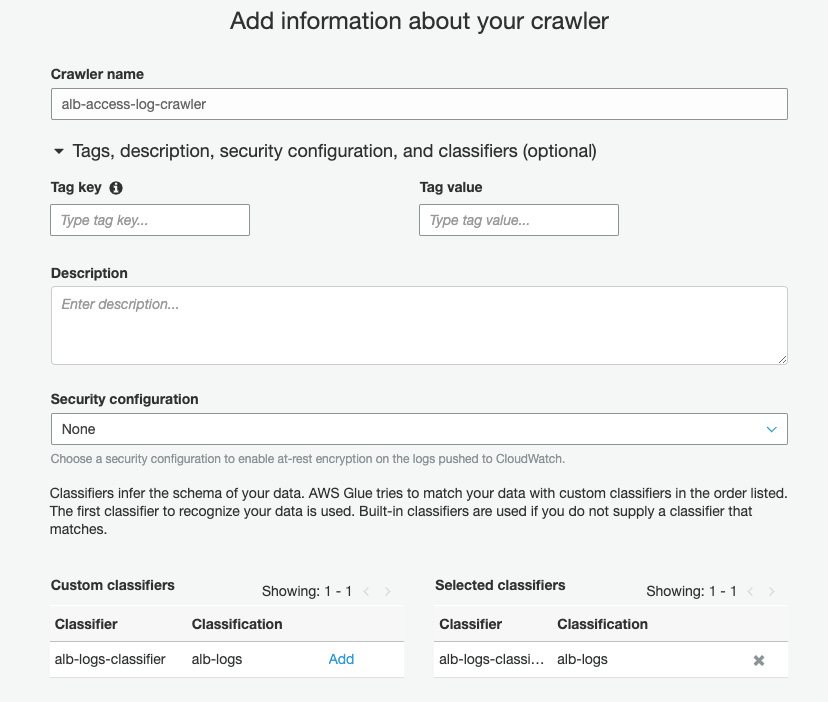

Create an AWS Glue crawler with a Grok customized classifier

Earlier than you create your crawler, you first create a customized classifier. Full the next steps:

- On the AWS Glue console, beneath Crawler, select Classifiers.

- Select Add classifier.

- For Classifier title, enter

alb-logs-classifier. - For Classifier kind¸ choose Grok.

- For Classification, enter

alb-logs. - For Grok sample, enter the sample from the earlier part.

- Select Create.

Now you’ll be able to create your crawler.

- Select Crawlers within the navigation pane.

- Select Add crawler.

- For Crawler title, enter

alb-access-log-crawler. - For Chosen classifiers, enter

alb-logs-classifier.

- Select Subsequent.

- For Crawler supply kind, choose Information shops.

- For Repeat crawls of S3 knowledge shops, choose Crawl new folders solely.

- Select Subsequent.

- For Select a knowledge retailer, select S3.

- For Crawl knowledge in, choose Specified path in my account.

- For Embody path, enter the trail to your ALB logs (for instance,

s3://alb-logs-directory/AWSLogs/<ACCOUNT-ID>/elasticloadbalancing/<REGION>/). - Select Subsequent.

- When prompted so as to add one other knowledge retailer, choose No and select Subsequent.

- Choose Create an IAM function, and provides it a reputation reminiscent of

AWSGlueServiceRole-alb-logs-crawler. - For Frequency, select Every day.

- Point out your begin hour and minute.

- Select Subsequent.

- For Database, enter

elb-access-log-db. - For Prefix added to tables, enter

alb_logs_.

- Develop Configuration choices.

- Choose Replace all new and present partitions with metadata from the desk.

- Hold the opposite choices at their default.

- Select Subsequent.

- Evaluation your settings and select End.

Run your AWS Glue crawler

Subsequent, we run our crawler to organize a desk with partitions within the Information Catalog.

- On the AWS Glue console, select Crawlers.

- Choose the crawler we simply created.

- Select Run crawler.

When the crawler is full, you obtain a notification indicating {that a} desk has been created.

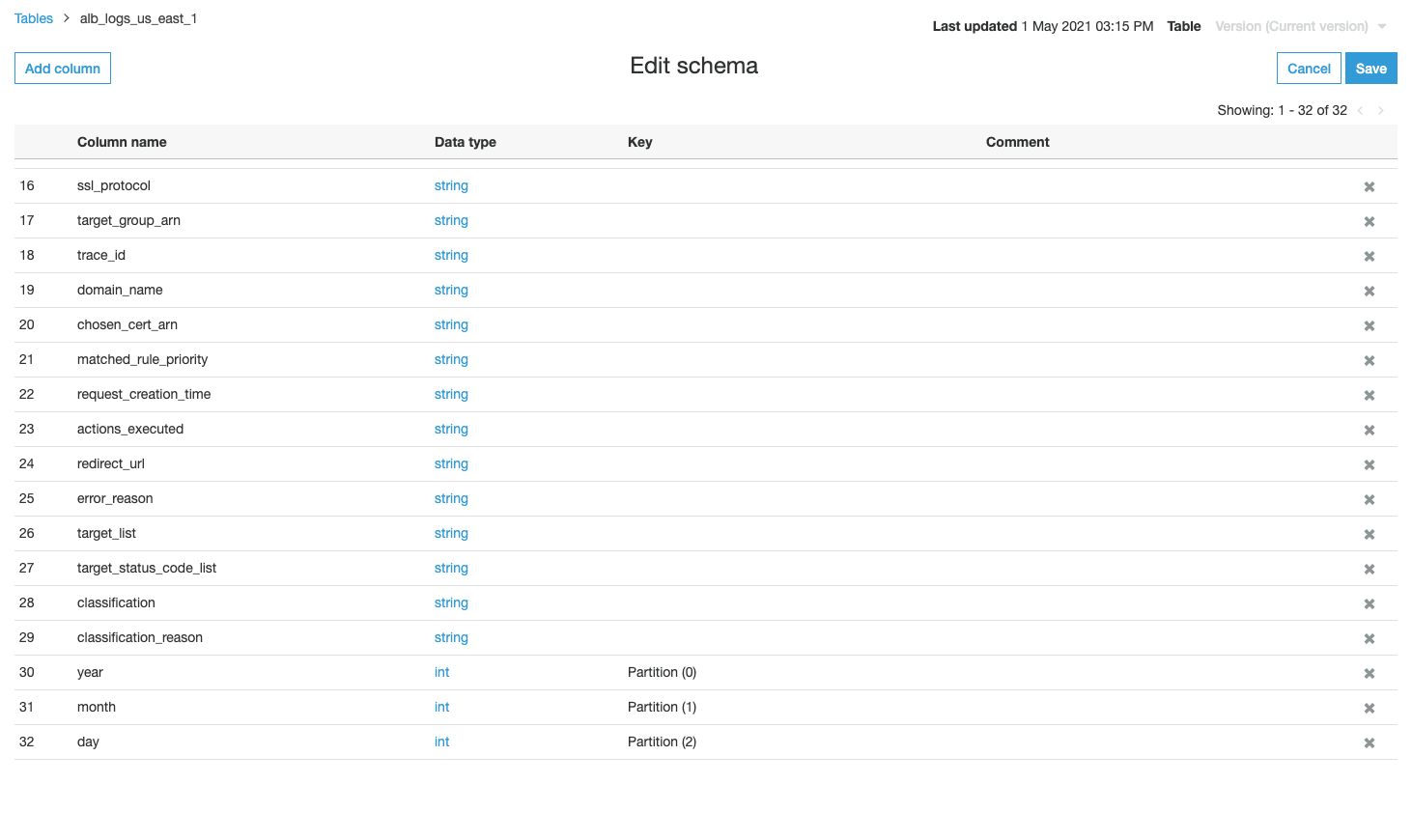

Subsequent, we overview and edit the schema.

- Below Databases, select Tables.

- Select the desk

alb_logs_<area>. - Cross-check the column title and corresponding knowledge kind.

The desk has three columns: partiion_0, partition_1, and partition_2.

Analyze the info utilizing Athena

Subsequent, we analyze our knowledge by querying the entry logs. We evaluate the question velocity between the next tables:

- Non-partitioned desk – All knowledge is handled as a single desk

- Partitioned desk – Information is partitioned by 12 months, month, and day

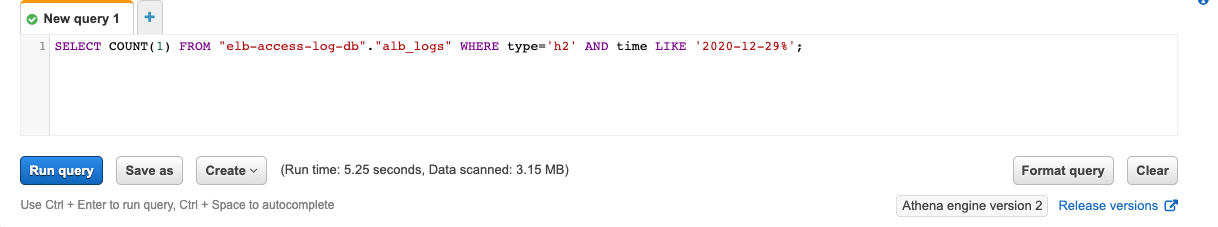

Question the non-partitioned desk

With the non-partitioned desk, if we need to question entry logs on a particular date, we’ve to jot down the WHERE clause utilizing the LIKE operator as a result of the info column was interpreted as a string. See the next code:

The question takes 5.25 seconds to finish, with 3.15 MB knowledge scanned.

Question the partitioned desk

With the 12 months, month, and day columns as partitions, we will use the next assertion to question entry logs on the identical day:

This time the question takes just one.89 seconds to finish, with 25.72 KB knowledge scanned.

This question is quicker and prices much less (as a result of much less knowledge is scanned) resulting from partition pruning.

Clear up

To keep away from incurring future prices, delete the assets created within the Information Catalog, and delete the AWS Glue crawler.

Abstract

On this publish, we illustrated the best way to create an AWS Glue crawler that populates ALB logs metadata within the AWS Glue Information Catalog robotically with partitions by 12 months, month, and day. With partition pruning, we will enhance question efficiency and related prices in Athena.

You probably have questions or ideas, please depart a remark.

In regards to the Authors

Ray Wang is a Options Architect at AWS. With 8 years of expertise within the IT business, Ray is devoted to constructing fashionable options on the cloud, particularly in huge knowledge and machine studying. As a hungry go-getter, he handed all 12 AWS certificates to make his technical area not solely deep however broad. He likes to learn and watch sci-fi motion pictures in his spare time.

Ray Wang is a Options Architect at AWS. With 8 years of expertise within the IT business, Ray is devoted to constructing fashionable options on the cloud, particularly in huge knowledge and machine studying. As a hungry go-getter, he handed all 12 AWS certificates to make his technical area not solely deep however broad. He likes to learn and watch sci-fi motion pictures in his spare time.

Corvus Lee is a Information Lab Options Architect at AWS. He enjoys all types of data-related discussions with clients, from high-level like white boarding a knowledge lake structure, to the main points of information modeling, writing Python/Spark code for knowledge processing, and extra.

Corvus Lee is a Information Lab Options Architect at AWS. He enjoys all types of data-related discussions with clients, from high-level like white boarding a knowledge lake structure, to the main points of information modeling, writing Python/Spark code for knowledge processing, and extra.

[ad_2]