
As organizations wrangle with the explosive growth in data volume they are presented with today, efficiency and scalability of storage become pivotal to operating a successful data platform for driving business insight and value. Apache Ozone is a distributed, scalable, and high-performance object store, available with Cloudera Data Platform (CDP) Private Cloud. CDP Private Cloud uses Ozone to separate storage from compute, which enables it to handle billions of objects on-premises, akin to Public Cloud deployments that benefit from the likes of S3. Ozone is also fully compatible with the S3 API*, establishing it as a future-proof solution and enabling CDP Hybrid Cloud to meet the growing demand for a hybrid data cloud.

Apache Ozone has added a new feature called File System Optimization (“FSO”) in HDDS-2939. This feature has been merged upstream into the master branch and will be available in the next Ozone release. FSO provides file system semantics (a hierarchical namespace) efficiently while retaining the inherent scalability of an object store. With FSO, Apache Ozone guarantees atomic directory operations, and renaming or deleting a directory is a simple metadata operation even when the directory contains a large number of sub-paths (directories/files). This gives Apache Ozone a significant performance advantage over other object stores in the data analytics ecosystem. Moreover, Ozone integrates seamlessly with Apache data analytics tools like Hive, Spark and Impala. Use cases such as Apache Hive DROP TABLE queries, recursive directory deletion, and directory move operations are now much faster and strongly consistent, with no partial results in case of a failure.
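To make the difference between a flat key space and a hierarchical namespace concrete, here is a minimal toy model. It is a hypothetical sketch, not Ozone's actual Ozone Manager schema: `FlatKeyStore` stands in for a classic object store where every file is a full-path key, and `TreeStore` stands in for a hierarchical namespace such as FSO. Both class names and the `ops` counter are invented for illustration.

```python
from dataclasses import dataclass, field


class FlatKeyStore:
    """Toy object-store namespace: every file is stored under its full path."""

    def __init__(self) -> None:
        self.keys: dict[str, bytes] = {}
        self.ops = 0  # metadata operations performed by renames

    def put(self, path: str, data: bytes) -> None:
        self.keys[path] = data

    def exists(self, path: str) -> bool:
        return path in self.keys

    def rename_dir(self, src: str, dst: str) -> None:
        # Every key under the source prefix is rewritten one by one,
        # so the cost grows with the number of sub-paths.
        prefix = src.rstrip("/") + "/"
        for key in [k for k in self.keys if k.startswith(prefix)]:
            new_key = dst.rstrip("/") + "/" + key[len(prefix):]
            self.keys[new_key] = self.keys.pop(key)
            self.ops += 1


@dataclass
class Dir:
    children: dict = field(default_factory=dict)  # name -> Dir or file bytes


class TreeStore:
    """Toy hierarchical namespace: directories are real entries with children."""

    def __init__(self) -> None:
        self.root = Dir()
        self.ops = 0

    def _walk(self, parts: list, create: bool = False):
        node = self.root
        for name in parts:
            if not isinstance(node, Dir):
                raise NotADirectoryError("/".join(parts))
            if name not in node.children:
                if not create:
                    raise FileNotFoundError("/".join(parts))
                node.children[name] = Dir()
            node = node.children[name]
        return node

    def put(self, path: str, data: bytes) -> None:
        *parents, name = path.strip("/").split("/")
        self._walk(parents, create=True).children[name] = data

    def exists(self, path: str) -> bool:
        try:
            self._walk(path.strip("/").split("/"))
            return True
        except (FileNotFoundError, NotADirectoryError):
            return False

    def rename_dir(self, src: str, dst: str) -> None:
        *src_parents, src_name = src.strip("/").split("/")
        *dst_parents, dst_name = dst.strip("/").split("/")
        src_parent = self._walk(src_parents)
        dst_parent = self._walk(dst_parents, create=True)
        # Re-link a single directory entry; everything beneath it moves along.
        dst_parent.children[dst_name] = src_parent.children.pop(src_name)
        self.ops += 1


flat, tree = FlatKeyStore(), TreeStore()
for i in range(1000):
    path = f"/warehouse/tmp/part-{i:05d}"
    flat.put(path, b"x")
    tree.put(path, b"x")

flat.rename_dir("/warehouse/tmp", "/warehouse/final")
tree.rename_dir("/warehouse/tmp", "/warehouse/final")
print(flat.ops, tree.ops)  # 1000 1
```

The point of the model is only the shape of the cost: the flat store does work proportional to the number of files under the directory, while the tree store updates one entry no matter how large the subtree is.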

Apache Ozone supports interoperability of the same data across use cases. For example, a user can ingest data into Apache Ozone using the FileSystem API, and the same data can be accessed via the Ozone S3 API. This can improve the efficiency of a user's platform built on an on-prem object store.
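As an illustration of "same data, two APIs", the helper below maps a FileSystem-style `o3fs://` URI to the bucket and key an S3 client would use. It is a hypothetical sketch resting on two assumptions: that the o3fs authority has the form `<bucket>.<volume>.<om-host>[:port]`, and that the Ozone S3 gateway exposes buckets of the default volume `s3v` (the default of `ozone.s3g.volume.name`). The function name `o3fs_to_s3` is invented for illustration.

```python
from urllib.parse import urlparse

# Assumption: the S3 gateway serves buckets from this volume (Ozone's default).
S3_GATEWAY_VOLUME = "s3v"


def o3fs_to_s3(uri: str) -> tuple[str, str]:
    """Map an o3fs:// URI to the (bucket, key) pair used through the S3 API.

    Assumes the authority is '<bucket>.<volume>.<om-host>[:port]'.
    """
    parsed = urlparse(uri)
    if parsed.scheme != "o3fs":
        raise ValueError(f"not an o3fs URI: {uri}")
    # .hostname drops any port; the first two labels are bucket and volume.
    bucket, volume, _om_host = parsed.hostname.split(".", 2)
    if volume != S3_GATEWAY_VOLUME:
        raise ValueError(f"volume {volume!r} is not exposed via the S3 gateway by default")
    # The path below the bucket becomes the S3 object key.
    return bucket, parsed.path.lstrip("/")


print(o3fs_to_s3("o3fs://sales.s3v.om1.example.com/2021/06/part-00000"))
# ('sales', '2021/06/part-00000')
```

A file written by a Hadoop job to that o3fs path would then be readable by any S3 client pointed at the Ozone S3 gateway, using bucket `sales` and key `2021/06/part-00000`.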

Please refer to the Apache Ozone documentation for more details about Apache Ozone’s atomicity guarantees.

In this blog post, we look at benchmark results measuring the performance of Apache Hadoop Teragen and of a directory/file rename operation with Apache Ozone (native o3fs) vs. the S3 API*. We enabled Apache Ozone’s FSO feature for the benchmark tests.

Job Committers:

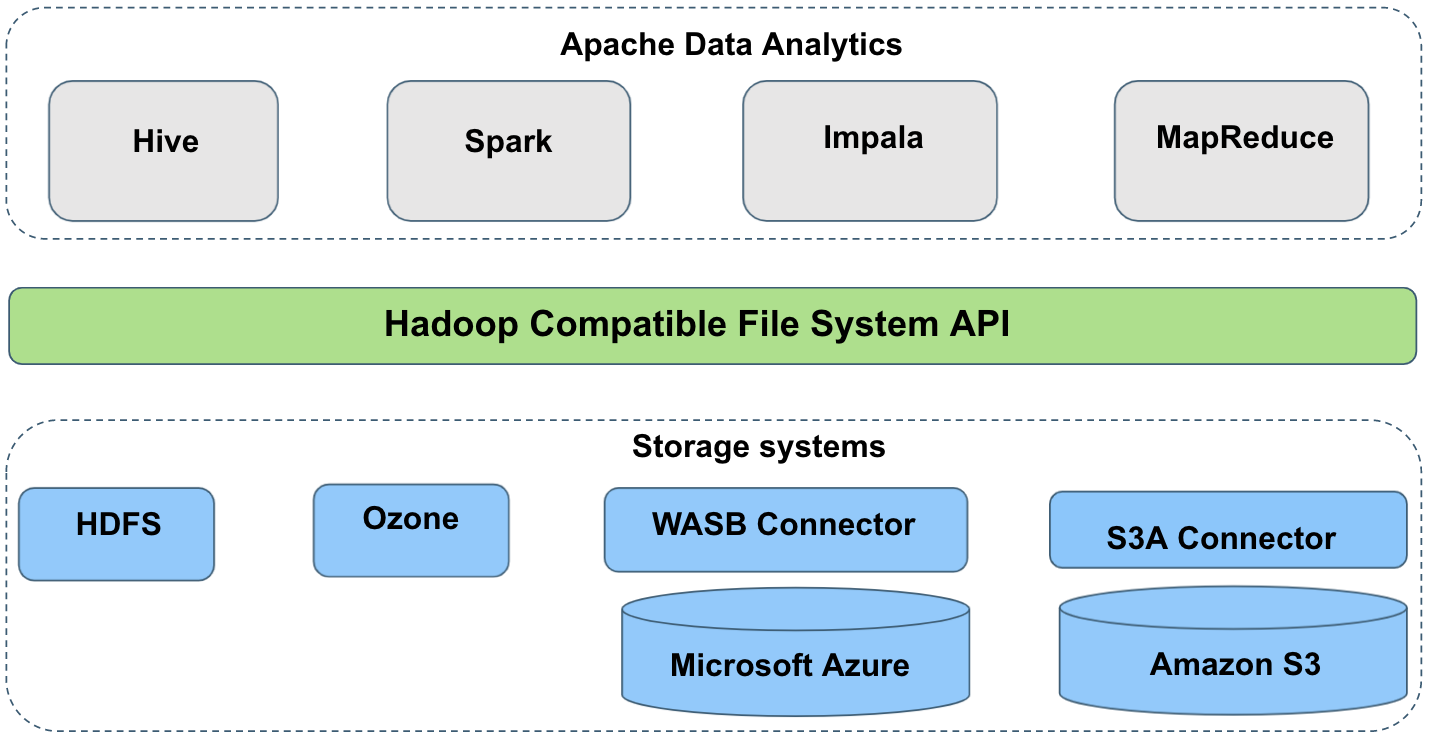

Apache data analytics tools traditionally assume that rename and delete operations are strictly atomic. Most of them, such as Apache Hive, Apache Impala, Apache Spark and MapReduce, write output to temporary locations and then rename it at the end of the job to make it publicly visible. For example, the job committers of Hive and Impala require consistent directory listings and atomic rename operations, which means that readers observe job output on an all-or-nothing basis. Consequently, query performance is directly affected by how quickly the intermediate rename operation completes. The diagram shows a high-level view of Apache data analytics and its interactions with storage systems like Apache HDFS, Apache Ozone and S3-like object stores. Although Ozone is an object store, it does not need any special output committers.
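The stage-then-rename pattern described above can be sketched on a local POSIX filesystem. This is a simplified, hypothetical illustration of the idea, not Hadoop's `FileOutputCommitter`; the function `commit_job` and the `_temporary-` prefix are invented here. It assumes the output directory does not exist yet and that staging and output live on the same filesystem, so `os.rename` is a single atomic operation.

```python
import os
import tempfile


def commit_job(output_dir: str, records: list[str]) -> None:
    """Hypothetical rename-based commit: stage all output, then publish it at once."""
    parent = os.path.dirname(os.path.abspath(output_dir))
    # Stage in a temporary directory next to the target so the final rename
    # stays within one filesystem.
    staging = tempfile.mkdtemp(prefix="_temporary-", dir=parent)
    for i, record in enumerate(records):
        with open(os.path.join(staging, f"part-{i:05d}"), "w") as f:
            f.write(record)
    # Publish with one directory rename. On POSIX this is atomic, so readers
    # see either no output directory or the complete one, never a partial one.
    os.rename(staging, output_dir)


with tempfile.TemporaryDirectory() as root:
    out = os.path.join(root, "result")
    commit_job(out, ["a\n", "b\n"])
    print(sorted(os.listdir(out)))  # ['part-00000', 'part-00001']
```

On a store where "rename" is implemented as copy-then-delete per object, the final step loses both its atomicity and its constant cost, which is why committer performance depends so heavily on the storage layer.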

Performance comparison between Apache Ozone and the S3 API*

- Benchmarking Apache Ozone vs. S3 API* using Teragen:

We ran Apache Hadoop Teragen benchmark tests on a conventional Hadoop stack consisting of YARN and HDFS, side by side with Apache Ozone. We used the Apache Hadoop S3A FileSystem connector to connect to the S3 API* and used Hadoop’s default file committer to commit work to S3.

The following measurements were obtained using Teragen for runs with data sizes of 1GB, 10GB and 100GB respectively. We ran each data size three times and averaged the results, with a maximum deviation of ~10% between runs. The results show that native Ozone is faster than S3 object stores (e.g., the S3 API*).
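Two small pieces of arithmetic sit behind this setup. Teragen takes a row count rather than a byte size, and each Teragen row is 100 bytes, so a target size converts directly into rows. The sketch below assumes decimal gigabytes (10^9 bytes), which the post does not state, and it defines "maximum deviation" as the largest distance of any run from the mean, relative to the mean; that definition is also an assumption. The run times in the usage line are made-up placeholders, not benchmark results.

```python
from statistics import mean

TERAGEN_ROW_BYTES = 100  # Teragen writes fixed-size 100-byte rows
GB = 10**9  # assumption: decimal gigabytes


def teragen_rows(size_bytes: int) -> int:
    """Row count to pass to Teragen for a target output size."""
    return size_bytes // TERAGEN_ROW_BYTES


def summarize_runs(seconds: list[float]) -> tuple[float, float]:
    """Return (mean runtime, max deviation of any run from the mean as a fraction)."""
    avg = mean(seconds)
    return avg, max(abs(s - avg) for s in seconds) / avg


for size in (1 * GB, 10 * GB, 100 * GB):
    print(size // GB, "GB ->", teragen_rows(size), "rows")

# Placeholder timings for three runs, for illustration only.
print(summarize_runs([100.0, 110.0, 90.0]))  # (100.0, 0.1)
```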

- File move performance comparison:

We ran “hadoop fs -mv” tests on a directory with sizes of 1GB, 10GB and 100GB respectively, stored in Apache Ozone and in the S3 API*. The directory contained 30 uniformly sized files. Native Apache Ozone (o3fs) renamed the source directory to the destination directory in the same way HDFS does, unlike S3A (S3 API*, etc.), which copies each object to its new key and then deletes the original.
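A rough work model shows why the two approaches diverge as the directory grows. This is a hypothetical simplification: it counts objects copied and deleted and bytes copied for an S3A-style copy-then-delete rename, and ignores listing calls, directory markers, multipart copies and delete batching. The function `rename_work` is invented for illustration; only the 30-file directory and the three sizes come from the test above.

```python
GB = 10**9  # assumption: decimal gigabytes
NUM_FILES = 30  # the test directory held 30 uniformly sized files


def rename_work(total_bytes: int, num_files: int) -> dict[str, dict[str, int]]:
    """Rough work model for renaming one directory (hypothetical simplification).

    copy_delete: S3A-style rename that copies every object to its new key and
    then deletes the original, so the bytes copied scale with directory size.
    namespace: hierarchical-namespace rename that updates one directory entry,
    independent of how much data sits beneath it.
    """
    return {
        "copy_delete": {
            "objects_copied": num_files,
            "objects_deleted": num_files,
            "bytes_copied": total_bytes,
        },
        "namespace": {"metadata_updates": 1, "objects_copied": 0, "bytes_copied": 0},
    }


for size_gb in (1, 10, 100):
    work = rename_work(size_gb * GB, NUM_FILES)
    per_file_gb = work["copy_delete"]["bytes_copied"] / NUM_FILES / GB
    print(
        f"{size_gb:>3} GB: copy+delete copies {per_file_gb:.2f} GB x {NUM_FILES} files; "
        f"namespace rename: {work['namespace']['metadata_updates']} metadata update"
    )
```

Even though S3 copies are performed server-side, the store still has to move every byte, whereas a namespace rename costs the same for 1GB as for 100GB.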

The following chart shows that Ozone’s performance for the move operation is of the same order as HDFS while retaining the atomicity guarantee. We ran each directory size three times and averaged the results, with a maximum deviation of ~10% between runs.

Test Environment Details:

The cluster consisted of 10 uniform physical nodes with 40-core Intel® Xeon® processors, 128 GB of RAM, 3 x 2 TB disks, 1 x 1 TB disk and a 10 Gb/s network, configured with 3 dedicated disks for data storage. The nodes ran CentOS 7 and Cloudera Runtime 7.1.7, which includes Hadoop 3.1.1, ZooKeeper 3.5.5 and Ozone built from the Apache master branch, version 1.1.0, GitHub commit hash 19ed79464ca9ed2210ca8ac47a4736fb67d8bd3e.

SSL/TLS was turned off and the cluster ran in unsecure mode. High availability was enabled for the Apache Ozone service.

We used the Apache Hadoop S3A FileSystem connector to connect to the AWS S3 object store and used Hadoop’s default file committer to commit work to S3.

Conclusion

The benchmark results showed that Apache Ozone with the File System Optimization (“FSO”) feature enabled was faster than an S3 API*-like object store, making it very attractive for high-performance, data-intensive workloads. With FSO, Ozone directory/file rename and delete operations are strongly consistent and deliver deterministic performance regardless of how many sub-paths (directories/files) a directory contains.

In short, Ozone with FSO helps users achieve the same atomicity guarantees as HDFS for job and task commits, making it natively integrated with Apache data analytics tools like Hive, Spark and Impala without the need for an S3Guard-like layer, while retaining its performance characteristics. Ozone in CDP Private Cloud provides out-of-the-box security integration with Apache Ranger and Apache Atlas. In addition, data stored in Ozone can be shared between use cases deployed as part of CDP as well as external third-party analytics, eliminating the need for data duplication, which in turn reduces risk and optimizes resource utilization.

Further Reading

Apache Ozone – Object Store Overview

Apache Ozone – Object Store Architecture

S3 API* – refers to Amazon S3 implementation of the S3 API protocol.
