
As organizations across sectors grapple with the opportunities and challenges presented by using large language models (LLMs), the infrastructure needed to build, train, test, and deploy LLMs presents its own unique challenges. As part of the SEI’s recent investigation into use cases for LLMs within the Intelligence Community (IC), we needed to deploy compliant, cost-effective infrastructure for research and development. In this post, we describe current challenges and the state of the art of cost-effective AI infrastructure, and we share five lessons learned from our own experiences standing up an LLM for a specialized use case.

The Challenge of Architecting MLOps Pipelines

Architecting machine learning operations (MLOps) pipelines is a difficult process with many moving parts, including data sets, workspace, logging, compute resources, and networking, and all of these parts must be considered during the design phase. Compliant, on-premises infrastructure requires advance planning, which can be a luxury in rapidly advancing disciplines such as AI. By splitting duties between an infrastructure team and a development team who work closely together, the project requirements for accomplishing ML training and for deploying the resources that make the ML system succeed can be addressed in parallel. Splitting the duties also encourages collaboration on the project and reduces project strain, such as time constraints.

Approaches to Scaling an Infrastructure

The current state of the art is a multi-user, horizontally scalable environment located on an organization’s premises or in a cloud ecosystem. Experiments are containerized or stored in a way that makes them easy to replicate or migrate across environments. Data is stored in individual components and migrated or integrated when necessary. As ML models become more complex and the amount of data they use grows, AI teams may need to increase their infrastructure’s capabilities to maintain performance and reliability. Specific approaches to scaling can dramatically affect infrastructure costs.
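An experiment is easy to replicate or migrate when everything needed to re-run it travels with it. The following is one hypothetical way to capture that as a serializable manifest in Python. The `ExperimentSpec` name, its fields, and the example image reference are illustrative assumptions, not a format used in the Mayflower Project.

```python
import json
from dataclasses import dataclass, asdict, field

@dataclass(frozen=True)
class ExperimentSpec:
    """Hypothetical manifest: everything needed to re-run an experiment elsewhere."""
    name: str
    image: str                 # container image reference, pinned by tag or digest
    command: list[str]         # entry point run inside the container
    dataset_uri: str           # points at the shared source data set, not a private copy
    seed: int = 0              # fixed seed so reruns are comparable
    env: dict[str, str] = field(default_factory=dict)

    def to_json(self) -> str:
        # sort_keys gives a stable, diff-friendly file to keep next to results
        return json.dumps(asdict(self), sort_keys=True, indent=2)

    @classmethod
    def from_json(cls, text: str) -> "ExperimentSpec":
        return cls(**json.loads(text))

spec = ExperimentSpec(
    name="lora-baseline",
    image="registry.example.org/llm-train:1.0",  # hypothetical registry and tag
    command=["python", "train.py"],
    dataset_uri="/mnt/backbone/datasets/corpus",  # hypothetical path
    seed=42,
)
```

Storing the JSON next to the experiment's outputs lets another environment rebuild the same run from the manifest alone.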

When deciding how to scale an environment, an engineer must consider factors such as cost, the speed of a given backbone, whether a given project can leverage certain deployment schemes, and overall integration objectives. Horizontal scaling is the use of multiple machines in tandem to distribute workloads across all available infrastructure. Vertical scaling adds storage, memory, graphics processing units (GPUs), and so on to existing machines to improve system productivity while lowering cost. This type of scaling is particularly applicable to environments that have already scaled horizontally, or that see a low volume of workloads but require better performance.

Generally, both vertical and horizontal scaling can be cost effective, and a horizontally scaled system offers a more granular level of control. In either case it is possible, and highly recommended, to identify a trigger function for activating and deactivating costly computing resources. You can then implement a system under that function that creates and destroys computing resources as needed to minimize their overall time of operation. This strategy reduces costs by avoiding overburn and idle resources, which you are otherwise still paying for, or by allocating those resources to other jobs. Adopting robust orchestration and horizontal scaling mechanisms, such as containers, provides granular control, which allows for clean resource utilization while lowering operating costs, particularly in a cloud environment.
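A trigger function can be as simple as a rule that maps current demand to a target number of costly nodes. The minimal sketch below assumes demand is measured as outstanding (queued plus running) jobs. The thresholds in `ScalingPolicy` are hypothetical. In a real deployment, `reconcile` would call your orchestrator's or cloud provider's API to create or destroy nodes; that call is not shown here.

```python
from dataclasses import dataclass

@dataclass
class ScalingPolicy:
    jobs_per_node: int = 2                 # hypothetical: concurrent jobs one GPU node can absorb
    idle_minutes_before_release: int = 15  # hypothetical grace period before tearing nodes down
    max_nodes: int = 8                     # hypothetical budget cap

def desired_nodes(outstanding_jobs: int, current_nodes: int,
                  idle_minutes: int, policy: ScalingPolicy) -> int:
    """Trigger function: how many costly GPU nodes should exist right now.

    outstanding_jobs counts both queued and running jobs.
    """
    if outstanding_jobs > 0:
        # Enough nodes for all outstanding jobs (ceiling division), capped by budget.
        needed = -(-outstanding_jobs // policy.jobs_per_node)
        return min(needed, policy.max_nodes)
    if idle_minutes >= policy.idle_minutes_before_release:
        # Idle long enough: release everything so nothing is billed while unused.
        return 0
    # Recently busy: keep current capacity in case more work arrives soon.
    return current_nodes

def reconcile(current_nodes: int, target_nodes: int) -> str:
    """Describe the create/destroy action a provisioning hook would take."""
    if target_nodes > current_nodes:
        return f"create {target_nodes - current_nodes} node(s)"
    if target_nodes < current_nodes:
        return f"destroy {current_nodes - target_nodes} node(s)"
    return "no change"
```

For example, with the default policy, five outstanding jobs on one node give a target of three nodes, so `reconcile(1, 3)` asks for two more. Twenty idle minutes with an empty queue give a target of zero.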

Lessons Learned from Project Mayflower

From May to September 2023, the SEI conducted the Mayflower Project to explore how the Intelligence Community might set up an LLM, customize LLMs for specific use cases, and evaluate the trustworthiness of LLMs across use cases. You can read more about Mayflower in our report, A Retrospective in Engineering Large Language Models for National Security. Our team found that three factors contributed directly to the success of our project: the ability to rapidly deploy compute environments based on project needs, data security, and ensuring system availability. We share the following lessons learned to help others build AI infrastructures that meet their needs for cost, speed, and quality.

1. Account for your assets and estimate your needs up front.

Consider each piece of the environment an asset: data, compute resources for training, and evaluation tools are just a few examples of the assets that require consideration when planning. When these components are identified and properly orchestrated, they can work together efficiently as a system to deliver results and capabilities to end users. Identifying your assets begins with evaluating the data and framework the teams will be working with. Identifying each component of your environment efficiently requires expertise from both ML engineers and infrastructure engineers, and ideally cross training and collaboration between them.
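One lightweight way to make the asset inventory concrete is to record each asset with its kind and the team accountable for it. You can then attach rough capacity estimates. The sketch below is hypothetical throughout. The `Asset`/`Inventory` structure is illustrative. `weights_gib` is a common rule of thumb (parameters × bytes per parameter), not a figure from the Mayflower report. It gives only a lower bound because it ignores activations, gradients, optimizer state, and framework overhead.

```python
from dataclasses import dataclass, field

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}

def weights_gib(num_params: float, dtype: str = "fp16") -> float:
    """GiB needed just to hold model weights at a given precision (a lower bound)."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30

@dataclass
class Asset:
    name: str
    kind: str    # e.g. "data", "compute", "evaluation"
    owner: str   # e.g. "ml" or "infra": the team accountable for it; "" if unassigned

@dataclass
class Inventory:
    assets: list[Asset] = field(default_factory=list)

    def add(self, name: str, kind: str, owner: str) -> None:
        self.assets.append(Asset(name, kind, owner))

    def by_kind(self, kind: str) -> list[str]:
        return [a.name for a in self.assets if a.kind == kind]

    def unowned(self) -> list[str]:
        # Assets nobody has claimed yet are planning gaps to resolve early.
        return [a.name for a in self.assets if not a.owner]
```

For instance, a hypothetical 7-billion-parameter model in fp16 needs about 13 GiB for its weights alone. That lower bound helps when deciding which GPUs are even candidates.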

2. Build in time for evaluating toolkits.

Some toolkits will work better than others, and evaluating them can be a lengthy process that needs to be accounted for early on. If your organization has become used to internally developed tools, then external tools may not align with what your team members are familiar with. Platform as a service (PaaS) providers for ML development offer a viable path to get started, but they may not integrate well with tools your organization has developed in-house. During planning, account for the time needed to evaluate or adapt either tool set, and compare these tools against one another when deciding which platform to leverage. Cost and usability are the primary factors you should consider in this comparison; their relative importance will vary depending on your organization’s resources and priorities.
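A weighted decision matrix is one simple way to make such a comparison explicit. Each criterion's weight encodes how much it matters to your organization. In the sketch below, the candidate names, the 1–5 ratings, and the weights are all hypothetical. Ratings are oriented so that higher is always better, so a high "cost" rating means cheaper.

```python
def weighted_score(ratings: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of ratings; higher is better for every criterion."""
    total = sum(weights.values())
    return sum(ratings[criterion] * w for criterion, w in weights.items()) / total

def rank_toolkits(candidates: dict[str, dict[str, float]],
                  weights: dict[str, float]) -> list[tuple[str, float]]:
    """Return (name, score) pairs, best first."""
    scored = [(name, weighted_score(r, weights)) for name, r in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)

# Hypothetical ratings on a 1-5 scale.
candidates = {
    "paas_platform": {"cost": 2, "usability": 5},
    "in_house_stack": {"cost": 4, "usability": 3},
}

budget_first = rank_toolkits(candidates, {"cost": 0.6, "usability": 0.4})
usability_first = rank_toolkits(candidates, {"cost": 0.3, "usability": 0.7})
```

With these numbers, the in-house stack wins when cost dominates (3.6 vs. 3.2), and the PaaS option wins when usability dominates (4.1 vs. 3.3). The same candidates can rank differently depending on organizational priorities.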

3. Design for flexibility.

Implement segmented storage resources so that storage components can be attached to compute resources flexibly. Design your pipeline so that your data, results, and models can be passed easily from one place to another. This approach allows resources to be placed on a common backbone, ensuring fast transfer and the ability to attach, detach, or mount them modularly. A common backbone provides a place to store and call on large data sets and experiment results while maintaining good data hygiene.
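To illustrate segmented storage on a common backbone, the sketch below assumes a hypothetical layout with one shared root split into `datasets`, `checkpoints`, and `results` segments. It emits Docker-style `host:container:mode` volume strings (the form accepted by `docker run -v`) for one experiment's container. Shared inputs are mounted read-only, and each experiment gets its own writable output segments. The directory names and mount points are assumptions for illustration.

```python
from pathlib import Path

class Backbone:
    """Hypothetical layout of a shared storage backbone split into segments."""

    SEGMENTS = ("datasets", "checkpoints", "results")

    def __init__(self, root: str) -> None:
        self.root = Path(root)

    def segment(self, name: str) -> Path:
        if name not in self.SEGMENTS:
            raise ValueError(f"unknown segment: {name}")
        return self.root / name

    def volume_flags(self, experiment: str) -> list[str]:
        """host:container:mode strings for one experiment's container."""
        return [
            # Shared inputs: mounted read-only so no experiment can modify them.
            f"{self.segment('datasets')}:/data:ro",
            # Per-experiment outputs: writable, and detachable without touching others.
            f"{self.segment('checkpoints') / experiment}:/checkpoints:rw",
            f"{self.segment('results') / experiment}:/results:rw",
        ]

flags = Backbone("/mnt/backbone").volume_flags("exp-01")  # hypothetical root path
```

Because every experiment sees the same container paths, you can move an experiment to another machine on the backbone by changing only the host side of each mount.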

One practice that supports flexibility is providing a standard “springboard” for experiments: flexible pieces of hardware that are independently powerful enough to run experiments. The springboard is similar to a sandbox and supports rapid prototyping, and you can reconfigure the hardware for each experiment.

For the Mayflower Project, we implemented separate container workflows in isolated development environments and integrated them using compose scripts. This method allows a job to call on multiple GPUs during its run, based on the resources advertised by the machines that have joined the cluster. The cluster provides multi-node training capabilities within a job submission format for better end-user productivity.
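To illustrate how a job's GPU request can be satisfied from the resources that joined machines advertise, here is a minimal greedy placement sketch. It is not the Mayflower implementation, and a production cluster scheduler would also handle preemption, GPU types, and interconnect topology. The node names are hypothetical. The strategy fills the nodes with the most free GPUs first, which keeps a multi-node job on as few machines as possible.

```python
def allocate_gpus(advertised: dict[str, int], requested: int) -> dict[str, int]:
    """Greedily place a job's GPU request on joined machines.

    advertised maps each node name to the number of free GPUs it reports.
    Returns a plan mapping node name -> GPUs to use on that node.
    """
    if requested <= 0:
        raise ValueError("requested must be positive")
    if requested > sum(advertised.values()):
        raise RuntimeError("not enough free GPUs in the cluster")
    plan: dict[str, int] = {}
    remaining = requested
    # Most free GPUs first; ties broken by name so the plan is deterministic.
    for node, free in sorted(advertised.items(), key=lambda kv: (-kv[1], kv[0])):
        if remaining == 0:
            break
        take = min(free, remaining)
        if take > 0:
            plan[node] = take
            remaining -= take
    return plan

plan = allocate_gpus({"node-a": 2, "node-b": 4, "node-c": 1}, 5)
```

Here the five-GPU request lands on `node-b` (4 GPUs) and `node-a` (1 GPU). `len(plan)` gives the number of nodes the multi-node job spans.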

4. Isolate your data and protect your gold standards.

Properly isolating data can solve a variety of problems. When working collaboratively, it is easy to exhaust storage with redundant data sets. By communicating clearly with your team and defining a standard, common data set source, you can avoid this pitfall. This means that a primary data set must be highly available and provisioned for the level of use your team expects at the time the system is designed, that is, the amount of data and the speed and frequency at which team members need access. The source should be able to support the expected reads from however many team members may need to use this data at any given time to perform their tasks. Any output or transformed data must not be written back into the area where the source data is stored; it should instead go into another working directory or a designated output location. This approach maintains the integrity of the source data set and minimizes unnecessary storage use. It also makes an environment easier to replicate than it would be if the data set and working environment were not isolated.
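Two small guards can enforce this separation in code. The first refuses any output path that resolves inside the protected source directory. The second fingerprints the source so you can detect whether a gold-standard data set has changed. The sketch below is a hypothetical illustration rather than the Mayflower tooling. It uses `Path.is_relative_to`, which requires Python 3.9 or later.

```python
import hashlib
from pathlib import Path

class SourceWriteError(Exception):
    """Raised when an output path would land inside the protected source data set."""

def safe_output_path(source_root: str, output_root: str, name: str) -> Path:
    """Return the resolved output path, refusing anything inside source_root."""
    source = Path(source_root).resolve()
    target = (Path(output_root) / name).resolve()
    # resolve() collapses ".." and follows existing symlinks, so a path like
    # "../gold/x" that escapes the output area is still caught here.
    if target.is_relative_to(source):
        raise SourceWriteError(f"{target} is inside protected source {source}")
    return target

def fingerprint(source_root: str) -> str:
    """SHA-256 over every file's relative path and content hash, in sorted order."""
    root = Path(source_root)
    digest = hashlib.sha256()
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        digest.update(path.relative_to(root).as_posix().encode())
        digest.update(b"\0")
        # Fixed-length per-file digest keeps path/content boundaries unambiguous.
        digest.update(hashlib.sha256(path.read_bytes()).digest())
    return digest.hexdigest()
```

Recording `fingerprint()` when a gold-standard set is published, and checking it before each experiment, gives a cheap way to confirm that results were computed against unmodified source data.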

5. Save costs when working with cloud resources.

Government cloud resources have different availability than commercial resources, and working within those differences often requires additional compensations or compromises. Using an existing on-premises resource can help reduce the costs of cloud operations. In particular, consider using local resources as a springboard in preparation for scaling up. This practice limits overall compute time on expensive resources that, depending on your use case, may be far more powerful than you need for initial testing and evaluation.

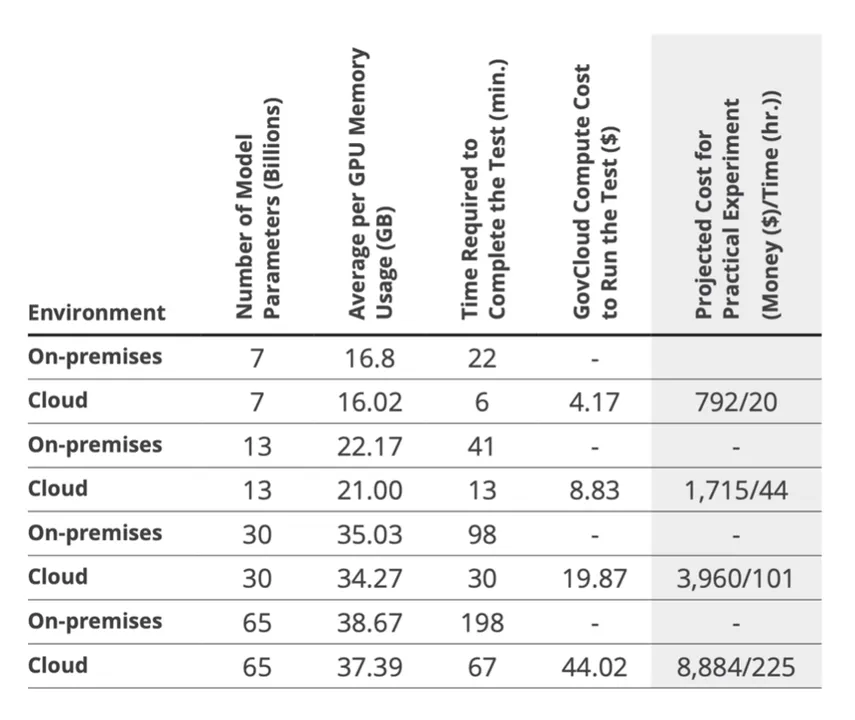

Figure 1: In this table from our report A Retrospective in Engineering Large Language Models for National Security, we provide information on performance benchmark tests for training LLaMA models of different parameter sizes on our custom 500-document set. For the estimates in the rightmost column, we define a realistic experiment as LLaMA with 10k training documents for 3 epochs with GovCloud at $39.33/hour, LoRA (r=1, α=2, dropout = 0.05), and DeepSpeed. At the time of the report, Top Secret rates were $79.0533/hour.
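To make the springboard savings concrete, the following sketch applies the two hourly rates quoted in the Figure 1 caption. The hour counts in the example are hypothetical placeholders, not results from the report. The calculation also ignores the cost of owning and running the local hardware.

```python
# Hourly rates quoted in the Figure 1 caption (USD/hour, at the time of the report).
RATES = {"govcloud": 39.33, "top_secret": 79.0533}

def cloud_cost(hours: float, tier: str) -> float:
    """Cost of running on a cloud tier for the given hours, rounded to cents."""
    return round(hours * RATES[tier], 2)

def springboard_plan(total_hours: float, local_hours: float, tier: str) -> tuple[float, float]:
    """Return (all-cloud cost, cost when local_hours of preliminary work run locally)."""
    if not 0 <= local_hours <= total_hours:
        raise ValueError("local_hours must be between 0 and total_hours")
    return cloud_cost(total_hours, tier), cloud_cost(total_hours - local_hours, tier)

# Hypothetical project: 30 hours of work, 10 of them preliminary testing moved to a springboard.
govcloud = springboard_plan(30, 10, "govcloud")
top_secret = springboard_plan(30, 10, "top_secret")
```

In this hypothetical project, moving 10 of 30 hours to local hardware lowers the GovCloud bill from $1,179.90 to $786.60. At the Top Secret rate, the same shift saves roughly twice as much per hour, because that rate is about twice the GovCloud rate.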

Looking Ahead

Infrastructure is a major consideration as organizations look to build, deploy, and use LLMs and other AI tools. More work is needed, especially to meet challenges in unconventional environments, such as those at the edge.

As the SEI works to advance the discipline of AI engineering, a strong infrastructure base can support the scalability and robustness of AI systems. In particular, designing for flexibility allows developers to scale an AI solution up or down depending on system and use case needs. By protecting data and gold standards, teams can ensure the integrity and support the replicability of experiment results.

As the Department of Defense increasingly incorporates AI into mission solutions, the infrastructure practices outlined in this post can provide cost savings and a shorter runway to fielding AI capabilities. Specific practices, such as establishing a springboard platform, can save time and costs in the long run.
