
Getting a quick and accurate reading of an X-ray or other medical image can be vital to a patient’s health and can even save a life. Obtaining such an assessment depends on the availability of a skilled radiologist, so a rapid response is not always possible. For that reason, says Ruizhi “Ray” Liao, a postdoc and recent PhD graduate at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), “we want to train machines that are capable of reproducing what radiologists do every day.” Liao is first author of a new paper, written with other researchers at MIT and Boston-area hospitals, that is being presented this fall at MICCAI 2021, an international conference on medical image computing.

Although the idea of using computers to interpret images is not new, the MIT-led group is drawing on an underused resource to improve the interpretive abilities of machine learning algorithms: the vast body of radiology reports that radiologists write in routine clinical practice to accompany medical images. The team is also using a concept from information theory called mutual information (a statistical measure of the interdependence of two different variables) to boost the effectiveness of their approach.
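As a rough illustration of the quantity involved, the sketch below computes mutual information for two discrete variables directly from their joint probability table, using the standard definition I(X;Y) = Σ p(x,y) log[p(x,y) / (p(x)p(y))]. The function name `mutual_information` and the toy tables are hypothetical and are not taken from the MIT team's code. Their models work with learned, continuous representations, where mutual information has to be estimated rather than read off a table.

```python
import numpy as np


def mutual_information(joint) -> float:
    """Mutual information I(X;Y), in nats, of a discrete joint distribution.

    joint[i, j] is P(X = i, Y = j); entries must be non-negative and sum to 1.
    """
    joint = np.asarray(joint, dtype=float)
    px = joint.sum(axis=1, keepdims=True)  # marginal P(X), shape (n, 1)
    py = joint.sum(axis=0, keepdims=True)  # marginal P(Y), shape (1, m)
    mask = joint > 0                       # cells with P(x, y) = 0 contribute nothing
    ratio = joint[mask] / (px @ py)[mask]  # P(x, y) / (P(x) P(y)) for nonzero cells
    return float(np.sum(joint[mask] * np.log(ratio)))


# Two toy (hypothetical) joint tables.
independent = [[0.25, 0.25], [0.25, 0.25]]  # knowing X tells you nothing about Y
dependent = [[0.5, 0.0], [0.0, 0.5]]        # knowing X fixes Y exactly
print(mutual_information(independent))
print(mutual_information(dependent))
```

The independent table gives zero, while the fully dependent one gives log 2 (about 0.693 nats), the entropy of a fair coin flip: once either variable is known, the other is completely predictable.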

Here’s how it works: First, a neural network is trained to determine the extent of a disease, such as pulmonary edema, by being presented with numerous X-ray images of patients’ lungs, along with a doctor’s rating of the severity of each case. That information is encapsulated in a collection of numbers. A separate neural network does the same for text, representing its information in a different collection of numbers. A third neural network then integrates the information from images and text in a coordinated way that maximizes the mutual information between the two datasets. “When the mutual information between images and text is high, that means that images are highly predictive of the text and the text is highly predictive of the images,” explains MIT Professor Polina Golland, a principal investigator at CSAIL.
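The article does not spell out how the mutual information is estimated, so the following is only a sketch under stated assumptions. It treats the third network as a “critic” that scores how well an image embedding matches a text embedding, and it uses the InfoNCE contrastive bound, one common lower bound on mutual information, as the quantity to maximize. The linear-plus-tanh encoders (`encode`), the bilinear critic (`critic_scores`), and all sizes are hypothetical stand-ins, and the weights are random rather than trained.

```python
import numpy as np

rng = np.random.default_rng(0)


def encode(features: np.ndarray, weights: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in encoder: a linear map followed by tanh."""
    return np.tanh(features @ weights)


def critic_scores(img_emb: np.ndarray, txt_emb: np.ndarray, W: np.ndarray) -> np.ndarray:
    """Bilinear critic f(x_i, y_j) = x_i^T W y_j for every image/report pair in a batch.

    Returns an (N, N) matrix whose diagonal holds the scores of the true pairs.
    """
    return img_emb @ W @ txt_emb.T


def infonce_bound(scores: np.ndarray) -> float:
    """InfoNCE lower bound on I(image; text), in nats.

    Row i asks: does image i score its own report (column i) above the other N - 1?
    """
    n = scores.shape[0]
    row_max = scores.max(axis=1, keepdims=True)  # subtract the max for a stable log-sum-exp
    log_sum_exp = row_max[:, 0] + np.log(np.exp(scores - row_max).sum(axis=1))
    return float(np.mean(np.diag(scores) - log_sum_exp) + np.log(n))


# Usage with random, untrained stand-ins (all sizes are hypothetical).
n_pairs, img_dim, txt_dim, emb_dim = 8, 32, 16, 4
images = rng.normal(size=(n_pairs, img_dim))   # e.g., pooled X-ray features
reports = rng.normal(size=(n_pairs, txt_dim))  # e.g., pooled report features
img_emb = encode(images, rng.normal(size=(img_dim, emb_dim)))
txt_emb = encode(reports, rng.normal(size=(txt_dim, emb_dim)))
W = rng.normal(size=(emb_dim, emb_dim))
bound = infonce_bound(critic_scores(img_emb, txt_emb, W))
print(f"InfoNCE estimate: {bound:.3f} nats (at most log N = {np.log(n_pairs):.3f})")
```

During training, the encoder and critic weights would be updated to increase this estimate; that gradient step is omitted here. With a batch of N pairs, the InfoNCE estimate can never exceed log N, which is one reason contrastive methods tend to favor large batches.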

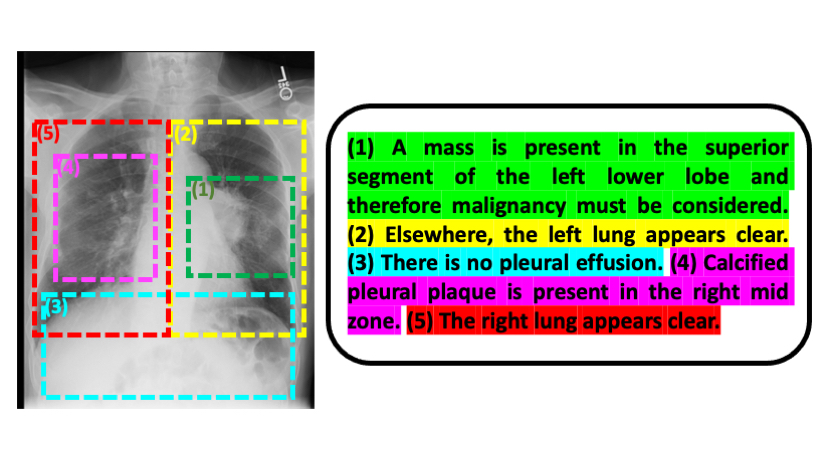

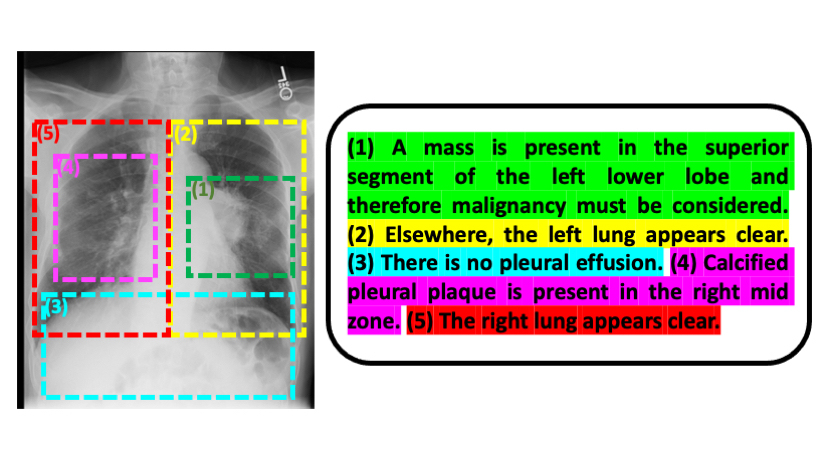

Liao, Golland, and their colleagues have introduced another innovation that confers several advantages: Rather than working from entire images and radiology reports, they break the reports down into individual sentences and pair each sentence with the portion of the image it pertains to. Doing things this way, Golland says, “estimates the severity of the disease more accurately than if you view the whole image and whole report. And because the model is examining smaller pieces of data, it can learn more readily and has more samples to train on.”
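The article does not describe how sentences are linked to image regions. One simple, hypothetical way to picture the local pairing is to split an image's feature map into a grid of regions and match each report sentence to the region whose embedding scores highest against it. Each resulting sentence-region pair can then serve as its own training sample, which is one way to read the “more samples to train on” remark. The function `match_sentences_to_regions`, the dot-product scoring, and the toy embeddings are illustrative assumptions, not the authors' mechanism.

```python
import numpy as np


def match_sentences_to_regions(sentence_emb: np.ndarray, region_emb: np.ndarray):
    """For each sentence, find the image region it scores highest against.

    sentence_emb: (num_sentences, d); region_emb: (num_regions, d).
    Returns (best_region_index, best_score), one entry per sentence.
    """
    scores = sentence_emb @ region_emb.T  # dot-product similarity, shape (S, R)
    best = scores.argmax(axis=1)          # index of the best-matching region per sentence
    return best, scores[np.arange(len(best)), best]


# Hypothetical 2x2 grid of 3-d region features from one image.
feature_map = np.zeros((2, 2, 3))
feature_map[0, 1] = [1.0, 0.0, 0.0]
feature_map[1, 0] = [0.0, 1.0, 0.0]
feature_map[1, 1] = [0.0, 0.0, 1.0]
regions = feature_map.reshape(-1, 3)  # 4 regions, numbered in row-major order

# Hypothetical embeddings of two sentences from the same report.
sentences = np.array([[0.0, 0.9, 0.1],
                      [0.8, 0.0, 0.2]])

best, score = match_sentences_to_regions(sentences, regions)
print(best, score)
```

Here the first sentence is paired with region 2 and the second with region 1, so a single image-report pair yields two local training samples instead of one global sample.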

While Liao finds the computer science aspects of this project fascinating, a primary motivation for him is “to develop technology that is clinically meaningful and applicable to the real world.”

To that end, a pilot program is currently underway at Beth Israel Deaconess Medical Center to see how MIT’s machine learning model could influence the way doctors managing heart failure patients make decisions, especially in an emergency room setting where speed is of the essence.

The model could have very broad applicability, according to Golland. “It could be used for any kind of imagery and associated text, inside or outside the medical realm. This general approach, moreover, could be applied beyond images and text, which is exciting to think about.”

Liao wrote the paper with MIT CSAIL postdoc Daniel Moyer and Golland; Miriam Cha and Keegan Quigley at MIT Lincoln Laboratory; William M. Wells at Harvard Medical School and MIT CSAIL; and clinical collaborators Seth Berkowitz and Steven Horng at Beth Israel Deaconess Medical Center.

The work was sponsored by the NIH NIBIB Neuroimaging Analysis Center, Wistron, the MIT-IBM Watson AI Lab, the MIT Deshpande Center for Technological Innovation, the MIT Abdul Latif Jameel Clinic for Machine Learning in Health (J-Clinic), and MIT Lincoln Laboratory.
