[ad_1]

An instance of our methodology deployed on a Clearpath Jackal floor robotic (left) exploring a suburban atmosphere to discover a visible goal (inset). (Proper) Selfish observations of the robotic.

Think about you’re in an unfamiliar neighborhood with no home numbers and I provide you with a photograph that I took just a few days in the past of my home, which isn’t too distant. When you tried to seek out my home, you would possibly comply with the streets and go across the block on the lookout for it. You would possibly take just a few incorrect turns at first, however finally you’ll find my home. Within the course of, you’ll find yourself with a psychological map of my neighborhood. The subsequent time you’re visiting, you’ll probably be capable to navigate to my home straight away, with out taking any incorrect turns.

Such exploration and navigation habits is simple for people. What would it not take for a robotic studying algorithm to allow this type of intuitive navigation functionality? To construct a robotic able to exploring and navigating like this, we have to study from numerous prior datasets in the true world. Whereas it’s attainable to gather a considerable amount of knowledge from demonstrations, and even with randomized exploration, studying significant exploration and navigation habits from this knowledge may be difficult – the robotic must generalize to unseen neighborhoods, acknowledge visible and dynamical similarities throughout scenes, and study a illustration of visible observations that’s strong to distractors like climate circumstances and obstacles. Since such components may be arduous to mannequin and switch from simulated environments, we sort out these issues by instructing the robotic to discover utilizing solely real-world knowledge.

Formally, we studied the issue of goal-directed exploration for visible navigation in novel environments. A robotic is tasked with navigating to a aim location (G), specified by a picture (o_G) taken at (G). Our methodology makes use of an offline dataset of trajectories, over 40 hours of interactions within the real-world, to study navigational affordances and builds a compressed illustration of perceptual inputs. We deploy our methodology on a cell robotic system in industrial and leisure out of doors areas across the metropolis of Berkeley. RECON can uncover a brand new aim in a beforehand unexplored atmosphere in below 10 minutes, and within the course of construct a “psychological map” of that atmosphere that enables it to then attain objectives once more in simply 20 seconds. Moreover, we make this real-world offline dataset publicly obtainable to be used in future analysis.

RECON, or Rapid Exploration Controllers for Outcome-driven Navigation, explores new environments by “imagining” potential aim photos and making an attempt to succeed in them. This exploration permits RECON to incrementally collect details about the brand new atmosphere.

Our methodology consists of two parts that allow it to discover new environments. The primary element is a realized illustration of objectives. This illustration ignores task-irrelevant distractors, permitting the agent to rapidly adapt to novel settings. The second element is a topological graph. Our methodology learns each parts utilizing datasets or real-world robotic interactions gathered in prior work. Leveraging such giant datasets permits our methodology to generalize to new environments and scale past the unique dataset.

Studying to Signify Objectives

A helpful technique to study complicated goal-reaching habits in an unsupervised method is for an agent to set its personal objectives, primarily based on its capabilities, and try to succeed in them. In actual fact, people are very proficient at setting summary objectives for themselves in an effort to study numerous expertise. Current progress in reinforcement studying and robotics has additionally proven that instructing brokers to set its personal objectives by “imagining” them can lead to studying of spectacular unsupervised goal-reaching expertise. To have the ability to “think about”, or pattern, such objectives, we have to construct a previous distribution over the objectives seen throughout coaching.

For our case, the place objectives are represented by high-dimensional photos, how ought to we pattern objectives for exploration? As a substitute of explicitly sampling aim photos, we as an alternative have the agent study a compact illustration of latent objectives, permitting us to carry out exploration by sampling new latent aim representations, quite than by sampling photos. This illustration of objectives is realized from context-goal pairs beforehand seen by the robotic. We use a variational info bottleneck to study these representations as a result of it supplies two vital properties. First, it learns representations that throw away irrelevant info, comparable to lighting and pixel noise. Second, the variational info bottleneck packs the representations collectively in order that they appear like a selected prior distribution. That is helpful as a result of we will then pattern imaginary representations by sampling from this prior distribution.

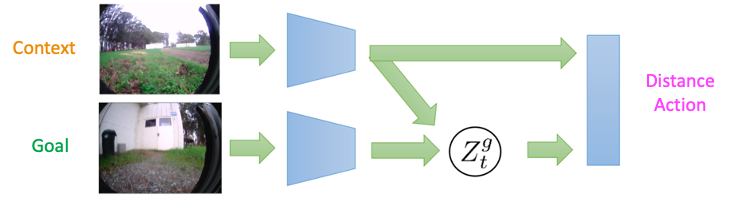

The structure for studying a previous distribution for these representations is proven under. Because the encoder and decoder are conditioned on the context, the illustration (Z_t^g) solely encodes details about relative location of the aim from the context – this permits the mannequin to characterize possible objectives. If, as an alternative, we had a typical VAE (wherein the enter photos are autoencoded), the samples from the prior over these representations wouldn’t essentially characterize objectives which can be reachable from the present state. This distinction is essential when exploring new environments, the place most states from the coaching environments aren’t legitimate objectives.

The structure for studying a previous over objectives in RECON. The context-conditioned embedding learns to characterize possible objectives.

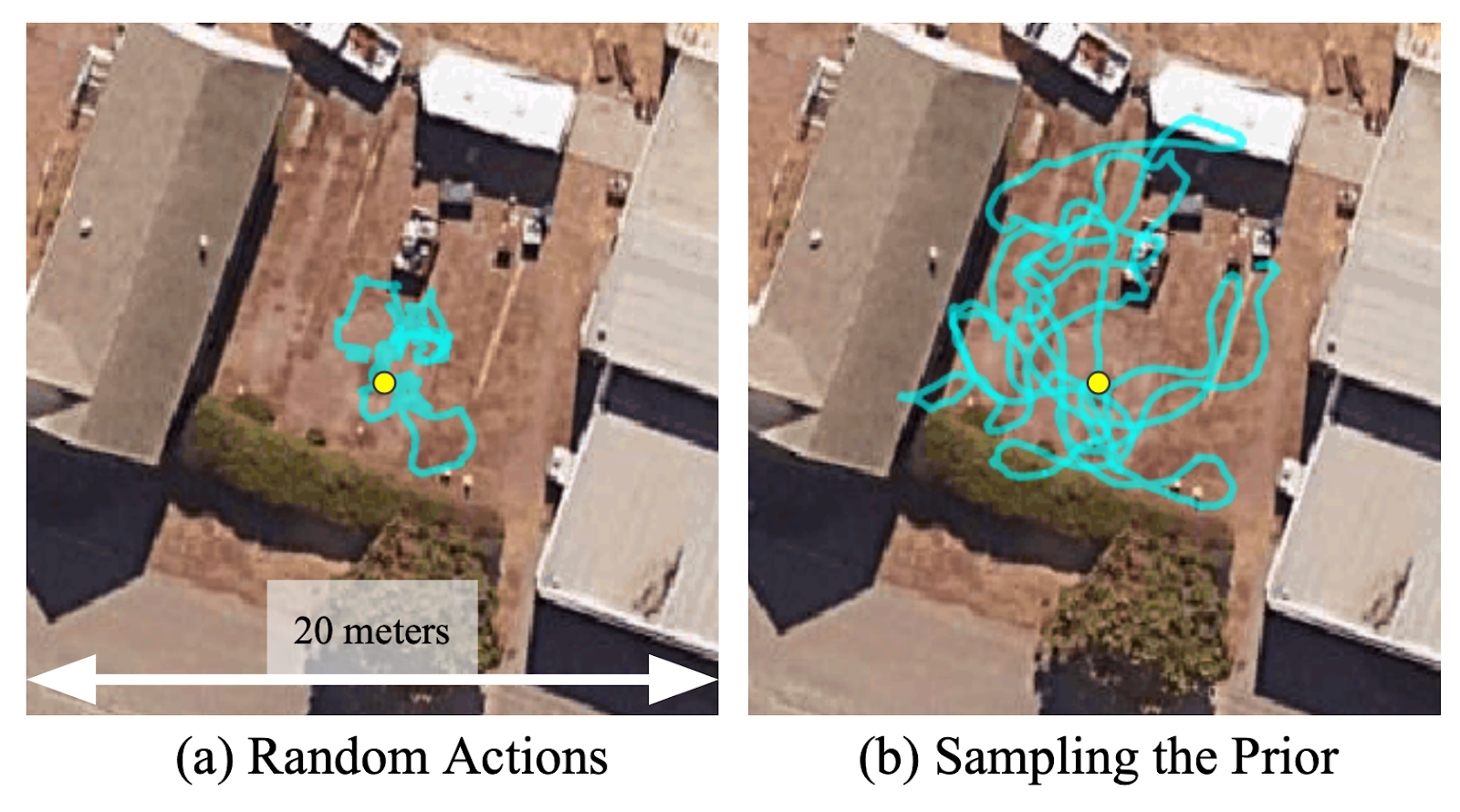

To know the significance of studying this illustration, we run a easy experiment the place the robotic is requested to discover in an undirected method ranging from the yellow circle within the determine under. We discover that sampling representations from the realized prior vastly accelerates the range of exploration trajectories and permits a wider space to be explored. Within the absence of a previous over beforehand seen objectives, utilizing random actions to discover the atmosphere may be fairly inefficient. Sampling from the prior distribution and making an attempt to succeed in these “imagined” objectives permits RECON to discover the atmosphere effectively.

Sampling from a realized prior permits the robotic to discover 5 instances quicker than utilizing random actions.

Objective-Directed Exploration with a Topological Reminiscence

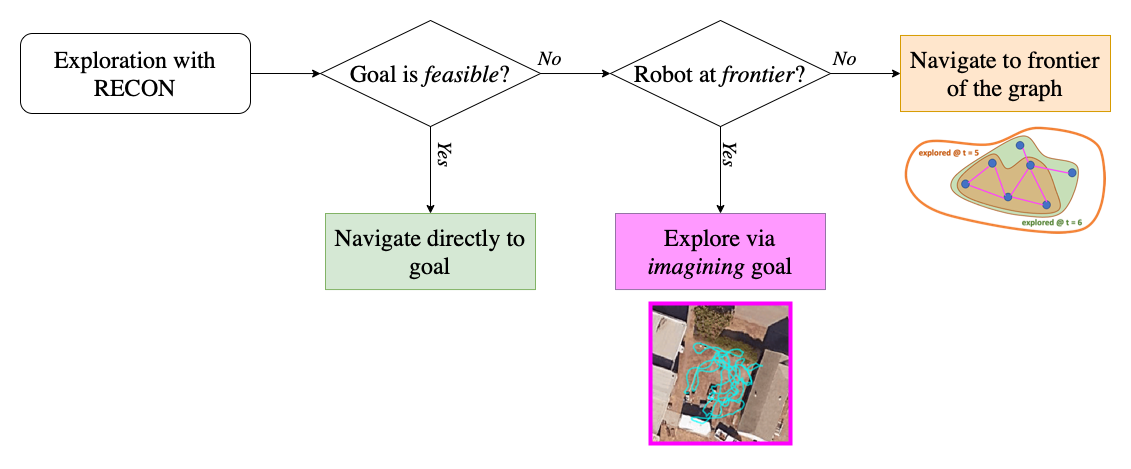

We mix this aim sampling scheme with a topological reminiscence to incrementally construct a “psychological map” of the brand new atmosphere. This map supplies an estimate of the exploration frontier in addition to steerage for subsequent exploration. In a brand new atmosphere, RECON encourages the robotic to discover on the frontier of the map – whereas the robotic will not be on the frontier, RECON directs it to navigate to a beforehand seen subgoal on the frontier of the map.

On the frontier, RECON makes use of the realized aim illustration to study a previous over objectives it will possibly reliably navigate to and are thus, possible to succeed in. RECON makes use of this aim illustration to pattern, or “think about”, a possible aim that helps it discover the atmosphere. This successfully signifies that, when positioned in a brand new atmosphere, if RECON doesn’t know the place the goal is, it “imagines” an appropriate subgoal that it will possibly drive in the direction of to discover and collects info, till it believes it will possibly attain the goal aim picture. This permits RECON to “search” for the aim in an unknown atmosphere, all of the whereas build up its psychological map. Notice that the target of the topological graph is to construct a compact map of the atmosphere and encourage the robotic to succeed in the frontier; it doesn’t inform aim sampling as soon as the robotic is on the frontier.

Illustration of the exploration algorithm of RECON.

Studying from Various Actual-world Knowledge

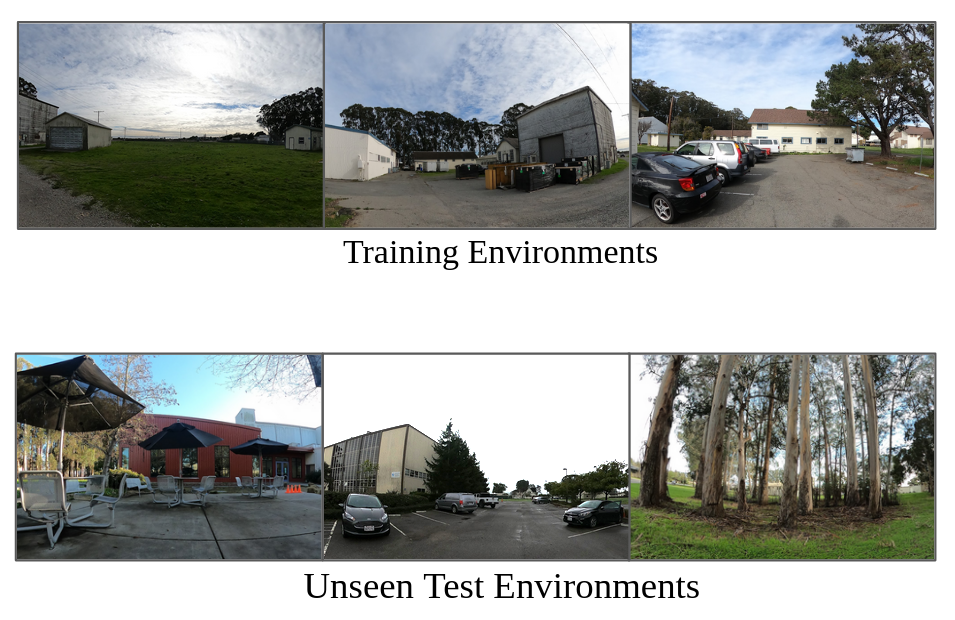

We practice these fashions in RECON solely utilizing offline knowledge collected in a various vary of outside environments. Apparently, we have been capable of practice this mannequin utilizing knowledge collected for 2 impartial initiatives within the fall of 2019 and spring of 2020, and have been profitable in deploying the mannequin to discover novel environments and navigate to objectives throughout late 2020 and the spring of 2021. This offline dataset of trajectories consists of over 40 hours of knowledge, together with off-road navigation, driving by parks in Berkeley and Oakland, parking tons, sidewalks and extra, and is a wonderful instance of noisy real-world knowledge with visible distractors like lighting, seasons (rain, twilight and many others.), dynamic obstacles and many others. The dataset consists of a combination of teleoperated trajectories (2-3 hours) and open-loop security controllers programmed to gather random knowledge in a self-supervised method. This dataset presents an thrilling benchmark for robotic studying in real-world environments because of the challenges posed by offline studying of management, illustration studying from high-dimensional visible observations, generalization to out-of-distribution environments and test-time adaptation.

We’re releasing this dataset publicly to assist future analysis in machine studying from real-world interplay datasets, take a look at the dataset web page for extra info.

We practice from numerous offline knowledge (high) and check in new environments (backside).

RECON in Motion

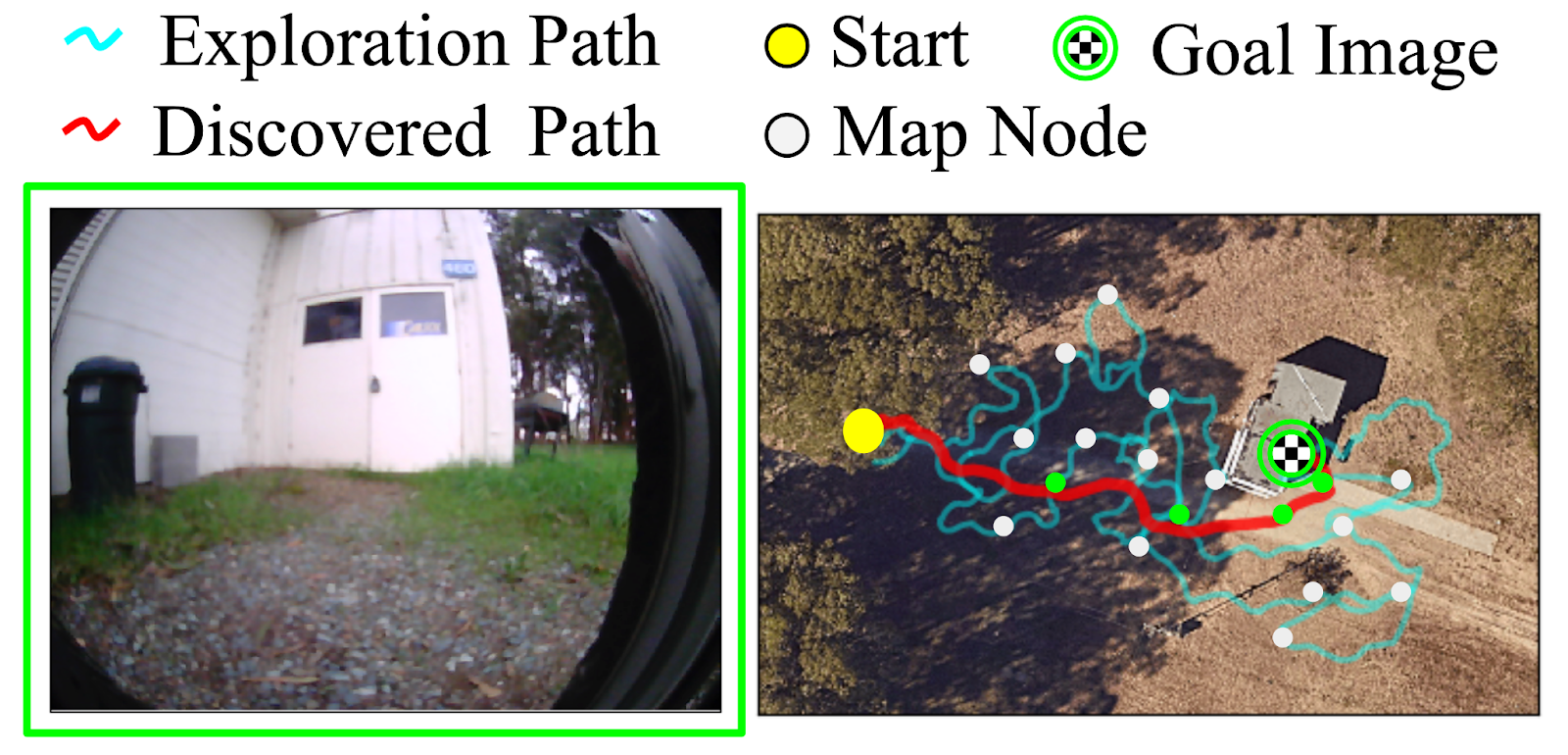

Placing these parts collectively, let’s see how RECON performs when deployed in a park close to Berkeley. Notice that the robotic has by no means seen photos from this park earlier than. We positioned the robotic in a nook of the park and supplied a goal picture of a white cabin door. Within the animation under, we see RECON exploring and efficiently discovering the specified aim. “Run 1” corresponds to the exploration course of in a novel atmosphere, guided by a user-specified goal picture on the left. After it finds the aim, RECON makes use of the psychological map to distill its expertise within the atmosphere to seek out the shortest path for subsequent traversals. In “Run 2”, RECON follows this path to navigate on to the aim with out wanting round.

In “Run 1”, RECON explores a brand new atmosphere and builds a topological psychological map. In “Run 2”, it makes use of this psychological map to rapidly navigate to a user-specified aim within the atmosphere.

An illustration of this two-step course of from an overhead view is present under, exhibiting the paths taken by the robotic in subsequent traversals of the atmosphere:

(Left) The aim specified by the person. (Proper) The trail taken by the robotic when exploring for the primary time (proven in cyan) to construct a psychological map with nodes (proven in white), and the trail it takes when revisiting the identical aim utilizing the psychological map (proven in purple).

To judge the efficiency of RECON in novel environments, examine its habits below a variety of perturbations and perceive the contributions of its parts, we run in depth real-world experiments within the hills of Berkeley and Richmond, which have a various terrain and all kinds of testing environments.

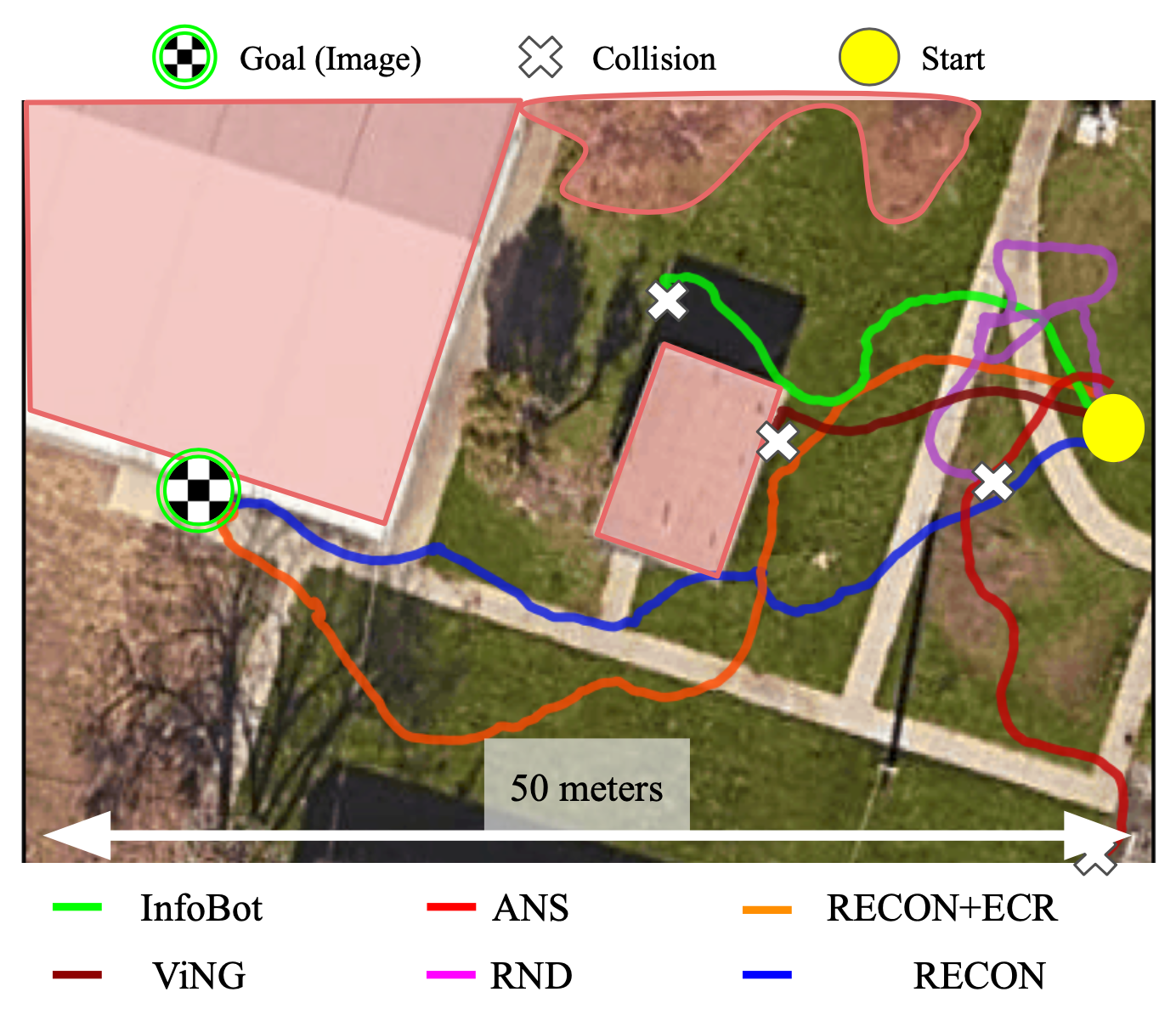

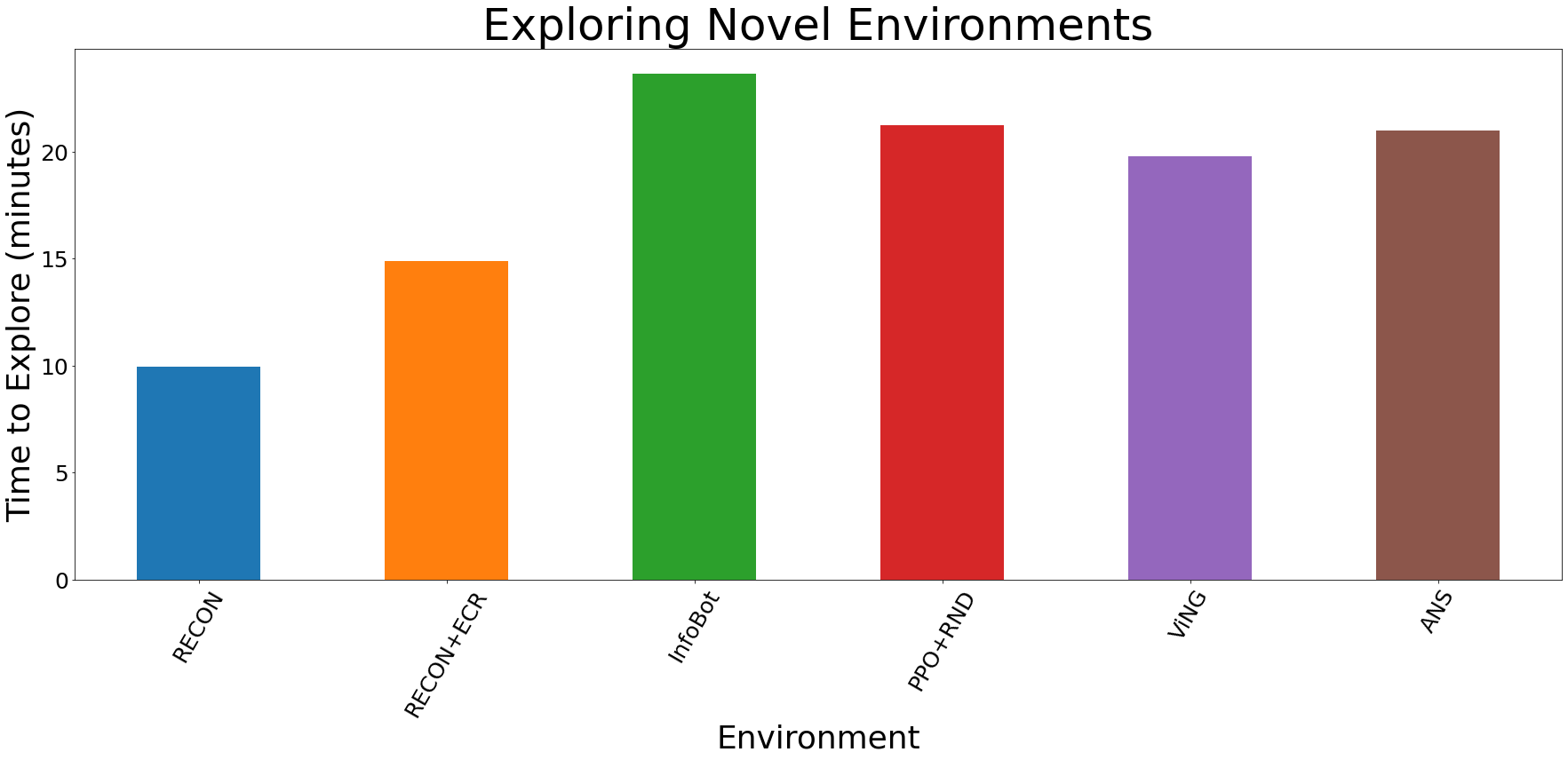

We examine RECON to 5 baselines – RND, InfoBot, Energetic Neural SLAM, ViNG and Episodic Curiosity – every educated on the identical offline trajectory dataset as our methodology, and fine-tuned within the goal atmosphere with on-line interplay. Notice that this knowledge is collected from previous environments and comprises no knowledge from the goal atmosphere. The determine under reveals the trajectories taken by the completely different strategies for one such atmosphere.

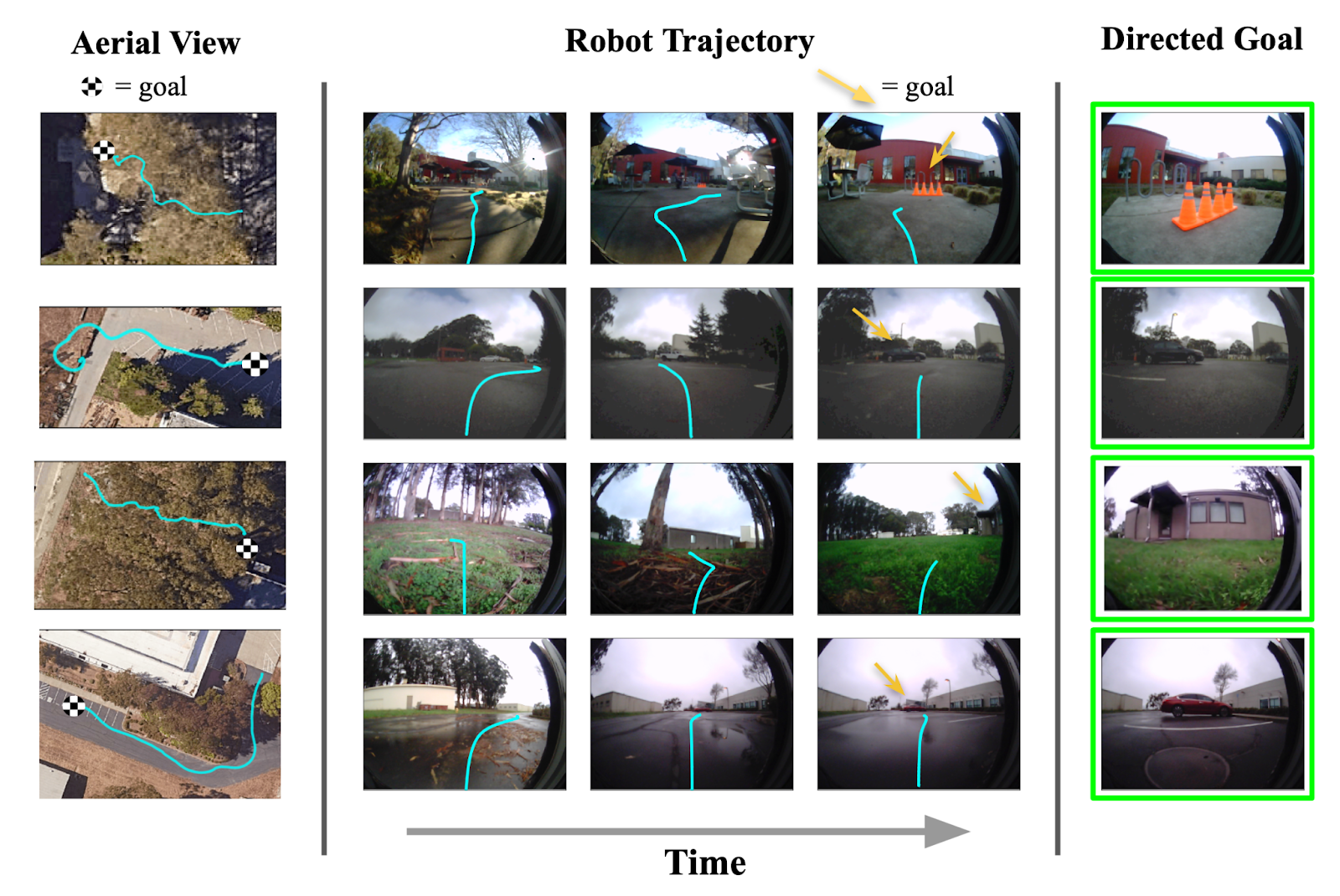

We discover that solely RECON (and a variant) is ready to efficiently uncover the aim in over half-hour of exploration, whereas all different baselines lead to collision (see determine for an overhead visualization). We visualize profitable trajectories found by RECON in 4 different environments under.

(Left) When evaluating to different baselines, solely RECON is ready to efficiently discover the aim. (Proper) Trajectories to objectives in 4 different environments found by RECON.

Quantitatively, we observe that our methodology finds objectives over 50% quicker than one of the best prior methodology; after discovering the aim and constructing a topological map of the atmosphere, it will possibly navigate to objectives in that atmosphere over 25% quicker than one of the best different methodology.

Quantitative leads to novel environments. RECON outperforms all baselines by over 50%.

Exploring Non-Stationary Environments

One of many vital challenges in designing real-world robotic navigation programs is dealing with variations between coaching situations and testing situations. Usually, programs are developed in well-controlled environments, however are deployed in much less structured environments. Additional, the environments the place robots are deployed typically change over time, so tuning a system to carry out effectively on a cloudy day would possibly degrade efficiency on a sunny day. RECON makes use of express illustration studying in makes an attempt to deal with this kind of non-stationary dynamics.

Our ultimate experiment examined how adjustments within the atmosphere affected the efficiency of RECON. We first had RECON discover a brand new “junkyard” to study to succeed in a blue dumpster. Then, with none extra supervision or exploration, we evaluated the realized coverage when offered with beforehand unseen obstacles (trash cans, visitors cones, a automobile) and climate circumstances (sunny, overcast, twilight). As proven under, RECON is ready to efficiently navigate to the aim in these situations, exhibiting that the realized representations are invariant to visible distractors that don’t have an effect on the robotic’s choices to succeed in the aim.

First-person movies of RECON efficiently navigating to a “blue dumpster” within the presence of novel obstacles (above) and ranging climate circumstances (under).

The issue setup studied on this paper – utilizing previous expertise to speed up studying in a brand new atmosphere – is reflective of a number of real-world robotics situations. RECON supplies a sturdy strategy to remedy this downside through the use of a mix of aim sampling and topological reminiscence.

A cell robotic able to reliably exploring and visually observing real-world environments generally is a useful gizmo for all kinds of helpful purposes comparable to search and rescue, inspecting giant workplaces or warehouses, discovering leaks in oil pipelines or making rounds at a hospital, delivering mail in suburban communities. We demonstrated simplified variations of such purposes in an earlier mission, the place the robotic has prior expertise within the deployment atmosphere; RECON allows these outcomes to scale past the coaching set of environments and leads to a very open-world studying system that may adapt to novel environments on deployment.

We’re additionally releasing the aforementioned offline trajectory dataset, with over XX hours of real-world interplay of a cell floor robotic in a wide range of out of doors environments. We hope that this dataset can assist future analysis in machine studying utilizing real-world knowledge for visible navigation purposes. The dataset can also be a wealthy supply of sequential knowledge from a large number of sensors and can be utilized to check sequence prediction fashions together with, however not restricted to, video prediction, LiDAR, GPS and many others. Extra details about the dataset may be discovered within the full-text article.

This weblog submit is predicated on our paper Speedy Exploration for Open-World Navigation with Latent Objective Fashions, which will likely be offered as an Oral Speak on the fifth Annual Convention on Robotic Studying in London, UK on November 8-11, 2021. You’ll find extra details about our outcomes and the dataset launch on the mission web page.

Large due to Sergey Levine and Benjamin Eysenbach for useful feedback on an earlier draft of this text.

[ad_2]