
Multi-horizon forecasting, i.e., predicting variables of interest at multiple future time steps, is an important problem in time series machine learning. Most real-world datasets have a time component, and forecasting the future can unlock great value. For example, retailers can use future sales to optimize their supply chain and promotions, investment managers are interested in forecasting the future prices of financial assets to maximize their performance, and healthcare institutions can use the number of future patient admissions to have sufficient personnel and equipment.

Deep neural networks (DNNs) have increasingly been used in multi-horizon forecasting, demonstrating strong performance improvements over traditional time series models. While many models (e.g., DeepAR, MQRNN) have focused on variants of recurrent neural networks (RNNs), recent improvements, including Transformer-based models, have used attention-based layers to enhance the selection of relevant time steps in the past beyond the inductive bias of RNNs, namely the sequential, ordered processing of information. However, these often don’t consider the different inputs commonly present in multi-horizon forecasting and either assume that all exogenous inputs are known into the future or neglect important static covariates.

Figure: Multi-horizon forecasting with static covariates and various time-dependent inputs.

Moreover, conventional time series models are controlled by complex nonlinear interactions between many parameters, making it difficult to explain how such models arrive at their predictions. Unfortunately, common methods to explain the behavior of DNNs have limitations. For example, post-hoc methods (e.g., LIME and SHAP) don’t consider the order of input features. Some attention-based models are proposed with inherent interpretability for sequential data, primarily language or speech, but multi-horizon forecasting has many different types of inputs, not just language or speech. Attention-based models can provide insights into relevant time steps, but they cannot distinguish the importance of different features at a given time step. New methods are needed to tackle the heterogeneity of data in multi-horizon forecasting for high performance and to render these forecasts interpretable.

To that end, we announce “Temporal Fusion Transformers for Interpretable Multi-horizon Time Series Forecasting”, published in the International Journal of Forecasting, where we propose the Temporal Fusion Transformer (TFT), an attention-based DNN model for multi-horizon forecasting. TFT is designed to explicitly align the model with the general multi-horizon forecasting task for both superior accuracy and interpretability, which we demonstrate across various use cases.

Temporal Fusion Transformer

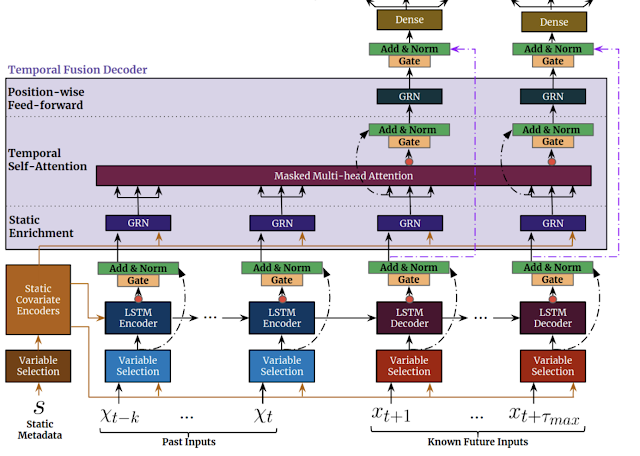

We design TFT to efficiently build feature representations for each input type (i.e., static, known, or observed inputs) for high forecasting performance. The major constituents of TFT (shown below) are:

- Gating mechanisms to skip over any unused components of the model (learned from the data), providing adaptive depth and network complexity to accommodate a wide range of datasets.

- Variable selection networks to select relevant input variables at each time step. While conventional DNNs may overfit to irrelevant features, attention-based variable selection can improve generalization by encouraging the model to anchor most of its learning capacity on the most salient features.

- Static covariate encoders to integrate static features to control how temporal dynamics are modeled. Static features can have an important impact on forecasts, e.g., a store location could have different temporal dynamics for sales (e.g., a rural store may see higher weekend traffic, but a downtown store may see daily peaks after working hours).

- Temporal processing to learn both long- and short-term temporal relationships from both observed and known time-varying inputs. A sequence-to-sequence layer is employed for local processing, as its inductive bias for ordered information processing is beneficial, whereas long-term dependencies are captured using a novel interpretable multi-head attention block. This can cut the effective path length of information, i.e., any past time step with relevant information (e.g., sales from last year) can be focused on directly.

- Prediction intervals show quantile forecasts to determine the range of target values at each prediction horizon, which helps users understand the distribution of the output, not just the point forecasts.
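To make the quantile forecasts in the last item more concrete, here is a minimal sketch of the standard quantile (pinball) loss, which is a common way to score a forecast for a given quantile level. The function name `pinball_loss` and the toy numbers are illustrative assumptions. This post does not specify how reported losses are normalized, so these values are not comparable to any published figures.

```python
import numpy as np

def pinball_loss(y_true, y_pred, q):
    """Standard quantile (pinball) loss for a quantile level q in (0, 1)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    diff = y_true - y_pred
    # Under-prediction (diff > 0) is weighted by q,
    # over-prediction (diff < 0) is weighted by (1 - q).
    return float(np.mean(np.maximum(q * diff, (q - 1.0) * diff)))

# Toy targets with hypothetical median (P50) and 90th-percentile (P90) forecasts.
y = [10.0, 12.0, 9.0]
p50_forecast = [11.0, 11.0, 9.0]
p90_forecast = [13.0, 14.0, 12.0]

loss_p50 = pinball_loss(y, p50_forecast, 0.5)
loss_p90 = pinball_loss(y, p90_forecast, 0.9)
print(loss_p50, loss_p90)
```

At q = 0.5 the loss is half the mean absolute error. At q = 0.9 a forecast that falls below the target is penalized nine times more than one that lands the same distance above it, which pushes the P90 forecast toward the upper end of the target distribution.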

Figure: TFT inputs static metadata, time-varying past inputs and time-varying a priori known future inputs. Variable Selection is used for judicious selection of the most salient features based on the input. Gated information is added as a residual input, followed by normalization. Gated residual network (GRN) blocks enable efficient information flow with skip connections and gating layers. Time-dependent processing is based on LSTMs for local processing, and multi-head attention for integrating information from any time step.
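The caption describes gated information being added as a residual input and then normalized. The NumPy sketch below shows one way such a block could look. The specific composition (an ELU hidden layer, a sigmoid-gated linear unit, and layer normalization without learned scale or shift), the parameter names, and the random untrained weights are all assumptions made for illustration, not details given in this post.

```python
import numpy as np

def elu(x):
    # Exponential linear unit; the minimum() guards exp() against overflow.
    return np.where(x > 0, x, np.exp(np.minimum(x, 0.0)) - 1.0)

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def layer_norm(x, eps=1e-6):
    # Normalize over the feature axis (no learned scale/shift in this sketch).
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def gated_residual_block(a, params):
    """Hypothetical gated residual block: LayerNorm(a + gate * candidate)."""
    hidden = elu(a @ params["W2"] + params["b2"])
    eta = hidden @ params["W1"] + params["b1"]
    gate = sigmoid(eta @ params["W4"] + params["b4"])   # values in (0, 1)
    candidate = eta @ params["W5"] + params["b5"]
    # Skip connection: the input is added back before normalization.
    return layer_norm(a + gate * candidate)

rng = np.random.default_rng(0)
d = 8
params = {name: rng.normal(scale=0.1, size=(d, d)) for name in ("W1", "W2", "W4", "W5")}
params.update({name: np.zeros(d) for name in ("b1", "b2", "b4", "b5")})

a = rng.normal(size=(4, d))            # 4 examples, d features each
out = gated_residual_block(a, params)

# Driving the gate towards zero makes the block pass its input through
# (up to normalization), i.e., the nonlinear path is effectively skipped.
closed = dict(params, b4=np.full(d, -50.0))
out_closed = gated_residual_block(a, closed)
print(np.allclose(out_closed, layer_norm(a)))
```

The closed-gate case illustrates the idea behind the gating mechanisms: when the gate output is near zero, the block reduces to a normalized copy of its input, so the model can effectively bypass components it does not need.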

Forecasting Performance

We compare TFT to a wide range of models for multi-horizon forecasting, including various deep learning models with iterative methods (e.g., DeepAR, DeepSSM, ConvTrans) and direct methods (e.g., LSTM Seq2Seq, MQRNN), as well as traditional models such as ARIMA, ETS, and TRMF. Below is a comparison to a truncated list of models.

| Model | Electricity | Traffic | Volatility | Retail |
| --- | --- | --- | --- | --- |
| ARIMA | 0.154 (+180%) | 0.223 (+135%) | – | – |
| ETS | 0.102 (+85%) | 0.236 (+148%) | – | – |
| DeepAR | 0.075 (+36%) | 0.161 (+69%) | 0.050 (+28%) | 0.574 (+62%) |
| Seq2Seq | 0.067 (+22%) | 0.105 (+11%) | 0.042 (+7%) | 0.411 (+16%) |
| MQRNN | 0.077 (+40%) | 0.117 (+23%) | 0.042 (+7%) | 0.379 (+7%) |
| TFT | 0.055 | 0.095 | 0.039 | 0.354 |

As shown above, TFT outperforms all benchmarks over a variety of datasets. This applies to both point forecasts and uncertainty estimates, with TFT yielding an average 7% lower P50 and 9% lower P90 losses, respectively, compared to the next best model.
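The percentages in parentheses in the table appear to be each model's loss relative to TFT's on the same dataset. That reading is an inference from the numbers, not something stated in the text. The short check below recomputes the Electricity column under that assumption, using a hypothetical helper named `relative_excess_pct`.

```python
# Electricity-column losses copied from the table above.
tft_loss = 0.055
electricity = {
    "ARIMA": 0.154,
    "ETS": 0.102,
    "DeepAR": 0.075,
    "Seq2Seq": 0.067,
    "MQRNN": 0.077,
}

def relative_excess_pct(loss, reference):
    """Percentage by which `loss` exceeds `reference`, rounded to an integer."""
    return round((loss / reference - 1.0) * 100.0)

excess = {name: relative_excess_pct(loss, tft_loss) for name, loss in electricity.items()}
print(excess)
```

Recomputing from the rounded table values can differ by a point in other columns (for example, 0.042 against 0.039 gives about 7.7%), presumably because the published percentages were derived from unrounded losses.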

Interpretability Use Cases

We demonstrate how TFT’s design allows for analysis of its individual components for enhanced interpretability with three use cases.

- Variable Importance

One can observe how different variables impact retail sales by observing their model weights. For example, the largest weights for static variables were the specific store and item, while the largest weights for future variables were promotion period and national holiday (shown below).

Variable importance for the retail dataset. The 10th, 50th, and 90th percentiles of the variable selection weights are shown, with values larger than 0.1 in bold purple.

- Persistent Temporal Patterns

Visualizing persistent temporal patterns can help in understanding the time-dependent relationships present in a given dataset. We identify similar persistent patterns by measuring the contributions of features at fixed lags in the past on forecasts at various horizons. Shown below, attention weights reveal the most important past time steps on which TFT bases its decisions.

The above shows the attention weight patterns across time, indicating how TFT learns persistent temporal patterns without any hard-coding. Such capability can help build trust with users because the output confirms expected known patterns. Model developers can also use these towards model improvements, e.g., via specific feature engineering or data collection.
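As a rough illustration of how such patterns could be summarized, the sketch below aggregates attention weights per lag across many forecast dates and reports their percentiles. The array layout (one row of lag weights per forecast date, for a single horizon), the synthetic data with a bump at lag 24, and all variable names are assumptions for illustration. No real model output is used.

```python
import numpy as np

rng = np.random.default_rng(0)
num_dates, max_lag = 200, 48
lags = np.arange(1, max_lag + 1)       # lags[j] = j + 1 steps before the forecast date

# Synthetic attention weights: roughly uniform, with extra mass at lag 24
# to mimic a daily pattern in hourly data.
base = np.ones(max_lag)
base[lags == 24] = 5.0
noisy = base * rng.uniform(0.8, 1.2, size=(num_dates, max_lag))
attn = noisy / noisy.sum(axis=1, keepdims=True)   # each row sums to 1

# Percentiles of the weight assigned to each lag, taken across forecast dates.
p10, p50, p90 = np.percentile(attn, [10, 50, 90], axis=0)

peak_lag = int(lags[np.argmax(p50)])
print("lag with the highest median attention:", peak_lag)
```

A lag whose median weight stays high across forecast dates is a candidate persistent pattern, while a wide gap between the 10th and 90th percentiles suggests the weight on that lag depends strongly on the forecast date.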

- Identifying Significant Events

Identifying sudden changes can be useful, as temporary shifts can occur due to the presence of significant events. TFT uses the distance between attention patterns at each point with the average pattern to identify the significant deviations. The figures below show that TFT can alter its attention between events, placing equal attention across past inputs when volatility is low, while attending more to sharp trend changes during high volatility periods.

Event identification for S&P 500 realized volatility from 2002 through 2014. Significant deviations in attention patterns can be observed above around periods of high volatility, corresponding to the peaks observed in dist(t), distance between attention patterns (purple line). We use a threshold to denote significant events, as highlighted in purple.

Focusing on periods around the 2008 financial crisis, the bottom plot below zooms in on the middle of the significant event (evident from the increased attention on sharp trend changes), compared to the normal event in the top plot (where attention is equal over low volatility periods).

Event identification for S&P 500 realized volatility, a zoom of the above on a period spanning 2004 and 2005.

Event identification for S&P 500 realized volatility, a zoom of the above on a period spanning 2008 and 2009.
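The event identification described above compares the attention pattern at each time point with the average pattern and flags large distances. The sketch below shows one way to do this on synthetic data. The distance used (the square root of one minus the Bhattacharyya coefficient), the threshold value, and the function name `attention_distance` are assumptions, since this post does not specify them.

```python
import numpy as np

def attention_distance(attn):
    """Distance of each row of attention weights from the average row.

    Assumes each row is a distribution over past time steps and uses
    sqrt(1 - Bhattacharyya coefficient) as the distance (an assumption).
    """
    attn = np.asarray(attn, dtype=float)
    attn = attn / attn.sum(axis=-1, keepdims=True)   # make each row sum to 1
    avg = attn.mean(axis=0)                          # average attention pattern
    coeff = np.sqrt(attn * avg).sum(axis=-1)         # 1.0 for identical patterns
    return np.sqrt(np.clip(1.0 - coeff, 0.0, None))

# Synthetic example: uniform attention everywhere except at t = 30, where
# nearly all attention goes to the most recent past step.
num_times, num_lags = 50, 10
attn = np.full((num_times, num_lags), 1.0 / num_lags)
attn[30] = np.array([0.01] * 9 + [0.91])

dist = attention_distance(attn)
threshold = 0.3                                      # hypothetical cut-off
events = np.flatnonzero(dist > threshold)
print(events)
```

In this toy setting only the time point with the concentrated attention pattern exceeds the threshold. With real attention weights the threshold would have to be chosen for the dataset at hand.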

Real-World Impact

Finally, TFT has been used to help retail and logistics companies with demand forecasting by both improving forecasting accuracy and providing interpretability capabilities.

Additionally, TFT has potential applications for climate-related challenges: for example, reducing greenhouse gas emissions by balancing electricity supply and demand in real time, and improving the accuracy and interpretability of rainfall forecasting results.

Conclusion

We present a novel attention-based model for high-performance multi-horizon forecasting. In addition to improved performance across a range of datasets, TFT also contains specialized components for inherent interpretability, i.e., variable selection networks and interpretable multi-head attention. With three interpretability use cases, we also demonstrate how these components can be used to extract insights on feature importance and temporal dynamics.

Acknowledgements

We gratefully acknowledge contributions of Bryan Lim, Nicolas Loeff, Minho Jin, Yaguang Li, and Andrew Moore.
