
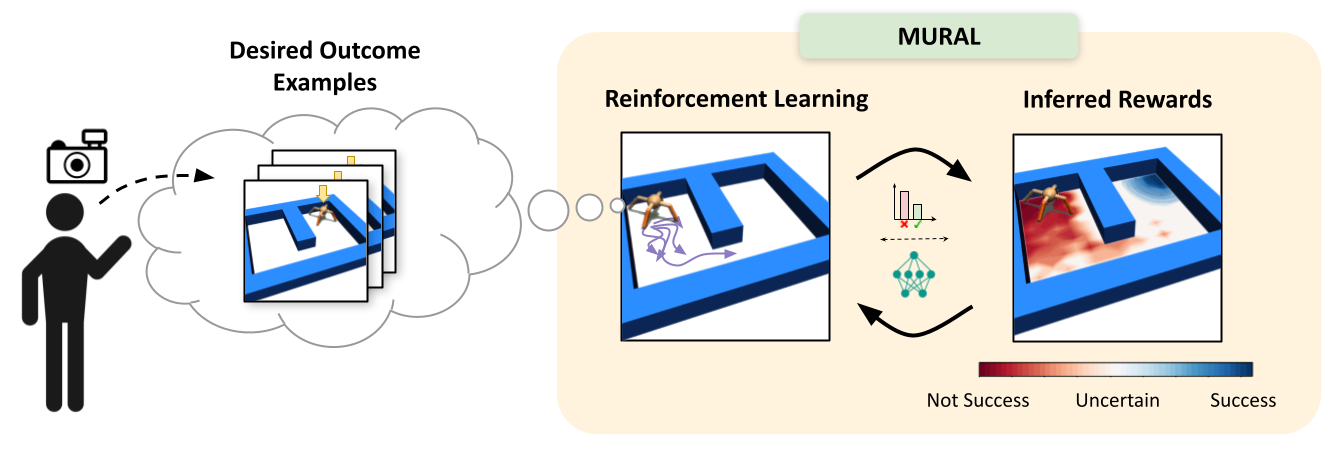

Diagram of MURAL, our method for learning uncertainty-aware rewards for RL. After the user provides a few examples of desired outcomes, MURAL automatically infers a reward function that takes into account these examples and the agent’s uncertainty for each state.

Although reinforcement learning has shown success in domains such as robotics, chip placement and playing video games, it is usually intractable in its most general form. In particular, deciding when and how to visit new states in the hopes of learning more about the environment can be challenging, especially when the reward signal is uninformative. These questions of reward specification and exploration are closely connected: the more directed and “well shaped” a reward function is, the easier the problem of exploration becomes. The answer to the question of how to explore most effectively is likely to be closely informed by the particular choice of how we specify rewards.

For unstructured problem settings such as robotic manipulation and navigation, areas where RL holds substantial promise for enabling better real-world intelligent agents, reward specification is often the key factor preventing us from tackling more difficult tasks. The challenge of effective reward specification is two-fold: we require reward functions that can be specified in the real world without significantly instrumenting the environment, but that also effectively guide the agent to solve difficult exploration problems. In our recent work, we address this challenge by designing a reward specification technique that naturally incentivizes exploration and enables agents to explore environments in a directed way.

While RL in its most general form can be quite difficult to tackle, we can consider a more controlled set of subproblems which are more tractable while still encompassing a significant set of interesting problems. In particular, we consider a subclass of problems which has been referred to as outcome-driven RL. In outcome-driven RL problems, the agent is not simply tasked with exploring the environment until it chances upon reward, but instead is provided with examples of successful outcomes in the environment. These successful outcomes can then be used to infer a suitable reward function that can be optimized to solve the desired problems in new scenarios.

More concretely, in outcome-driven RL problems, a human supervisor first provides a set of successful outcome examples $\{s_g^i\}_{i=1}^N$, representing states in which the desired task has been accomplished. Given these outcome examples, a suitable reward function $r(s, a)$ can be inferred that encourages an agent to achieve the desired outcome examples. In many ways, this problem is analogous to that of inverse reinforcement learning, but only requires examples of successful states rather than full expert demonstrations.

When thinking about how to actually infer the desired reward function $r(s, a)$ from successful outcome examples $\{s_g^i\}_{i=1}^N$, the simplest approach that comes to mind is to treat the reward inference problem as a classification problem: “Is the current state a successful outcome or not?” Prior work has implemented this intuition, inferring rewards by training a simple binary classifier to distinguish whether a particular state $s$ is a successful outcome or not, using the set of provided goal states as positives, and all on-policy samples as negatives. The algorithm then assigns rewards to a particular state using the success probabilities from the classifier. This has been shown to have a close connection to the framework of inverse reinforcement learning.
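To make the classifier-as-reward idea concrete, here is a minimal sketch in Python with NumPy. It assumes a plain logistic-regression classifier trained by gradient descent and synthetic 2D states; the function names, step size, and data are illustrative assumptions, not the setup used in prior work.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def fit_success_classifier(goal_states, policy_states, steps=500, lr=0.5):
    """Fit logistic regression: goal states are positives, on-policy states are negatives."""
    X = np.vstack([goal_states, policy_states])
    X = np.hstack([X, np.ones((len(X), 1))])  # append a constant bias feature
    y = np.concatenate([np.ones(len(goal_states)), np.zeros(len(policy_states))])
    w = np.zeros(X.shape[1])
    for _ in range(steps):
        # Gradient of the mean negative log-likelihood.
        grad = X.T @ (sigmoid(X @ w) - y) / len(y)
        w -= lr * grad
    return w

def classifier_reward(w, state):
    """Reward = predicted probability that `state` is a successful outcome."""
    x = np.append(np.asarray(state, dtype=float), 1.0)
    return float(sigmoid(x @ w))

# Synthetic data (an assumption for illustration): goals near (4, 4), visited states near (0, 0).
rng = np.random.default_rng(0)
goal_states = rng.normal(loc=[4.0, 4.0], scale=0.1, size=(20, 2))
policy_states = rng.normal(loc=[0.0, 0.0], scale=0.5, size=(200, 2))

w = fit_success_classifier(goal_states, policy_states)
r_near_goal = classifier_reward(w, [4.0, 4.0])
r_near_start = classifier_reward(w, [0.0, 0.0])
```

In an RL loop, the classifier would be refit periodically as new on-policy states arrive, and `classifier_reward` would stand in for the environment reward.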

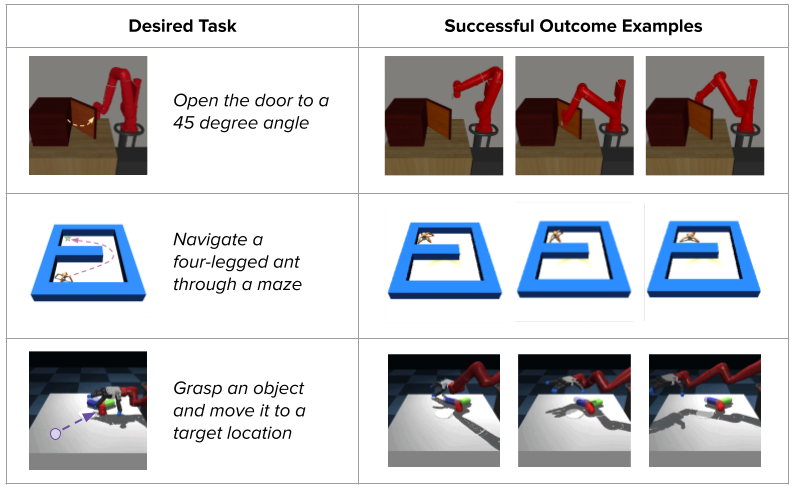

Classifier-based methods provide a much more intuitive way to specify desired outcomes, removing the need for hand-designed reward functions or demonstrations.

These classifier-based methods have achieved promising results on robotics tasks such as fabric placement, mug pushing, bead and screw manipulation, and more. However, these successes tend to be limited to simple shorter-horizon tasks, where relatively little exploration is required to find the goal.

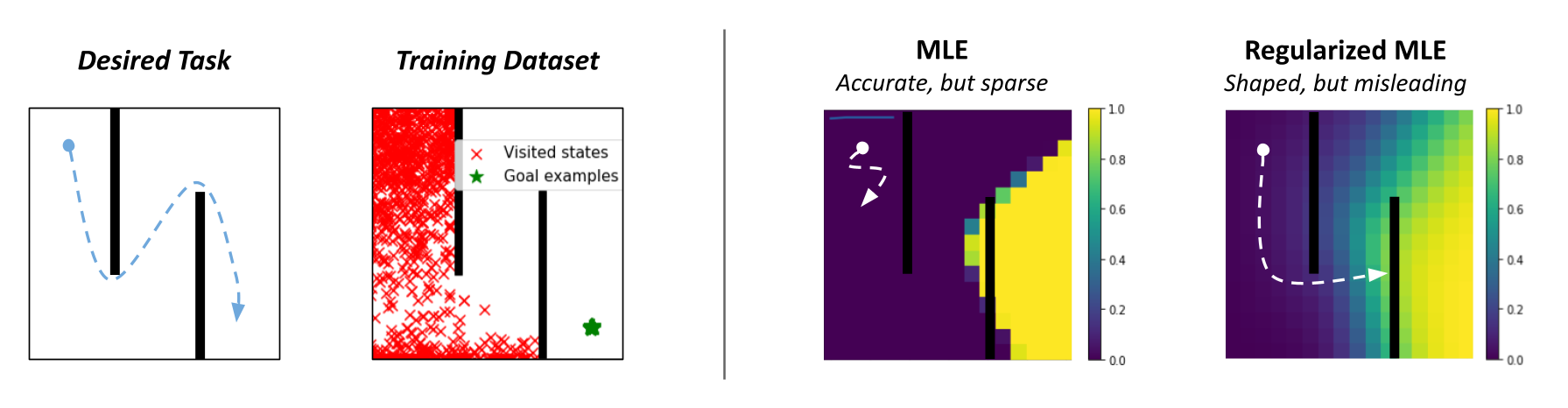

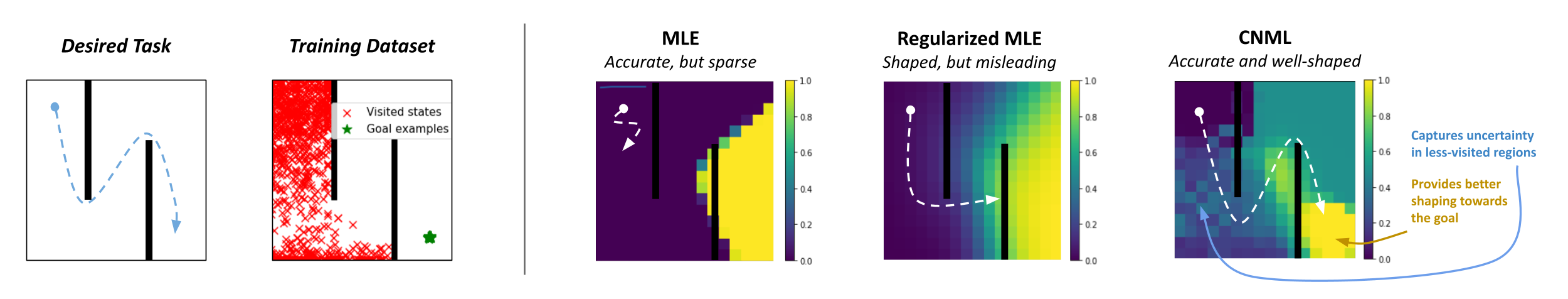

Standard success classifiers in RL suffer from the key issue of overconfidence, which prevents them from providing useful shaping for hard exploration tasks. To understand why, let’s consider a toy 2D maze environment where the agent must navigate in a zigzag path from the top left to the bottom right corner. During training, classifier-based methods would label all on-policy states as negatives and user-provided outcome examples as positives. A typical neural network classifier would easily assign success probabilities of 0 to all visited states, resulting in uninformative rewards in the intermediate stages when the goal has not been reached.

Since such rewards would not be useful for guiding the agent in any particular direction, prior works tend to regularize their classifiers using methods like weight decay or mixup, which allow for more smoothly increasing rewards as we approach the successful outcome states. However, while this works on many shorter-horizon tasks, such methods can actually produce very misleading rewards. For example, on the 2D maze, a regularized classifier would assign relatively high rewards to states on the opposite side of the wall from the true goal, since they are close to the goal in x-y space. This causes the agent to get stuck in a local optimum, never bothering to explore beyond the final wall!

In fact, this is exactly what happens in practice.

As discussed above, the key issue with unregularized success classifiers for RL is overconfidence: by immediately assigning rewards of 0 to all visited states, we close off many paths that might eventually lead to the goal. Ideally, we would like our classifier to have an appropriate notion of uncertainty when outputting success probabilities, so that we can avoid excessively low rewards without suffering from the misleading local optima that result from regularization.

Conditional Normalized Maximum Likelihood (CNML)

One method particularly well-suited for this task is Conditional Normalized Maximum Likelihood (CNML). The concept of normalized maximum likelihood (NML) has typically been used in the Bayesian inference literature for model selection, to implement the minimum description length principle. In more recent work, NML has been adapted to the conditional setting to produce models that are much better calibrated and maintain a notion of uncertainty, while achieving optimal worst case classification regret. Given the challenges of overconfidence described above, this is an ideal choice for the problem of reward inference.

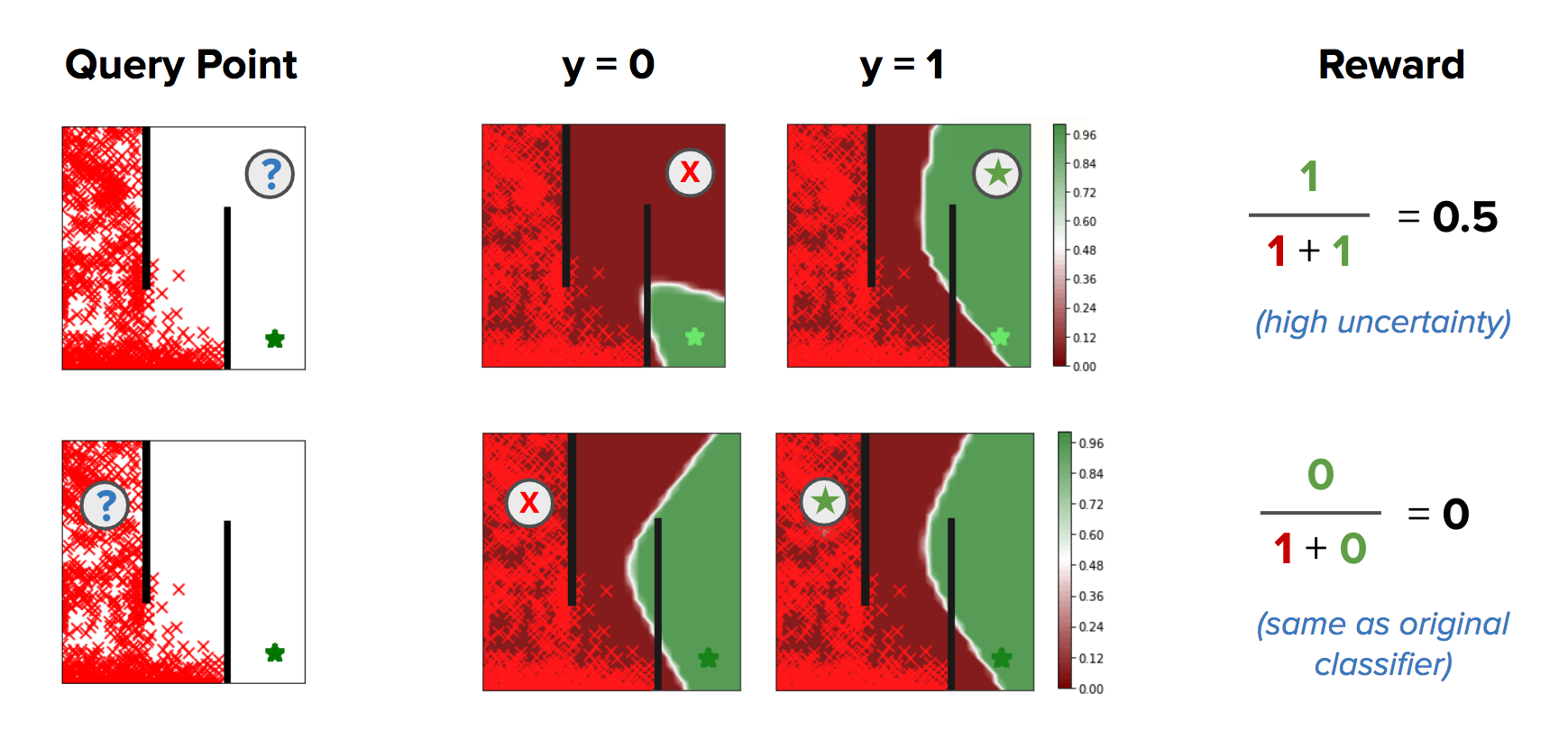

Rather than simply training models via maximum likelihood, CNML performs a more complex inference procedure to produce likelihoods for any point that is being queried for its label. Intuitively, CNML constructs a set of different maximum likelihood problems by labeling a particular query point $x$ with every possible label value that it might take, then outputs a final prediction based on how easily it was able to adapt to each of those proposed labels given the entire dataset observed thus far. Given a particular query point $x$, and a prior dataset $\mathcal{D} = \left[x_0, y_0, \dots, x_N, y_N\right]$, CNML solves $k$ different maximum likelihood problems and normalizes them to produce the desired label likelihood $p(y \mid x)$, where $k$ represents the number of possible values that the label may take. Formally, given a model $f(x)$, loss function $\mathcal{L}$, training dataset $\mathcal{D}$ with classes $\mathcal{C}_1, \dots, \mathcal{C}_k$, and a new query point $x_q$, CNML solves the following $k$ maximum likelihood problems:

\[\theta_i = \arg\max_{\theta} \mathbb{E}_{\mathcal{D} \cup (x_q, \mathcal{C}_i)}\left[ \mathcal{L}(f_{\theta}(x), y)\right]\]

It then generates predictions for each of the $k$ classes using their corresponding models, and normalizes the results for its final output:

\[p_\text{CNML}(\mathcal{C}_i \mid x) = \frac{f_{\theta_i}(x)}{\sum \limits_{j=1}^k f_{\theta_j}(x)}\]
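The two equations above can be sketched for the binary case ($k = 2$) as follows. This is an illustrative sketch only: it assumes a logistic-regression model, and a fixed number of gradient steps stands in for training each problem to convergence. The function names and synthetic data are assumptions made for the example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def fit_logreg(X, y, steps=300, lr=0.5):
    """Approximate maximum likelihood fit of logistic regression by gradient descent."""
    Xb = np.hstack([X, np.ones((len(X), 1))])  # append a constant bias feature
    w = np.zeros(Xb.shape[1])
    for _ in range(steps):
        grad = Xb.T @ (sigmoid(Xb @ w) - y) / len(y)
        w -= lr * grad
    return w

def predict_proba(w, x, label):
    """Probability the model with weights `w` assigns to `label` at input `x`."""
    p1 = sigmoid(np.append(x, 1.0) @ w)
    return p1 if label == 1 else 1.0 - p1

def cnml_probs(X, y, x_q, labels=(0, 1)):
    """Naive CNML: one refit per candidate label, then normalize."""
    scores = []
    for c in labels:
        # Augment the dataset with the query point carrying the proposed label c.
        X_aug = np.vstack([X, x_q])
        y_aug = np.append(y, c)
        w_c = fit_logreg(X_aug, y_aug)
        # Likelihood that the refit model assigns to the label it was asked to fit.
        scores.append(predict_proba(w_c, x_q, c))
    scores = np.array(scores)
    return scores / scores.sum()

# Synthetic 1D data (an assumption for illustration).
rng = np.random.default_rng(0)
X = np.concatenate([rng.normal(-2, 0.5, 20), rng.normal(2, 0.5, 20)]).reshape(-1, 1)
y = np.concatenate([np.zeros(20), np.ones(20)])
probs = cnml_probs(X, y, np.array([2.0]))  # probs[1] is p_CNML(label 1 | x_q)
```

Note that every call to `cnml_probs` retrains the model once per label, which is the cost that becomes problematic as the dataset grows.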

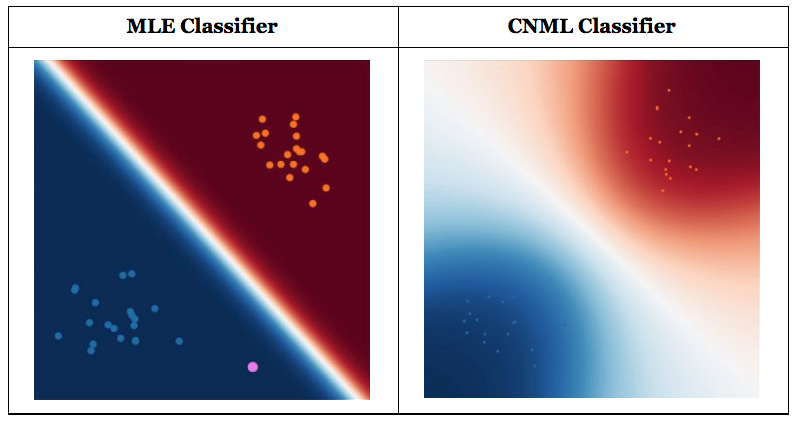

Comparison of outputs from a standard classifier and a CNML classifier. CNML outputs more conservative predictions on points that are far from the training distribution, indicating uncertainty about those points’ true outputs. (Credit: Aurick Zhou, BAIR Blog)

Intuitively, if the query point is farther from the original training distribution represented by $\mathcal{D}$, CNML will be able to more easily adapt to any arbitrary label in $\mathcal{C}_1, \dots, \mathcal{C}_k$, making the resulting predictions closer to uniform. In this way, CNML is able to produce better calibrated predictions, and maintain a clear notion of uncertainty based on which data point is being queried.

Leveraging CNML-based classifiers for Reward Inference

Given the above background on CNML as a means to produce better calibrated classifiers, it becomes clear that this provides us a straightforward way to address the overconfidence problem with classifier-based rewards in outcome-driven RL. By replacing a standard maximum likelihood classifier with one trained using CNML, we are able to capture a notion of uncertainty and obtain directed exploration for outcome-driven RL. In fact, in the discrete case, CNML corresponds to imposing a uniform prior on the output space; in an RL setting, this is equivalent to using a count-based exploration bonus as the reward function. This turns out to give us a very appropriate notion of uncertainty in the rewards, and solves many of the exploration challenges present in classifier-based RL.
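The count-based connection can be checked with a small worked example. The sketch below assumes a tabular model in which each state has its own independent Bernoulli success parameter (an assumption made for this illustration), so each of the two maximum likelihood problems has a closed-form solution given by empirical frequencies.

```python
def tabular_cnml_success(n_neg, n_pos=0):
    """CNML success probability for one discrete state.

    n_neg: times the state was visited and labeled as a negative.
    n_pos: times the state appeared among the positive outcome examples.
    """
    n = n_neg + n_pos
    # Problem 1: add the query with label 1; the MLE is the empirical success frequency.
    p_if_pos = (n_pos + 1) / (n + 1)
    # Problem 2: add the query with label 0; the MLE probability of label 0.
    p_if_neg = (n_neg + 1) / (n + 1)
    # Normalizing gives (n_pos + 1) / (n + 2).
    return p_if_pos / (p_if_pos + p_if_neg)

# Under this assumption, a never-visited state gets 0.5 and the value
# shrinks as 1 / (visits + 2) for states that are not outcome examples.
rewards_by_visit_count = [tabular_cnml_success(n) for n in (0, 1, 8, 98)]
```

Under these assumptions the reward depends only on how often a state has been visited, which is why it behaves like a count-based exploration bonus.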

However, we don’t usually operate in the discrete case. In most cases, we use expressive function approximators, and the resulting representations of different states in the world share similarities. When a CNML-based classifier is learned in this scenario, with expressive function approximation, we see that it can provide more than just task-agnostic exploration. In fact, it can provide a directed notion of reward shaping, which guides an agent towards the goal rather than simply encouraging it to expand the visited region naively. As visualized below, CNML encourages exploration by giving optimistic success probabilities in less-visited regions, while also providing better shaping towards the goal.

As we will show in our experimental results, this intuition scales to higher dimensional problems and more complex state and action spaces, enabling CNML-based rewards to solve significantly more challenging tasks than is possible with typical classifier-based rewards.

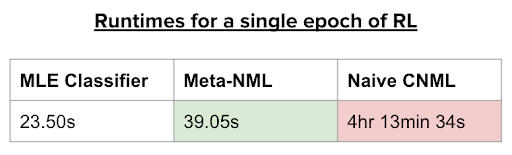

However, on closer inspection of the CNML procedure, a major challenge becomes apparent. Each time a query is made to the CNML classifier, $k$ different maximum likelihood problems need to be solved to convergence, then normalized to produce the desired likelihood. As the size of the dataset increases, as it naturally does in reinforcement learning, this becomes a prohibitively slow process. In fact, as seen in Table 1, RL with standard CNML-based rewards takes around 4 hours to train a single epoch (1000 timesteps). Following this procedure blindly would take over a month to train a single RL agent, necessitating a more time-efficient solution. This is where we find meta-learning to be a crucial tool.

Meta-learning is a tool that has seen a lot of use in few-shot learning for image classification, learning quicker optimizers and even learning more efficient RL algorithms. In essence, the idea behind meta-learning is to leverage a set of “meta-training” tasks to learn a model (and often an adaptation procedure) that can very quickly adapt to a new task drawn from the same distribution of problems.

Meta-learning techniques are particularly well suited to our class of computational problems, since evaluating the CNML likelihood involves quickly solving multiple different maximum likelihood problems. Each of the maximum likelihood problems shares significant similarities with the others, enabling a meta-learning algorithm to very quickly adapt to produce solutions for each individual problem. In doing so, meta-learning provides us an effective tool for producing estimates of normalized maximum likelihood significantly more quickly than possible before.

The intuition behind how to apply meta-learning to CNML (meta-NML) can be understood from the graphic above. For a dataset of $N$ points, meta-NML would first construct $2N$ tasks, corresponding to the positive and negative maximum likelihood problems for each datapoint in the dataset. Given these constructed tasks as a (meta) training set, a meta-learning algorithm can be applied to learn a model that can very quickly be adapted to produce solutions to any of these $2N$ maximum likelihood problems. Equipped with this scheme to very quickly solve maximum likelihood problems, we can produce CNML predictions around $400$x faster than possible before. Prior work studied this problem from a Bayesian approach, but we found that it often scales poorly for the problems we considered.
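The task construction described above can be sketched as follows. The dictionary layout and the function name `build_meta_nml_tasks` are hypothetical choices for illustration; the sketch only shows how $N$ datapoints with binary labels give rise to $2N$ maximum likelihood problems, not how the meta-learner is trained on them.

```python
import numpy as np

def build_meta_nml_tasks(X, y):
    """Build 2N tasks: each datapoint is re-added once as a positive and once as a negative."""
    tasks = []
    for i in range(len(X)):
        for proposed_label in (0, 1):
            tasks.append({
                # Dataset augmented with point i carrying the proposed label.
                "inputs": np.vstack([X, X[i]]),
                "labels": np.append(y, proposed_label),
                "query_index": i,
                "proposed_label": proposed_label,
            })
    return tasks

# Small synthetic dataset (an assumption for illustration).
X = np.arange(10, dtype=float).reshape(5, 2)
y = np.array([0, 0, 0, 1, 1])
tasks = build_meta_nml_tasks(X, y)  # 2 * 5 = 10 tasks
```

A meta-learning algorithm would then be trained so that a few gradient steps from a shared initialization solve any one of these tasks.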

Equipped with a tool for efficiently producing predictions from the CNML distribution, we can now return to the goal of solving outcome-driven RL with uncertainty-aware classifiers, resulting in an algorithm we call MURAL.

To more effectively solve outcome-driven RL problems, we incorporate meta-NML into the standard classifier-based procedure as follows:

After each epoch of RL, we sample a batch of $n$ points from the replay buffer and use them to construct $2n$ meta-tasks. We then run $1$ iteration of meta-training on our model.

We assign rewards using NML, where the NML outputs are approximated using only one gradient step for each input point.
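The reward assignment step can be sketched roughly as below. This is a simplified illustration under several assumptions: a logistic-regression model, a hypothetical meta-learned initialization `w_meta` (set to zeros here as a placeholder, since no meta-training is performed), and an arbitrary step size. It is not the implementation used in the paper.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def add_bias(X):
    """Append a constant bias feature to one input or a batch of inputs."""
    X = np.atleast_2d(X)
    return np.hstack([X, np.ones((len(X), 1))])

def one_step_nml_reward(w_meta, X, y, x_q, lr=0.1):
    """Approximate the NML success probability with one gradient step per label."""
    probs = []
    for label in (0, 1):
        # Dataset augmented with the query point carrying the proposed label.
        Xb = add_bias(np.vstack([X, x_q]))
        yb = np.append(y, label)
        # Single gradient step on the mean negative log-likelihood, starting from w_meta.
        grad = Xb.T @ (sigmoid(Xb @ w_meta) - yb) / len(yb)
        w_adapted = w_meta - lr * grad
        p1 = sigmoid(add_bias(x_q)[0] @ w_adapted)
        probs.append(p1 if label == 1 else 1.0 - p1)
    # Reward is the normalized likelihood of the "success" label.
    return float(probs[1] / (probs[0] + probs[1]))

# Synthetic 1D data (an assumption): negatives on the left, positives on the right.
X = np.concatenate([np.linspace(-3, -1, 20), np.linspace(1, 3, 20)]).reshape(-1, 1)
y = np.concatenate([np.zeros(20), np.ones(20)])
w_meta = np.zeros(2)  # placeholder for a meta-learned initialization

reward_near_goal = one_step_nml_reward(w_meta, X, y, np.array([2.0]))
reward_near_visited = one_step_nml_reward(w_meta, X, y, np.array([-2.0]))
```

Because each query needs its own pair of adapted models, points are evaluated one at a time in this sketch rather than in a single batched forward pass.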

The resulting algorithm, which we call MURAL, replaces the classifier portion of standard classifier-based RL algorithms with a meta-NML model. Although meta-NML can only evaluate input points one at a time instead of in batches, it is substantially faster than naive CNML, and MURAL is still comparable in runtime to standard classifier-based RL, as shown in Table 1 below.

Table 1. Runtimes for a single epoch of RL on the 2D maze task.

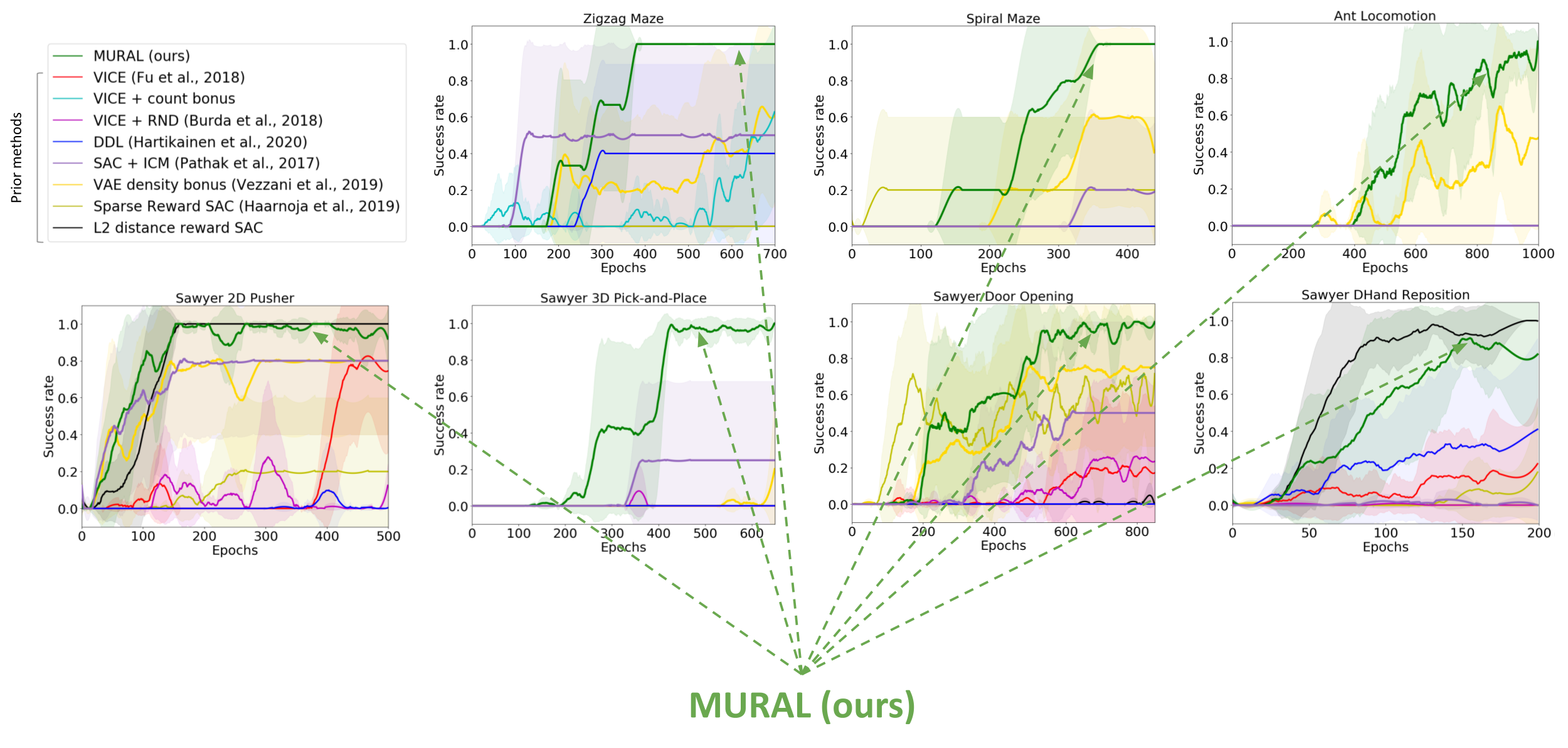

We evaluate MURAL on a variety of navigation and robotic manipulation tasks, which present several challenges including local optima and difficult exploration. MURAL solves all of these tasks successfully, outperforming prior classifier-based methods as well as standard RL with exploration bonuses.

Visualization of behaviors learned by MURAL. MURAL is able to perform a variety of behaviors in navigation and manipulation tasks, inferring rewards from outcome examples.

Quantitative comparison of MURAL to baselines. MURAL is able to outperform baselines which perform task-agnostic exploration, as well as standard maximum likelihood classifiers.

This suggests that using meta-NML-based classifiers for outcome-driven RL gives us an effective way to provide rewards for RL problems, with benefits both in terms of exploration and directed reward shaping.

In conclusion, we showed how outcome-driven RL can define a class of more tractable RL problems. Standard methods using classifiers can often fall short in these settings, as they are unable to provide any benefits of exploration or guidance towards the goal. Leveraging a scheme for training uncertainty-aware classifiers via conditional normalized maximum likelihood allows us to more effectively solve this problem, providing benefits in terms of exploration and reward shaping towards successful outcomes. The general principles outlined in this work suggest that considering tractable approximations to the general RL problem may allow us to simplify the challenge of reward specification and exploration in RL while still encompassing a rich class of control problems.

This post is based on the paper “MURAL: Meta-Learning Uncertainty-Aware Rewards for Outcome-Driven Reinforcement Learning”, which was presented at ICML 2021. You can see results on our website, and we provide code to reproduce our experiments.
