A key trend we observe with industrial IoT projects is that new industrial equipment comes with out-of-the-box cloud connectivity and allows near real-time processing of sensor data. This makes it possible to immediately generate KPIs that let you monitor this equipment closely and optimize its performance. However, a lot of industrial IoT data is still stored in legacy systems (e.g. SCADA systems, messaging systems, on-premises databases) in different formats (e.g. CSV, XML, MIME). This raises several challenges for the real-time management of that sensor data, such as:

- Data transformation orchestration: On-premises industrial data comes from different sources, each with its own format and its own communication protocol. It is therefore necessary to find a way to orchestrate the management of all that incoming data in order to structure it before analysis.

- Event-driven processing: Batch jobs can introduce latency in the data management pipeline, which motivates the need for an event-driven architecture to ingest industrial data.

- Data enrichment with external sources: Data enrichment is often a mandatory step to allow efficient analysis.

- Enhanced data analysis: Complex queries are sometimes needed to generate KPIs.

- Ease of maintenance: Companies don’t always have the time or the internal resources to deploy and manage complex tools.

- Real-time processing of datasets: Some metrics need to be constantly monitored, which implies immediate processing of datasets so that action can be taken.

- Data integration with other services or applications: Finally, it is necessary to automate the delivery of the analysis results to other services that need to process them in real time.

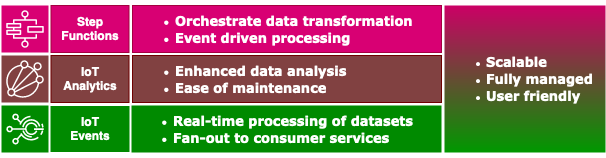

In this blog post we show how customers can use AWS Step Functions, AWS IoT Analytics, and AWS IoT Events as the basis of a lightweight architecture to address the aforementioned challenges in a scalable way.

In the use case we discuss in this blog post, we assume that a company is receiving sensor data from its industrial sites through CSV files that are stored in an Amazon S3 bucket. The company has to dynamically process these files to generate key performance indicators (KPIs) every 5 minutes. We assume the sensor data must be enriched with additional information, such as upper and lower threshold limits, before being stored in Amazon DynamoDB. The flow of data is constantly monitored so that alarms can be raised if data records are missing.

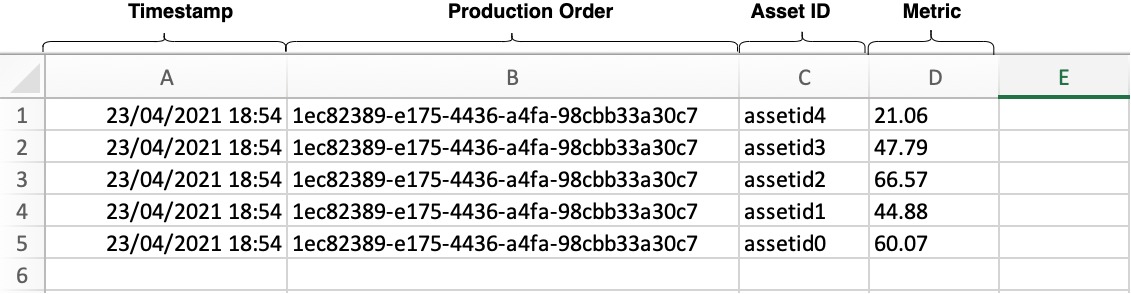

The AWS CloudFormation template provided with this blog provisions the resources needed to simulate this use case. It includes a built-in data simulator which feeds a Step Functions workflow with CSV files containing sensor data with four fields: 1/ timestamp, 2/ production order id, 3/ asset id, 4/ value. AWS Lambda functions convert this data to JSON objects and forward them to AWS IoT Analytics, which enriches the data (with upper and lower limits) and retrieves it every 5 minutes by creating a dataset. Finally, this AWS IoT Analytics dataset is sent automatically to AWS IoT Events to be monitored and stored in DynamoDB.

Refer to the CloudFormation template: iot-data-ingestion.json

For this walkthrough, you should have the following prerequisites:

- An AWS account.

- Basic programming knowledge of Python 3.8 and SQL.

- Basic knowledge of AWS CloudFormation, Amazon S3, Amazon DynamoDB, AWS Step Functions, AWS IoT Events, and AWS IoT Analytics.

The data simulator is written in Python 3.8 and runs in a Lambda function which is invoked every minute through a scheduled Amazon CloudWatch event. The data simulator stores five metrics in a CSV file in an Amazon S3 bucket, as shown in the following image. These five metrics represent measurements from a production line.

A production order ID is associated with each metric. This production order ID changes every 100 invocations of the Lambda function, and for each change, the upper and lower limits of the metrics are stored in DynamoDB. These limits are used to enrich the metrics when they are processed by AWS IoT Analytics. For example, let’s consider that one of the five metrics represents a revolutions per minute (RPM) measurement on a machine within a production line. Depending on the production order being fulfilled by the production line, the machine may need to be set to run at a specific RPM. Therefore, the upper and lower limits that represent the boundaries of normal operation for the machine need to be different for each production order. The data simulator stores these upper and lower limits in DynamoDB to represent information from an external data source that is used to enrich the data generated from the production line (the data stored in the CSV file).
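
The rollover logic described above can be sketched as follows. This is a minimal illustration, not the simulator shipped with the template: the ID format (`PO-0001`), the asset names, and the limit values are all assumptions made up for the example, and nothing is written to S3 or DynamoDB.

```python
import random

INVOCATIONS_PER_ORDER = 100  # from the post: the order ID changes every 100 invocations
METRIC_COUNT = 5             # from the post: five metrics per CSV file


def production_order_id(invocation: int) -> str:
    """Return a hypothetical order ID that rolls over every 100 invocations."""
    return f"PO-{invocation // INVOCATIONS_PER_ORDER:04d}"


def is_new_order(invocation: int) -> bool:
    """True on the first invocation of each production order."""
    return invocation % INVOCATIONS_PER_ORDER == 0


def make_limits(rng: random.Random) -> dict:
    """Pick illustrative lower/upper limits for one metric (values are made up)."""
    lower = rng.uniform(100.0, 500.0)
    return {"lower_limit": round(lower, 1), "upper_limit": round(lower + 50.0, 1)}


def simulate(invocation: int, rng: random.Random) -> dict:
    """Build the rows for one CSV file, plus limits to store when the order changes."""
    order = production_order_id(invocation)
    assets = [f"asset-{i}" for i in range(1, METRIC_COUNT + 1)]
    new_limits = {a: make_limits(rng) for a in assets} if is_new_order(invocation) else {}
    rows = [
        {"production_order_id": order, "asset_id": a, "value": round(rng.uniform(100.0, 550.0), 2)}
        for a in assets
    ]
    return {"rows": rows, "new_limits": new_limits}


rng = random.Random(0)
first = simulate(0, rng)    # first invocation of an order: limits are generated
second = simulate(1, rng)   # same order: no new limits
```

In a real deployment, `rows` would be written as a CSV file to the S3 bucket and `new_limits` to the DynamoDB table.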

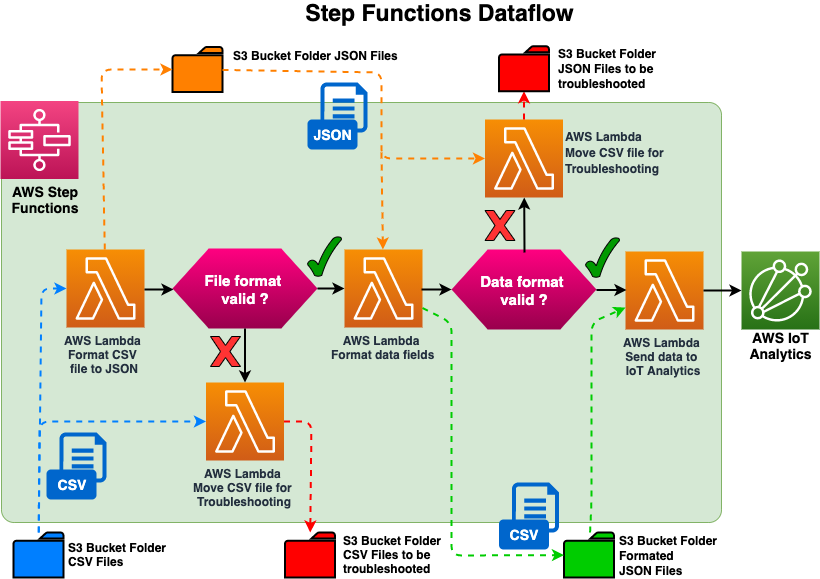

The simulated data that represents the data coming from an industrial site is stored in a CSV file. This data must then be converted into JSON format in order to be ingested by AWS IoT Analytics. Step Functions offers a flexible way to prepare data. So, instead of building a custom monolithic application, we spread the complexity of the data transformation across three Lambda functions, which are sequenced through a JSON definition. The first Lambda function converts the CSV file into JSON, the second Lambda function formats the data field and appends a timestamp, and the third Lambda function sends the data to AWS IoT Analytics. In addition, we’ve implemented checks that redirect invalid data to an Amazon S3 bucket if an error occurs during the execution of the first two Lambda functions, so that it can be troubleshot and reprocessed later.
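
As a rough sketch of what the first conversion step might look like, the function below turns CSV text into JSON-ready records and sets aside rows it cannot parse. It assumes the file has no header row and that the columns appear in the order timestamp, production order id, asset id, value; the field names and sample rows are illustrative, not taken from the template.

```python
import csv
import io
import json

# Assumed column order, based on the four fields listed in the post.
FIELDS = ["timestamp", "production_order_id", "asset_id", "value"]


def csv_to_records(csv_text: str) -> dict:
    """Split CSV text into valid JSON-ready records and rejected rows."""
    valid, invalid = [], []
    for row in csv.reader(io.StringIO(csv_text)):
        if not row:
            continue  # skip blank lines
        if len(row) != len(FIELDS):
            invalid.append(row)  # wrong number of columns
            continue
        record = dict(zip(FIELDS, (cell.strip() for cell in row)))
        try:
            record["value"] = float(record["value"])
        except ValueError:
            invalid.append(row)  # value is not numeric
            continue
        valid.append(record)
    return {"valid": valid, "invalid": invalid}


sample = (
    "2021-01-01T00:00:00Z,PO-0001,asset-1,1480.5\n"
    "2021-01-01T00:00:00Z,PO-0001,asset-2,not-a-number\n"
)
result = csv_to_records(sample)
print(json.dumps(result["valid"], indent=2))
```

Keeping the rejected rows separate mirrors the idea of redirecting invalid data to its own S3 bucket for later reprocessing.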

By proceeding in the same way, other file formats such as MIME or XML can be handled within the same data flow. Getting started with Step Functions is easy because the sequencing is done with a simple JSON file that can be created directly in the Step Functions console, which has a graphical interface that clearly identifies the sequencing of each step (see screenshot below). Passing parameters from one Lambda function to another is as easy as manipulating JSON objects.
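
To give an idea of what such a JSON file might contain, the sketch below builds an Amazon States Language definition for the three-step sequence, with a catch on the first two steps that routes failures to a handler. The state names, the Lambda ARNs, and the fourth `store-invalid-data` function are hypothetical; the definition deployed by the template may differ.

```python
import json
from typing import Optional

# Hypothetical ARN prefix; replace with the functions deployed in your account.
ARN_PREFIX = "arn:aws:lambda:us-east-1:123456789012:function:"


def task(function_name: str, next_state: Optional[str] = None,
         catch_to: Optional[str] = None) -> dict:
    """Build a Task state that invokes a Lambda function."""
    state = {"Type": "Task", "Resource": ARN_PREFIX + function_name}
    if catch_to:
        # Route any error to the fallback state and keep the error details.
        state["Catch"] = [
            {"ErrorEquals": ["States.ALL"], "ResultPath": "$.error", "Next": catch_to}
        ]
    if next_state:
        state["Next"] = next_state
    else:
        state["End"] = True
    return state


definition = {
    "Comment": "Sketch of a CSV preparation workflow (names are illustrative)",
    "StartAt": "CsvToJson",
    "States": {
        "CsvToJson": task("csv-to-json", "FormatData", "StoreInvalidData"),
        "FormatData": task("format-data", "SendToIoTAnalytics", "StoreInvalidData"),
        "SendToIoTAnalytics": task("send-to-iot-analytics"),
        "StoreInvalidData": task("store-invalid-data"),
    },
}

print(json.dumps(definition, indent=2))
```

The output of each Task state becomes the input of the next, which is how parameters pass between the Lambda functions as plain JSON.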

The data preparation architecture uses an event-driven approach, where saving the CSV file to Amazon S3 generates a CloudWatch event that triggers a Step Functions workflow to process the CSV file immediately. Let’s consider a scenario where we instead used a batch processing approach, with a CSV file containing production line data being saved to Amazon S3 every minute. If we run a batch job every hour to process the CSV files, a file can wait up to 1 hour before it is ingested and processed. With an event-driven architecture, however, each CSV file is processed as soon as it is saved to the Amazon S3 bucket, which removes that scheduling delay.
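
The batch latency in this scenario can be worked out with a few lines of arithmetic. The sketch assumes files arrive exactly on the minute and that a file arriving at the same instant as a batch run is picked up by the next run.

```python
BATCH_INTERVAL_MIN = 60  # the batch job runs once per hour
FILE_INTERVAL_MIN = 1    # one CSV file is saved every minute

# Minutes past the previous batch run at which each file arrives.
arrival_offsets = range(0, BATCH_INTERVAL_MIN, FILE_INTERVAL_MIN)

# Each file waits until the next batch run, at minute 60.
waits = [BATCH_INTERVAL_MIN - offset for offset in arrival_offsets]

worst_case = max(waits)            # 60 minutes
average = sum(waits) / len(waits)  # 30.5 minutes

print(f"worst case: {worst_case} min, average: {average} min")
```

With event-driven processing, the wait before processing starts no longer depends on a schedule at all.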

We use AWS IoT Analytics to perform data enrichment and analysis. Four of its components are needed:

- Channels: Collect raw or unprocessed data from external sources, and feed AWS IoT Analytics pipelines.

- Pipelines: Clean, filter, transform, and enrich data from AWS IoT Analytics channels.

- Data stores: Store processed data from the AWS IoT Analytics pipeline.

- Datasets: Analyze data with ad-hoc SQL queries on the AWS IoT Analytics data store.

In this use case, we used a single AWS IoT Analytics channel. Given the data preparation performed by Step Functions, we assume that all the incoming data is valid and ready to be analyzed. That data flows through an AWS IoT Analytics pipeline, which enriches the metrics with the upper and lower limits sourced from the DynamoDB table and then routes the data to an AWS IoT Analytics data store. As described earlier, these limits are stored in a DynamoDB table by the data simulator and then queried in AWS IoT Analytics based on the asset id and the production order id.
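
The enrichment step amounts to a lookup keyed on the asset id and the production order id. The sketch below shows that lookup in plain Python, with an in-memory dictionary standing in for the DynamoDB table; the field names and values are assumptions for illustration, and the real pipeline activity is not shown in this post.

```python
from typing import Dict, Optional, Tuple

LimitsKey = Tuple[str, str]  # (asset_id, production_order_id)


def enrich(message: dict, limits_table: Dict[LimitsKey, dict]) -> dict:
    """Return a copy of the message with limits attached (None if no match)."""
    key = (message["asset_id"], message["production_order_id"])
    limits: Optional[dict] = limits_table.get(key)
    enriched = dict(message)  # leave the raw message untouched
    enriched["lower_limit"] = limits["lower_limit"] if limits else None
    enriched["upper_limit"] = limits["upper_limit"] if limits else None
    return enriched


# Illustrative values only; this dict stands in for the DynamoDB table.
limits_table = {("asset-1", "PO-0001"): {"lower_limit": 1400.0, "upper_limit": 1500.0}}
raw = {
    "timestamp": "2021-01-01T00:00:00Z",
    "asset_id": "asset-1",
    "production_order_id": "PO-0001",
    "value": 1480.5,
}

first_pass = enrich(raw, limits_table)

# Replay: the limits change later and the same raw message is reprocessed.
limits_table[("asset-1", "PO-0001")] = {"lower_limit": 1450.0, "upper_limit": 1550.0}
replayed = enrich(raw, limits_table)
```

Because the raw message is never modified, the same record can be enriched again whenever the limits change.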

We could have decided to append the upper and lower limits to the production line data before sending the record to AWS IoT Analytics, but once the limits are stored together with the production measurement data in the AWS IoT Analytics channel, they can’t be updated. Appending the upper and lower limits in the AWS IoT Analytics pipeline instead gives us the flexibility to replay the records with different limits. For example, if the upper and lower limits associated with the production line data have changed for the previous month, you just need to reprocess the data through the AWS IoT Analytics pipeline and enrich it with the latest limits. Refer to the following diagram for more information.

Once the enriched data is stored in the AWS IoT Analytics data store, it is ready to be queried. You retrieve data from a data store by creating an AWS IoT Analytics dataset. Datasets are generated by running SQL expressions on the data in a data store, and in our use case, the dataset repeats the query every 5 minutes.
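
As an illustration, a scheduled dataset could be described with a request like the one below. The dictionary follows the shape of the AWS IoT Analytics `CreateDataset` request as we understand it (`actions`, `queryAction`, `triggers`, `schedule`), but the dataset name, data store name, query, and schedule expression are assumptions and should be checked against the API reference and your deployed stack before use. The sketch only builds and prints the request; it does not call AWS.

```python
import json

# Hypothetical resource names; the template may use different ones.
DATASET_NAME = "sensor_dataset"
DATASTORE_NAME = "sensor_datastore"

create_dataset_request = {
    "datasetName": DATASET_NAME,
    "actions": [
        {
            "actionName": "query_enriched_data",
            # The SQL expression runs against the data store.
            "queryAction": {"sqlQuery": f"SELECT * FROM {DATASTORE_NAME}"},
        }
    ],
    "triggers": [
        # Assumed cron-style expression meaning "every 5 minutes".
        {"schedule": {"expression": "cron(0/5 * * * ? *)"}}
    ],
}

print(json.dumps(create_dataset_request, indent=2))
```

With the AWS SDK for Python installed and credentials configured, a dictionary of this shape could be passed as keyword arguments to the IoT Analytics client's `create_dataset` call.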

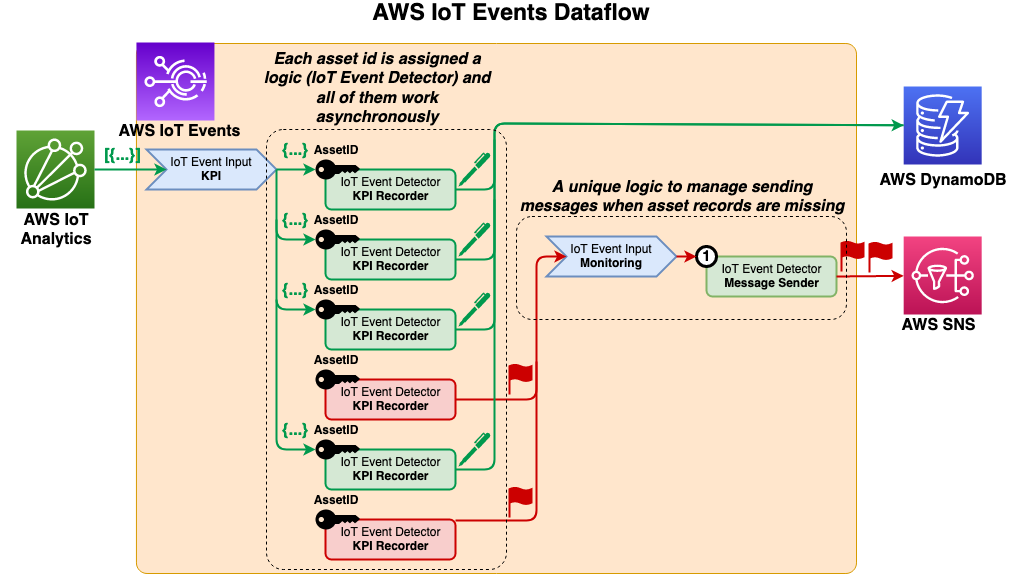

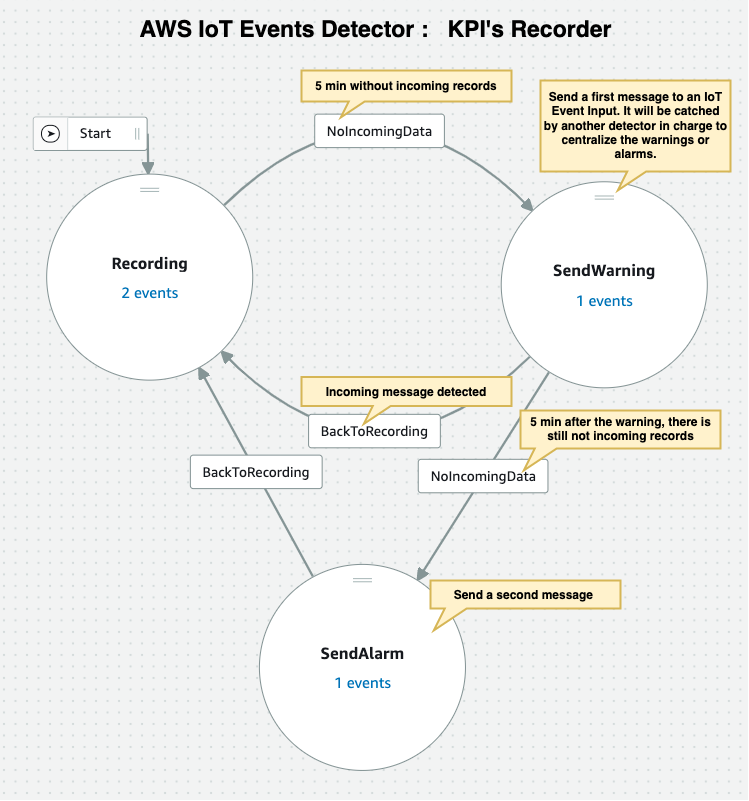

To record and monitor the incoming flow of data, we use AWS IoT Events. AWS IoT Analytics can automatically send the dataset contents to an AWS IoT Events input, and in our use case it sends a dataset every 5 minutes. The asset ID is used as the key, so AWS IoT Events creates one event detector for each asset. Each detector runs custom, pre-defined conditional logic that is triggered by the incoming data and an internal timer.

The event detector saves the input data to a DynamoDB table and resets a 5-minute timer. If the timer expires, the event detector sends a message as an input to a second event detector, which merges all the incoming messages in order to send a single consolidated message. Let’s consider a scenario where we’re monitoring 100 assets in a manufacturing plant, and every asset is sending an alarm. Without this second event detector to output a single alarm on behalf of all these assets, each asset’s event detector would send its own alarm message, which would inundate the target mailbox. This would require additional, time-consuming analysis of many email notifications, further delaying any response needed to address the alarms. Because this second event detector is able to monitor alarms across the whole site, it can escalate the alarms accordingly.
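
The two-level detector logic can be simulated in plain Python to make the behavior concrete. This is a conceptual sketch, not an AWS IoT Events detector model: the class names, the message format, and the use of plain numbers of seconds for time are assumptions, and a list stands in for the DynamoDB table.

```python
TIMEOUT_S = 300  # 5-minute timer, as described in the post


class AssetDetector:
    """Per-asset detector: stores each record and restarts its timer."""

    def __init__(self, asset_id: str):
        self.asset_id = asset_id
        self.deadline = None
        self.saved = []  # stands in for the DynamoDB table

    def on_input(self, record: dict, now: float) -> None:
        self.saved.append(record)
        self.deadline = now + TIMEOUT_S  # reset the timer

    def timed_out(self, now: float) -> bool:
        return self.deadline is not None and now >= self.deadline


class SiteDetector:
    """Second detector: merges per-asset alarms into one message."""

    def __init__(self):
        self.pending = set()

    def on_asset_alarm(self, asset_id: str) -> None:
        self.pending.add(asset_id)

    def flush(self):
        if not self.pending:
            return None
        message = {"alarm": "missing data", "asset_ids": sorted(self.pending)}
        self.pending.clear()
        return message


# 100 assets report at t=0 and then go silent.
detectors = [AssetDetector(f"asset-{i:03d}") for i in range(100)]
for d in detectors:
    d.on_input({"asset_id": d.asset_id, "value": 1.0}, now=0)

site = SiteDetector()
for d in detectors:
    if d.timed_out(now=300):
        site.on_asset_alarm(d.asset_id)

message = site.flush()  # one consolidated message instead of 100
```

The operator receives a single notification listing every affected asset, rather than one email per asset.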

To avoid incurring future charges, delete the CloudFormation stack.

This post has presented a simple architecture built around three services: AWS IoT Events, AWS IoT Analytics, and Step Functions. The architecture addresses challenges often encountered when ingesting data from disparate systems. It can also be used to collect and process data for training machine learning models.

To get started and deploy the architecture described in this article, use this CloudFormation template.
