[ad_1]

Final Up to date on October 30, 2021

Latest advance in machine studying has made face recognition not a troublesome drawback. However within the earlier, researchers have made varied makes an attempt and developed varied expertise to make pc able to figuring out individuals. One of many early try with average success is eigenface, which is predicated on linear algebra methods.

On this tutorial, we are going to see how we will construct a primitive face recognition system with some easy linear algebra method corresponding to principal element evaluation.

After finishing this tutorial, you’ll know:

- The event of eigenface method

- The right way to use principal element evaluation to extract attribute pictures from a picture dataset

- The right way to specific any picture as a weighted sum of the attribute pictures

- The right way to examine the similarity of pictures from the load of principal parts

Let’s get began.

Face Recognition utilizing Principal Part Evaluation

Photograph by Rach Teo, some rights reserved.

Tutorial overview

This tutorial is split into 3 elements; they’re:

- Picture and Face Recognition

- Overview of Eigenface

- Implementing Eigenface

Picture and Face Recognition

In pc, footage are represented as a matrix of pixels, with every pixel a selected coloration coded in some numerical values. It’s pure to ask if pc can learn the image and perceive what it’s, and if that’s the case, whether or not we will describe the logic utilizing matrix arithmetic. To be much less formidable, individuals attempt to restrict the scope of this drawback to figuring out human faces. An early try for face recognition is to think about the matrix as a excessive dimensional element and we infer a decrease dimension data vector from it, then attempt to acknowledge the individual in decrease dimension. It was crucial within the previous time as a result of the pc was not highly effective and the quantity of reminiscence may be very restricted. Nonetheless, by exploring methods to compress picture to a a lot smaller measurement, we developed a ability to check if two pictures are portraying the identical human face even when the photographs usually are not similar.

In 1987, a paper by Sirovich and Kirby thought of the concept that all footage of human face to be a weighted sum of some “key footage”. Sirovich and Kirby referred to as these key footage the “eigenpictures”, as they’re the eigenvectors of the covariance matrix of the mean-subtracted footage of human faces. Within the paper they certainly supplied the algorithm of principal element evaluation of the face image dataset in its matrix kind. And the weights used within the weighted sum certainly correspond to the projection of the face image into every eigenpicture.

In 1991, a paper by Turk and Pentland coined the time period “eigenface”. They constructed on prime of the thought of Sirovich and Kirby and use the weights and eigenpictures as attribute options to acknowledge faces. The paper by Turk and Pentland laid out a memory-efficient strategy to compute the eigenpictures. It additionally proposed an algorithm on how the face recognition system can function, together with methods to replace the system to incorporate new faces and methods to mix it with a video seize system. The identical paper additionally identified that the idea of eigenface may also help reconstruction of partially obstructed image.

Overview of Eigenface

Earlier than we leap into the code, let’s define the steps in utilizing eigenface for face recognition, and level out how some easy linear algebra method may also help the duty.

Assume we’ve a bunch of images of human faces, all in the identical pixel dimension (e.g., all are r×c grayscale pictures). If we get M totally different footage and vectorize every image into L=r×c pixels, we will current the complete dataset as a L×M matrix (let’s name it matrix $A$), the place every aspect within the matrix is the pixel’s grayscale worth.

Recall that principal element evaluation (PCA) might be utilized to any matrix, and the result’s plenty of vectors referred to as the principal parts. Every principal element has the size similar because the column size of the matrix. The totally different principal parts from the identical matrix are orthogonal to one another, which means that the vector dot-product of any two of them is zero. Due to this fact the assorted principal parts constructed a vector house for which every column within the matrix might be represented as a linear mixture (i.e., weighted sum) of the principal parts.

The best way it’s executed is to first take $C=A – a$ the place $a$ is the imply vector of the matrix $A$. So $C$ is the matrix that subtract every column of $A$ with the imply vector $a$. Then the covariance matrix is

$$S = Ccdot C^T$$

from which we discover its eigenvectors and eigenvalues. The principal parts are these eigenvectors in reducing order of the eigenvalues. As a result of matrix $S$ is a L×L matrix, we might take into account to seek out the eigenvectors of a M×M matrix $C^Tcdot C$ as a substitute because the eigenvector $v$ for $C^Tcdot C$ might be remodeled into eigenvector $u$ of $Ccdot C^T$ by $u=Ccdot v$, besides we often favor to put in writing $u$ as normalized vector (i.e., norm of $u$ is 1).

The bodily which means of the principal element vectors of $A$, or equivalently the eigenvectors of $S=Ccdot C^T$, is that they’re the important thing instructions that we will assemble the columns of matrix $A$. The relative significance of the totally different principal element vectors might be inferred from the corresponding eigenvalues. The higher the eigenvalue, the extra helpful (i.e., holds extra details about $A$) the principal element vector. Therefore we will maintain solely the primary Okay principal element vectors. If matrix $A$ is the dataset for face footage, the primary Okay principal element vectors are the highest Okay most vital “face footage”. We name them the eigenface image.

For any given face image, we will undertaking its mean-subtracted model onto the eigenface image utilizing vector dot-product. The result’s how shut this face image is said to the eigenface. If the face image is completely unrelated to the eigenface, we might count on its result’s zero. For the Okay eigenfaces, we will discover Okay dot-product for any given face image. We are able to current the end result as weights of this face image with respect to the eigenfaces. The load is often offered as a vector.

Conversely, if we’ve a weight vector, we will add up every eigenfaces subjected to the load and reconstruct a brand new face. Let’s denote the eigenfaces as matrix $F$, which is a L×Okay matrix, and the load vector $w$ is a column vector. Then for any $w$ we will assemble the image of a face as

$$z=Fcdot w$$

which $z$ is resulted as a column vector of size L. As a result of we’re solely utilizing the highest Okay principal element vectors, we should always count on the ensuing face image is distorted however retained some facial attribute.

For the reason that eigenface matrix is fixed for the dataset, a various weight vector $w$ means a various face image. Due to this fact we will count on the photographs of the identical individual would offer comparable weight vectors, even when the photographs usually are not similar. Consequently, we might make use of the space between two weight vectors (such because the L2-norm) as a metric of how two footage resemble.

Implementing Eigenface

Now we try and implement the thought of eigenface with numpy and scikit-learn. We may even make use of OpenCV to learn image recordsdata. It’s possible you’ll want to put in the related bundle with pip command:

|

pip set up opencv–python |

The dataset we use are the ORL Database of Faces, which is sort of of age however we will obtain it from Kaggle:

The file is a zipper file of round 4MB. It has footage of 40 individuals and every individual has 10 footage. Whole to 400 footage. Within the following we assumed the file is downloaded to the native listing and named as attface.zip.

We might extract the zip file to get the photographs, or we will additionally make use of the zipfile bundle in Python to learn the contents from the zip file instantly:

|

import cv2 import zipfile import numpy as np

faces = {} with zipfile.ZipFile(“attface.zip”) as facezip: for filename in facezip.namelist(): if not filename.endswith(“.pgm”): proceed # not a face image with facezip.open(filename) as picture: # If we extracted recordsdata from zip, we will use cv2.imread(filename) as a substitute faces[filename] = cv2.imdecode(np.frombuffer(picture.learn(), np.uint8), cv2.IMREAD_GRAYSCALE) |

The above is to learn each PGM file within the zip. PGM is a grayscale picture file format. We extract every PGM file right into a byte string by means of picture.learn() and convert it right into a numpy array of bytes. Then we use OpenCV to decode the byte string into an array of pixels utilizing cv2.imdecode(). The file format shall be detected routinely by OpenCV. We save every image right into a Python dictionary faces for later use.

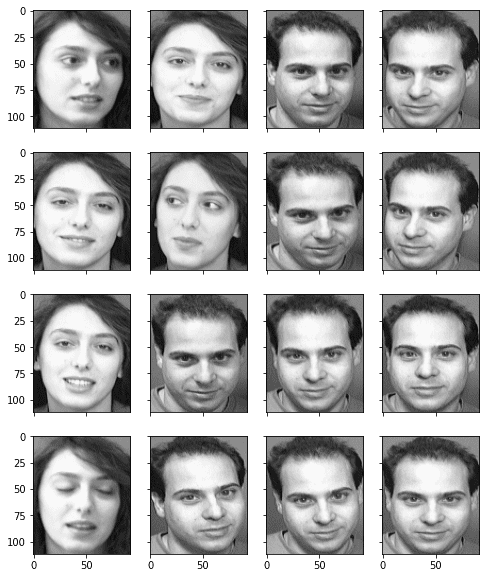

Right here we will have a look on these image of human faces, utilizing matplotlib:

|

... import matplotlib.pyplot as plt

fig, axes = plt.subplots(4,4,sharex=True,sharey=True,figsize=(8,10)) faceimages = checklist(faces.values())[–16:] # take final 16 pictures for i in vary(16): axes[i%4][i//4].imshow(faceimages[i], cmap=”grey”) plt.present() |

We are able to additionally discover the pixel measurement of every image:

|

... faceshape = checklist(faces.values())[0].form print(“Face picture form:”, faceshape) |

|

Face picture form: (112, 92) |

The photographs of faces are recognized by their file title within the Python dictionary. We are able to take a peek on the filenames:

|

... print(checklist(faces.keys())[:5]) |

|

[‘s1/1.pgm’, ‘s1/10.pgm’, ‘s1/2.pgm’, ‘s1/3.pgm’, ‘s1/4.pgm’] |

and subsequently we will put faces of the identical individual into the identical class. There are 40 lessons and completely 400 footage:

|

... lessons = set(filename.cut up(“/”)[0] for filename in faces.keys()) print(“Variety of lessons:”, len(lessons)) print(“Variety of footage:”, len(faces)) |

|

Variety of lessons: 40 Variety of footage: 400 |

As an example the potential of utilizing eigenface for recognition, we wish to maintain out among the footage earlier than we generate our eigenfaces. We maintain out all the photographs of 1 individual in addition to one image for one more individual as our check set. The remaining footage are vectorized and transformed right into a 2D numpy array:

|

... # Take lessons 1-39 for eigenfaces, maintain complete class 40 and # picture 10 of sophistication 39 as out-of-sample check facematrix = [] facelabel = [] for key,val in faces.objects(): if key.startswith(“s40/”): proceed # that is our check set if key == “s39/10.pgm”: proceed # that is our check set facematrix.append(val.flatten()) facelabel.append(key.cut up(“/”)[0])

# Create facematrix as (n_samples,n_pixels) matrix facematrix = np.array(facematrix) |

Now we will carry out principal element evaluation on this dataset matrix. As an alternative of computing the PCA step-by-step, we make use of the PCA operate in scikit-learn, which we will simply retrieve all outcomes we would have liked:

|

... # Apply PCA to extract eigenfaces from sklearn.decomposition import PCA

pca = PCA().match(facematrix) |

We are able to determine how important is every principal element from the defined variance ratio:

|

... print(pca.explained_variance_ratio_) |

|

[1.77824822e-01 1.29057925e-01 6.67093882e-02 5.63561346e-02 5.13040312e-02 3.39156477e-02 2.47893586e-02 2.27967054e-02 1.95632067e-02 1.82678428e-02 1.45655853e-02 1.38626271e-02 1.13318896e-02 1.07267786e-02 9.68365599e-03 9.17860717e-03 8.60995215e-03 8.21053028e-03 7.36580634e-03 7.01112888e-03 6.69450840e-03 6.40327943e-03 5.98295099e-03 5.49298705e-03 5.36083980e-03 4.99408106e-03 4.84854321e-03 4.77687371e-03 … 1.12203331e-04 1.11102187e-04 1.08901471e-04 1.06750318e-04 1.05732991e-04 1.01913786e-04 9.98164783e-05 9.85530209e-05 9.51582720e-05 8.95603083e-05 8.71638147e-05 8.44340263e-05 7.95894118e-05 7.77912922e-05 7.06467912e-05 6.77447444e-05 2.21225931e-32] |

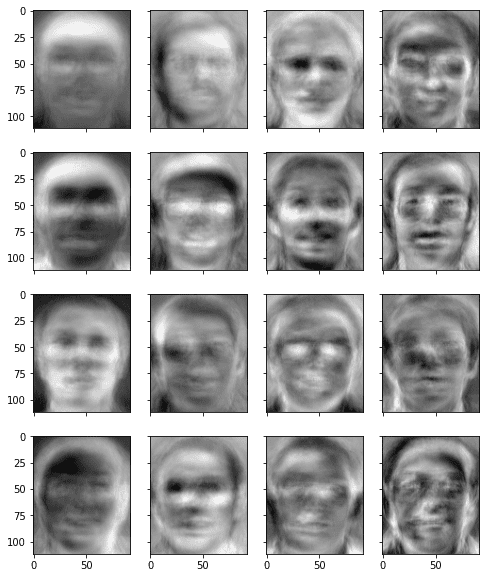

or we will merely make up a average quantity, say, 50, and take into account these many principal element vectors because the eigenface. For comfort, we extract the eigenface from PCA end result and retailer it as a numpy array. Be aware that the eigenfaces are saved as rows in a matrix. We are able to convert it again to 2D if we wish to show it. In under, we present among the eigenfaces to see how they appear to be:

|

... # Take the primary Okay principal parts as eigenfaces n_components = 50 eigenfaces = pca.components_[:n_components]

# Present the primary 16 eigenfaces fig, axes = plt.subplots(4,4,sharex=True,sharey=True,figsize=(8,10)) for i in vary(16): axes[i%4][i//4].imshow(eigenfaces[i].reshape(faceshape), cmap=”grey”) plt.present() |

From this image, we will see eigenfaces are blurry faces, however certainly every eigenfaces holds some facial traits that can be utilized to construct an image.

Since our objective is to construct a face recognition system, we first calculate the load vector for every enter image:

|

... # Generate weights as a KxN matrix the place Okay is the variety of eigenfaces and N the variety of samples weights = eigenfaces @ (facematrix – pca.mean_).T |

The above code is utilizing matrix multiplication to switch loops. It’s roughly equal to the next:

|

... weights = [] for i in vary(facematrix.form[0]): weight = [] for j in vary(n_components): w = eigenfaces[j] @ (facematrix[i] – pca.mean_) weight.append(w) weights.append(weight) |

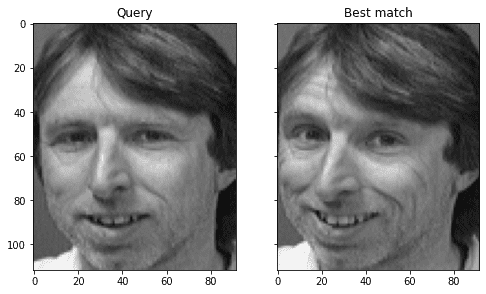

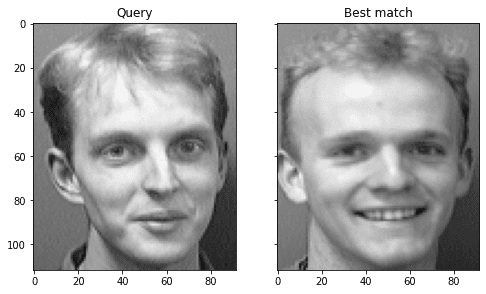

As much as right here, our face recognition system has been accomplished. We used footage of 39 individuals to construct our eigenface. We use the check image that belongs to considered one of these 39 individuals (the one held out from the matrix that educated the PCA mannequin) to see if it could actually efficiently acknowledge the face:

|

... # Take a look at on out-of-sample picture of present class question = faces[“s39/10.pgm”].reshape(1,–1) query_weight = eigenfaces @ (question – pca.mean_).T euclidean_distance = np.linalg.norm(weights – query_weight, axis=0) best_match = np.argmin(euclidean_distance) print(“Greatest match %s with Euclidean distance %f” % (facelabel[best_match], euclidean_distance[best_match])) # Visualize fig, axes = plt.subplots(1,2,sharex=True,sharey=True,figsize=(8,6)) axes[0].imshow(question.reshape(faceshape), cmap=“grey”) axes[0].set_title(“Question”) axes[1].imshow(facematrix[best_match].reshape(faceshape), cmap=“grey”) axes[1].set_title(“Greatest match”) plt.present() |

Above, we first subtract the vectorized picture by the typical vector that retrieved from the PCA end result. Then we compute the projection of this mean-subtracted vector to every eigenface and take it as the load for this image. Afterwards, we examine the load vector of the image in query to that of every present image and discover the one with the smallest L2 distance as one of the best match. We are able to see that it certainly can efficiently discover the closest match in the identical class:

|

Greatest match s39 with Euclidean distance 1559.997137 |

and we will visualize the end result by evaluating the closest match aspect by aspect:

We are able to strive once more with the image of the fortieth individual that we held out from the PCA. We’d by no means get it right as a result of it’s a new individual to our mannequin. Nonetheless, we wish to see how fallacious it may be in addition to the worth within the distance metric:

|

... # Take a look at on out-of-sample picture of recent class question = faces[“s40/1.pgm”].reshape(1,–1) query_weight = eigenfaces @ (question – pca.mean_).T euclidean_distance = np.linalg.norm(weights – query_weight, axis=0) best_match = np.argmin(euclidean_distance) print(“Greatest match %s with Euclidean distance %f” % (facelabel[best_match], euclidean_distance[best_match])) # Visualize fig, axes = plt.subplots(1,2,sharex=True,sharey=True,figsize=(8,6)) axes[0].imshow(question.reshape(faceshape), cmap=“grey”) axes[0].set_title(“Question”) axes[1].imshow(facematrix[best_match].reshape(faceshape), cmap=“grey”) axes[1].set_title(“Greatest match”) plt.present() |

We are able to see that it’s finest match has a higher L2 distance:

|

Greatest match s5 with Euclidean distance 2690.209330 |

however we will see that the mistaken end result has some resemblance to the image in query:

Within the paper by Turk and Petland, it’s instructed that we arrange a threshold for the L2 distance. If one of the best match’s distance is lower than the edge, we might take into account the face is acknowledged to be the identical individual. If the space is above the edge, we declare the image is somebody we by no means noticed even when a finest match might be discover numerically. On this case, we might take into account to incorporate this as a brand new individual into our mannequin by remembering this new weight vector.

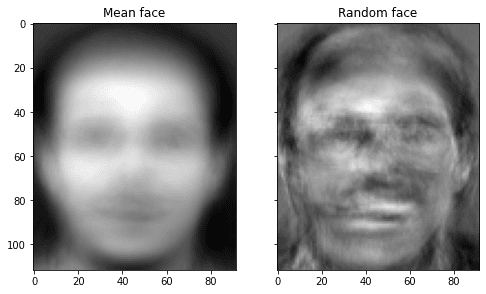

Really, we will do one step additional, to generate new faces utilizing eigenfaces, however the end result isn’t very practical. In under, we generate one utilizing random weight vector and present it aspect by aspect with the “common face”:

|

... # Visualize the imply face and random face fig, axes = plt.subplots(1,2,sharex=True,sharey=True,figsize=(8,6)) axes[0].imshow(pca.mean_.reshape(faceshape), cmap=“grey”) axes[0].set_title(“Imply face”) random_weights = np.random.randn(n_components) * weights.std() newface = random_weights @ eigenfaces + pca.mean_ axes[1].imshow(newface.reshape(faceshape), cmap=“grey”) axes[1].set_title(“Random face”) plt.present() |

How good is eigenface? It’s surprisingly overachieved for the simplicity of the mannequin. Nonetheless, Turk and Pentland examined it with varied situations. It discovered that its accuracy was “a mean of 96% with mild variation, 85% with orientation variation, and 64% with measurement variation.” Therefore it is probably not very sensible as a face recognition system. In any case, the image as a matrix shall be distorted lots within the principal element area after zoom-in and zoom-out. Due to this fact the fashionable various is to make use of convolution neural community, which is extra tolerant to varied transformations.

Placing every little thing collectively, the next is the whole code:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 |

import zipfile import cv2 import numpy as np import matplotlib.pyplot as plt from sklearn.decomposition import PCA

# Learn face picture from zip file on the fly faces = {} with zipfile.ZipFile(“attface.zip”) as facezip: for filename in facezip.namelist(): if not filename.endswith(“.pgm”): proceed # not a face image with facezip.open(filename) as picture: # If we extracted recordsdata from zip, we will use cv2.imread(filename) as a substitute faces[filename] = cv2.imdecode(np.frombuffer(picture.learn(), np.uint8), cv2.IMREAD_GRAYSCALE)

# Present pattern faces utilizing matplotlib fig, axes = plt.subplots(4,4,sharex=True,sharey=True,figsize=(8,10)) faceimages = checklist(faces.values())[–16:] # take final 16 pictures for i in vary(16): axes[i%4][i//4].imshow(faceimages[i], cmap=”grey”) print(“Displaying pattern faces”) plt.present()

# Print some particulars faceshape = checklist(faces.values())[0].form print(“Face picture form:”, faceshape)

lessons = set(filename.cut up(“/”)[0] for filename in faces.keys()) print(“Variety of lessons:”, len(lessons)) print(“Variety of pictures:”, len(faces))

# Take lessons 1-39 for eigenfaces, maintain complete class 40 and # picture 10 of sophistication 39 as out-of-sample check facematrix = [] facelabel = [] for key,val in faces.objects(): if key.startswith(“s40/”): proceed # that is our check set if key == “s39/10.pgm”: proceed # that is our check set facematrix.append(val.flatten()) facelabel.append(key.cut up(“/”)[0])

# Create a NxM matrix with N pictures and M pixels per picture facematrix = np.array(facematrix)

# Apply PCA and take first Okay principal parts as eigenfaces pca = PCA().match(facematrix)

n_components = 50 eigenfaces = pca.components_[:n_components]

# Present the primary 16 eigenfaces fig, axes = plt.subplots(4,4,sharex=True,sharey=True,figsize=(8,10)) for i in vary(16): axes[i%4][i//4].imshow(eigenfaces[i].reshape(faceshape), cmap=”grey”) print(“Displaying the eigenfaces”) plt.present()

# Generate weights as a KxN matrix the place Okay is the variety of eigenfaces and N the variety of samples weights = eigenfaces @ (facematrix – pca.mean_).T print(“Form of the load matrix:”, weights.form)

# Take a look at on out-of-sample picture of present class question = faces[“s39/10.pgm”].reshape(1,–1) query_weight = eigenfaces @ (question – pca.mean_).T euclidean_distance = np.linalg.norm(weights – query_weight, axis=0) best_match = np.argmin(euclidean_distance) print(“Greatest match %s with Euclidean distance %f” % (facelabel[best_match], euclidean_distance[best_match])) # Visualize fig, axes = plt.subplots(1,2,sharex=True,sharey=True,figsize=(8,6)) axes[0].imshow(question.reshape(faceshape), cmap=“grey”) axes[0].set_title(“Question”) axes[1].imshow(facematrix[best_match].reshape(faceshape), cmap=“grey”) axes[1].set_title(“Greatest match”) plt.present()

# Take a look at on out-of-sample picture of recent class question = faces[“s40/1.pgm”].reshape(1,–1) query_weight = eigenfaces @ (question – pca.mean_).T euclidean_distance = np.linalg.norm(weights – query_weight, axis=0) best_match = np.argmin(euclidean_distance) print(“Greatest match %s with Euclidean distance %f” % (facelabel[best_match], euclidean_distance[best_match])) # Visualize fig, axes = plt.subplots(1,2,sharex=True,sharey=True,figsize=(8,6)) axes[0].imshow(question.reshape(faceshape), cmap=“grey”) axes[0].set_title(“Question”) axes[1].imshow(facematrix[best_match].reshape(faceshape), cmap=“grey”) axes[1].set_title(“Greatest match”) plt.present() |

Additional studying

This part offers extra sources on the subject if you’re seeking to go deeper.

Papers

Books

APIs

Articles

Abstract

On this tutorial, you found methods to construct a face recognition system utilizing eigenface, which is derived from principal element evaluation.

Particularly, you discovered:

- The right way to extract attribute pictures from the picture dataset utilizing principal element evaluation

- The right way to use the set of attribute pictures to create a weight vector for any seen or unseen pictures

- The right way to use the load vectors of various pictures to measure for his or her similarity, and apply this method to face recognition

- The right way to generate a brand new random picture from the attribute pictures

[ad_2]