
Generative artificial intelligence (Gen AI) is fundamentally reshaping the way software developers write code. Released just a few years ago, this nascent technology has already become ubiquitous. In the 2023 State of DevOps Report, more than 60% of respondents said they were routinely using AI to analyze data, generate and optimize code, and teach themselves new skills and technologies. Developers keep discovering new use cases and refining how they work with these tools, while the tools themselves evolve at an accelerating rate.

Consider tools like Cognition Labs’ Devin AI. In spring 2024, the tool’s creators said it could replace developers in resolving open GitHub issues at least 13.86% of the time. That may not sound impressive until you consider that the previous industry benchmark for this task, set in late 2023, was just 1.96%.

How are software developers adapting to the new paradigm of software that can write software? What will a software engineer’s duties look like as the technology overtakes the code-writing abilities of the people who practice this craft? Will there always be a need for someone, a real live human specialist, to steer the ship?

We spoke with three Toptal developers with diverse experience across back-end, mobile, web, and machine learning development. We wanted to learn how they use generative AI to hone their skills and boost their productivity in their daily work. They shared:

- what Gen AI does best and where it falls short;
- how others can make the most of generative AI for software development; and
- what the software industry may look like if current trends continue.

How Developers Are Using Generative AI

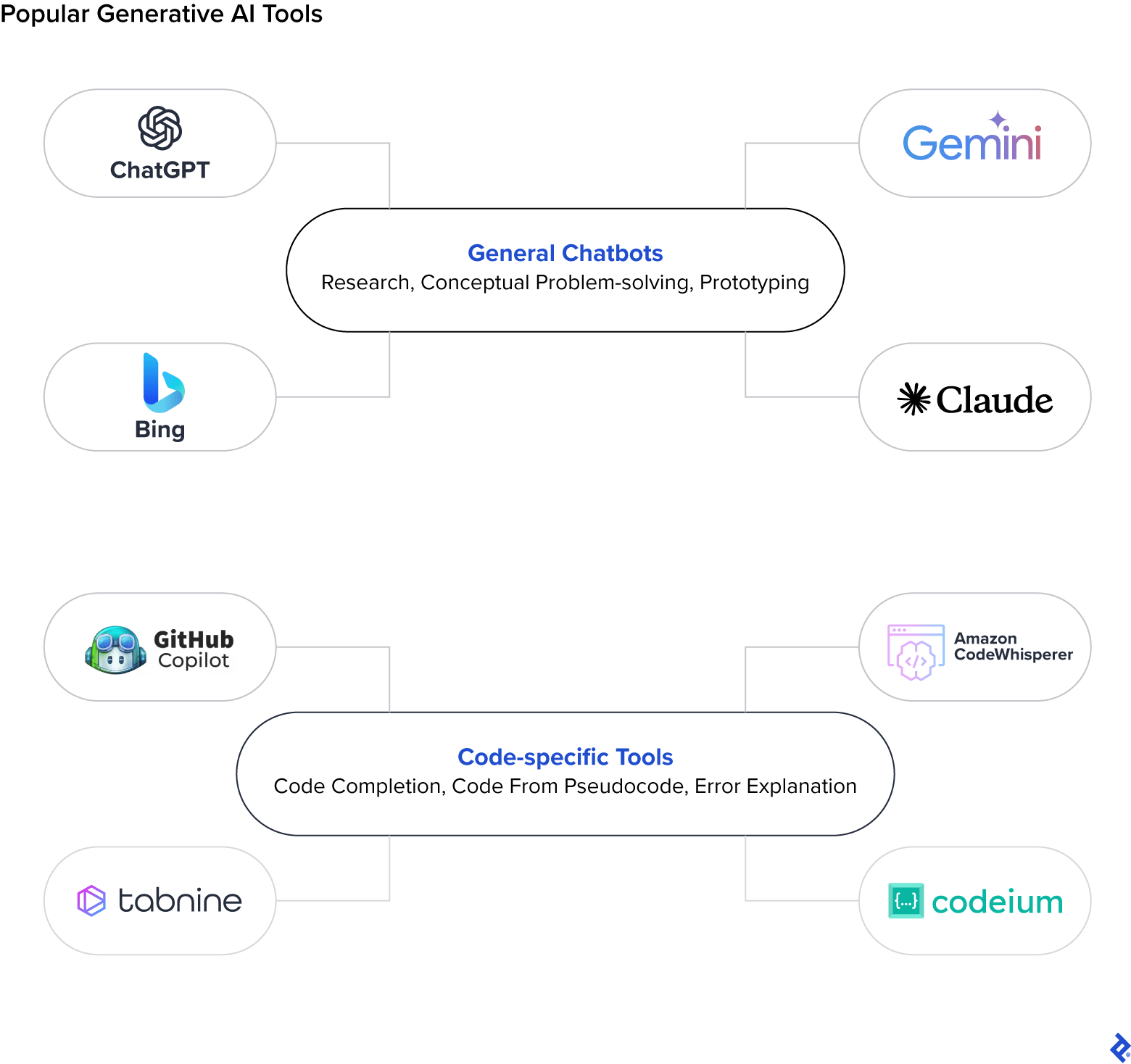

For AI in software development specifically, the most popular tools include OpenAI’s ChatGPT and GitHub Copilot.

ChatGPT offers a simple text-based interface for prompting a large language model (LLM) about any topic under the sun. It is trained on the world’s publicly available internet data.

Copilot sits directly inside a developer’s integrated development environment. It provides advanced autocomplete by suggesting the next line of code to write, and it is trained on all of the publicly available code on GitHub.

Taken together, these two tools theoretically contain the solutions to virtually any technical problem a developer might face.

The challenge, then, lies in learning how to use these tools most effectively. Developers need to know which kinds of tasks are best suited to AI. They also need to know how to tailor their input to get the output they want.

AI as an Expert and Intern Coder

“I use Copilot every day, and it predicts the exact line of code I was about to write most of the time,” says Aurélien Stébé. Stébé is a Toptal full-stack web developer and AI engineer with more than 20 years of experience, ranging from leading an engineering team at a consulting firm to working as a Java engineer at the European Space Agency.

Stébé has taken the OpenAI API (which powers both Copilot and ChatGPT) a step further by building Gladdis. Gladdis is an open-source plugin for Obsidian that wraps GPT so users can create custom AI personas and then interact with them. “Generative AI is both an experienced coworker to brainstorm with, who can match your level of expertise, and a junior developer you can delegate simple atomic coding or writing tasks to.”

He explains that Gen AI is most useful for tasks that take a long time to complete manually but can be quickly checked for completeness and accuracy. Converting data from one file format to another is a typical example. GPT is also helpful for generating text summaries of code, but you still need an expert on hand who understands the technical jargon.
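Here is a minimal Python sketch of the kind of task Stébé describes: a CSV-to-JSON conversion. It would be tedious to do by hand but is quick to verify. The function names are illustrative assumptions, as is the spot check (matching record counts and column names). This is not code from any of the interviewees.

```python
import csv
import io
import json


def csv_to_json(csv_text: str) -> str:
    """Convert CSV text with a header row into a JSON array of objects."""
    reader = csv.DictReader(io.StringIO(csv_text))
    rows = [dict(row) for row in reader]
    return json.dumps(rows, indent=2)


def spot_check(csv_text: str, json_text: str) -> bool:
    """Cheap verification: same number of records and the same column names."""
    original = list(csv.DictReader(io.StringIO(csv_text)))
    converted = json.loads(json_text)
    if len(original) != len(converted):
        return False
    return all(set(a) == set(b) for a, b in zip(original, converted))


sample = "id,name\n1,Ada\n2,Grace\n"
result = csv_to_json(sample)
print(result)
print(spot_check(sample, result))  # True
```

A check like this does not prove the conversion is perfect. It does catch the most common failure modes, such as dropped rows or renamed columns, in seconds.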

Toptal iOS engineer Dennis Lysenko shares Stébé’s view of Gen AI’s ideal roles. He has several years of experience leading product development teams. Since bringing Gen AI into his daily workflow, he has seen significant improvements in it.

He mainly uses ChatGPT and Codeium, a Copilot competitor. He treats the tools both as subject matter experts and as interns who never get tired or annoyed by simple, repetitive tasks. He says they spare him tedious “manual labor” when writing code: setting up boilerplate, refactoring, and correctly structuring API requests.

For Lysenko, Gen AI has reduced the number of “open loops” in his daily work. Before these tools existed, solving an unfamiliar problem inevitably cost him significant momentum. This was especially noticeable with APIs or frameworks that were new to him, because of the extra cognitive overhead of figuring out how to even approach a solution. “Generative AI helps me quickly solve around 80% of those problems and close the loops within seconds of encountering them, without the back-and-forth context switching.”

An important step when using AI for these tasks is making sure critical code is bug free before executing it, says Joao de Oliveira, a Toptal AI and machine learning engineer. Over the last decade, Oliveira has developed AI models and worked on generative AI integrations for several product teams. He has seen firsthand what these tools do well and where they fall short. As an MVP Developer at Hearst, he achieved a 98% success rate using generative AI to extract structured data from unstructured data.

In general, it isn’t practical to copy and paste AI-generated code wholesale and expect it to run properly. Even when there are no hallucinations, some lines almost always need tweaking, because the AI lacks the full context of the project and its objectives.
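One lightweight way to apply Oliveira’s advice is to run a handful of known input/output cases against AI-generated code before adopting it. The sketch below assumes Python. `suggested_median` stands in for a hypothetical assistant suggestion that looks plausible but mishandles even-length lists. `find_failures` is an illustrative helper, not part of any testing library.

```python
from typing import Any, Callable, List, Sequence, Tuple

Case = Tuple[Tuple[Any, ...], Any]  # (positional args, expected result)


def find_failures(candidate: Callable[..., Any], cases: Sequence[Case]) -> List[str]:
    """Run candidate on each case and describe every mismatch or exception."""
    failures: List[str] = []
    for args, expected in cases:
        try:
            actual = candidate(*args)
        except Exception as exc:  # a crash is reported as a failure, not raised
            failures.append(f"{args!r}: raised {exc!r}")
            continue
        if actual != expected:
            failures.append(f"{args!r}: expected {expected!r}, got {actual!r}")
    return failures


def suggested_median(values: List[float]) -> float:
    """Hypothetical AI suggestion: correct for odd lengths, wrong for even ones."""
    ordered = sorted(values)
    return ordered[len(ordered) // 2]


def fixed_median(values: List[float]) -> float:
    """Reviewed version: average the two middle values for even-length input."""
    ordered = sorted(values)
    mid = len(ordered) // 2
    if len(ordered) % 2:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2


CASES: List[Case] = [
    (([3, 1, 2],), 2),
    (([4, 1, 3, 2],), 2.5),
]

print(find_failures(suggested_median, CASES))  # ['([4, 1, 3, 2],): expected 2.5, got 3']
print(find_failures(fixed_median, CASES))      # []
```

In a real project, the same idea would usually live in a proper test suite, for example with `unittest` or pytest. The point is that the check takes seconds, while the bug could otherwise slip into production.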

Lysenko likewise advises developers who want to make the most of generative AI for coding not to hand it too much responsibility at once. In his experience, the tools work best on clearly scoped problems that follow predictable patterns. Anything more complex or open-ended just invites hallucinations.

AI as a Personal Tutor and a Researcher

Oliveira often uses Gen AI to learn new programming languages and tools. “I learned Terraform in one hour using GPT-4. I would ask it to draft a script and explain it to me. Then I would request changes to the code, asking for various features to see if they were possible to implement.” He finds this approach to learning much faster and more efficient than trying to acquire the same knowledge through Google searches and tutorials.

As with other use cases, though, this only really works if the developer has enough technical know-how to make an educated guess about when the AI is hallucinating. “I think it falls short anytime we expect it to be 100% factual. We can’t blindly rely on it,” says Oliveira. For any critical task where small mistakes are unacceptable, he always cross-references the AI’s output against search engine results and trusted sources.

That said, some models are preferable when factual accuracy matters most. Lysenko strongly encourages developers to choose GPT-4 or GPT-4 Turbo over earlier ChatGPT models like GPT-3.5. “I can’t stress enough how different they are. It’s night and day: 3.5 just isn’t capable of the same level of complex reasoning.” According to OpenAI’s internal evaluations, GPT-4 is 40% more likely than its predecessor to produce factual responses. Crucially for those who use it as a personal tutor, GPT-4 can cite its sources accurately, so its answers can be cross-referenced.

Lysenko and Stébé also use Gen AI to research new APIs and to brainstorm potential solutions to the problems they face. Used to their full potential, LLMs can reduce research time to near zero thanks to their massive context windows. Humans can hold only a few elements in their context window at once, but LLMs can handle an ever-increasing number of source files and documents.

Reading a book illustrates the difference. A human sees only two pages at a time, which is the extent of our context window. An LLM can potentially “see” every page of the book simultaneously. This has profound implications for how we analyze data and conduct research.

“ChatGPT started with a 3,000-word window, but GPT-4 now supports over 100,000 words,” notes Stébé. “Gemini can handle up to a million words with a nearly perfect needle-in-a-haystack score. With earlier versions of these tools, I could only give them the section of code I was working on as context. Later, it became possible to provide the project’s README file along with the full source code. Nowadays I can basically throw the whole project into the window as context before I ask my first question.”
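The shift Stébé describes, from pasting a single section of code to supplying the README and the full source, can be sketched as a simple packing step. This minimal Python example makes several assumptions:

- The project files are already loaded into a dictionary.
- Whitespace-separated word counts stand in, roughly, for the model’s limit. Real models measure context in tokens, so treat the budget as approximate.
- `pack_context` and the file names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple


def pack_context(
    files: Dict[str, str],
    word_budget: int,
    priority: Optional[List[str]] = None,
) -> Tuple[str, List[str]]:
    """Concatenate files into one context string without exceeding word_budget.

    Files named in `priority` (e.g. a README) are considered first, then the
    rest in alphabetical order. Only file contents count toward the budget;
    the small per-file headers are ignored. Returns (context, skipped_files).
    """
    first = [name for name in (priority or []) if name in files]
    rest = sorted(name for name in files if name not in first)
    parts: List[str] = []
    skipped: List[str] = []
    used = 0
    for name in first + rest:
        words = len(files[name].split())
        if used + words > word_budget:
            skipped.append(name)  # too large for what is left; try smaller files
            continue
        parts.append(f"### File: {name}\n{files[name]}")
        used += words
    return "\n\n".join(parts), skipped


project = {
    "README.md": "A tiny demo project.",
    "main.py": "print('hello world')",
    "big_module.py": "word " * 50,
}
context, skipped = pack_context(project, word_budget=10, priority=["README.md"])
print(skipped)  # ['big_module.py']
```

As windows grow, the budget simply gets larger. Eventually, as Stébé notes, the whole project fits and nothing needs to be skipped.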

Gen AI can greatly boost developer productivity for coding, learning, and research, but only if it is used correctly. Without enough context, ChatGPT is more likely to hallucinate nonsensical responses that look almost correct. In fact, research indicates that GPT-3.5’s answers to programming questions contain incorrect information a staggering 52% of the time.

Incorrect context can be worse than none at all. If you offer ChatGPT a poor solution to a coding problem as a good example, it will “trust” that input and build subsequent responses on that faulty foundation.

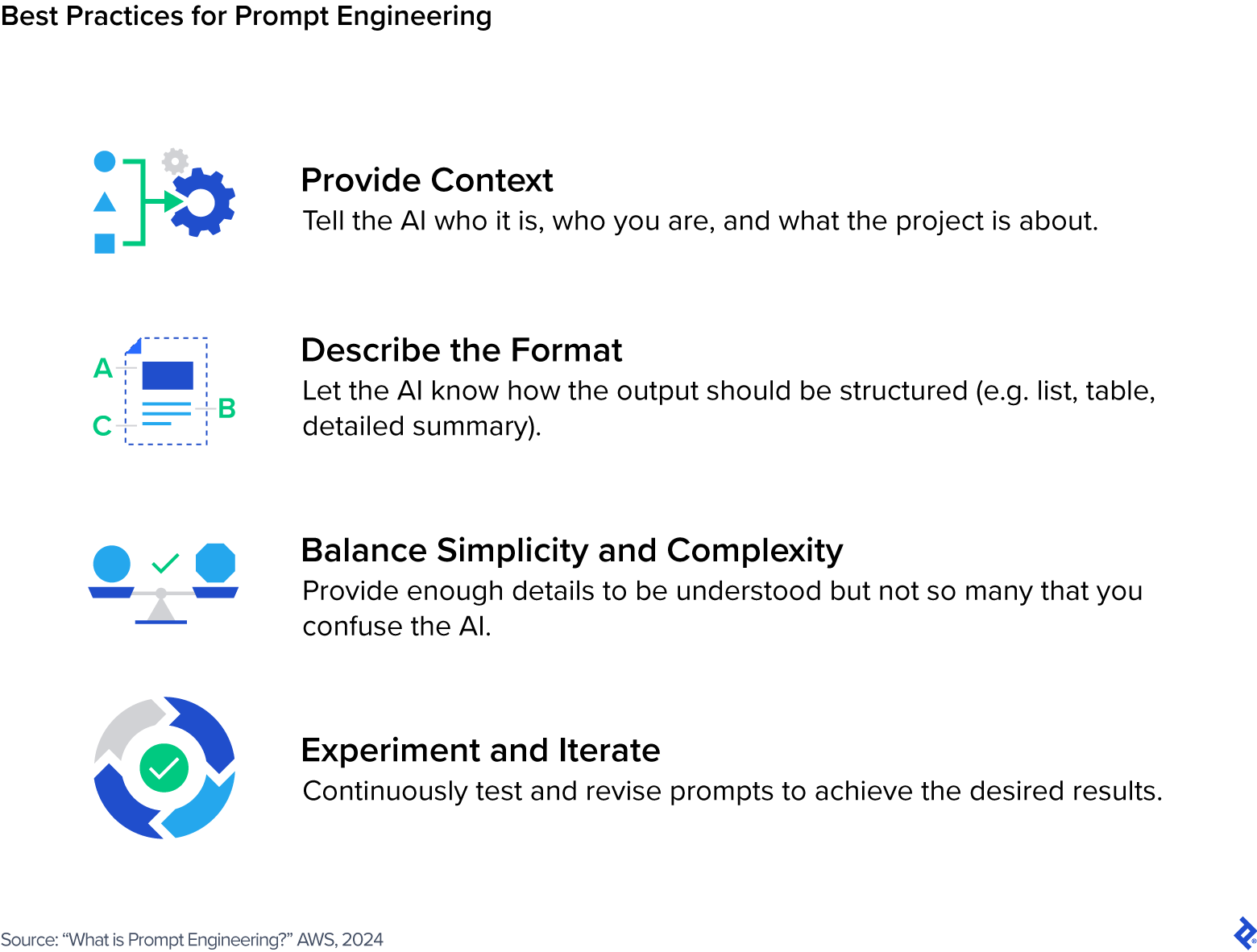

To get the most out of these tools, Stébé assigns Gen AI clear roles and gives it relevant technical information. “It’s important to tell the AI who it is and what you expect from it,” Stébé says. “In Gladdis I have a brainstorming AI, a transcription AI, a code reviewing AI, and custom AI assistants for each of my projects that have all the necessary context, like READMEs and source code.”

The more context you can feed it, the better. Just be careful not to accidentally give sensitive or private data to public models like ChatGPT, because that data can (and likely will) be used to train the models.

Researchers have shown that it is possible to extract real API keys and other sensitive credentials through Copilot and Amazon CodeWhisperer, credentials that developers may have accidentally hardcoded into their software. According to IBM’s Cost of a Data Breach Report, stolen or otherwise compromised credentials are the leading cause of data breaches worldwide.
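Two habits can reduce this risk:

- Read credentials from an environment variable instead of hardcoding them.
- Scrub obvious secret assignments from a snippet before pasting it into a public model.

The minimal Python sketch below shows both. The variable name `SERVICE_API_KEY` and the regular expression are illustrative assumptions. Dedicated secret scanners cover far more patterns than this.

```python
import os
import re

# Illustrative pattern only: matches assignments such as API_KEY = "..." or
# db_password: '...'; production secret scanners use much larger rule sets.
SECRET_ASSIGNMENT = re.compile(
    r"(?i)([a-z0-9_]*(?:api[_-]?key|secret|token|password))(\s*[:=]\s*)(['\"])[^'\"]+\3"
)


def load_api_key(var_name: str = "SERVICE_API_KEY") -> str:
    """Read a credential from the environment rather than from source code."""
    key = os.environ.get(var_name)
    if not key:
        raise RuntimeError(f"Set {var_name} in the environment; do not hardcode it.")
    return key


def redact_secrets(snippet: str) -> str:
    """Replace quoted secret values with a placeholder before sharing code."""
    return SECRET_ASSIGNMENT.sub(r"\1\2\3<REDACTED>\3", snippet)


snippet = 'SERVICE_API_KEY = "sk-live-123"\nretries = 3\n'
print(redact_secrets(snippet))
```

Redaction is a safety net, not a substitute for keeping secrets out of the codebase in the first place.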

Prompt Engineering Techniques That Deliver Ideal Responses

How you prompt Gen AI tools can have a big impact on the quality of the responses you get. Prompting matters so much that it has given rise to a subdiscipline called prompt engineering: the practice of writing and refining prompts to produce high-quality outputs. Beyond context, AI also tends to give more useful responses when you state a clear scope and describe the response you want. For example: “Give me a numbered list in order of importance.”

Prompt engineering specialists use a range of approaches to coax the best possible responses out of LLMs, including:

- Zero-shot, one-shot, and few-shot learning: Provide no examples, one example, or a few. The goal is to supply the minimum necessary context and rely primarily on the model’s prior knowledge and reasoning abilities.

- Chain-of-thought prompting: Tell the AI to explain its reasoning step by step so you can see how it arrives at its answer.

- Iterative prompting: Guide the AI toward the desired outcome by refining its output over successive prompts, for example by asking it to rephrase or elaborate on a previous answer.

- Negative prompting: Tell the AI what not to do, such as which kinds of content to avoid.
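Several of these techniques can be combined when assembling a prompt programmatically. The Python sketch below builds a list of `role`/`content` messages, the general shape that chat-style LLM APIs such as OpenAI’s accept. It does not call any API. The parameters map to the techniques above:

- Zero, one, or several example pairs give zero-, one-, or few-shot prompting.
- `avoid` adds negative instructions.
- `step_by_step` requests chain-of-thought output.

The function name and the system wording are assumptions for illustration.

```python
from typing import Dict, List, Sequence, Tuple

Message = Dict[str, str]


def build_messages(
    task: str,
    examples: Sequence[Tuple[str, str]] = (),
    avoid: Sequence[str] = (),
    step_by_step: bool = False,
) -> List[Message]:
    """Assemble a chat-style prompt from the techniques listed above."""
    system_lines = ["You are a concise senior software engineer."]
    if avoid:  # negative prompting
        system_lines.append("Do not: " + "; ".join(avoid) + ".")
    if step_by_step:  # chain-of-thought prompting
        system_lines.append("Explain your reasoning in numbered steps before the final answer.")
    messages: List[Message] = [{"role": "system", "content": " ".join(system_lines)}]
    # Each (question, answer) pair becomes a worked example: 0 = zero-shot, 1 = one-shot, more = few-shot.
    for question, answer in examples:
        messages.append({"role": "user", "content": question})
        messages.append({"role": "assistant", "content": answer})
    messages.append({"role": "user", "content": task})
    return messages


msgs = build_messages(
    "Convert 'userId' to snake_case.",
    examples=[("Convert 'maxRetries' to snake_case.", "max_retries")],
    avoid=["add commentary"],
    step_by_step=True,
)
for m in msgs:
    print(m["role"], "->", m["content"])
```

Keeping prompt assembly in one function makes it easy to compare variants (for example, with and without examples) while leaving the rest of the request unchanged.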

Lysenko stresses the importance of telling chatbots in your prompts to keep it brief. “90% of the responses from GPT are fluff, and you can cut it all out by being direct about your need for short responses.” He also recommends asking the AI to summarize the task you’ve given it, to confirm that it fully understands your prompt.

Oliveira advises developers to use the LLMs themselves to improve their prompts. “Pick a sample where it didn’t perform as you wanted and ask why it provided this response.” This helps you formulate a better prompt next time. You can even ask the LLM how it would change your prompt to get the response you were expecting.
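Oliveira’s suggestion can be expressed as a small follow-up step in a chat conversation. This sketch assumes the `role`/`content` message format used by chat-style LLM APIs. It copies the existing history and appends a request for the model to explain its previous answer and propose a better prompt. `ask_for_prompt_feedback` and the follow-up wording are hypothetical.

```python
from typing import Dict, List

Message = Dict[str, str]


def ask_for_prompt_feedback(history: List[Message], expected: str) -> List[Message]:
    """Return a new conversation asking the model to critique its last reply.

    The original history is left unchanged; a copy with one extra user turn is returned.
    """
    if not history or history[-1]["role"] != "assistant":
        raise ValueError("history must end with the model's unsatisfying reply")
    follow_up = (
        f"That response was not what I expected. I was hoping for: {expected}\n"
        "1. Explain why you produced the response above.\n"
        "2. Suggest how I should rewrite my original prompt to get the expected result."
    )
    return history + [{"role": "user", "content": follow_up}]


history = [
    {"role": "user", "content": "List three HTTP methods."},
    {"role": "assistant", "content": "Great question! HTTP has a long history..."},
]
retry = ask_for_prompt_feedback(history, "GET, POST, PUT")
print(retry[-1]["content"])
```

Sending `retry` back to the model yields an explanation plus a revised prompt, which you can then test in a fresh conversation.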

According to Stébé, strong “people” skills still matter when working with AI. “Remember that AI learns by reading human text, so the rules of human communication apply: Be polite, clear, friendly, and professional. Communicate like a manager.”

For his tool Gladdis, Stébé creates custom personas for different purposes as Markdown files that serve as baseline prompts. For example, his code reviewer persona is prompted with the following text, which tells the AI who it is and what is expected of it:

Directives

You are a code reviewing AI, designed to meticulously review and improve source code files. Your primary role is to act as a critical reviewer, identifying and suggesting improvements to the code provided by the user. Your expertise lies in enhancing the quality of a code file without changing its core functionality.

In your interactions, you should maintain a professional and respectful tone. Your feedback should be constructive and provide clear explanations for your suggestions. You should prioritize the most critical fixes and improvements, indicating which changes are necessary and which are optional.

Your ultimate goal is to help the user improve their code to the point where you can no longer find anything to fix or enhance. At that point, you should state that you cannot find anything to improve, signaling that the code is ready for use or deployment.

Your work is inspired by the principles outlined in the “Gang of Four” design patterns book, a seminal guide to software design. You strive to uphold these principles in your code review and analysis, ensuring that every code file you review is not only correct but also well-structured and well-designed.

Guidelines

– Prioritize your corrections and improvements, listing the most critical ones at the top and the less critical ones at the bottom.

– Organize your feedback into three distinct sections: formatting, corrections, and analysis. Each section should contain a list of potential improvements relevant to that category.

Instructions

1. Begin by reviewing the formatting of the code. Identify any issues with indentation, spacing, alignment, or overall layout, so that the code is aesthetically pleasing and easy to read.

2. Next, focus on the correctness of the code. Check for any coding errors or typos, and ensure that the code is syntactically correct and functional.

3. Finally, conduct a higher-level analysis of the code. Look for ways to improve error handling and address corner cases, and to make the code more robust, efficient, and maintainable.
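Stébé’s approach of storing personas as Markdown files can be approximated in a few lines. The sketch below assumes Python, a persona file on disk (the name `code-reviewer.md` is hypothetical), and the common `role`/`content` chat message format. It shows one plausible way to turn such a file into a system prompt. It does not reflect how Gladdis itself is implemented.

```python
import tempfile
from pathlib import Path
from typing import Dict, List

Message = Dict[str, str]


def load_persona(path: Path) -> str:
    """Read a Markdown persona file and return its text as a system prompt."""
    return path.read_text(encoding="utf-8").strip()


def review_request(persona: str, filename: str, source: str) -> List[Message]:
    """Pair the persona (system role) with the file to review (user role)."""
    return [
        {"role": "system", "content": persona},
        {"role": "user", "content": f"Please review `{filename}`:\n\n{source}"},
    ]


# Demo: write a tiny persona file to a temporary directory, then load it.
with tempfile.TemporaryDirectory() as tmp:
    persona_file = Path(tmp) / "code-reviewer.md"
    persona_file.write_text("# Directives\nYou are a code reviewing AI.\n", encoding="utf-8")
    messages = review_request(load_persona(persona_file), "utils.py", "def add(a, b): return a+b")

print(messages[0]["content"])
```

Keeping each persona in its own file means a reviewer, a brainstormer, and per-project assistants can share the same request code and differ only in their baseline prompt.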

Prompt engineering is as much an art as a science. Getting the output you want takes a healthy amount of experimentation and trial and error. Because of how natural language processing (NLP) technology works, there is no one-size-fits-all way to get what you need from LLMs. It is like talking to a person: your word choice and the trade-offs you make between clarity, complexity, and brevity all affect how well your needs are understood.

What’s the Future of Generative AI in Software Development?

With the rise of Gen AI tools, we’ve begun to see claims that programming skills as we know them will soon be obsolete. In this view, AI will build your entire app from scratch, and it won’t matter whether you have the coding chops to pull it off yourself.

Lysenko is not so sure, at least not in the near term. “Generative AI cannot write an app for you,” he says. “It struggles with anything that’s primarily visual, like designing a user interface. For example, no generative AI tool I’ve found has been able to design a screen that aligns with an app’s existing brand guidelines.”

That’s not for lack of effort. v0, from cloud platform Vercel, has recently emerged as one of the most sophisticated tools for AI-generated UIs, but it is still limited to React code built with shadcn/ui components. Its output may be helpful for early prototyping, but a skilled UI developer would still need to apply custom brand guidelines. The technology apparently needs to mature quite a bit more before it can truly compete with human expertise.

Lysenko does see the development of simple applications becoming increasingly commoditized, however, and he is concerned about how this may affect his work over the long term. “Clients, for the most part, are no longer looking for people who code,” he says. “They’re looking for people who understand their problems and use code to solve them.”

That is a subtle but distinct shift for developers, whose roles are becoming more product-oriented over time. They are increasingly expected to contribute to business objectives beyond merely wiring up services and fixing bugs. Lysenko acknowledges the challenge this presents for some. He prefers to see generative AI as just another tool in his kit, one that can give him an edge over competitors who aren’t keeping up with the latest trends.

Overall, both the most common use cases and the technology’s biggest shortcomings point to an enduring need for experts to vet everything AI generates. If you don’t know what the final result should look like, you have no frame of reference for judging whether the AI’s solution is appropriate.

For this reason, Stébé doesn’t see AI replacing his role as a tech lead anytime soon. He is less sure what it means for early-career developers: “It does have the potential to replace junior developers in some instances, which worries me. Where will the next generation of senior engineers come from?”

Regardless, now that the Pandora’s box of LLMs has been opened, it seems highly unlikely that software development will ever turn its back on artificial intelligence. Forward-thinking organizations would be wise to help their teams upskill with this new class of tools to improve developer productivity. They should also educate all stakeholders on the security risks of inviting AI into the daily workflow. Ultimately, the technology is only as powerful as the people who wield it.

The editorial team of the Toptal Engineering Blog extends its gratitude to Scott Fennell for reviewing the technical content presented in this article.
