
Many machine learning (ML) models typically focus on learning one task at a time. For example, language models predict the probability of a next word given a context of past words, and object detection models identify the object(s) that are present in an image. However, there may be instances when learning from many related tasks at the same time would lead to better modeling performance. This is addressed in the domain of multi-task learning, a subfield of ML in which multiple objectives are trained within the same model at the same time.

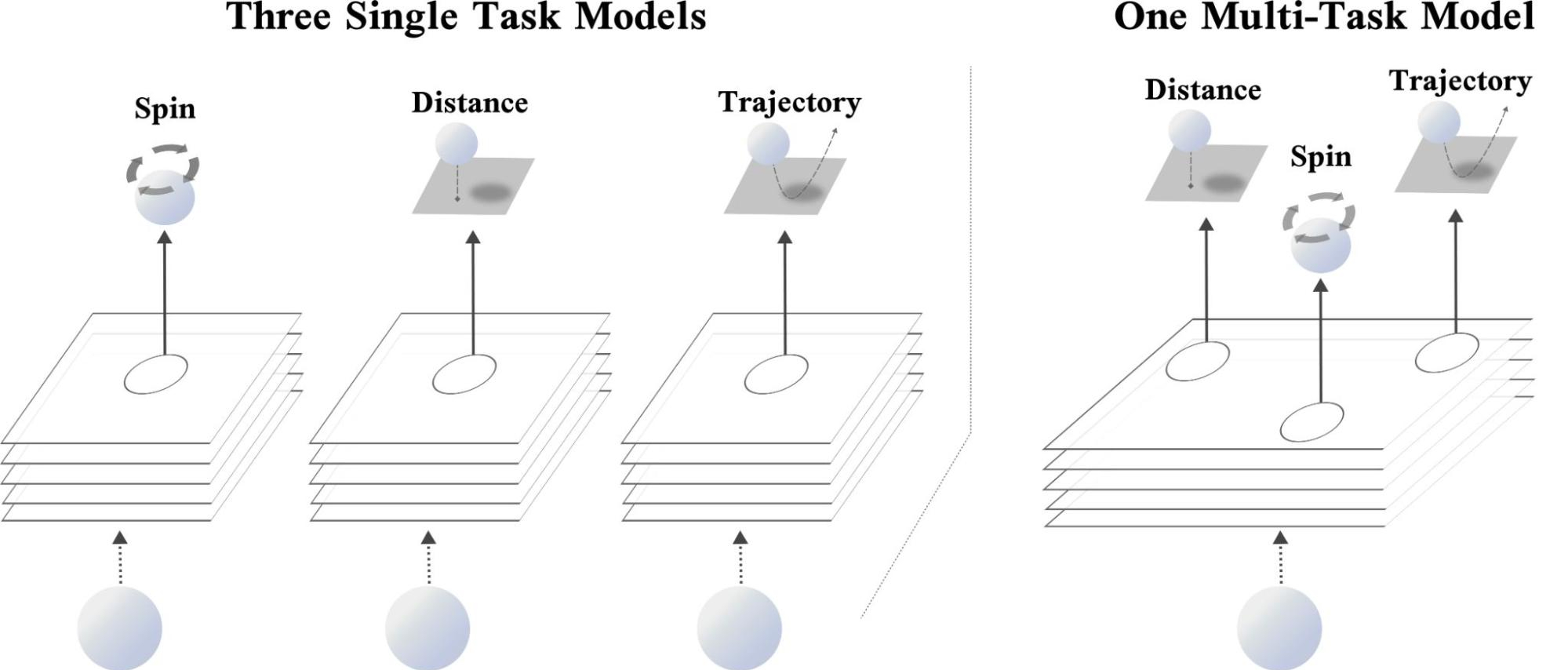

Consider a real-world example: the game of ping-pong. When playing ping-pong, it’s often advantageous to judge the distance, spin, and imminent trajectory of the ping-pong ball to adjust your body and line up a swing. While each of these tasks is unique (predicting the spin of a ping-pong ball is fundamentally distinct from predicting its location), improving your reasoning about the location and spin of the ball will likely help you better predict its trajectory, and vice versa. By analogy, within the realm of deep learning, training a model to predict three related tasks (i.e., the location, spin, and trajectory of a ping-pong ball) may result in improved performance over a model that only predicts a single objective.

In “Efficiently Identifying Task Groupings in Multi-Task Learning”, a spotlight presentation at NeurIPS 2021, we describe a method called Task Affinity Groupings (TAG) that determines which tasks should be trained together in multi-task neural networks. Our approach attempts to divide a set of tasks into smaller subsets such that the performance across all tasks is maximized. To accomplish this goal, it trains all tasks together in a single multi-task model and measures the degree to which one task’s gradient update on the model’s parameters would affect the loss of the other tasks in the network. We denote this quantity as inter-task affinity. Our experimental findings indicate that selecting groups of tasks that maximize inter-task affinity correlates strongly with overall model performance.

Which Tasks Should Train Together?

In the ideal case, a multi-task learning model will apply the information it learns during training on one task to decrease the loss on other tasks included in training the network. This transfer of information leads to a single model that can not only make multiple predictions, but may also exhibit improved accuracy for those predictions when compared with the performance of training a different model for each task. On the other hand, training a single model on many tasks may lead to competition for model capacity and severely degrade performance. This latter scenario often occurs when tasks are unrelated. Returning to our ping-pong analogy, imagine trying to predict the location, spin, and trajectory of the ping-pong ball while simultaneously recounting the Fibonacci sequence. Not a fun prospect, and most likely detrimental to your progression as a ping-pong player.

One direct approach to select the subset of tasks on which a model should train is to perform an exhaustive search over all possible combinations of multi-task networks for a set of tasks. However, the cost associated with this search can be prohibitive, especially when there are a large number of tasks, because the number of possible combinations increases exponentially with respect to the number of tasks in the set. This is further complicated by the fact that the set of tasks to which a model is applied may change throughout its lifetime. As tasks are added to or dropped from the set of all tasks, this costly analysis would need to be repeated to determine new groupings. Moreover, as the scale and complexity of models continue to increase, even approximate task grouping algorithms that evaluate only a subset of possible multi-task networks may become prohibitively costly and time-consuming to evaluate.
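To make the growth concrete, here is a small illustrative sketch (not taken from the paper) that counts two quantities for a set of `n` tasks: the number of distinct candidate networks, meaning non-empty subsets of tasks, and the number of ways to split the tasks into disjoint groups (the Bell number). The function names are hypothetical and chosen only for this example.

```python
def candidate_networks(n):
    """Number of non-empty task subsets, i.e. distinct multi-task networks."""
    return 2 ** n - 1


def bell_number(n):
    """Number of ways to partition n tasks into disjoint groups.

    Computed with the Bell triangle: each new row starts with the last
    entry of the previous row, and every following entry adds the entry
    above-left to the entry on its left.
    """
    row = [1]
    for _ in range(n - 1):
        new_row = [row[-1]]
        for value in row:
            new_row.append(new_row[-1] + value)
        row = new_row
    return row[-1]


for n in (3, 5, 10):
    print(n, candidate_networks(n), bell_number(n))
# 3 7 5
# 5 31 52
# 10 1023 115975
```

Even at ten tasks, training and evaluating every candidate network, let alone every grouping, is already out of reach for most budgets.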

Building Task Affinity Groupings

In examining this challenge, we drew inspiration from meta-learning, a domain of machine learning that trains a neural network that can be quickly adapted to a new and previously unseen task. One of the classic meta-learning algorithms, MAML, applies a gradient update to the model’s parameters for a collection of tasks and then updates its original set of parameters to minimize the loss for a subset of tasks in that collection, computed at the updated parameter values. Using this method, MAML trains the model to learn representations that minimize the loss not for its current set of weights, but rather for the weights after one or more steps of training. As a result, MAML trains a model’s parameters to have the capacity to quickly adapt to a previously unseen task because it optimizes for the future, not the present.

TAG employs a similar mechanism to gain insight into the training dynamics of multi-task neural networks. In particular, it updates the model’s parameters with respect only to a single task, looks at how this change would affect the other tasks in the multi-task neural network, and then undoes this update. This process is then repeated for every other task to gather information on how each task in the network would interact with any other task. Training then continues as normal by updating the model’s shared parameters with respect to every task in the network.
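The lookahead procedure described above can be sketched on a toy problem. The code below is a minimal illustration under stated assumptions, not the authors' implementation: the "model" is just a shared parameter vector, each task's loss is a hypothetical quadratic pulling the parameters toward a task-specific target, and the effect of a lookahead step is scored as the relative change in another task's loss, `1 - loss_after / loss_before`. That normalization is an assumption of this sketch. A positive score means the step on task `i` would have lowered the loss of task `j`.

```python
def loss(theta, target):
    """Toy per-task loss: half the squared distance to the task's target."""
    return 0.5 * sum((p - t) ** 2 for p, t in zip(theta, target))


def grad(theta, target):
    """Gradient of the toy loss with respect to the shared parameters."""
    return [p - t for p, t in zip(theta, target)]


def inter_task_affinity(theta, targets, lr):
    """Score how a gradient step on task i would change the loss of task j.

    Assumes every task's current loss is strictly positive.
    """
    n = len(targets)
    base = [loss(theta, t) for t in targets]
    affinity = [[0.0] * n for _ in range(n)]
    for i in range(n):
        step = grad(theta, targets[i])
        # Lookahead update for task i only. Building a new list leaves
        # theta untouched, which plays the role of undoing the update.
        lookahead = [p - lr * g for p, g in zip(theta, step)]
        for j in range(n):
            if j != i:
                affinity[i][j] = 1.0 - loss(lookahead, targets[j]) / base[j]
    return affinity


def train_step(theta, targets, lr):
    """Record affinities, then apply the usual update over all tasks."""
    affinity = inter_task_affinity(theta, targets, lr)
    total = [sum(parts) for parts in zip(*(grad(theta, t) for t in targets))]
    new_theta = [p - lr * g for p, g in zip(theta, total)]
    return new_theta, affinity


# Tasks 0 and 1 pull the parameters the same way; task 2 pulls the opposite way.
THETA = [0.0, 0.0]
TARGETS = [[1.0, 0.0], [1.0, 0.2], [-1.0, 0.0]]
NEW_THETA, Z = train_step(THETA, TARGETS, lr=0.1)
print(Z[0][1] > 0, Z[0][2] < 0)  # True True
```

In a real network the lookahead would touch only the shared parameters and be computed on a training batch, but the measure-then-discard structure is the same.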

Collecting these statistics, and looking at their dynamics throughout training, reveals that certain tasks consistently exhibit beneficial relationships, while some are antagonistic towards each other. A network selection algorithm can leverage this data to group together tasks that maximize inter-task affinity, subject to a practitioner’s choice of how many multi-task networks can be used during inference.
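As a rough illustration of such a selection step, the sketch below brute-forces the choice of at most `max_networks` task groups from a matrix of averaged affinities. It makes several simplifying assumptions that are not specified in this post: a task's score within a group is the mean affinity it receives from the other tasks in that group, a task trained alone scores 0.0, each task is served by whichever selected group scores it highest, and the affinity values themselves are made up for the example.

```python
from itertools import combinations


def task_score(task, group, affinity):
    """Mean affinity onto `task` from the other tasks in `group`."""
    others = [i for i in group if i != task]
    if not others:
        return 0.0  # assumption: training alone neither helps nor hurts
    return sum(affinity[i][task] for i in others) / len(others)


def select_groups(affinity, max_networks):
    """Exhaustively pick up to `max_networks` groups that cover every task."""
    n = len(affinity)
    tasks = range(n)
    candidates = [g for size in range(1, n + 1)
                  for g in combinations(tasks, size)]
    best_total, best_groups = float("-inf"), None
    for k in range(1, max_networks + 1):
        for groups in combinations(candidates, k):
            if len(set().union(*groups)) < n:
                continue  # every task must appear in at least one network
            # Each task is served by the selected group that scores it best.
            total = sum(
                max(task_score(t, g, affinity) for g in groups if t in g)
                for t in tasks
            )
            if total > best_total:
                best_total, best_groups = total, groups
    return best_groups, best_total


# Hypothetical averaged affinities: tasks 0 and 1 help each other, task 2 conflicts.
AFFINITY = [
    [0.00, 0.18, -0.21],
    [0.17, 0.00, -0.20],
    [-0.19, -0.20, 0.00],
]
GROUPS, TOTAL = select_groups(AFFINITY, max_networks=2)
print(sorted(GROUPS))  # [(0, 1), (2,)]
```

Exhaustive enumeration is only feasible for a handful of tasks; it is used here because it keeps the scoring rule easy to read, and the key point is that scoring a grouping from the affinity matrix requires no additional training.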

Results

Our experimental findings indicate that TAG can select very strong task groupings. On the CelebA and Taskonomy datasets, TAG is competitive with the prior state-of-the-art, while running 32x and 11.5x faster, respectively. On the Taskonomy dataset, this speedup translates to 2,008 fewer Tesla V100 GPU hours to find task groupings.

Conclusion

TAG is an efficient method to determine which tasks should train together in a single training run. The method looks at how tasks interact through training, notably the effect that updating the model’s parameters when training on one task would have on the loss values of the other tasks in the network. We find that selecting groups of tasks to maximize this score correlates strongly with model performance.

Acknowledgements

We would like to thank Ehsan Amid, Zhe Zhao, Tianhe Yu, Rohan Anil, and Chelsea Finn for their fundamental contributions to this work. We also recognize Tom Small for designing the animation, and Google Research as a whole for fostering a collaborative and uplifting research environment.
