
In the first article of this series, we discussed communal computing devices and the problems they create, or, more precisely, the problems that arise because we don’t really understand what “communal” means. Communal devices are intended to be used by groups of people in homes and offices. Examples include popular home assistants and smart displays like the Amazon Echo, Google Home, Apple HomePod, and many others. If we don’t create these devices with communities of people in mind, we will continue to build the wrong ones.

Ever since the concept of a “user” was invented (which was probably later than you think), we’ve assumed that devices are “owned” by a single user. Someone buys the device and sets up the account; it’s their device, their account. When we build shared devices on a user model, that model quickly runs into limitations. What happens when you want your home assistant to play music for a cocktail party, but your preferences have been skewed by your children’s listening habits? We, as users, have certain expectations for what a device should do. But we, as technologists, have typically ignored our own expectations when designing and building these devices.

This expectation isn’t a new one, either. The telephone in the kitchen was for everyone’s use. After the release of the iPad in 2010, Craig Hockenberry discussed the great value of communal computing but also its concerns:

“When you pass it around, you’re giving everyone who touches it the opportunity to mess with your private life, whether intentionally or not. That makes me uneasy.”

Communal computing requires a new mindset that takes users’ expectations into account. If devices aren’t designed with those expectations in mind, they’re destined for the landfill. Users will eventually experience “weirdness” and “annoyance” that grows into distrust of the device itself. As technologists, we often call these weirdnesses “edge cases.” That’s precisely where we’re wrong: they’re not edge cases; they’re at the core of how people want to use these devices.

In the first article, we listed five core questions we should ask about communal devices:

- Identity: Do we know all of the people who are using the device?

- Privacy: Are we exposing (or hiding) the right content for all of the people with access?

- Security: Are we allowing all of the people using the device to do or see what they should, and are we protecting the content from people who shouldn’t?

- Experience: What is the contextually appropriate display or next action?

- Ownership: Who owns all of the data and services attached to the device that multiple people are using?

In this article, we’ll take a deeper look at these questions, to see how the problems manifest and how to understand them.

Identity

All of the problems we’ve listed start with the idea that there is one registered and known person who should use the device. That model doesn’t fit reality: the identity of a communal device isn’t a single person, but everyone who can interact with it. This could be anyone able to tap the screen, make a voice command, use a remote, or simply be sensed by it. To understand this communal model and the problems it poses, start with the person who buys and sets up the device. It is associated with that individual’s account, like a personal Amazon account with its order history and shopping list. Then it gets difficult. Who doesn’t, can’t, or shouldn’t have full access to an Amazon account? Do you want everyone who comes into your house to be able to add something to your shopping list?

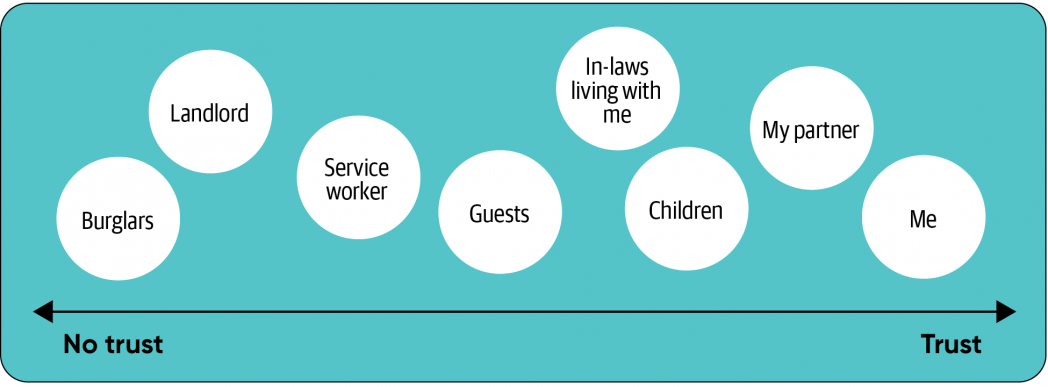

If you think about the spectrum of people who could be in your house, they range from people whom you trust, to people who you don’t really trust but who should be there, to those who you shouldn’t trust at all.

In addition to individuals, we need to consider the groups that each person could be part of. These group memberships are called “pseudo-identities”; they are facets of a person’s full identity. They are usually defined by how the person associates themself with a group of other people. My life at work, at home, in a high school friends group, and as a sports fan shows different parts of my identity. When I’m with other people who share the same pseudo-identity, we can share information. When there are people from one group in front of a device, I may avoid showing content that is associated with another group (or another personal pseudo-identity). This may sound abstract, but it isn’t: if you’re with friends in a sports bar, you probably want notifications about the teams you follow. You probably don’t want news about work, unless it’s an emergency.

There are important reasons why we show a particular facet of our identity in a particular context. When designing an experience, you need to consider the identity context and where the experience will take place. Most recently this has come up with working from home. Many people talk about “bringing your whole self to work,” but don’t realize that “your whole self” isn’t always appropriate. Remote work changes when and where I should interact with work. For a smart screen in my kitchen, it is appropriate to have content that is related to my home and family. Is it appropriate to have all of my work notifications and meetings there? Could it be a problem for children to be able to join my work calls? What does my IT group require as far as security of work devices versus personal home devices?

With these devices we may need to switch to a different pseudo-identity to get something done. I may need to be reminded of a work meeting. When I get a notification from a close friend, I need to decide whether it is appropriate to respond based on the other people around me.

The pandemic has broken down the barriers between home and work. The natural context switch of being at work and worrying about work things, then going home to worry about home things, no longer happens. People need to make a conscious effort to “turn off work” and to change the context. Just because it is the middle of the workday doesn’t always mean I want to be bothered by work. I may want to change contexts to take a break. Such context shifts add nuance to the way the current pseudo-identity should be considered, and to the overarching context you need to detect.

Next, we need to consider identities as groups that I belong to. I’m part of my family, and my family would potentially want to talk with other families. I live in a house that is on my street alongside other neighbors. I’m part of an organization that I identify as my work. These are all pseudo-identities we should consider, based on where the device is placed and in relation to other equally important identities.

The crux of the problem with communal devices is the multiple identities that are or may be using the device. This requires a greater understanding of who is using the device, where, and why. We need to consider the types of groups that are part of the home and office.
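To make pseudo-identities concrete, here is a minimal Python sketch. It assumes the device already knows (somehow) who is in the room and which groups each person belongs to; the `MEMBERSHIPS` table, the group labels, and the `Notification` and `visible_notifications` names are hypothetical, not part of any real assistant’s API. A notification is shown only when everyone present shares the pseudo-identity it belongs to, with an override for emergencies.

```python
from dataclasses import dataclass

# Hypothetical membership map: person -> set of pseudo-identities (groups).
MEMBERSHIPS: dict[str, set[str]] = {
    "alex": {"family", "work", "sports_fans"},
    "sam": {"family", "sports_fans"},
    "guest": {"sports_fans"},
}


@dataclass(frozen=True)
class Notification:
    text: str
    audience: str           # the pseudo-identity this content belongs to
    emergency: bool = False


def shared_groups(people_present: list[str]) -> set[str]:
    """Pseudo-identities that everyone in the room has in common."""
    groups = [MEMBERSHIPS.get(p, set()) for p in people_present]
    if not groups:
        return set()
    # An unrecognized person contributes an empty set, so nothing is shared.
    return set.intersection(*groups)


def visible_notifications(notes: list[Notification],
                          people_present: list[str]) -> list[Notification]:
    """Show content only to a room that shares its pseudo-identity."""
    common = shared_groups(people_present)
    return [n for n in notes if n.emergency or n.audience in common]
```

With Alex and a guest in the room, a sports notification and an emergency work alert are shown, while a routine work update is held back. The default is deliberately conservative: an unknown person hides everything except emergencies, and whether that is the right default is exactly the kind of norm question this article raises.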

Privacy

As we consider the identities of all people with access to the device, and the identity of the place the device is part of, we start to consider what privacy expectations people may have, given the context in which the device is used.

Privacy is hard to understand. The framework I’ve found most helpful is Contextual Integrity, which was introduced by Helen Nissenbaum in the book Privacy in Context. Contextual Integrity describes four key aspects of privacy:

- Privacy is provided by appropriate flows of information.

- Appropriate information flows are those that conform to contextual information norms.

- Contextual informational norms refer to five independent parameters: data subject, sender, recipient, information type, and transmission principle.

- Conceptions of privacy are based on ethical concerns that evolve over time.

What is most important about Contextual Integrity is that privacy is not about hiding information away from the public but about giving people a way to control the flow of their own information. The context in which information is shared determines what is appropriate.

A flow either feels appropriate, or not, based on key characteristics of the information (from Wikipedia):

- The data subject: Who or what is this about?

- The sender of the data: Who is sending it?

- The recipient of the data: Who will eventually see or get the data?

- The information type: What type of information is this (e.g. a photo, text)?

- The transmission principle: Under what set of norms is this being shared (e.g. school, medical, personal communication)?
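One way to see how the five parameters work together is to treat each information flow as a tuple and compare it against the flows a context considers appropriate. The Python sketch below is deliberately simplified and assumes norms can be written down as explicit allowed tuples; Nissenbaum’s framework prescribes no such encoding, and the `Flow`, `NORMS`, `is_appropriate`, and `changed_parameters` names and example norms are hypothetical. It mirrors the photo example that follows: changing only the recipient turns an acceptable flow into a violation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    """One information flow, described by Contextual Integrity's five parameters."""
    subject: str                 # who or what the information is about
    sender: str                  # who is sending it
    recipient: str               # who will eventually see or get it
    info_type: str               # e.g. "photo", "text"
    transmission_principle: str  # norms it is shared under, e.g. "personal communication"


PARAMETERS = ("subject", "sender", "recipient", "info_type", "transmission_principle")

# Hypothetical norms for one person's photos: the flows they consider appropriate.
NORMS: frozenset[Flow] = frozenset({
    Flow("me", "me", "friend", "photo", "personal communication"),
    Flow("me", "me", "family", "photo", "personal communication"),
})


def is_appropriate(flow: Flow, norms: frozenset[Flow] = NORMS) -> bool:
    """Crudest possible encoding: a flow must match a norm on all five parameters."""
    return flow in norms


def changed_parameters(a: Flow, b: Flow) -> list[str]:
    """Names of the parameters that differ between two flows."""
    return [p for p in PARAMETERS if getattr(a, p) != getattr(b, p)]
```

Comparing a photo sent to a friend with the same photo posted to a company intranet, `changed_parameters` reports only `"recipient"`, yet `is_appropriate` accepts the first and rejects the second. Real norms are fuzzier than exact matches; the sketch only shows that one parameter is enough to break a flow.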

We rarely acknowledge how a subtle change in one of these parameters could be a violation of privacy. It may be completely acceptable for my friend to have a weird photo of me, but once it gets posted on a company intranet site it violates how I want information (a photo) to flow. The recipient of the data has changed to something I no longer find acceptable. But I might not care whether a complete stranger (like a burglar) sees the photo, as long as it never gets back to someone I know.

For communal use cases, the sender or receiver of information is often a group. There may be multiple people in the room during a video call, not just the person you are calling. People can walk in and out. I might be happy with some people in my home seeing a particular photo, but find it embarrassing if it is shown to guests at a cocktail party.

We must also consider what happens when other people’s content is shown to those who shouldn’t see it. This content could be photos or notifications from people outside the communal space that could be seen by anyone in front of the device. Smartphones can hide message contents when you aren’t near your phone for this exact reason.

The services themselves can expand the “receivers” of information in ways that create uncomfortable situations. In Privacy in Context, Nissenbaum talks about the privacy implications of Google Street View placing photos of people’s houses on Google Maps. When a house was only visible to people who walked down the street, that was one thing; but when anyone in the world can access a picture of a house, the parameters change in a way that causes concern. More recently, IBM used Flickr photos that were shared under a Creative Commons license to train facial recognition algorithms. While this didn’t require any change to the service’s terms, it was a surprise to people and may be in violation of the Creative Commons license. In the end, IBM took the dataset down.

Privacy considerations for communal devices should focus on who is gaining access to information and whether that access is appropriate based on people’s expectations. Without a framework like Contextual Integrity, we will be stuck talking about generalized rules for data sharing, and there will always be edge cases that violate someone’s privacy.

A note about children

Children make identity and privacy especially tricky. About 40% of all households have a child. Children shouldn’t be an afterthought. If you aren’t compliant with local laws, you can get in a lot of trouble. In 2019, YouTube had to settle with the FTC for a $170 million fine for selling ads targeting children. It gets complicated because the “age of consent” depends on the region as well: COPPA in the US covers people under 13 years old, CCPA in California covers people under 16, and GDPR overall covers people under 16, but each member state can set its own age. The moment you acknowledge that children are using your platforms, you need to accommodate them.
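The patchwork of age thresholds is easier to reason about as data than as conditionals scattered through a codebase. This minimal Python sketch encodes only the thresholds named above (COPPA: under 13; CCPA: under 16; GDPR: under 16 by default, adjustable per member state, which GDPR Article 8 limits to no lower than 13). The region keys, the `member_state_overrides` table, and the function names are hypothetical placeholders, and real compliance involves much more than an age comparison.

```python
# Age below which child-specific handling (e.g. parental consent) applies.
# Region keys are hypothetical labels, not an official taxonomy.
DEFAULT_THRESHOLDS: dict[str, int] = {
    "US": 13,     # COPPA: children under 13
    "US-CA": 16,  # CCPA: consumers under 16
    "EU": 16,     # GDPR default: under 16
}

# Hypothetical per-member-state overrides, keyed like "EU-XX".
# GDPR Article 8 lets member states lower the age, but not below 13.
member_state_overrides: dict[str, int] = {}


def consent_threshold(region: str) -> int:
    """Return the age threshold for a region, applying member-state overrides."""
    if region in member_state_overrides:
        age = member_state_overrides[region]
        if not 13 <= age <= 16:
            raise ValueError(f"override for {region} must be between 13 and 16")
        return age
    if region.startswith("EU-"):
        return DEFAULT_THRESHOLDS["EU"]
    if region not in DEFAULT_THRESHOLDS:
        raise KeyError(f"no threshold configured for {region}")
    return DEFAULT_THRESHOLDS[region]


def needs_child_handling(age: int, region: str) -> bool:
    """True if a user of this age falls under the region's child threshold."""
    return age < consent_threshold(region)
```

For a hypothetical member state that lowered its age, you would set `member_state_overrides["EU-XX"] = 13`; unknown regions fail loudly rather than silently treating children as adults.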

For communal devices, there are many use cases for children. Once they realize they can play whatever music they want (including tracks of fart sounds) on a shared device, they will do it. Children focus on exploration over the task and will end up discovering far more about the device than parents might. Adjusting your practices after building a device is a recipe for failure. You will find that the paradigms you chose for other users won’t align with the expectations for children, and modifying your software to accommodate children is difficult or impossible. It’s important to account for children from the beginning.

Security

To get to a home assistant, you usually have to pass through a home’s outer door. There is usually a physical barrier in the form of a lock. There may be alarm systems. Finally, there are social norms: you don’t just walk into someone else’s house without knocking or being invited.

Once you are past all of these locks, alarms, and norms, anyone can access the communal device. Few things within a home are restricted, perhaps only a safe with important documents. When a communal device requires authentication, it is usually subverted in some way for convenience: for example, a password might be taped to it, or a password may never have been set.

The concept of Zero Trust Networks speaks to this problem. It comes down to a key question: is the risk associated with an action greater than the trust we have that the person performing the action is who they say they are?

Passwords, passcodes, or mobile device authentication become nuisances; these supposed secrets are frequently shared between everyone who has access to the device. Passwords might be written down for people who can’t remember them, making them visible to less trusted people visiting your household. Have we not learned anything since the movie WarGames?

When we consider the risk associated with an action, we need to understand its privacy implications. Would the action expose someone’s information without their knowledge? Would it allow a person to pretend to be someone else? Could another party easily tell that the device was being used by an imposter?

There is a tradeoff between trust and risk. The device needs to calculate whether we know who the person is and whether the person wants the information to be shown. That needs to be weighed against the potential risk or harm if an inappropriate person is in front of the device.
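The Zero Trust question above can be written as a single comparison. This Python sketch assumes two numbers the device would somehow have to estimate: `identity_confidence`, how sure we are that the person in front of the device is who we think they are, and `risk`, a score for how much harm would follow if we were wrong. Both scales, the `Request` type, and `allow_action` are hypothetical; the point is the shape of the decision, not the numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    action: str
    identity_confidence: float     # 0..1, hypothetical estimate of who is asking
    risk: float                    # 0..1, harm if the wrong person is in front of the device
    subject_consents: bool = True  # does the person the information is about want it shown?


def allow_action(req: Request) -> bool:
    """Zero Trust-style check: allow only when trust is at least as large as risk."""
    if not (0.0 <= req.identity_confidence <= 1.0 and 0.0 <= req.risk <= 1.0):
        raise ValueError("scores must be between 0 and 1")
    if not req.subject_consents:
        # No amount of trust in the requester overrides the subject's wishes.
        return False
    return req.identity_confidence >= req.risk
```

Under this shape, reading out the weather passes even for an unrecognized visitor, ordering an item needs strong confidence, and a missing consent signal blocks the action regardless of how well the requester is recognized.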

A few examples of this tradeoff:

| Feature | Risk and trust calculation | Possible issues |
| --- | --- | --- |
| Showing a photo when the device detects someone in the room | Photo content sensitivity, who is in the room | Showing an inappropriate photo to a complete stranger |
| Starting a video call | The person’s account being used for the call, the actual person starting the call | When the other side picks up, it may not be who they thought it would be |
| Playing a personal song playlist | Personal recommendations being affected | Incorrect future recommendations |
| Automatically ordering something based on a voice command | Convenience of ordering, approval of the shopping account’s owner | Shipping an item that shouldn’t have been ordered |
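The rows of this table can be turned into a per-feature policy that decides how much friction to add, in line with the idea of removing friction in some cases and increasing it in others. In this Python sketch the risk level assigned to each feature, the three friction levels, and the `required_friction` function are hypothetical choices for illustration, not recommendations.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Friction(IntEnum):
    NONE = 0          # just do it
    CONFIRM = 1       # ask "are you sure?" aloud or on screen
    AUTHENTICATE = 2  # require the account owner to verify, e.g. on their phone


# Hypothetical risk levels for the features in the table.
FEATURE_RISK: dict[str, Risk] = {
    "play_personal_playlist": Risk.LOW,
    "show_photo": Risk.MEDIUM,
    "start_video_call": Risk.MEDIUM,
    "voice_order": Risk.HIGH,
}


def required_friction(feature: str, speaker_recognized: bool) -> Friction:
    """More risk, and less certainty about who is speaking, means more friction."""
    risk = FEATURE_RISK.get(feature, Risk.HIGH)  # unknown features default to HIGH
    if risk is Risk.LOW:
        return Friction.NONE
    if risk is Risk.MEDIUM:
        return Friction.NONE if speaker_recognized else Friction.CONFIRM
    return Friction.CONFIRM if speaker_recognized else Friction.AUTHENTICATE
```

Defaulting unknown features to the highest risk means a newly added capability starts with the most friction, and the household or designer has to decide deliberately to relax it.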

This gets even trickier when people no longer in the home can access the devices remotely. There have been cases of harassment, intimidation, and domestic abuse by people whose access should have been revoked: for example, an ex-partner turning off the heating system. When should someone be able to access communal devices remotely? When should their access be controllable from the devices themselves? How should people be reminded to update their access control lists? How does basic security maintenance happen inside a communal space?
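These questions suggest an access control list in which remote access is a separate permission that can be revoked from the device itself, and in which every entry carries a review date so the household is prompted to revisit it. The Python sketch below is a minimal model under those assumptions; `AccessEntry`, `CommunalACL`, `review_due`, and the 90-day interval are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)  # hypothetical: how often to re-confirm access


@dataclass
class AccessEntry:
    person: str
    local: bool = True    # may use the device while physically present
    remote: bool = False  # may control the device from outside the home
    last_reviewed: date = field(default_factory=date.today)


class CommunalACL:
    def __init__(self) -> None:
        self.entries: dict[str, AccessEntry] = {}

    def grant(self, entry: AccessEntry) -> None:
        self.entries[entry.person] = entry

    def revoke(self, person: str) -> None:
        """Remove someone entirely, e.g. when they leave the household."""
        self.entries.pop(person, None)

    def can_access(self, person: str, remote: bool) -> bool:
        entry = self.entries.get(person)
        if entry is None:
            return False
        return entry.remote if remote else entry.local

    def review_due(self, today: date) -> list[str]:
        """People whose access has not been re-confirmed within the interval."""
        return sorted(p for p, e in self.entries.items()
                      if today - e.last_reviewed >= REVIEW_INTERVAL)
```

Keeping `remote` separate from `local` means someone who moves out can lose remote control immediately, before the household has settled anything else, and `review_due` gives the device something concrete to remind people about.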

See how much work this takes in a recent account of pro bono security work for a harassed mother and her son, or in the story of a YouTuber who was blackmailed, surveilled, and harassed through her smart home. Apple even has a manual for this type of situation.

At home, where there’s no corporate IT group to create policies and automation to keep things secure, it’s next to impossible to manage all of these security issues. Even some corporations have trouble with it. We need to figure out how users will maintain and configure a communal device over time. Configuration for devices in the home and office can be fraught with many different kinds of needs that change over time.

For example, what happens when someone leaves the home and is no longer part of it? We will need to remove their access and may even find it necessary to block them from certain services. This is highlighted by the cases of people being harassed by spouses who still control the communal devices. Ongoing maintenance of a particular device could also be triggered by a change in the group’s needs. A home device may be used just to play music or check the weather at first. But when a new baby comes home, being able to make video calls with close relatives may become a higher priority.

End users are usually very bad at changing configuration after it is set. They may not even know that they can configure something in the first place. This is why people have made a business out of setting up home stereo and video systems. People just don’t understand the technologies they are putting in their houses. Does that mean we need some kind of handyperson who does home device setup and management? When more complicated routines are required to meet the household’s needs, how does someone make changes without writing code, if they are allowed to at all?

Communal devices need new paradigms of security that go beyond the standard login. The world inside a home is protected by a barrier like a locked door; the capabilities of communal devices should respect that. This means both removing friction in some cases and increasing it in others.

A note about biometrics

(Source: Google Face Match video, https://youtu.be/ODy_xJHW6CI?t=26)

Biometric authentication through voice and face recognition can help us get a better understanding of who is using a device. Examples of biometric authentication include FaceID for the iPhone and voice profiles for Amazon Alexa. There is a push for regulation of facial recognition technologies, but opt-in use for authentication purposes tends to be carved out.

However, biometrics aren’t without problems. In addition to issues with skin tone, gender bias, and local accents, biometrics assume that everyone is willing to have a biometric profile on the device, and that they would be legally allowed to (for example, children may not be allowed to consent to a biometric profile). They also assume the technology is secure. Google Face Match makes it very clear it is only a technology for personalization, rather than authentication. I can only guess that Google has legalese to avoid liability when an unauthorized person spoofs someone’s face, say by taking a photo off the wall and showing it to the device.

What do we mean by “personalization”? When you walk into a room and Face Match identifies your face, the Google Home Hub dings, shows your face icon, then shows your calendar (if it is connected) and a feed of personalized cards. Apple’s FaceID uses many levels of presentation attack detection (also known as “anti-spoofing”): it verifies your eyes are open and you are looking at the screen, and it uses a depth sensor to make sure it isn’t “seeing” a photo. The phone can then show hidden notification content or open to the home screen. This measurement of trust and risk benefits from understanding who could be in front of the device. We can’t forget that the machine learning behind biometrics is not a deterministic calculation; there is always some degree of uncertainty.
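Because a biometric match is a probability rather than a yes-or-no answer, one device can use the same score differently for personalization and for authentication. This Python sketch assumes a matcher that returns a similarity score between 0 and 1 plus a separate liveness (presentation attack detection) result; the thresholds, the `Outcome` values, and `decide` are hypothetical and do not describe how Face Match or FaceID are actually configured.

```python
from enum import Enum

# Hypothetical thresholds: personalization tolerates more mistakes than authentication.
PERSONALIZE_THRESHOLD = 0.70
AUTHENTICATE_THRESHOLD = 0.98


class Outcome(Enum):
    GENERIC = "show generic content"
    PERSONALIZED = "show this person's calendar and cards"
    AUTHENTICATED = "reveal hidden notifications or unlock"


def decide(match_score: float, liveness_passed: bool, opted_in: bool) -> Outcome:
    """Map an uncertain biometric match to what the device is willing to do."""
    if not opted_in:
        # No biometric profile: treat the person like anyone else in the room.
        return Outcome.GENERIC
    if match_score >= AUTHENTICATE_THRESHOLD and liveness_passed:
        return Outcome.AUTHENTICATED
    if match_score >= PERSONALIZE_THRESHOLD:
        # A photo held up to the camera could land here, which is why this
        # tier only personalizes and never unlocks anything.
        return Outcome.PERSONALIZED
    return Outcome.GENERIC
```

The design choice is that failing the liveness check never blocks personalization outright; it only caps what the device is willing to reveal.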

Social and information norms define what we consider acceptable, who we trust, and how much. As trust goes up, we can take more risks in the way we handle information. However, it’s difficult to connect trust with risk without understanding people’s expectations. I have access to my partner’s iPhone and know the passcode. It would be a violation of a norm if I walked over and unlocked it without being asked, and doing so would lead to reduced trust between us.

As we can see, biometrics do offer some benefits but won’t be a panacea for the unique uses of communal devices. Biometrics will allow those willing to opt in to the collection of their biometric profile to gain personalized access with low friction, but they will never be usable for everyone with physical access.

Experiences

People use a communal device for short experiences (checking the weather), ambient experiences (listening to music or glancing at a photo), and joint experiences (multiple people watching a movie). The device needs to be aware of norms within the space and between the multiple people in the space. Social norms are rules by which people decide how to act in a particular context or space. In the home, there are norms about what people should and should not do. If you are a guest, you try to see whether people take their shoes off at the door; you don’t rearrange things on a bookshelf; and so on.

Most software is built to work for as many people as possible; this is called generalization. Norms stand in the way of generalization. Today’s technology isn’t good enough to adapt to every possible situation. One strategy is to simplify the software’s functionality and let the humans enforce norms. For example, when multiple people talk to an Echo at the same time, Alexa will either not understand or act on the last command. Multi-turn conversations between multiple people are still in their infancy. This is fine when there are understood norms, for example between my partner and me. But it doesn’t work so well when you and a child are both trying to shout commands.

Norms are interesting because they tend to be learned and negotiated over time, but are invisible. Experiences that are built for communal use need to be aware of these invisible norms through cues that can be detected from people’s actions and words. This gets especially tricky because a conversation between two people could include information subject to different expectations (in a Contextual Integrity sense) about how that information is used. With enough data, models can be created to “read between the lines” in both helpful and dangerous ways.

Video games already cater to multiple people’s experiences. With the Nintendo Switch or any other gaming system, multiple people can play together in a joint experience. However, the rules governing these experiences are never applied to, say, Netflix. The assumption is always that one person holds the remote. How might these experiences be improved if software could accept input from multiple sources (remote controls, voice, etc.) to build a selection of movies that is appropriate for everyone watching?
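One well-known way to combine several people’s preferences is the “least misery” strategy from group recommender systems: drop anything unsuitable for anyone present, then rank what remains by the lowest score any viewer gives it. The Python sketch below assumes each input source (remote, voice, and so on) has already been turned into per-person scores and a maximum allowed content rating; the `Movie` and `Viewer` types, the rating scale, and `pick_for_group` are hypothetical, and this is one possible aggregation, not how Netflix or any console works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Movie:
    title: str
    content_rating: int  # hypothetical scale: 0 = all ages, higher = more mature


@dataclass
class Viewer:
    name: str
    max_rating: int           # most mature content this viewer may watch
    scores: dict[str, float]  # title -> preference in 0..1, from any input source


def pick_for_group(movies: list[Movie], viewers: list[Viewer], k: int = 3) -> list[str]:
    """Least-misery aggregation: a movie is only as good as its least happy viewer."""
    if not viewers:
        return []
    allowed = [m for m in movies
               if all(m.content_rating <= v.max_rating for v in viewers)]

    def misery(m: Movie) -> float:
        # Missing preferences count as neutral (0.5), an arbitrary assumption.
        return min(v.scores.get(m.title, 0.5) for v in viewers)

    ranked = sorted(allowed, key=misery, reverse=True)
    return [m.title for m in ranked[:k]]
```

With a parent and a child watching together, a mature thriller the parent loves is filtered out, and a film both rate reasonably well beats one the parent finds dull, which is the kind of outcome a single remote cannot produce.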

Communal experience problems highlight inequalities in households. With women doing more household coordination than ever, there’s a need to rebalance these tasks within households. Most of the time, coordination tasks that involve the entire family are relegated to personal devices, usually the spouse’s mobile phone (though there is a digital divide outside the US). Without moving these experiences into a place that everyone can participate in, we will perpetuate these inequalities.

So far, technology has been great at intermediating people for coordination through systems like text messaging, social networks, and collaborative documents. But we don’t build interaction paradigms that allow multiple people to engage at the same time in their communal spaces. To do this, we need to address the fact that the norms dictating appropriate behavior are invisible and pervasive in the spaces where these technologies are deployed.

Ownership

Many of these devices are not really owned by the people who buy them. As part of the current trend toward subscription-based business models, the device won’t function if you don’t subscribe to a service. Those services have license agreements that specify what you can and cannot do (which you can read if you have a few hours to spare and can understand them).

For example, this has been an issue for fans of Amazon’s Blink camera. The home automation industry is fragmented: there are many vendors, each with its own application to control its particular devices. But most people don’t want to use different apps to control their lighting, their television, their security cameras, and their locks. Therefore, people have started to build controllers that span the different ecosystems. Doing so has caused Blink users to have their accounts suspended.

What’s even worse is that these license agreements can change whenever the company wants. Licenses are frequently modified with nothing more than a notification, after which something that was previously acceptable is now forbidden. In 2020, Wink suddenly applied a monthly service charge; if you didn’t pay, the device would stop working. Also in 2020, Sonos caused a stir by saying it was going to “recycle” (disable) old devices. The company eventually changed its policy.

The issue isn’t just what you can do with your devices; it’s also what happens to the data they create. Amazon’s Ring partnership with one in ten US police departments troubles many privacy groups because it creates a vast surveillance program. What if you don’t want to be a part of the police state? Make sure you check the right box and read your terms of service. If you’re designing a device, you need to require users to opt in to data sharing (especially as regions adopt GDPR- and CCPA-like regulation).
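Requiring opt-in mostly comes down to a default: sharing stays off until a person explicitly turns it on, and each purpose is consented to separately. This Python sketch assumes a device that keeps a per-person consent record; the `ConsentRecord` class, `may_share`, and purpose names such as `"share_with_law_enforcement"` are hypothetical. It also assumes that communal data needs consent from everyone it affects, which is one design choice among several.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentRecord:
    person: str
    # purpose -> time the person opted in; absence means "not consented"
    granted: dict[str, datetime] = field(default_factory=dict)

    def opt_in(self, purpose: str) -> None:
        self.granted[purpose] = datetime.now(timezone.utc)

    def opt_out(self, purpose: str) -> None:
        self.granted.pop(purpose, None)

    def allows(self, purpose: str) -> bool:
        return purpose in self.granted


def may_share(records: list[ConsentRecord], purpose: str) -> bool:
    """Communal data (e.g. doorbell video of everyone at home) is shared only if
    every affected person has opted in; an empty list means nobody has."""
    return bool(records) and all(r.allows(purpose) for r in records)
```

Recording the opt-in time gives the household an audit trail, and a single opt-out is enough to stop sharing again.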

While techniques like federated learning are on the horizon as a way to avoid latency issues and mass data collection, it remains to be seen whether those techniques are satisfactory for companies that collect data. Is there a benefit to both organizations and their customers in limiting or obfuscating the transmission of data away from the device?
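Federated learning keeps raw data on the device and sends only model updates to a server. The toy Python sketch below shows federated averaging for a one-parameter linear model `y = w * x`: each device takes a few gradient steps on its own data, and the server averages the resulting weights, weighted by how many examples each device holds. The data, learning rate, step and round counts, and function names are made up for illustration; production systems typically layer further protections on top of this basic loop.

```python
def local_update(w: float, data: list[tuple[float, float]],
                 lr: float = 0.01, steps: int = 20) -> float:
    """Gradient descent on mean squared error, run entirely on one device."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(w: float, devices: list[list[tuple[float, float]]],
                      rounds: int = 10) -> float:
    """Server loop: only weights leave each device, never the (x, y) pairs."""
    total = sum(len(d) for d in devices)
    for _ in range(rounds):
        local_weights = [local_update(w, d) for d in devices]
        # Weight each device's model by how much data it trained on.
        w = sum(lw * len(d) for lw, d in zip(local_weights, devices)) / total
    return w


# Two hypothetical households whose private data both follow y = 3x.
home_a = [(1.0, 3.0), (2.0, 6.0)]
home_b = [(3.0, 9.0), (4.0, 12.0), (5.0, 15.0)]
```

Calling `federated_average(0.0, [home_a, home_b])` converges close to 3.0 even though the server never sees a single data point; whether shared weights alone are enough protection is exactly the open question the paragraph raises.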

Ownership is particularly tricky for communal devices. There is a collision here: users who put something in their home have expectations that run directly against the way rent-to-use services are pitched. Until we acknowledge that hardware installed in a home is different from a cloud service, we will never get it right.

A number of issues, now what?

Now that we have dug into the various problems that rear their heads with communal devices, what do we do about them? In the next article we discuss a way to map the communal space. This helps build a better understanding of how the communal device fits in the context of the space and the services that already exist.

We will also provide a list of dos and don’ts for leaders, developers, and designers to consider when building a communal device.
