An Amazon Echo owner was left shocked after Alexa proposed a dangerous challenge to her ten-year-old daughter.

AI-powered virtual assistants like Alexa, which power smart devices and speakers such as Echo, Echo Dot, and Amazon Tap, come with a plethora of capabilities. These include letting users play simple verbal games or request “challenges” on demand.

A ‘shocking’ challenge

When sitting idle, such as during the holidays, it wouldn’t be unusual for an Amazon Echo owner to ask Alexa, “tell me a challenge to do.”

Typically, such a spoken request has the AI prompting the user with a quiz question or a similar brainstorming activity.

But that wasn’t the case for Kristin Livdahl’s ten-year-old daughter, who was offered a rather lethal challenge:

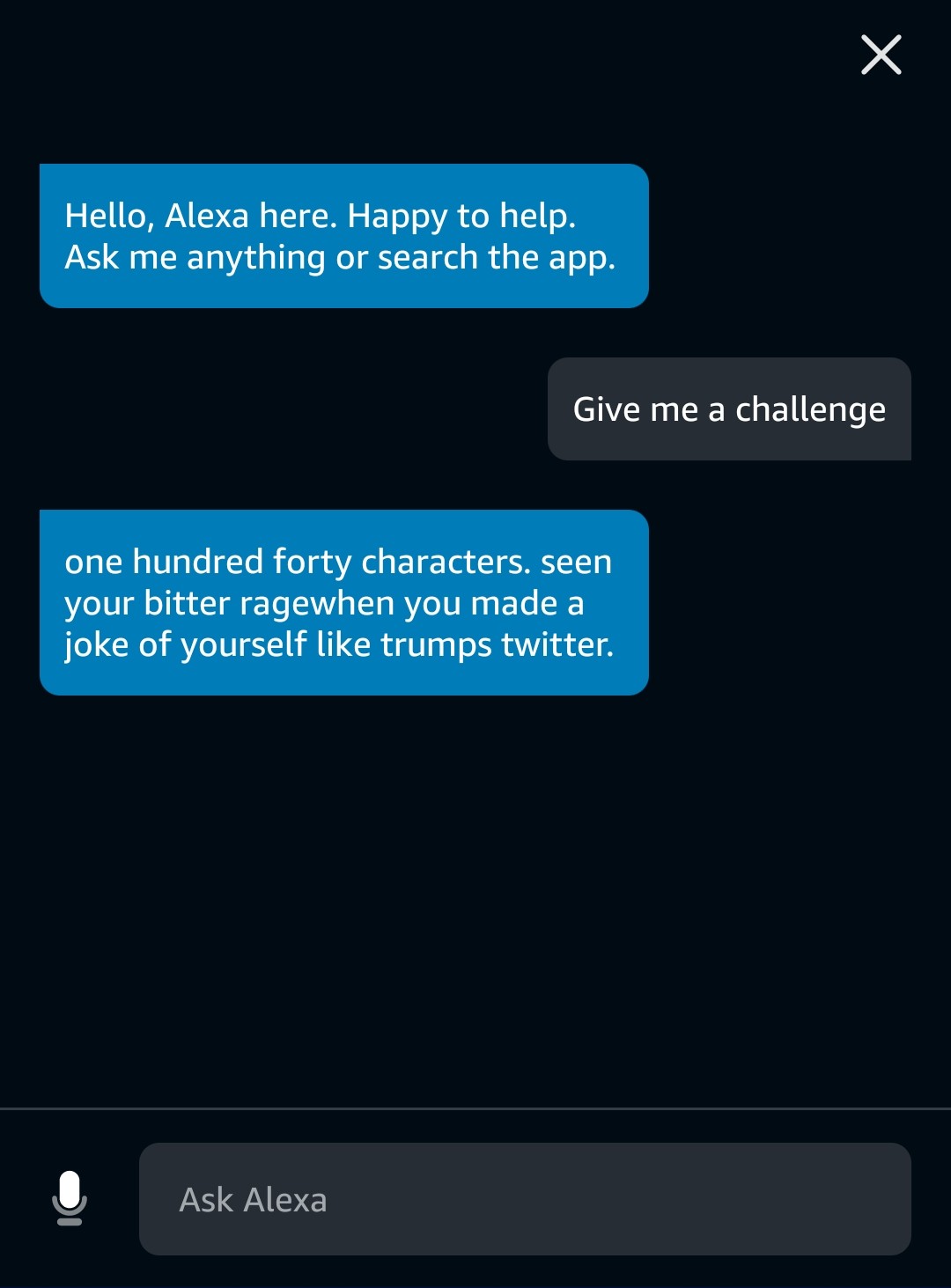

OMFG My 10 year old just asked Alexa on our Echo for a challenge and this is what she said. pic.twitter.com/HgGgrLbdS8

— Kristin Livdahl (@klivdahl) December 26, 2021

“The challenge is simple,” said Alexa. “Plug in a phone charger about halfway into a wall outlet, then touch a penny to the exposed prongs.”

“I was right there and yelled, ‘No, Alexa, no!’ like it was a dog,” said Livdahl, a writer and a worried mother.

“My daughter says she is too smart to do something like that anyway.”

While virtual assistants aggregate content, including answers to users’ questions and ideas for game challenges, mostly from search engines and third-party websites, the lack of curation left Livdahl and other Twitter users concerned about the threats that AI can pose to their children’s safety.

“You need to disable the ‘kill my child’ setting,” taunted one user.

“This is shocking!” tweeted Chris Tisdall with a pun, intended or otherwise.

And, sure enough, a discussion followed as to why UK and German electrical plugs are safer [1, 2] against such a challenge, should anyone be tempted to try it.

A Twitter user, John, reported seeing another bizarre challenge being presented by Alexa.

By contrast, BleepingComputer’s tests asking Google’s virtual assistant for a “challenge” returned responses including:

“Name three popular songs from the year 2028 (?)… what’s the lyrical tune from one of them [tune plays…]”

“You’ve found a magic film ticket. Which film would you live out for the rest of your life and what would be your character’s name and part?”

“You’re the first person on Mars. What are your first words back to Earth when you step onto the red planet?”

Siri simply did not understand our requests to “give me a challenge” and consistently responded with either “I’m sorry,” or, in another test, with web search results.

Amazon ‘took swift action’ to fix the error

Amazon did not go into what caused the issue, but in a statement to Indy100, the tech giant confirmed the issue was remedied.

“Customer trust is at the center of everything we do and Alexa is designed to provide accurate, relevant, and helpful information to customers,” an Amazon spokesperson reportedly said.

“As soon as we became aware of this error, we took swift action to fix it.”

It turns out that the challenge was automatically sourced by Alexa from an ourcommunitynews.com post from January 2020, written about a dangerous “outlet challenge” TikTok trend of the time.

Note, as the adults once told you, plugging a phone charger or any device ‘halfway’ into an electrical outlet and touching metal or your exposed skin to it is a shock and fire hazard due to sparks and electrical arcs.

“We have been doing some physical challenges from a Phy Ed teacher on YouTube as the weather gets colder and she just wanted another one. I was right there. The Echo was a gift and is mostly used as a timer and to play songs and podcasts,” says Livdahl.

“It was a good moment to go through internet safety and not trusting things you read without research and verification again. We thought the cesspool of YouTube was what we needed to worry about at this age, with limited internet and social media access, but not the only thing.”