
A Brief History of Distributed Databases

The era of Web 2.0 brought with it a renewed interest in database design. While traditional RDBMS databases served the data storage and data processing needs of the enterprise world well from their commercial inception in the late 1970s until the dotcom era, the large amounts of data processed by the new applications, and the speed at which this data needs to be processed, required a new approach. For a great overview of the need for these new database designs, I highly recommend watching the presentation that database guru Michael Stonebraker delivered for Stanford's Computer Systems Colloquium. The new databases that have emerged during this time have adopted names such as NoSQL and NewSQL, emphasizing that good old SQL databases fell short when it came to meeting the new demands.

Despite their different design choices for particular protocols, these databases have adopted, for the most part, a shared-nothing, distributed computing architecture. While the processing power of every computing system is ultimately limited by physical constraints and, in cases such as distributed databases where parallel executions are involved, by the implications of Amdahl's law, most of these systems offer the theoretical possibility of unlimited horizontal capacity scaling for both compute and storage. Each node represents a unit of compute and storage that can be added to the system as needed.
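To make the Amdahl's law limit concrete, here is a minimal sketch that computes the theoretical speedup of a workload when only part of it can run in parallel. The function names and the 95% figure are illustrative assumptions, not measurements from any database.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Theoretical speedup when `parallel_fraction` of the work is spread over `workers`."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part is unaffected by extra workers; only the parallel part shrinks.
    return 1.0 / (serial_fraction + parallel_fraction / workers)


def max_speedup(parallel_fraction: float) -> float:
    """Upper bound on speedup as the number of workers grows without limit."""
    serial_fraction = 1.0 - parallel_fraction
    return float("inf") if serial_fraction == 0.0 else 1.0 / serial_fraction


if __name__ == "__main__":
    # Hypothetical workload in which 95% of the work parallelizes.
    for n in (1, 4, 16, 64, 256):
        print(f"{n:>3} workers: {amdahl_speedup(0.95, n):.2f}x")
    print(f"upper bound: {max_speedup(0.95):.2f}x")
```

Under this assumption, adding nodes yields diminishing returns: the serial 5% caps the speedup at roughly 20x no matter how many workers are added.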

However, as Cockroach Labs CEO and co-founder Spencer Kimball explains in the case of CockroachDB, designing one of these new databases from scratch is a herculean task that requires highly knowledgeable and skillful engineers working in coordination and making very carefully thought-out decisions. For databases such as CockroachDB, having a reliable, high-performance way to store and retrieve data from stable storage is essential. Designing a library that provides fast stable storage on top of either the filesystem or raw devices is a very difficult problem because of the large number of edge cases that must be handled correctly.

Providing Fast Storage with RocksDB

RocksDB is a library that solves the problem of abstracting access to local stable storage. It allows software engineers to focus their energies on the design and implementation of other areas of their systems with the peace of mind of relying on RocksDB for access to stable storage, knowing that it currently runs some of the most demanding database workloads anywhere in the world at Facebook and in other equally challenging environments.

The advantages of RocksDB over other store engines are:

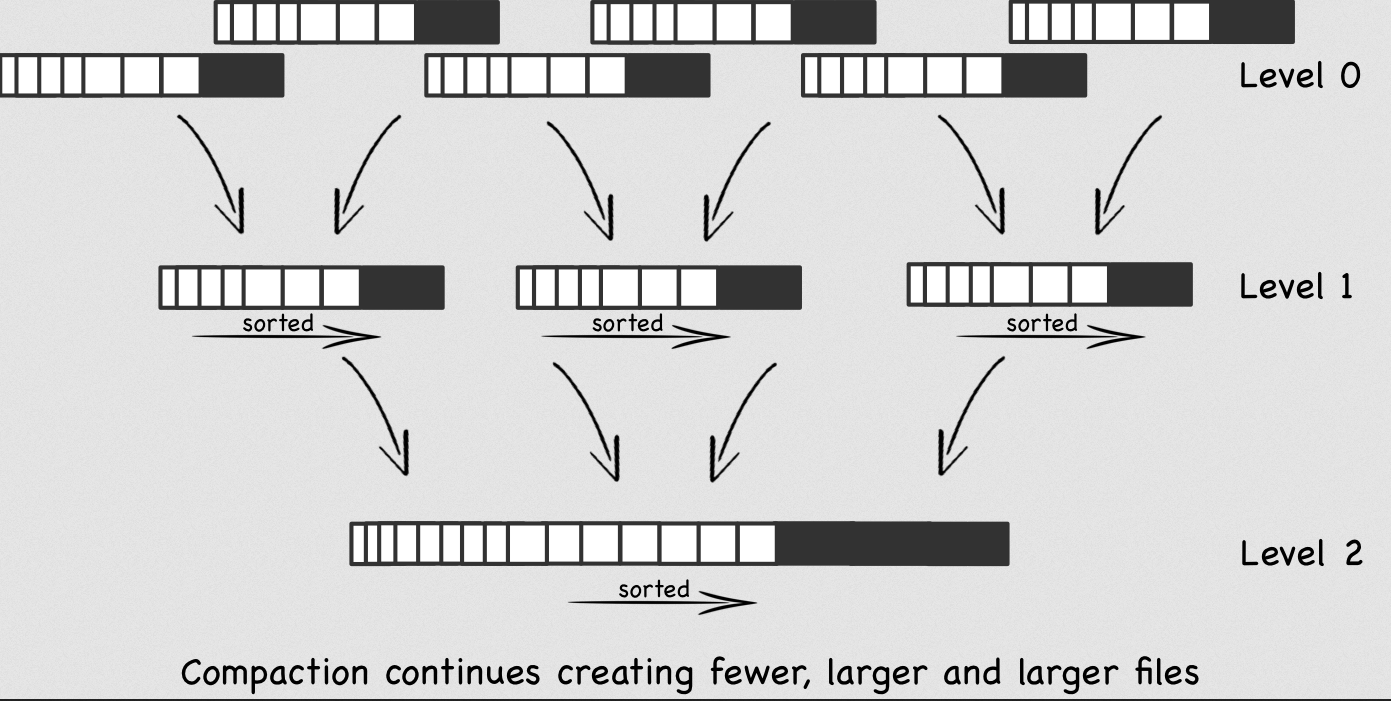

Technical design. Because one of the most common use cases of the new databases is storing data that is generated by high-throughput sources, it is important that the store engine is able to handle write-intensive workloads, all while offering acceptable read performance. RocksDB implements what is known in the database literature as a log-structured merge tree, aka LSM tree. Going into the details of LSM trees, and RocksDB's implementation of the same, is out of the scope of this blog, but suffice it to say that it is an indexing structure optimized to handle high-volume (sequential or random) write workloads. This is accomplished by treating every write as an append operation. A mechanism that goes by the name of compaction runs in the background, transparently for the developer, removing data that is no longer relevant, such as deleted keys or older versions of valid keys.

Source: http://www.benstopford.com/2015/02/14/log-structured-merge-trees/
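The append-and-compact idea described above can be sketched with a toy, in-memory model. This is not RocksDB's implementation or API: the class `ToyLSM`, its methods, and the `memtable_limit` parameter are hypothetical names chosen for illustration, and plain dictionaries stand in for on-disk sorted files.

```python
from typing import Dict, List, Optional

TOMBSTONE = object()  # marker recording that a key was deleted


class ToyLSM:
    """Toy LSM-style store: writes are buffered, then appended as immutable sorted runs."""

    def __init__(self, memtable_limit: int = 2) -> None:
        self.memtable_limit = memtable_limit
        self.memtable: Dict[str, object] = {}     # in-memory write buffer
        self.runs: List[Dict[str, object]] = []   # flushed runs, oldest first

    def put(self, key: str, value: object) -> None:
        self.memtable[key] = value
        if len(self.memtable) >= self.memtable_limit:
            self.flush()

    def delete(self, key: str) -> None:
        # A delete is just another write: it records a tombstone.
        self.put(key, TOMBSTONE)

    def flush(self) -> None:
        # Append the buffer as a new sorted run; existing runs are never modified.
        if self.memtable:
            ordered = sorted(self.memtable.items(), key=lambda kv: kv[0])
            self.runs.append(dict(ordered))
            self.memtable = {}

    def get(self, key: str) -> Optional[object]:
        # Search newest data first so the latest version of a key wins.
        for table in [self.memtable] + self.runs[::-1]:
            if key in table:
                value = table[key]
                return None if value is TOMBSTONE else value
        return None

    def compact(self) -> None:
        # Full compaction: merge all runs, keeping only the newest version of each key.
        merged: Dict[str, object] = {}
        for run in self.runs:  # oldest to newest, so newer values overwrite older ones
            merged.update(run)
        # Dropping tombstones is safe here only because every run is merged at once.
        live = {k: merged[k] for k in sorted(merged) if merged[k] is not TOMBSTONE}
        self.runs = [live] if live else []
```

In this sketch, overwriting or deleting a key never touches an existing run; stale versions and tombstones accumulate until `compact()` discards them, which is the trade-off that makes writes cheap.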

Through the clever use of bloom filters, RocksDB also offers great read performance, making RocksDB the ideal candidate on which to base distributed databases. The other popular choice on which to base storage engines is b-trees. InnoDB, MySQL's default storage engine, is an example of a store engine implementing a b-tree derivative, specifically, what is known as a b+tree.
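To illustrate why bloom filters help reads in an LSM design, here is a minimal sketch of the data structure itself. It is a generic textbook version, not RocksDB's filter format; the class name, the default sizes, and the SHA-256-based hashing are assumptions made for the example.

```python
import hashlib
from typing import Iterator


class BloomFilter:
    """Probabilistic set: may report false positives, never false negatives."""

    def __init__(self, num_bits: int = 1024, num_hashes: int = 3) -> None:
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((num_bits + 7) // 8)

    def _positions(self, key: str) -> Iterator[int]:
        # Derive several bit positions by salting one hash function with an index.
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{key}".encode("utf-8")).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def add(self, key: str) -> None:
        for pos in self._positions(key):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, key: str) -> bool:
        # If any bit is unset, the key was definitely never added.
        return all(self.bits[pos // 8] & (1 << (pos % 8)) for pos in self._positions(key))


if __name__ == "__main__":
    # Hypothetical per-file filter: consult it before reading the file.
    file_filter = BloomFilter()
    for key in ("user:1", "user:2", "user:3"):
        file_filter.add(key)
    print(file_filter.might_contain("user:2"))  # added keys always report True
```

A store that keeps one such filter per sorted file can skip any file whose filter answers "definitely not present", so a point lookup reads far fewer files than a naive scan through every level would.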

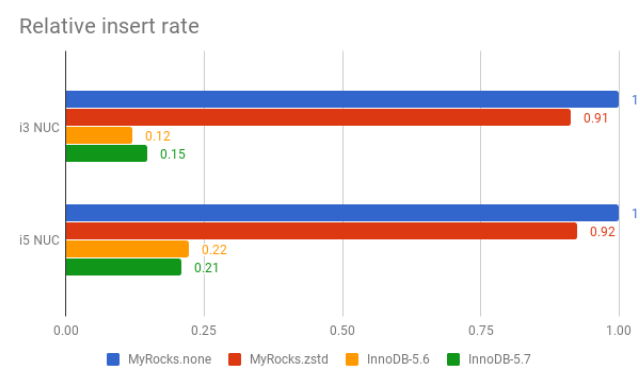

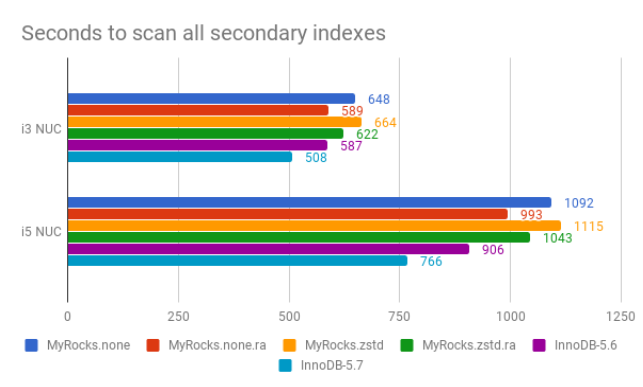

Performance. The choice of a given technical design for performance reasons needs to be backed with empirical verification of the choice. During his time at Facebook, in the context of the MyRocks project, a fork of MySQL that replaces InnoDB with RocksDB as MySQL's storage engine, Mark Callaghan performed extensive and rigorous performance measurements to compare MySQL performance on InnoDB vs on RocksDB. Details can be found in his published benchmark reports. Not surprisingly, RocksDB regularly comes out as vastly superior in write-intensive benchmarks. Interestingly, while InnoDB was also regularly better than RocksDB in read-intensive benchmarks, this advantage, in relative terms, was not as big as the advantage RocksDB provides over InnoDB in write-intensive tasks. Here is an example in the case of an I/O-bound benchmark on Intel NUC:

Source: https://smalldatum.blogspot.com/2017/11/insert-benchmark-io-bound-intel-nuc.html

Tunability. RocksDB provides several tunable parameters to extract the best performance on different hardware configurations. While the technical design provides an architectural reason to favor one type of solution over another, achieving optimal performance on particular use cases usually requires the flexibility of tuning certain parameters for those use cases. RocksDB provides a long list of parameters that can be used for this purpose. Samsung's Praveen Krishnamoorthy presented at the 2015 annual meetup a detailed study on how RocksDB can be tuned to accommodate different workloads.

Manageability. In mission-critical solutions such as distributed databases, it is essential to have as much control and monitoring capability as possible over critical components of the system, such as the storage engine in the nodes. Facebook introduced several important improvements to RocksDB that are required by enterprise-grade software products, such as dynamic option changes and the availability of detailed statistics for all aspects of RocksDB internal operations, including compaction.

Production references. The world of enterprise software, particularly when it comes to databases, is extremely risk averse. For totally understandable reasons (risk of monetary losses and reputational damage in case of data loss or data corruption), nobody wants to be a guinea pig in this space. RocksDB was developed by Facebook with the original motivation of switching the storage engine of its massive MySQL cluster hosting its user production database from InnoDB to RocksDB. The migration was completed by 2018, resulting in a 50% storage savings for Facebook. Having Facebook lead the development and maintenance of RocksDB for the most critical use cases of its multibillion-dollar business is a very important endorsement, particularly for developers of databases that lack Facebook's resources to develop and maintain their own storage engines.

Language bindings. RocksDB offers a key-value API, available for C++, C and Java. These are the most widely used programming languages in the distributed database world.

When considering all these 6 areas holistically, RocksDB is a very appealing choice for a distributed database developer looking for a fast, production-tested storage engine.

Who Uses RocksDB?

Over the years, the list of known uses of RocksDB has increased dramatically. Here is a non-exhaustive list of databases that embed RocksDB that underscores its suitability as a fast storage engine:

While all these database providers probably have similar reasons for picking RocksDB over other options, Instagram's replacement of Apache Cassandra's own Java-written LSM tree with RocksDB, which is now available to all other users of Apache Cassandra, is significant. Apache Cassandra is one of the most popular NoSQL databases.

RocksDB has also found wide acceptance as an embedded database outside the distributed database world for equally important, mission-critical use cases:

- Kafka Streams – In the Apache Kafka ecosystem, Kafka Streams is a client library that is commonly used to build applications and microservices that consume and produce messages stored in Kafka clusters. Kafka Streams supports fault-tolerant stateful applications. RocksDB is used by default to store state in such configurations.

- Apache Samza – Apache Samza offers similar functionality to Kafka Streams, and it also uses RocksDB to store state in fault-tolerant configurations.

- Netflix – After looking at several options, Netflix picked RocksDB to support the SSD caching needs of its global caching system, EVCache.

- Santander UK – Cloudera Professional Services built a near-real-time transactional analytics system for Santander UK, backed by Apache Hadoop, that implements a streaming enrichment solution that stores its state on RocksDB. Santander Group is one of Spain's largest multinational banks. As of this writing, its revenues are close to 50 billion euros with assets under management approaching 1.5 trillion euros.

- Uber – Cherami is Uber's own durable distributed messaging system, equivalent to Amazon's SQS. Cherami chose RocksDB as the storage engine in its storage hosts for its performance and indexing features.

RocksDB: Powering High-Performance Distributed Data Systems

From its beginnings as a fork of LevelDB, a key-value embedded store developed by Google infrastructure experts Jeff Dean and Sanjay Ghemawat, through the efforts and hard work of the Facebook engineers who transformed it into an enterprise-class solution apt for running mission-critical workloads, RocksDB has been able to gain widespread acceptance as the storage engine of choice for engineers looking for a battle-tested embedded storage engine.

Ethan is a software engineering professional. Based in Silicon Valley, he has worked at numerous industry-leading companies and startups: Hewlett Packard (including their world-renowned research organization HP Labs), TIBCO Software, Delphix and Cape Analytics. At TIBCO Software he was one of the key contributors to the re-design and implementation of ActiveSpaces, TIBCO's distributed in-memory data grid. Ethan holds Masters (2007) and PhD (2012) degrees in Electrical Engineering from Stanford University.
