
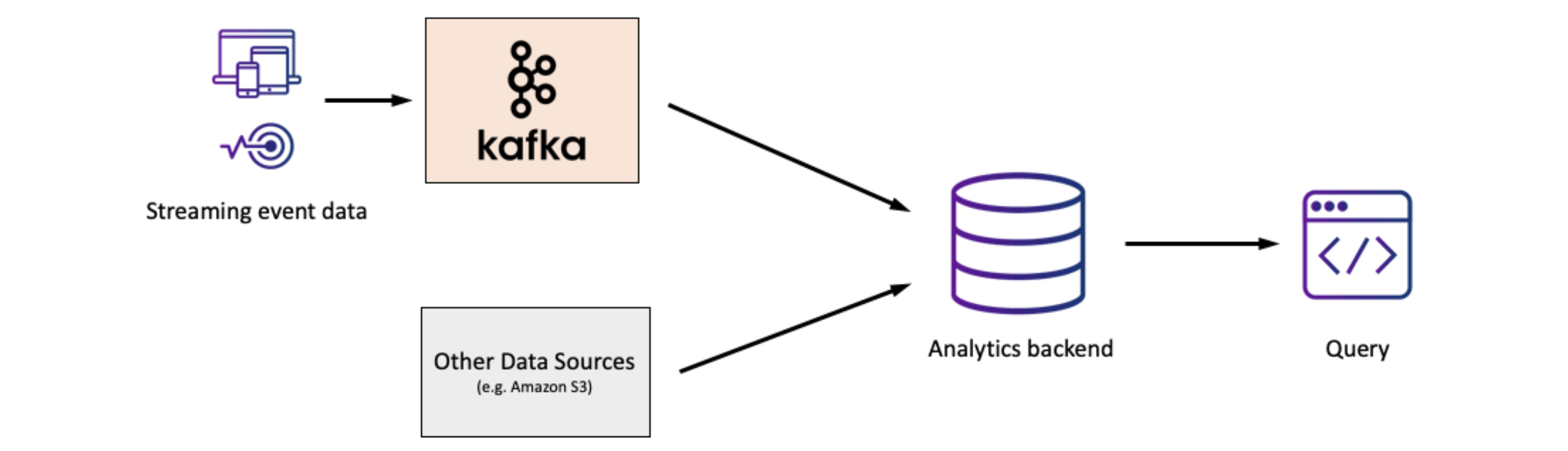

Apache Kafka has seen broad adoption as the streaming platform of choice for building applications that react to streams of data in real time. In many organizations, Kafka is the foundational platform for real-time event analytics, acting as a central location for collecting event data and making it available in real time.

While Kafka has become the standard for event streaming, we often need to analyze and build useful applications on Kafka data to unlock the most value from event streams. In one e-commerce example, Fynd analyzes clickstream data in Kafka to understand what’s happening in the business over the last few minutes. In the virtual reality space, a provider of on-demand VR experiences decides what content to offer based on large volumes of user behavior data generated in real time and processed through Kafka. So how should organizations think about implementing analytics on data from Kafka?

Considerations for Real-Time Event Analytics with Kafka

When selecting an analytics stack for Kafka data, we can break down the key considerations along several dimensions:

- Data Latency

- Query Complexity

- Columns with Mixed Types

- Query Latency

- Query Volume

- Operations

Data Latency

How up to date is the data being queried? Keep in mind that complex ETL processes can add minutes to hours before the data is available to query. If the use case does not require the freshest data, then it may be sufficient to use a data warehouse or data lake to store Kafka data for analysis.

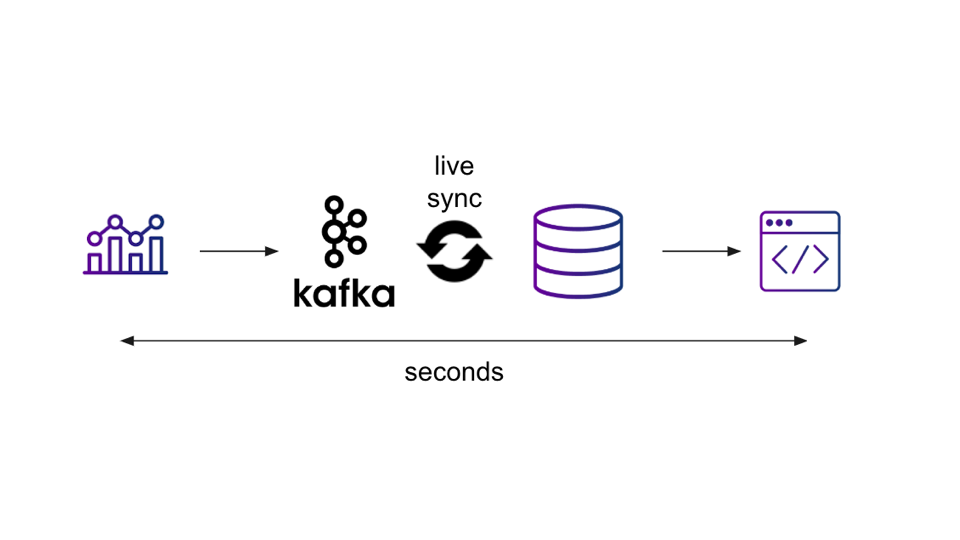

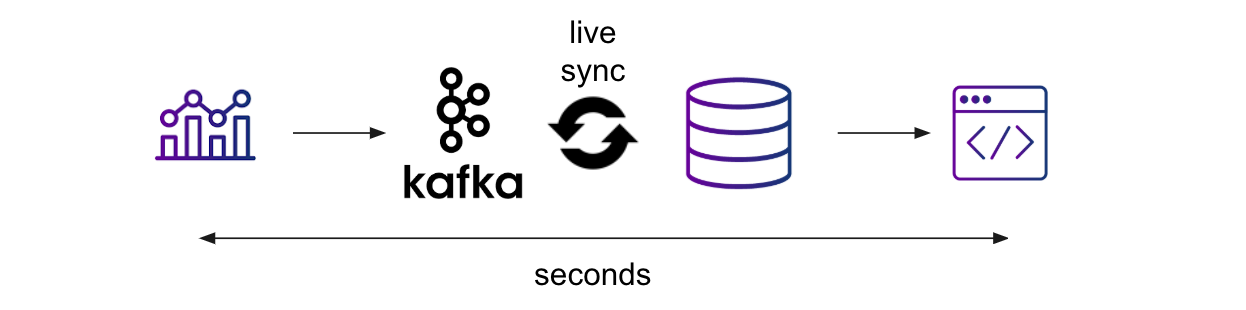

However, Kafka is a real-time streaming platform, so business requirements often call for a real-time database, which can provide fast ingestion and a continuous sync of new data, so that the latest data can be queried. Ideally, data should be available for query within seconds of the event occurring in order to support real-time applications on event streams.
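
To make the "within seconds" target concrete, data latency can be measured as the gap between when an event occurred and when it first became visible to queries. The following is a minimal sketch, not a prescribed method: the `Event` class, its field names, and the 5-second threshold in the usage note are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Event:
    """Hypothetical record pairing an event with its visibility time."""
    event_id: str
    produced_at_ms: int   # when the event occurred (epoch milliseconds)
    queryable_at_ms: int  # when the event first became visible to queries


def data_latency_seconds(event: Event) -> float:
    """Return the delay between the event occurring and being queryable."""
    return (event.queryable_at_ms - event.produced_at_ms) / 1000.0


def fraction_within(events: List[Event], threshold_seconds: float) -> float:
    """Return the share of events queryable within the given threshold."""
    if not events:
        return 0.0
    hits = sum(1 for e in events if data_latency_seconds(e) <= threshold_seconds)
    return hits / len(events)
```

For example, `fraction_within(events, 5.0)` reports what share of events met a 5-second freshness goal. A batch ETL path that adds an hour of delay would show up as events falling outside the threshold.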

Query Complexity

Does the application require complex queries, like joins, aggregations, sorting, and filtering? If the application requires complex analytic queries, then support for a more expressive query language, like SQL, would be desirable.

Note that in many instances, streams are most useful when joined with other data, so do consider whether the ability to do joins in a performant manner would be important for the use case.
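
As an illustration of the kind of query in question, the sketch below joins a small batch of click events with a product table and aggregates by category. It uses Python's built-in `sqlite3` module with an in-memory database purely as a stand-in for whichever database receives the Kafka data; the table names, column names, and the `revenue_by_category` function are hypothetical.

```python
import sqlite3
from typing import List, Tuple


def revenue_by_category(
    clicks: List[Tuple[str, str, float]],
    products: List[Tuple[str, str]],
) -> List[Tuple[str, int, float]]:
    """Join click events with product data and aggregate per category."""
    conn = sqlite3.connect(":memory:")
    try:
        # Event stream data (as it might arrive from a Kafka topic).
        conn.execute(
            "CREATE TABLE clicks (user_id TEXT, product_id TEXT, amount REAL)"
        )
        # Slowly changing dimension data that lives outside the stream.
        conn.execute(
            "CREATE TABLE products (product_id TEXT PRIMARY KEY, category TEXT)"
        )
        conn.executemany("INSERT INTO clicks VALUES (?, ?, ?)", clicks)
        conn.executemany("INSERT INTO products VALUES (?, ?)", products)
        # Join, aggregate, and sort in one SQL statement.
        return conn.execute(
            """
            SELECT p.category, COUNT(*) AS events, SUM(c.amount) AS total
            FROM clicks AS c
            JOIN products AS p ON p.product_id = c.product_id
            GROUP BY p.category
            ORDER BY total DESC
            """
        ).fetchall()
    finally:
        conn.close()
```

A system without join support would need the category copied into every event before ingestion, which is one reason join capability is worth checking early.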

Columns with Mixed Types

Does the data conform to a well-defined schema or is the data inherently messy? If the data fits a schema that doesn’t change over time, it may be possible to maintain a data pipeline that loads it into a relational database, with the caveat mentioned above that data pipelines will add data latency.

If the data is messier, with values of different types in the same column for instance, then it may be preferable to select a Kafka sink that can ingest the data as is, without requiring data cleaning at write time, while still allowing the data to be queried.
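
The idea of ingesting as is and handling types at query time can be sketched in a few lines. This is an illustrative example only, assuming JSON-encoded messages; the function names and the `price` field used in the usage note are hypothetical and do not represent any particular sink's behavior.

```python
import json
from collections import Counter
from typing import Any, Dict, List


def ingest(raw_messages: List[str]) -> List[Dict[str, Any]]:
    """Store messages as parsed JSON without enforcing a schema."""
    return [json.loads(message) for message in raw_messages]


def type_profile(records: List[Dict[str, Any]], field: str) -> Counter:
    """Count which value types actually occur in one field."""
    return Counter(type(r[field]).__name__ for r in records if field in r)


def numeric_values(records: List[Dict[str, Any]], field: str) -> List[float]:
    """Read a field as numbers at query time, skipping unusable values."""
    values = []
    for record in records:
        value = record.get(field)
        if isinstance(value, bool):
            continue  # bool is a subclass of int; do not treat it as a number
        if isinstance(value, (int, float)):
            values.append(float(value))
        elif isinstance(value, str):
            try:
                values.append(float(value))  # coerce numeric strings
            except ValueError:
                pass  # leave non-numeric strings out of numeric queries
    return values
```

Given messages where `price` appears as `10`, `"12.5"`, `null`, and `"n/a"`, all four records are ingested, and `numeric_values(records, "price")` yields only the two usable numbers. Nothing is rejected or cleaned at write time.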

Query Latency

While data latency is a question of how fresh the data is, query latency refers to the speed of individual queries. Are fast queries required to power real-time applications and live dashboards? Or is query latency less critical because offline reporting is sufficient for the use case?

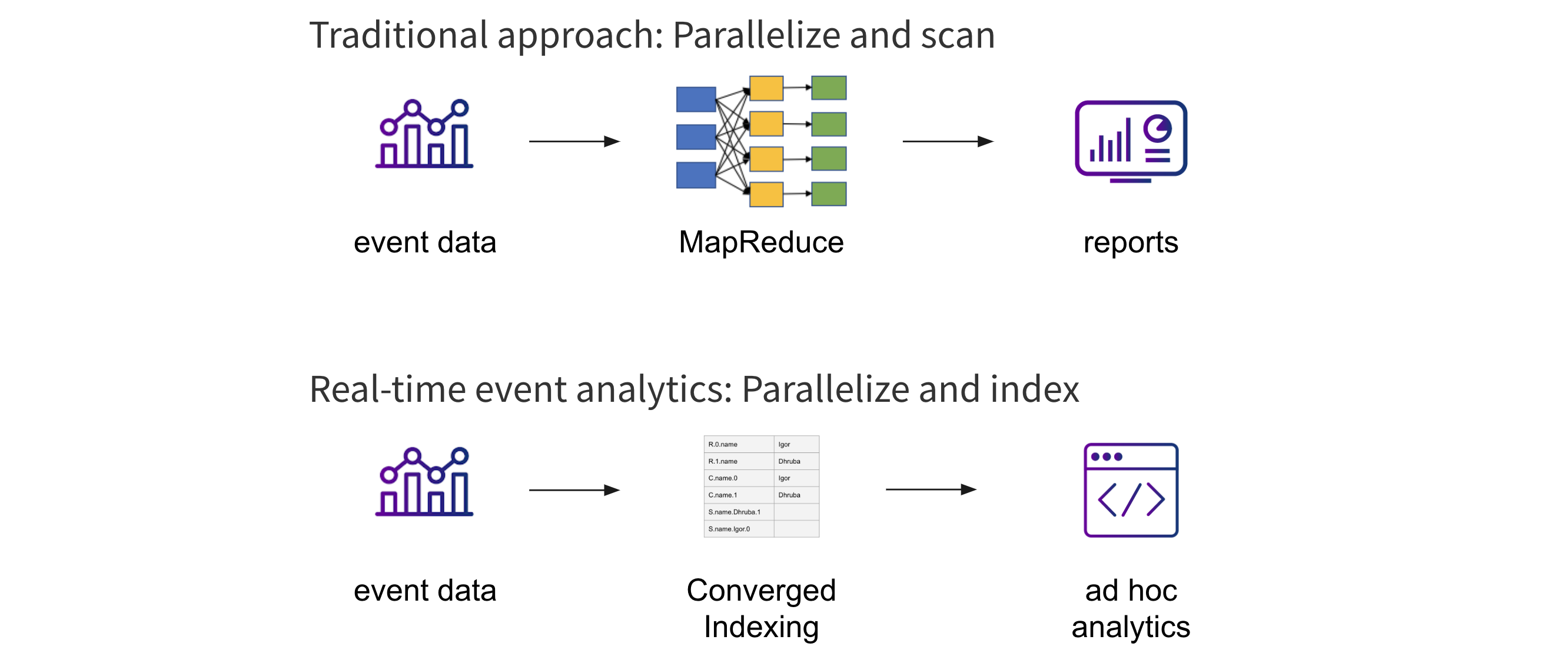

The traditional approach to analytics on large data sets involves parallelizing and scanning the data, which will suffice for less latency-sensitive use cases. However, to meet the performance requirements of real-time applications, it’s better to consider approaches that parallelize and index the data instead, to enable low-latency ad hoc queries and drilldowns.
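
The difference between scanning and indexing can be shown with a toy example. The sketch below is a simplified, single-machine illustration (it leaves out the parallelization mentioned above), and the function names are hypothetical. A scan touches every row on every query, while an index is built once and then answers an equality lookup by reading only the matching rows.

```python
from collections import defaultdict
from typing import Any, Dict, List

Row = Dict[str, Any]


def scan(rows: List[Row], field: str, value: Any) -> List[Row]:
    """Answer a query by checking every row: cost grows with data size."""
    return [r for r in rows if field in r and r[field] == value]


def build_index(rows: List[Row], field: str) -> Dict[Any, List[int]]:
    """Map each value of a field to the positions of the rows that hold it."""
    index: Dict[Any, List[int]] = defaultdict(list)
    for position, row in enumerate(rows):
        if field in row:
            index[row[field]].append(position)
    return index


def lookup(rows: List[Row], index: Dict[Any, List[int]], value: Any) -> List[Row]:
    """Answer the same query from the index: cost grows with result size."""
    return [rows[position] for position in index.get(value, [])]
```

Both paths return the same rows. The trade-off is that the index costs extra work and storage at write time in exchange for cheaper reads.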

Query Volume

Does the architecture need to support large numbers of concurrent queries? If the use case requires on the order of 10-50 concurrent queries, as is common with reporting and BI, it may suffice to ETL the Kafka data into a data warehouse to handle these queries.

Many modern data applications need much higher query concurrency. If we’re presenting product recommendations in an e-commerce scenario or deciding what content to serve on a streaming service, then we can imagine thousands of concurrent queries, or more, on the system. In these cases, a real-time analytics database would be the better choice.
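
One rough way to estimate which regime a workload falls into is Little's Law, which relates average concurrency to arrival rate and average time in the system. The sketch below applies it to queries; the function name and the numbers in the usage note are illustrative assumptions, not measurements from any real system.

```python
def estimated_concurrency(queries_per_second: float, avg_latency_seconds: float) -> float:
    """Estimate average in-flight queries via Little's Law: L = lambda * W."""
    if queries_per_second < 0 or avg_latency_seconds < 0:
        raise ValueError("rate and latency must be non-negative")
    return queries_per_second * avg_latency_seconds
```

For instance, a dashboard workload of 20 queries per second at 2 seconds each averages about 40 queries in flight, while a recommendation service at 20,000 queries per second and 100 milliseconds each averages about 2,000.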

Operations

Is the analytics stack going to be painful to manage? Assuming it’s not already being run as a managed service, Kafka already represents one distributed system that has to be managed. Adding yet another system for analytics adds to the operational burden.

This is where fully managed cloud services can help make real-time analytics on Kafka much more manageable, especially for smaller data teams. Look for solutions that don’t require server or database administration and that scale seamlessly to handle variable query or ingest demands. Using a managed Kafka service can also help simplify operations.

Conclusion

Building real-time analytics on Kafka event streams involves careful consideration of each of these factors to ensure the capabilities of the analytics stack meet the requirements of your application and engineering team. Elasticsearch, Druid, Postgres, and Rockset are commonly used as real-time databases to serve analytics on data from Kafka, and you should weigh your requirements, across the axes above, against what each solution provides.

For more information on this topic, do check out this related tech talk where we go through these considerations in greater detail: Best Practices for Analyzing Kafka Event Streams.
