
Intro

In recent years, Kafka has become synonymous with “streaming,” and with features like Kafka Streams, KSQL, joins, and integrations into sinks like Elasticsearch and Druid, there are more ways than ever to build a real-time analytics application around streaming data in Kafka. With all of these stream processing and real-time data store options, though, also come questions about when each should be used and what their pros and cons are. In this post, I’ll discuss some common real-time analytics use cases that we have seen with our customers here at Rockset and how different real-time analytics architectures suit each of them. I hope by the end you find yourself better informed and less confused about the real-time analytics landscape and are ready to dive into it for yourself.

First, an obligatory aside on real-time analytics.

Historically, analytics have been performed in batch, with jobs that would run at some specified interval and process some well-defined amount of data. Over the last decade, however, the online nature of our world has given rise to a different paradigm of data generation in which there is no well-defined beginning or end to the data. These unbounded “streams” of data are often composed of customer events from an online application, sensor data from an IoT device, or events from an internal service. This shift in the way we think about our input data has necessitated a similar shift in how we process it. After all, what does it mean to compute the min or max of an unbounded stream? Hence the rise of real-time analytics, a discipline and methodology for how to run computation on data from real-time streams to produce useful results. And since streams also tend to have a high data velocity, real-time analytics is generally concerned not only with the correctness of its results but also with their freshness.

Kafka fit nicely into this new movement because it is designed to bridge data producers and consumers by providing a scalable, fault-tolerant backbone for event-like data to be written to and read from. Over the years, as the project has added features like Kafka Streams, KSQL, joins, ksqlDB, and integrations with various data sources and sinks, the barrier to entry has decreased while the power of the platform has simultaneously increased. It’s also important to note that while Kafka is quite powerful, there are many things it self-admittedly is not. Specifically, it’s not a database, it’s not transactional, it’s not mutable, its query language KSQL is not fully SQL-compliant, and it’s not trivial to set up and maintain.

Now that we’ve settled that, let’s consider a few common use cases for Kafka and see where stream processing or a real-time database may fit. We’ll discuss what a sample architecture might look like for each.

Use Case 1: Simple Filtering and Aggregation

A very common use case for stream processing is to provide basic filtering and predetermined aggregations on top of an event stream. Let’s suppose we have clickstream data coming from a consumer web application and we want to determine the number of homepage visits per hour.

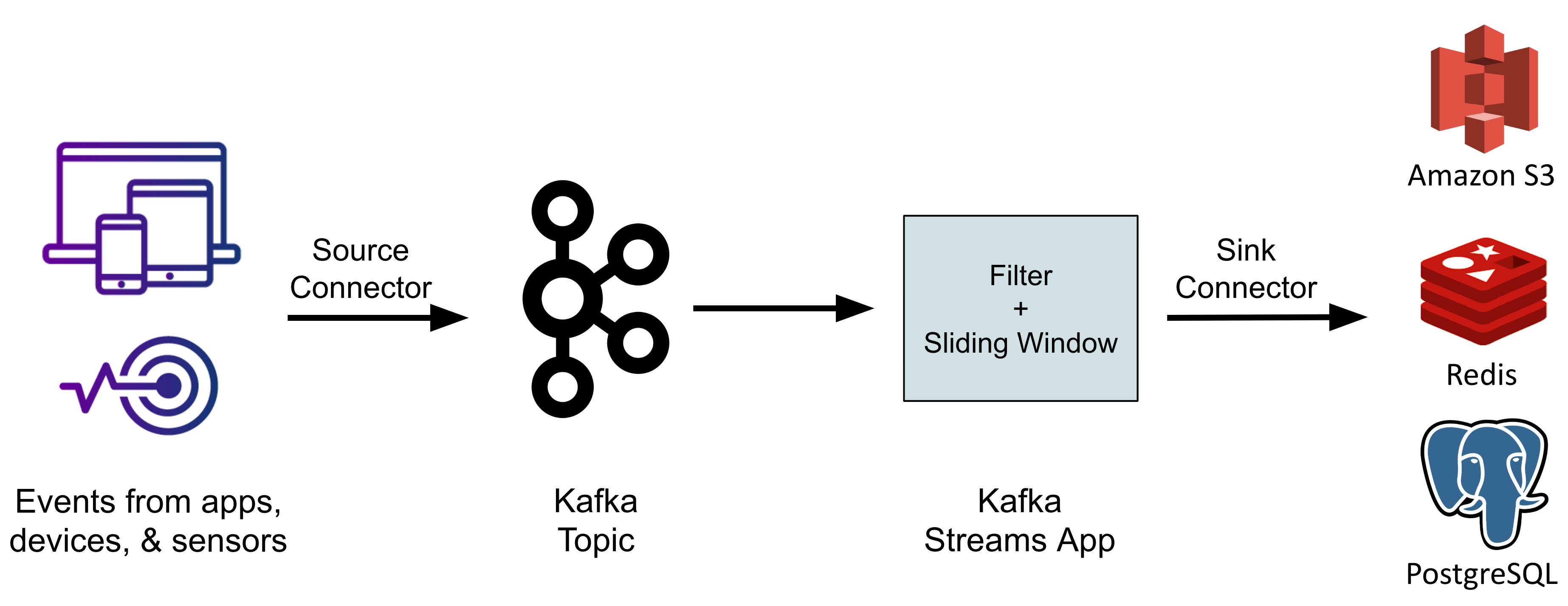

To accomplish this we can use Kafka Streams and KSQL. Our web application writes events into a Kafka topic called clickstream. We can then create a Kafka stream based on this topic that filters out all events where endpoint != '/', applies a sliding window with an interval of 1 hour over the stream, and computes a count(*). This resulting stream can then dump the emitted records into your sink of choice: S3/GCS, Elasticsearch, Redis, Postgres, etc. Finally, your internal application/dashboard can pull the metrics from this sink and display them however you like.
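To make the filter-then-count logic concrete, here is a minimal Python sketch that runs over an in-memory list rather than a real Kafka topic. It is not KSQL and does not use any Kafka client; the event shape (`ts` in epoch seconds, `endpoint`) and the function name are assumptions made for illustration, and it uses fixed, non-overlapping one-hour buckets as a simplification of the windowing described above.

```python
from collections import Counter

WINDOW_SECONDS = 3600  # one-hour buckets (simplifying assumption)

def homepage_visits_per_hour(events):
    """Count homepage hits per one-hour bucket, keyed by bucket start time."""
    counts = Counter()
    for event in events:
        if event["endpoint"] != "/":
            continue  # drop every event that is not a homepage visit
        bucket_start = event["ts"] // WINDOW_SECONDS * WINDOW_SECONDS
        counts[bucket_start] += 1
    return dict(counts)

# Hypothetical clickstream events
events = [
    {"ts": 0, "endpoint": "/"},
    {"ts": 1800, "endpoint": "/pricing"},
    {"ts": 3599, "endpoint": "/"},
    {"ts": 3600, "endpoint": "/"},
]
print(homepage_visits_per_hour(events))  # {0: 2, 3600: 1}
```

The output is already aggregated per hour, which is why the sink can answer this one question quickly and cannot answer a different one, such as visits per minute.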

Note: Now with ksqlDB you can have a materialized view of a Kafka stream that is directly queryable, so you may not necessarily need to dump it into a third-party sink.

This type of setup is kind of the “hello world” of Kafka streaming analytics. It’s so simple but gets the job done, and consequently it is extremely common in real-world implementations.

Pros:

- Simple to set up

- Fast queries on the sinks for predetermined aggregations

Cons:

- You have to define a Kafka stream’s schema at stream creation time, meaning future changes in the application’s event payload could lead to schema mismatches and runtime issues

- There’s no alternate way to slice the data after the fact (e.g. views/minute)

Use Case 2: Enrichment

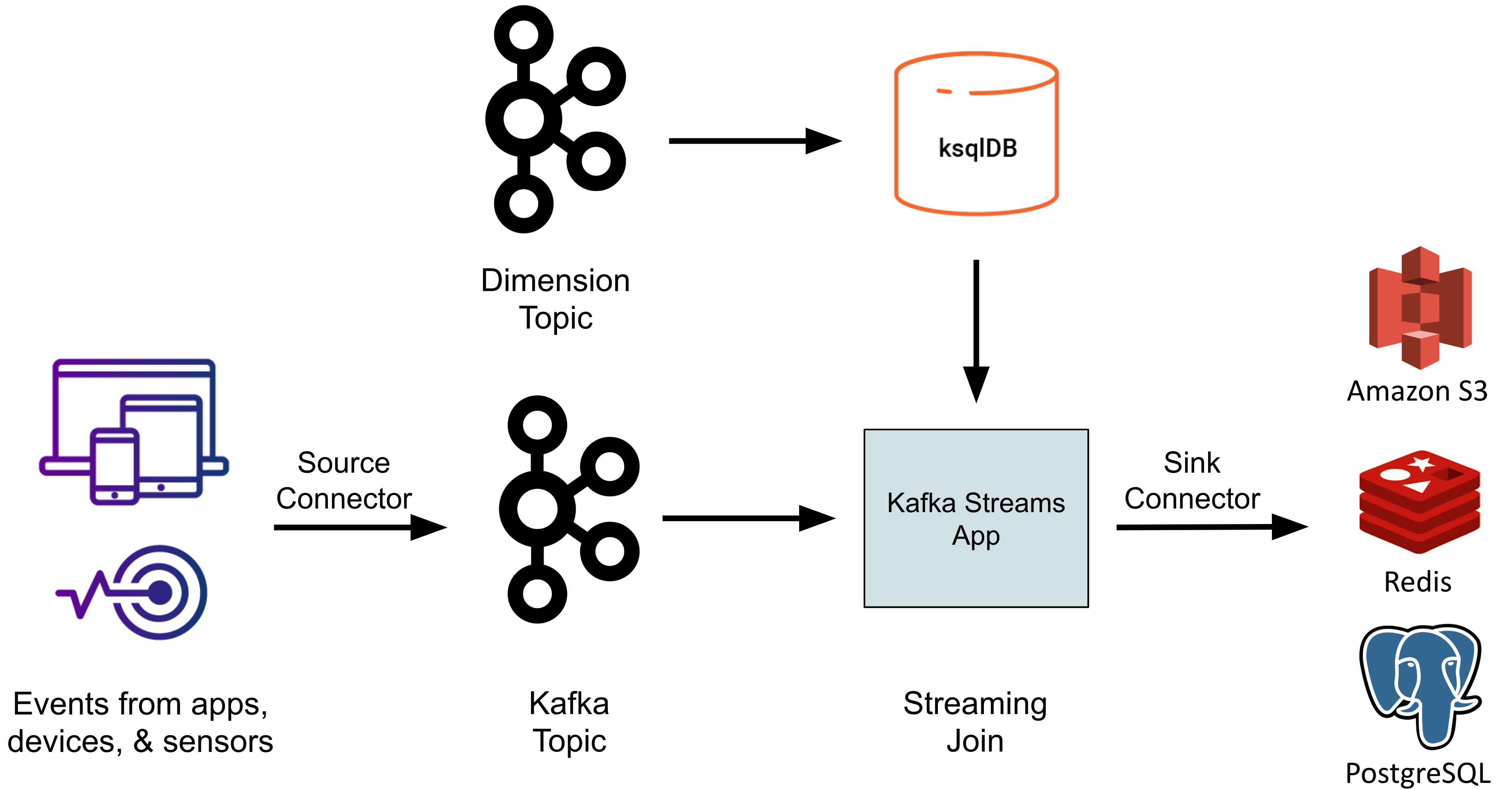

The next use case we’ll consider is stream enrichment: the process of denormalizing stream data to make downstream analytics simpler. This is sometimes called a “poor man’s join” because you are effectively joining the stream with a small, static dimension table (from SQL parlance). For example, let’s say the same clickstream data from before contained a field called countryId. Enrichment might involve using the countryId to look up the corresponding country name, national language, etc. and inject those additional fields into the event. This would then enable downstream applications that look at the data to compute, for example, the number of non-native English speakers who load the English version of the website.

To accomplish this, the first step is to get our dimension table mapping countryId to name and language available in Kafka. Since everything in Kafka is a topic, even this data must be written to some new topic, let’s say called countries. Then we need to create a KSQL table on top of that topic using the CREATE TABLE KSQL DDL. This requires the schema and primary key to be specified at creation time and will materialize the topic as an in-memory table where the latest record for each unique primary key value is represented. If the topic is partitioned, KSQL can be smart here and partition this in-memory table as well, which will improve performance. Under the hood, these in-memory tables are actually instances of RocksDB, an incredibly powerful, embeddable key-value store created at Facebook by the same engineers who have now built Rockset (small world!).
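The “latest record per primary key” behaviour can be sketched in a few lines of Python. This is only an illustration of the semantics, not how KSQL or RocksDB implement it; the `(key, value)` record shape and the `materialize` helper are hypothetical.

```python
def materialize(records):
    """Fold an ordered changelog of (key, value) pairs into a table.

    Later records overwrite earlier ones with the same key, so the
    result holds only the latest value for each primary key.
    """
    table = {}
    for key, value in records:
        table[key] = value
    return table

# Hypothetical contents of the countries topic, in arrival order
countries_topic = [
    ("US", {"name": "USA", "language": "English"}),
    ("FR", {"name": "France", "language": "French"}),
    ("US", {"name": "United States", "language": "English"}),  # update
]

countries = materialize(countries_topic)
print(countries["US"]["name"])  # United States
```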

Then, like before, we need to create a Kafka stream on top of the clickstream Kafka topic. Let’s call this stream S. Then, using some SQL-like semantics, we can define another stream, let’s call it T, which will be the output of the join between that Kafka stream and our Kafka table from above. For each record in our stream S, it will look up the countryId in the Kafka table we defined, add the countryName and language fields to the record, and emit that record to stream T.
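The per-record lookup that produces stream T can be sketched in plain Python. This is a simulation over in-memory data, not KSQL; the sample events, the table contents, and the choice to drop events with no matching country (inner-join behaviour) are all assumptions made for illustration.

```python
def enrich(stream_s, countries):
    """Join each event in S against the countries table and yield records for T."""
    for event in stream_s:
        country = countries.get(event["countryId"])
        if country is None:
            continue  # assumption: unmatched events are dropped (inner join)
        yield {
            **event,
            "countryName": country["name"],
            "language": country["language"],
        }

# Hypothetical table keyed on countryId, and a hypothetical stream S
countries = {
    "US": {"name": "United States", "language": "English"},
    "FR": {"name": "France", "language": "French"},
}
stream_s = [
    {"userId": "u1", "endpoint": "/", "countryId": "FR"},
    {"userId": "u2", "endpoint": "/", "countryId": "XX"},  # no match
]

stream_t = list(enrich(stream_s, countries))
print(stream_t)
```

Because the lookup happens once, at the moment each event passes through, a later change to the table is not reflected in records that have already been emitted to T.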

Pros:

- Downstream applications now have access to fields from multiple sources all in one place

Cons:

- Kafka table is only keyed on one field, so joins on another field require creating another table on the same data that is keyed differently

- Kafka table being in-memory means dimension tables need to be small-ish

- Early materialization of the join can lead to stale data. For example, if we had a userId field that we were trying to join on to enrich the record with the user’s total visits, the records in stream T wouldn’t reflect the updated value of the user’s visits after the enrichment takes place

Use Case 3: Real-Time Databases

The next step in the maturation of streaming analytics is to start running more intricate queries that bring together data from various sources. For example, let’s say we want to analyze our clickstream data as well as data about our advertising campaigns to determine how to most effectively spend our ad dollars to generate an increase in traffic. We need access to data from Kafka, our transactional store (e.g. Postgres), and maybe even our data lake (e.g. S3) to tie together all the dimensions of our visits.

To accomplish this we need to pick an end system that can ingest, index, and query all this data. Since we want to react to trends in real time, a data warehouse is out of the question since it would take too long to ETL the data there and then try to run this analysis. A database like Postgres also wouldn’t work since it is optimized for point queries, transactions, and relatively small data sizes, none of which are relevant/ideal for us.

You could argue that the approach in use case #2 may work here since we can set up one connector for each of our data sources, put everything in Kafka topics, create several ksqlDBs, and set up a cluster of Kafka Streams applications. While you could make that work with enough brute force, if you want to support ad-hoc slicing of your data instead of just tracking metrics, if your dashboards and applications evolve with time, or if you want data to always be fresh and never stale, that approach won’t cut it. We effectively need a read-only replica of our data from its various sources that supports fast queries on large volumes of data; we need a real-time database.

Pros:

- Support ad-hoc slicing of data

- Integrate data from a variety of sources

- Avoid stale data

Cons:

- Another service in your infrastructure

- Another copy of your data

Real-Time Databases

Luckily we have a few good options for real-time database sinks that work with Kafka.

The first option is Apache Druid, an open-source columnar database. Druid is great because it can scale to petabytes of data and is highly optimized for aggregations. Unfortunately, though, it does not support joins, which means to make this work we have to perform the enrichment ahead of time in some other service before dumping the data into Druid. Also, its architecture is such that spikes in new data being written can negatively affect queries being served.

The next option is Elasticsearch, which has become immensely popular for log indexing and search, as well as other search-related applications. For point lookups on semi-structured or unstructured data, Elasticsearch may be the best option out there. As with Druid, you will still need to pre-join the data, and spikes in writes can negatively impact queries. Unlike Druid, Elasticsearch won’t be able to run aggregations as quickly, and it has its own visualization layer in Kibana, which is intuitive and great for exploratory point queries.

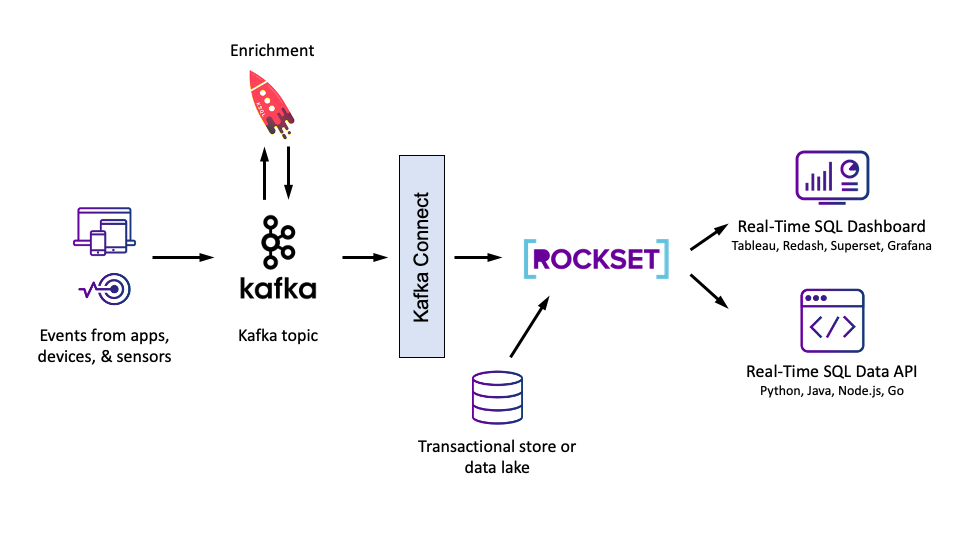

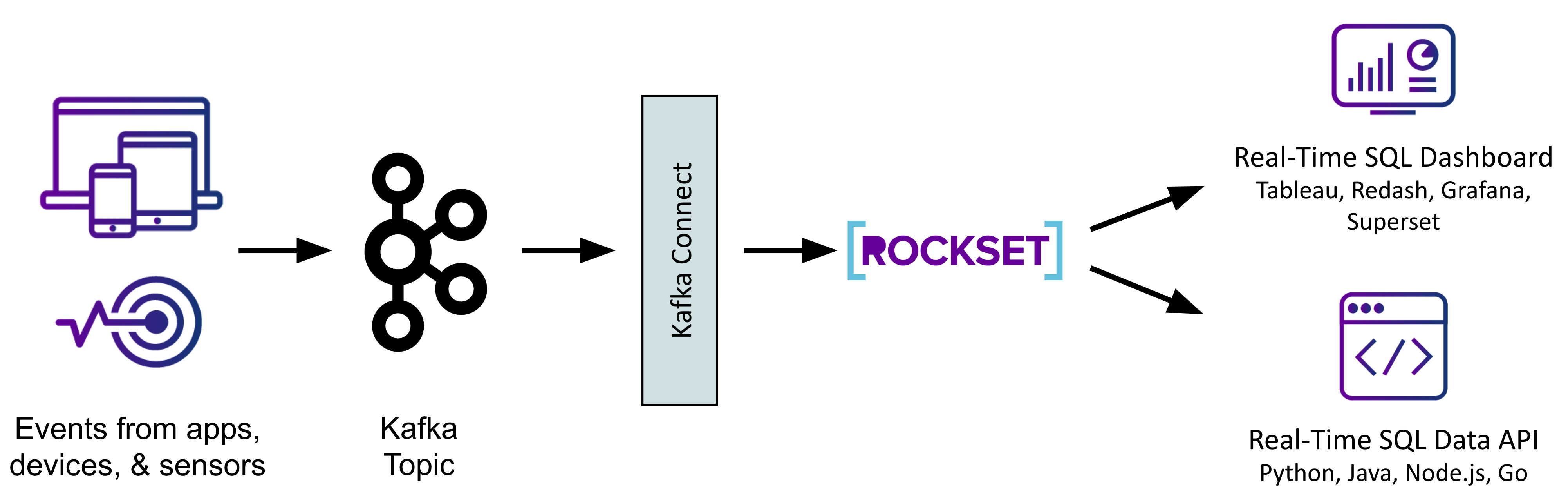

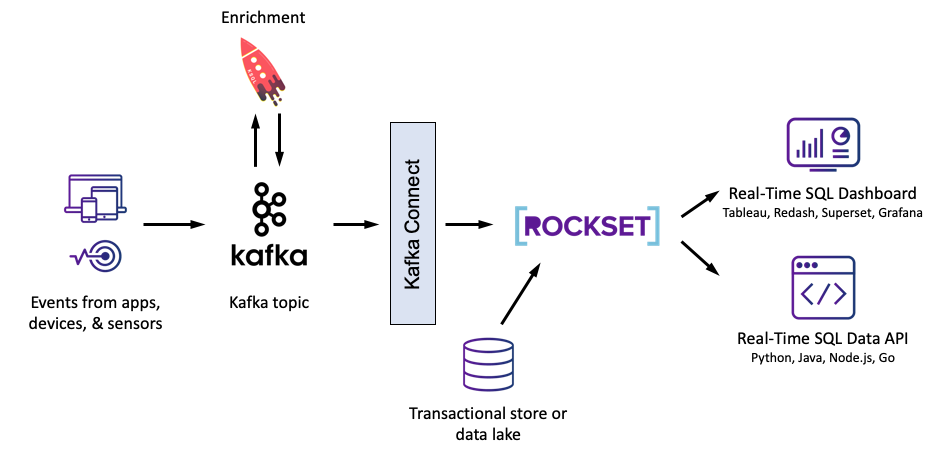

The final option is Rockset, a serverless real-time database that supports fully featured SQL, including joins, on data from a variety of sources. With Rockset you can join a Kafka stream with a CSV file in S3 with a table in DynamoDB in real time as if they were all just regular tables in the same SQL database. No more stale, pre-joined data! However, Rockset isn’t open source and won’t scale to petabytes like Druid, and it’s not designed for unstructured text search like Elastic.

Whichever option we pick, we will set up our Kafka topic as before and this time connect it using the appropriate sink connector to our real-time database. Other sources will also feed directly into the database, and we can point our dashboards and applications to this database instead of directly to Kafka. For example, with Rockset, we could use the web console to set up our other integrations with S3, DynamoDB, Redshift, etc. Then through Rockset’s online query editor, or through the SQL-over-REST protocol, we can start querying all of our data using familiar SQL. We can then go ahead and use a visualization tool like Tableau to create a dashboard on top of our Kafka stream and our other data sources to better view and share our findings.
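To give a feel for the kind of ad-hoc, query-time join this enables, here is a small Python sketch that uses the standard library’s sqlite3 module purely as a local stand-in for a real-time database. It is not Rockset’s API, and the table names, columns, and sample rows are all hypothetical.

```python
import sqlite3

# In-memory SQLite database standing in for the real-time database.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE clickstream (endpoint TEXT, campaign_id INTEGER);
    CREATE TABLE campaigns (campaign_id INTEGER PRIMARY KEY, name TEXT, spend REAL);
""")
# Hypothetical rows: clickstream would arrive from Kafka,
# campaigns from a transactional store.
conn.executemany(
    "INSERT INTO clickstream VALUES (?, ?)",
    [("/", 1), ("/", 1), ("/pricing", 2), ("/", 2)],
)
conn.executemany(
    "INSERT INTO campaigns VALUES (?, ?, ?)",
    [(1, "search", 100.0), (2, "social", 50.0)],
)

def spend_per_visit(conn):
    """Join visits to campaigns at query time and compute spend per visit."""
    return conn.execute("""
        SELECT c.name, COUNT(*) AS visits, c.spend / COUNT(*) AS spend_per_visit
        FROM clickstream AS k
        JOIN campaigns AS c ON c.campaign_id = k.campaign_id
        GROUP BY c.campaign_id, c.name, c.spend
        ORDER BY c.name
    """).fetchall()

print(spend_per_visit(conn))  # [('search', 2, 50.0), ('social', 2, 25.0)]
```

Because the join runs when the query is issued, a different question (say, visits per endpoint per campaign) only needs a different query, not a new stream or table definition.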

For a deeper dive comparing these three, check out this blog.

Putting It Together

In the previous sections, we looked at stream processing and real-time databases, and when best to use them in conjunction with Kafka. Stream processing, with KSQL and Kafka Streams, should be your choice when performing filtering, cleansing, and enrichment, while using a real-time database sink, like Rockset, Elasticsearch, or Druid, makes sense if you are building data applications that require more complex analytics and ad hoc queries.

You could conceivably employ both in your analytics stack if your requirements involve both filtering/enrichment and complex analytic queries. For example, we could use KSQL to enrich our clickstreams with geospatial data and also use Rockset as a real-time database downstream, bringing in customer transaction and marketing data, to serve an application making recommendations to users on our site.

Hopefully the use cases discussed above have resonated with a real problem you are trying to solve. Like any other technology, Kafka can be extremely powerful when used correctly and extremely clumsy when not. I hope you now have some more clarity on how to approach a real-time analytics architecture and will be empowered to move your organization into the data future.
