Collaboration on complex development projects almost always presents challenges. For traditional software projects, these challenges are well known, and over the years a number of approaches to addressing them have evolved. But as machine learning (ML) becomes an essential component of more and more systems, it poses a new set of challenges to development teams. Chief among these challenges is getting data scientists (who employ an experimental approach to system model development) and software developers (who rely on the discipline imposed by software engineering principles) to work harmoniously.

In this SEI blog post, which is adapted from a recently published paper to which I contributed, I highlight the findings of a study on which I teamed up with colleagues Nadia Nahar (who led this work as part of her PhD studies at Carnegie Mellon University), Christian Kästner (also from Carnegie Mellon University), and Shurui Zhou (of the University of Toronto). The study sought to identify collaboration challenges common to the development of ML-enabled systems. Through interviews conducted with numerous individuals engaged in the development of ML-enabled systems, we sought to answer our primary research question: What are the collaboration points and corresponding challenges between data scientists and engineers? We also examined the effect of various development environments on these projects. Based on this analysis, we developed preliminary recommendations for addressing the collaboration challenges reported by our interviewees. Our findings and recommendations informed the aforementioned paper, Collaboration Challenges in Building ML-Enabled Systems: Communication, Documentation, Engineering, and Process, which I’m proud to say received a Distinguished Paper Award at the 44th International Conference on Software Engineering (ICSE 2022).

Despite the attention ML-enabled systems have attracted, and the promise of these systems to exceed human-level cognition and spark great advances, moving a machine-learned model to a functional production system has proved very hard. The introduction of ML requires greater expertise and introduces more collaboration points when compared to traditional software development projects. While the engineering aspects of ML have received much attention, the adjoining human factors concerning the need for interdisciplinary collaboration have not.

The Current State of the Practice and Its Limits

Most software projects extend beyond the scope of a single developer, so collaboration is a must. Developers normally divide the work into various software system components, and team members work largely independently until all the system components are ready for integration. Consequently, the technical intersections of the software components themselves (that is, the component interfaces) largely determine the interaction and collaboration points among development team members.

Challenges to collaboration occur, however, when team members cannot easily and informally communicate or when the work requires interdisciplinary collaboration. Differences in experience, professional backgrounds, and expectations about the system can also pose challenges to effective collaboration in traditional top-down, modular development projects. To facilitate collaboration, communication, and negotiation around component interfaces, developers have adopted a range of strategies and often employ informal broadcast tools to keep everyone on the same page. Software lifecycle models, such as waterfall, spiral, and Agile, also help developers plan and design stable interfaces.

ML-enabled systems generally feature a foundation of traditional development into which ML component development is introduced. Developing and integrating these components into the larger system requires separating and coordinating data science and software engineering work to develop the learned models, negotiate the component interfaces, and plan for the system’s operation and evolution. The learned model could be a minor or major component of the overall system, and the system typically includes components for training and monitoring the model.

All of these steps mean that, compared to traditional systems, ML-enabled system development requires expertise in data science for model building and data management tasks. Software engineers not trained in data science who nevertheless take on model building tend to produce ineffective models. Conversely, data scientists tend to prefer to focus on modeling tasks to the exclusion of engineering work that might influence their models. The software engineering community has only recently begun to examine software engineering for ML-enabled systems, and much of this work has focused narrowly on problems such as testing models and ML algorithms, model deployment, and model fairness and robustness. Software engineering research on adopting a system-wide scope for ML-enabled systems has been limited.

Framing a Research Approach Around Real-World Experience in ML-Enabled System Development

Finding limited existing research on collaboration in ML-enabled system development, we adopted a qualitative strategy for our research based on four steps: (1) establishing scope and conducting a literature review, (2) interviewing professionals building ML-enabled systems, (3) triangulating interview findings with our literature review, and (4) validating findings with interviewees. Each of these steps is discussed below:

- Scoping and literature review: We examined the existing literature on software engineering for ML-enabled systems. In so doing, we coded sections of papers that either directly or implicitly addressed collaboration issues among team members with different skills or educational backgrounds. We analyzed the codes and derived the collaboration areas that informed our interview guidance.

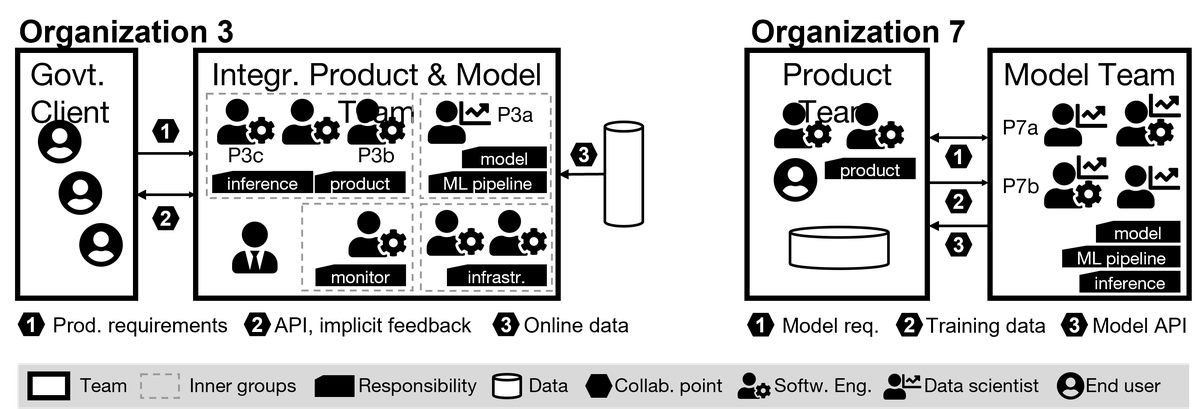

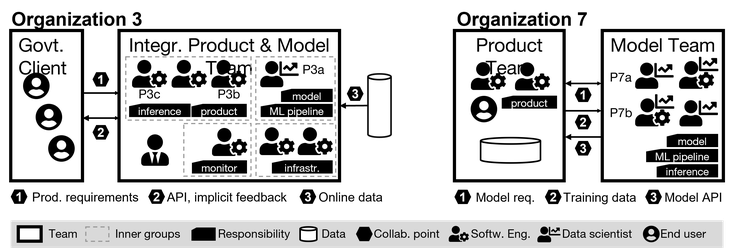

- Interviews: We conducted interviews with 45 developers of ML-enabled systems from 28 different organizations that have only recently adopted ML (see Table 1 for participant demographics). We transcribed the interviews, and then we created visualizations of organizational structure and responsibilities to map challenges to collaboration points (see Figure 1 for sample visualizations). We further analyzed the visualizations to determine whether we could associate collaboration problems with specific organizational structures.

- Triangulation with literature: We connected interview data with related discussions identified in our literature review, along with potential solutions. Out of the 300 papers we read, we identified 61 as possibly relevant and coded them using our codebook.

- Validity check: After creating a full draft of our study, we provided it to our interviewees along with supplementary material and questions prompting them to check for correctness, areas of agreement and disagreement, and any insights gained from reading the study.

Table 1: Participant and Company Demographics

Our interviews with professionals revealed that the number and types of teams developing ML-enabled systems, their composition, their responsibilities, the power dynamics at play, and the formality of their collaborations varied widely from organization to organization. Figure 1 presents a simplified illustration of teams in two organizations. Team composition and responsibility differed for various artifacts (for instance, model, pipeline, data, and responsibility for the final product). We found that teams often have multiple responsibilities and interface with other teams at multiple collaboration points.

Figure 1: Structure of Two Interviewed Organizations

Some teams we examined have responsibility for both model and software development. In other cases, software and model development are handled by different teams. We discerned no clear global patterns across all the teams we studied. However, patterns did emerge when we narrowed the focus to three specific aspects of collaboration:

- requirements and planning

- training data

- product-model integration

Navigating the Tensions Between Product and Model Requirements

To begin, we found key differences in the order in which teams identify product and model requirements:

- Model first (13 of 28 organizations): These teams build the model first and then build the product around the model. The model shapes product requirements. Where model and product teams are different, the model team most often starts the development process.

- Product first (13 of 28 organizations): These teams start with product development and then develop a model to support it. Most often, the product already exists, and new ML development seeks to enhance the product’s capabilities. Model requirements are derived from product requirements, which often constrain model qualities.

- Parallel (2 of 28 organizations): The model and product teams work in parallel.

Regardless of which of these three development trajectories applied to any given organization, our interviews revealed a constant tension between product requirements and model requirements. Three key observations arose from these tensions:

- Product requirements require input from the model team. It’s hard to elicit product requirements without a solid understanding of ML capabilities, so the model team must be involved in the process early. Data scientists reported having to contend with unrealistic expectations about model capabilities, and they frequently had to educate clients and developers about ML techniques to correct these expectations. Where a product-first development trajectory is practiced, it was possible for the product team to ignore data requirements when negotiating product requirements. However, when requirements gathering is left to the model team, key product requirements, such as usability, might be ignored.

- Model development with unclear requirements is common. Despite an expectation that they will work independently, model teams rarely receive adequate requirements. Often, they engage in their work without a full understanding of the product their model is to support. This omission can be a thorny problem for teams that practice model-first development.

- Provided model requirements rarely go beyond accuracy and data security. Ignoring other important requirements, such as latency or scalability, has caused integration and operation problems. Fairness and explainability requirements are rarely considered.

Recommendations

Requirements and planning form a key collaboration point for product and model teams developing ML-enabled systems. Based on our interviews and literature review, we’ve proposed the following recommendations for this collaboration point:

- Involve data scientists early in the process.

- Consider adopting a parallel development trajectory for product and model teams.

- Conduct ML training sessions to educate clients and product teams.

- Adopt more formal requirements documentation for both model and product.
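
One lightweight way to make model requirements more formal is to record them in a small, typed structure that both teams can review and check measurements against. The sketch below is only an illustration: the `ModelRequirements` fields, the threshold values, and the `unmet` helper are hypothetical names that do not come from the study. It simply puts the qualities interviewees said were often left out (latency, scalability, fairness, explainability) next to accuracy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelRequirements:
    """Hypothetical requirements record agreed on by product and model teams."""
    min_accuracy: float                       # fraction in [0, 1]
    max_latency_ms: float                     # per-prediction latency budget
    min_throughput_rps: float                 # scalability target, requests per second
    max_fairness_gap: Optional[float] = None  # max accuracy gap between groups, if required
    needs_explanations: bool = False          # whether predictions must be explainable


def unmet(req: ModelRequirements, measured: dict) -> list:
    """Return the names of the requirements that the measured values do not satisfy."""
    failures = []
    if measured["accuracy"] < req.min_accuracy:
        failures.append("accuracy")
    if measured["latency_ms"] > req.max_latency_ms:
        failures.append("latency")
    if measured["throughput_rps"] < req.min_throughput_rps:
        failures.append("throughput")
    if req.max_fairness_gap is not None and measured["fairness_gap"] > req.max_fairness_gap:
        failures.append("fairness")
    if req.needs_explanations and not measured.get("has_explanations", False):
        failures.append("explainability")
    return failures


if __name__ == "__main__":
    # Illustrative numbers only; real targets would come out of the negotiation.
    req = ModelRequirements(min_accuracy=0.90, max_latency_ms=50,
                            min_throughput_rps=100, max_fairness_gap=0.05)
    measured = {"accuracy": 0.93, "latency_ms": 80,
                "throughput_rps": 120, "fairness_gap": 0.02}
    print(unmet(req, measured))
```

In this example the model is accurate enough but misses the latency budget, which is the kind of gap that otherwise tends to surface only at integration time.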

Addressing Challenges Related to Training Data

Our study revealed that disagreements over training data represented the most common collaboration challenges. These disagreements often stem from the fact that the model team frequently does not own, collect, or understand the data. We observed three organizational structures that influence the collaboration challenges related to training data:

- Provided data: The product team provides data to the model team. Coordination tends to be distant and formal, and the product team holds more power in negotiations over data.

- External data: The model team relies on an external entity for the data. The data comes either from publicly available sources or from a third-party vendor. In the case of publicly available data, the model team has little negotiating power. It holds more negotiating power when hiring a third party to source the data.

- In-house data: Product, model, and data teams all exist within the same organization and make use of that organization’s internal data. In such cases, both product and model teams need to overcome negotiation challenges related to data use stemming from differing priorities, permissions, and data security requirements.

Many interviewees noted dissatisfaction with data quantity and quality. One common problem is that the product team often lacks knowledge about the quality and amount of data needed. Other data problems common to the organizations we examined included the following:

- Provided and public data are often inadequate. Research has raised questions about the representativeness and trustworthiness of such data. Training skew is common: models that show promising results during development fail in production environments because real-world data differs from the provided training data.

- Data understanding and access to data experts often present bottlenecks. Data documentation is almost never adequate. Team members often collect information and keep track of the details in their heads. Model teams who receive data from product teams struggle to get help from the product team to understand the data. The same holds for data obtained from publicly available sources. Even internal data often suffers from evolving and poorly documented data sources.

- Ambiguity arises when hiring a data team. Difficulty sometimes arises when a model team seeks buy-in from the product team on hiring an external data team. Participants in our study noted communication vagueness and hidden assumptions as key challenges in the process. Expectations are communicated verbally, without clear documentation. Consequently, the data team often does not have sufficient context to understand what data is needed.

- There is a need to handle evolving data. Models need to be regularly retrained with more data or adapted to changes in the environment. However, in cases where data is provided continuously, model teams struggle to ensure consistency over time, and most organizations lack the infrastructure to monitor data quality and quantity.

- In-house priorities and security concerns often obstruct data access. Often, in-house projects are local initiatives with at least some management buy-in but little buy-in from other teams focused on their own priorities. These other teams might question the business value of the project, which might not affect their area directly. When data is owned by a different team within the organization, security concerns over data sharing often arise.

Training data of sufficient quality and quantity is crucial for developing ML-enabled systems. Based on our interviews and literature review, we’ve proposed the following recommendations for this collaboration point:

- When planning, budget for data collection and access to domain experts (or even a dedicated data team).

- Adopt a formal contract that specifies data quality and quantity expectations.

- When working with a dedicated data team, make expectations very clear.

- Consider employing a data validation and monitoring infrastructure early in the project.
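
To make the idea of a data contract with automated validation more concrete, here is a minimal sketch using only the Python standard library. Everything in it is an assumption made for illustration: the `DataContract` fields, the `validate` function, and the sample thresholds are hypothetical, and a real project would likely rely on a dedicated validation tool and a much richer contract.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataContract:
    """Hypothetical contract between a data provider and the model team."""
    required_columns: tuple       # columns every delivered batch must contain
    min_rows: int                 # quantity expectation per batch
    max_missing_fraction: float   # quality expectation: allowed share of missing values per column


def validate(rows: list, contract: DataContract) -> list:
    """Check a batch (a list of dicts) against the contract and return the violations found."""
    problems = []
    total = len(rows)
    if total < contract.min_rows:
        problems.append(f"too few rows: {total} < {contract.min_rows}")
    for col in contract.required_columns:
        # A value counts as missing if the key is absent or explicitly None.
        missing = sum(1 for r in rows if r.get(col) is None)
        if total and missing / total > contract.max_missing_fraction:
            problems.append(f"column '{col}': {missing}/{total} values missing")
    return problems


if __name__ == "__main__":
    contract = DataContract(required_columns=("age", "label"),
                            min_rows=5, max_missing_fraction=0.25)
    batch = [
        {"age": 30, "label": 1},
        {"age": None, "label": 0},
        {"age": 41, "label": None},
        {"age": None, "label": 1},
    ]
    for problem in validate(batch, contract):
        print(problem)
```

Running a check like this on every delivered batch gives both teams a shared, written definition of "enough" and "good enough" data, instead of expectations that are only communicated verbally.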

Challenges Integrating the Product and Model in ML-Enabled Systems

At this collaboration point, data scientists and software engineers need to work closely together, frequently across multiple teams. Conflicts often occur at this juncture, however, stemming from unclear processes and responsibilities. Differing practices and expectations also create tensions, as does the way in which engineering responsibilities are assigned for model development and operation. The challenges faced at this collaboration point tended to fall into two broad categories: culture clashes among teams with differing responsibilities and quality assurance for model and project.

Interdisciplinary Collaboration and Cultural Clashes

We observed the following conflicts stemming from differences in software engineering and data science cultures, all of which were amplified by a lack of clarity about responsibilities and boundaries:

- Team responsibilities often do not match capabilities and preferences. Data scientists expressed dissatisfaction when pressed to take on engineering tasks, while software engineers often had insufficient knowledge of models to effectively integrate them.

- Siloing data scientists fosters integration problems. Data scientists often work in isolation with weak requirements and a lack of understanding of the larger context.

- Technical jargon challenges communication. The differing terminology used in each field leads to ambiguity, misunderstanding, and faulty assumptions.

- Code quality, documentation, and versioning expectations differ widely. Software engineers asserted that data scientists do not follow the same development practices or conform to the same quality standards when writing code.

Many conflicts we observed relate to boundaries of responsibility and differing expectations. To address these challenges, we proposed the following recommendations:

- Define processes, responsibilities, and boundaries more carefully.

- Document APIs at collaboration points.

- Recruit dedicated engineering support for model deployment.

- Don’t silo data scientists.

- Establish common terminology.

Interdisciplinary Collaboration and Quality Assurance for Model and Product

During development and integration, questions of responsibility for quality assurance often arise. We noted the following challenges:

- Goals for model adequacy are hard to establish. The model team almost always evaluates the accuracy of the model, but it has difficulty deciding whether the model is good enough owing to a lack of criteria.

- Confidence is limited without clear model evaluation. Model teams do not prioritize evaluation, so they often have no systematic evaluation strategy, which in turn leads to skepticism about the model from other teams.

- Responsibility for system testing is unclear. Teams often struggle with testing the entire system after model integration, with model teams frequently assuming no responsibility for product quality.

- Planning for online testing and monitoring is rare. Though necessary to monitor for training skew and data drift, such testing requires the coordination of teams responsible for product, model, and operation. Furthermore, many organizations don’t do online testing due to the lack of a standard process, automation, or even test awareness.

Based on our interviews and the insights they provided, we developed the following recommendations to address challenges related to quality assurance:

- Prioritize and plan for quality assurance testing.

- The product team should assume responsibility for overall quality and system testing, but it should engage the model team in the creation of a monitoring and experimentation infrastructure.

- Plan for, budget, and assign structured feedback from the product engineering team to the model team.

- Evangelize the benefits of testing in production.

- Define clear quality requirements for model and product.
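
As a rough illustration of what a first piece of monitoring infrastructure might look like, the sketch below flags possible data drift by comparing the mean of a feature in production against its mean in the training data. This is a deliberately crude heuristic chosen for brevity, not a method from the study and not a substitute for a proper statistical test; the function names and the default threshold of 2.0 standard deviations are assumptions.

```python
from statistics import mean, stdev


def mean_shift(train: list, live: list) -> float:
    """Distance of the live mean from the training mean, in training standard deviations."""
    spread = stdev(train)  # sample standard deviation; needs at least two training values
    if spread == 0:
        raise ValueError("training feature has zero variance")
    return abs(mean(live) - mean(train)) / spread


def drifted(train: list, live: list, threshold: float = 2.0) -> bool:
    """Flag possible drift when the shift exceeds an assumed, team-agreed threshold."""
    return mean_shift(train, live) > threshold


if __name__ == "__main__":
    training_values = [10, 12, 11, 13, 9, 11, 10, 12]
    print(drifted(training_values, [11, 10, 12, 11]))   # production data close to training data
    print(drifted(training_values, [18, 19, 17, 20]))   # production data clearly shifted
```

Even a check this simple forces the product, model, and operations teams to agree on which features to watch, what threshold counts as a problem, and who is notified when it is crossed.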

Conclusion: Four Areas for Improving Collaboration on ML-Enabled System Development

Data scientists and software engineers are not the first to realize that interdisciplinary collaboration is challenging, but facilitating such collaboration has not been the focus of organizations developing ML-enabled systems. Our observations indicate that challenges to collaboration on such systems fall along three collaboration points: requirements and project planning, training data, and product-model integration. This post has highlighted our specific findings in these areas, but we see four broad areas for improving collaboration in the development of ML-enabled systems:

Communication: To combat problems arising from miscommunication, we advocate ML literacy for software engineers and managers, and likewise software engineering literacy for data scientists.

Documentation: Practices for documenting model requirements, data expectations, and assured model qualities have yet to take root. Interface documentation already in use may provide a good starting point, but any approach must use a language understood by everyone involved in the development effort.

Engineering: Project managers should ensure sufficient engineering capabilities for both ML and non-ML components and foster product and operations thinking.

Process: The experimental, trial-and-error process of ML model development does not naturally align with the traditional, more structured software process lifecycle. We advocate for further research on integrated process lifecycles for ML-enabled systems.
