
In natural conversations, we don’t say people’s names every time we speak to each other. Instead, we rely on contextual signaling mechanisms to initiate conversations, and eye contact is often all it takes. Google Assistant, now available in more than 95 countries and over 29 languages, has primarily relied on a hotword mechanism (“Hey Google” or “OK Google”) to help more than 700 million people every month get things done across Assistant devices. As virtual assistants become an integral part of our everyday lives, we’re developing ways to initiate conversations more naturally.

At Google I/O 2022, we announced Look and Talk, a major development in our journey to create natural and intuitive ways to interact with Google Assistant-powered home devices. This is the first multimodal, on-device Assistant feature that simultaneously analyzes audio, video, and text to determine when you are speaking to your Nest Hub Max. Using eight machine learning models together, the algorithm can differentiate intentional interactions from passing glances in order to accurately identify a user’s intent to engage with Assistant. Once within 5 ft of the device, the user may simply look at the screen and talk to start interacting with the Assistant.

We developed Look and Talk in alignment with our AI Principles. It meets our strict audio and video processing requirements, and like our other camera sensing features, video never leaves the device. You can always stop, review and delete your Assistant activity at myactivity.google.com. These added layers of protection enable Look and Talk to work just for those who turn it on, while keeping your data safe.

Modeling Challenges

The journey of this feature began as a technical prototype built on top of models developed for academic research. Deployment at scale, however, required solving real-world challenges unique to this feature. It had to:

- Support a range of demographic characteristics (e.g., age, skin tones).

- Adapt to the ambient diversity of the real world, including challenging lighting (e.g., backlighting, shadow patterns) and acoustic conditions (e.g., reverberation, background noise).

- Deal with unusual camera perspectives, since smart displays are commonly used as countertop devices and look up at the user(s), unlike the frontal faces typically used in research datasets to train models.

- Run in real-time to ensure timely responses while processing video on-device.

The evolution of the algorithm involved experiments with approaches ranging from domain adaptation and personalization to domain-specific dataset development, field-testing and feedback, and repeated tuning of the overall algorithm.

Technology Overview

A Look and Talk interaction has three phases. In the first phase, Assistant uses visual signals to detect when a user is demonstrating an intent to engage with it and then “wakes up” to listen to their utterance. The second phase is designed to further validate and understand the user’s intent using visual and acoustic signals. If any signal in the first or second processing phases indicates that it isn’t an Assistant query, Assistant returns to standby mode. These two phases are the core Look and Talk functionality, and are discussed below. The third phase of query fulfillment is typical query flow, and is beyond the scope of this blog.
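The phase progression described above can be pictured as a small state machine. The following is only an illustrative sketch: the `Phase` enum, the `next_phase` function, and the single boolean `signal_ok` are hypothetical names invented here, and the real system combines many model outputs rather than one flag.

```python
from enum import Enum, auto


class Phase(Enum):
    """Hypothetical labels for the interaction phases described in the text."""
    STANDBY = auto()
    ENGAGING = auto()     # phase one: visual signals suggest intent to engage
    LISTENING = auto()    # phase two: visual and acoustic validation
    FULFILLMENT = auto()  # phase three: ordinary query flow


_ORDER = [Phase.STANDBY, Phase.ENGAGING, Phase.LISTENING, Phase.FULFILLMENT]


def next_phase(phase: Phase, signal_ok: bool) -> Phase:
    """Advance one phase when the current signal supports an Assistant query.

    Any negative signal sends the interaction back to standby, mirroring the
    fallback behavior described above. `signal_ok` is a stand-in (assumption)
    for the combined model outputs of the current phase.
    """
    if not signal_ok:
        return Phase.STANDBY
    index = _ORDER.index(phase)
    return _ORDER[min(index + 1, len(_ORDER) - 1)]
```

For example, `next_phase(Phase.LISTENING, False)` returns `Phase.STANDBY`, while a positive signal in the same phase moves on to `Phase.FULFILLMENT`.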

Phase One: Engaging with Assistant

The first phase of Look and Talk is designed to assess whether an enrolled user is intentionally engaging with Assistant. Look and Talk uses face detection to identify the user’s presence, filters for proximity using the detected face box size to infer distance, and then uses the existing Face Match system to determine whether they are enrolled Look and Talk users.
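One common way to infer distance from a face box is the pinhole camera relation, where distance is roughly focal length times real face width divided by the face width in pixels. The post does not say how the feature does this, so the sketch below is an assumption throughout: the function names, the focal length of 1000 pixels, and the average face width of 0.15 m are all illustrative values, and only the 5 ft range comes from the text.

```python
FEET_TO_METERS = 0.3048


def estimate_distance_m(
    face_box_width_px: float,
    focal_length_px: float = 1000.0,  # assumption: camera focal length in pixels
    face_width_m: float = 0.15,       # assumption: typical adult face width
) -> float:
    """Estimate camera-to-face distance with the pinhole camera relation."""
    if face_box_width_px <= 0:
        raise ValueError("face box width must be positive")
    return focal_length_px * face_width_m / face_box_width_px


def within_range(face_box_width_px: float, max_distance_ft: float = 5.0) -> bool:
    """Proximity filter: keep only faces estimated to be within range."""
    return estimate_distance_m(face_box_width_px) <= max_distance_ft * FEET_TO_METERS
```

Under these assumed constants, a 100-pixel-wide face box maps to about 1.5 m and passes the filter, while a 50-pixel box maps to about 3 m and is rejected.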

For an enrolled user within range, a custom eye gaze model determines whether they are looking at the device. This model estimates both the gaze angle and a binary gaze-on-camera confidence from image frames using a multi-tower convolutional neural network architecture, with one tower processing the whole face and another processing patches around the eyes. Since the device screen covers a region underneath the camera that would be natural for a user to look at, we map the gaze angle and binary gaze-on-camera prediction to the device screen area. To ensure that the final prediction is resilient to spurious individual predictions and involuntary eye blinks and saccades, we apply a smoothing function to the individual frame-based predictions.

Figure: Eye-gaze prediction and post-processing overview.
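The post does not specify which smoothing function is applied to the per-frame gaze predictions. As a rough illustration of the idea, the sketch below uses a sliding-window mean with a threshold; the `GazeSmoother` class, the window of five frames, and the 0.6 threshold are all hypothetical choices, not the deployed values.

```python
from collections import deque


class GazeSmoother:
    """Sliding-window mean over per-frame gaze confidences (illustrative only)."""

    def __init__(self, window: int = 5, threshold: float = 0.6) -> None:
        # Both parameters are assumptions chosen for the example.
        self._scores = deque(maxlen=window)
        self._threshold = threshold

    def update(self, gaze_confidence: float) -> bool:
        """Add one frame's confidence and return the smoothed decision.

        Returns False until the window is full, so a single early frame
        cannot trigger a positive decision on its own.
        """
        self._scores.append(gaze_confidence)
        if len(self._scores) < self._scores.maxlen:
            return False
        return sum(self._scores) / len(self._scores) >= self._threshold
```

With these settings, one low-confidence frame in the middle of a steady look (for example a blink) does not flip the decision, and one high-confidence frame in a run of low ones does not trigger it.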

We enforce stricter attention requirements before informing users that the system is ready for interaction to minimize false triggers, e.g., when a passing user briefly glances at the device. Once the user looking at the device starts speaking, we relax the attention requirement, allowing the user to naturally shift their gaze.
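A simple way to picture the strict-then-relaxed attention requirement is a two-threshold check that switches once speech starts. This is a sketch under stated assumptions: the function name and both threshold values are hypothetical, and the actual requirement may involve duration or other signals rather than a single score.

```python
def attention_satisfied(
    smoothed_gaze: float,
    user_is_speaking: bool,
    strict_threshold: float = 0.8,   # assumption: applied before speech starts
    relaxed_threshold: float = 0.4,  # assumption: applied once the user is speaking
) -> bool:
    """Apply a stricter gaze requirement before speech and a looser one after."""
    threshold = relaxed_threshold if user_is_speaking else strict_threshold
    return smoothed_gaze >= threshold
```

With these illustrative values, a gaze score of 0.6 is not enough to signal readiness, but it is enough to keep the interaction going once the user has started to speak.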

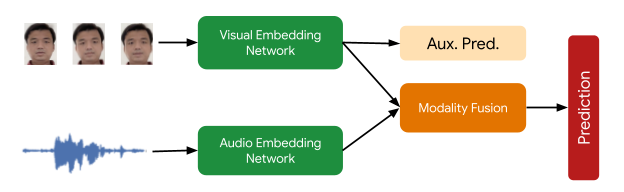

The final signal necessary in this processing phase checks that the Face Matched user is the active speaker. This is provided by a multimodal active speaker detection model that takes as input both video of the user’s face and the audio containing speech, and predicts whether they are speaking. A number of augmentation techniques (including RandAugment, SpecAugment, and augmenting with AudioSet sounds) help improve prediction quality for the in-home domain, boosting end-feature performance by over 10%. The final deployed model is a quantized, hardware-accelerated TFLite model, which uses five frames of context for the visual input and 0.5 seconds for the audio input.

Phase Two: Assistant Starts Listening

In phase two, the system starts listening to the content of the user’s query, still entirely on-device, to further assess whether the interaction is intended for Assistant using additional signals. First, Look and Talk uses Voice Match to further ensure that the speaker is enrolled and matches the earlier Face Match signal. Then, it runs a state-of-the-art automatic speech recognition model on-device to transcribe the utterance.

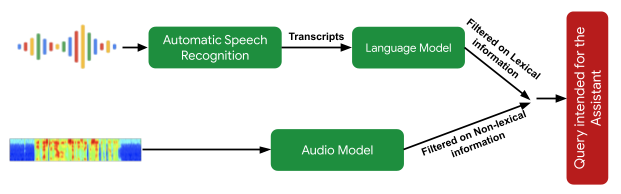

The next critical processing step is the intent understanding algorithm, which predicts whether the user’s utterance was intended to be an Assistant query. This has two parts: 1) a model that analyzes the non-lexical information in the audio (i.e., pitch, speed, hesitation sounds) to determine whether the utterance sounds like an Assistant query, and 2) a text analysis model that determines whether the transcript is an Assistant request. Together, these filter out queries not intended for Assistant. The algorithm also uses contextual visual signals to determine the likelihood that the interaction was intended for Assistant.

Figure: Overview of the semantic filtering approach to determine if a user utterance is a query intended for the Assistant.
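The post does not describe how the acoustic, text, and visual signals are combined. Purely as an illustration of one possible fusion rule, the sketch below lets either the acoustic or the text score veto the query and otherwise thresholds a weighted sum. The `IntentSignals` type, the weights, the veto floor, and the threshold are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IntentSignals:
    """Hypothetical per-utterance scores in [0, 1]."""
    acoustic: float  # does the audio sound like an Assistant query?
    text: float      # does the transcript read like an Assistant request?
    visual: float    # contextual visual likelihood


def is_assistant_query(
    signals: IntentSignals,
    weights: tuple = (0.4, 0.4, 0.2),  # assumption: illustrative weights
    veto_floor: float = 0.2,           # assumption: either filter may reject alone
    threshold: float = 0.5,            # assumption: decision threshold
) -> bool:
    """Combine the three scores into a single accept or reject decision."""
    if min(signals.acoustic, signals.text) < veto_floor:
        return False
    scores = (signals.acoustic, signals.text, signals.visual)
    combined = sum(w * s for w, s in zip(weights, scores))
    return combined >= threshold
```

The veto reflects the idea that both the acoustic and the text model act as filters, so a transcript that clearly is not a request is rejected even when the audio and visual scores are high.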

Finally, when the intent understanding model determines that the user utterance was likely meant for Assistant, Look and Talk moves into the fulfillment phase where it communicates with the Assistant server to obtain a response to the user’s intent and query text.

Performance, Personalization and UX

Each model that supports Look and Talk was evaluated and improved in isolation and then tested in the end-to-end Look and Talk system. The huge variety of ambient conditions in which Look and Talk operates necessitates the introduction of personalization parameters for algorithm robustness. By using signals obtained during the user’s hotword-based interactions, the system personalizes parameters to individual users to deliver improvements over the generalized global model. This personalization also runs entirely on-device.

Without a predefined hotword as a proxy for user intent, latency was a significant concern for Look and Talk. Often, a strong enough interaction signal does not occur until well after the user has started speaking, which can add hundreds of milliseconds of latency, and existing models for intent understanding add to this since they require complete, not partial, queries. To bridge this gap, Look and Talk completely forgoes streaming audio to the server, with transcription and intent understanding being on-device. The intent understanding models can work off of partial utterances. This results in an end-to-end latency comparable with current hotword-based systems.

The UI experience is based on user research to provide well-balanced visual feedback with high learnability. This is illustrated in the figure below.

Figure: Left: The spatial interaction diagram of a user engaging with Look and Talk. Right: The User Interface (UI) experience.

We developed a diverse video dataset with over 3,000 participants to test the feature across demographic subgroups. Modeling improvements driven by diversity in our training data improved performance for all subgroups.

Conclusion

Look and Talk represents a significant step toward making user engagement with Google Assistant as natural as possible. While this is a key milestone in our journey, we hope this will be the first of many improvements to our interaction paradigms that will continue to reimagine the Google Assistant experience responsibly. Our goal is to make getting help feel natural and easy, ultimately saving time so users can focus on what matters most.

Acknowledgements

This work involved collaborative efforts from a multidisciplinary team of software engineers, researchers, UX, and cross-functional contributors. Key contributors from Google Assistant include Alexey Galata, Alice Chuang, Barbara Wang, Britanie Hall, Gabriel Leblanc, Gloria McGee, Hideaki Matsui, James Zanoni, Joanna (Qiong) Huang, Krunal Shah, Kavitha Kandappan, Pedro Silva, Tanya Sinha, Tuan Nguyen, Vishal Desai, Will Truong, Yixing Cai, Yunfan Ye; from Research including Hao Wu, Joseph Roth, Sagar Savla, Sourish Chaudhuri, Susanna Ricco. Thanks to Yuan Yuan and Caroline Pantofaru for their leadership, and everyone on the Nest, Assistant, and Research teams who provided invaluable input toward the development of Look and Talk.
