
Supervised learning is a common approach to machine learning (ML) in which the model is trained using data that is labeled appropriately for the task at hand. Ordinary supervised learning trains on independent and identically distributed (IID) data, where all training examples are sampled from a fixed set of classes, and the model has access to these examples throughout the entire training phase. In contrast, continual learning tackles the problem of training a single model on changing data distributions where different classification tasks are presented sequentially. This is particularly important, for example, to enable autonomous agents to process and interpret continuous streams of information in real-world scenarios.

To illustrate the difference between supervised and continual learning, consider two tasks: (1) classify cats vs. dogs and (2) classify pandas vs. koalas. In supervised learning, which uses IID data, the model is given training data from both tasks and treats it as a single 4-class classification problem. However, in continual learning, these two tasks arrive sequentially, and the model only has access to the training data of the current task. As a result, such models tend to suffer from performance degradation on the previous tasks, a phenomenon called catastrophic forgetting.

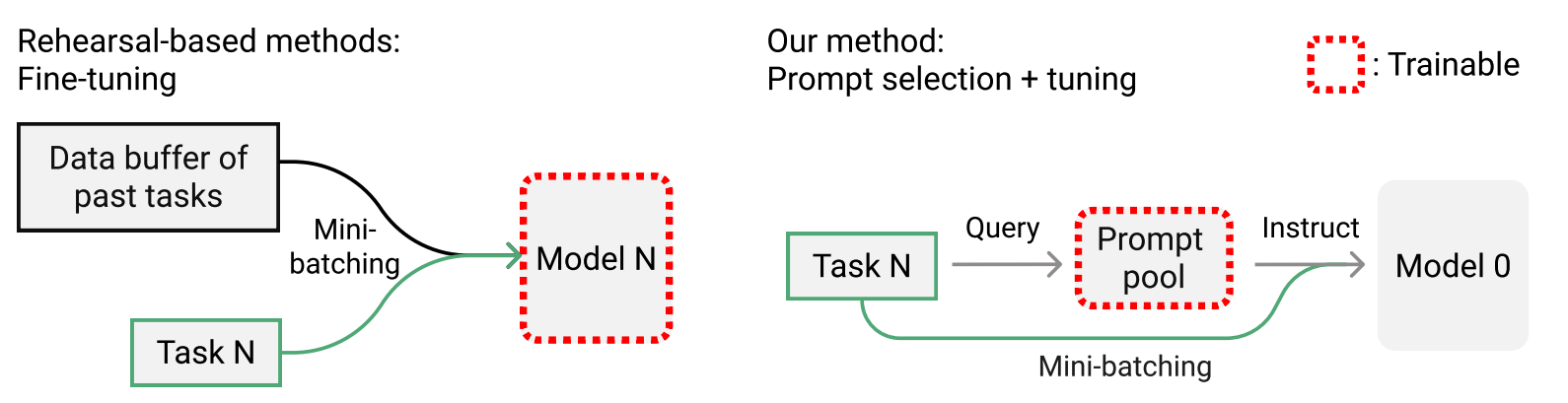

Mainstream solutions try to address catastrophic forgetting by buffering past data in a “rehearsal buffer” and mixing it with current data to train the model. However, the performance of these solutions depends heavily on the size of the buffer and, in some cases, may not be possible at all due to data privacy concerns. Another branch of work designs task-specific components to avoid interference between tasks. But these methods often assume that the task at test time is known, which is not always true, and they require a large number of parameters. The limitations of these approaches raise critical questions for continual learning: (1) Is it possible to have a more effective and compact memory system that goes beyond buffering past data? (2) Can one automatically select relevant knowledge components for an arbitrary sample without knowing its task identity?

In “Learning to Prompt for Continual Learning”, presented at CVPR 2022, we attempt to answer these questions. Drawing inspiration from prompting techniques in natural language processing, we propose a novel continual learning framework called Learning to Prompt (L2P). Instead of continually re-learning all the model weights for each sequential task, we provide learnable task-relevant “instructions” (i.e., prompts) to guide pre-trained backbone models through sequential training via a pool of learnable prompt parameters. L2P is applicable to various challenging continual learning settings and outperforms previous state-of-the-art methods consistently on all benchmarks. It achieves competitive results against rehearsal-based methods while also being more memory efficient. Most importantly, L2P is the first to introduce the idea of prompting in the field of continual learning.

Prompt Pool and Instance-Wise Query

Given a pre-trained Transformer model, “prompt-based learning” modifies the original input using a fixed template. Imagine a sentiment analysis task is given the input “I like this cat”. A prompt-based method will transform the input to “I like this cat. It looks X”, where the “X” is an empty slot to be predicted (e.g., “nice”, “cute”, etc.) and “It looks X” is the so-called prompt. By adding prompts to the input, one can condition the pre-trained models to solve many downstream tasks. While designing fixed prompts requires prior knowledge along with trial and error, prompt tuning prepends a set of learnable prompts to the input embedding to instruct the pre-trained backbone to learn a single downstream task, under the transfer learning setting.
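To make the prepending step in prompt tuning concrete, here is a minimal sketch in plain Python. The names `make_prompt` and `prepend_prompt`, the embedding width of 4, and the random initialization scale are illustrative assumptions, not details from the paper. Embeddings are represented as nested lists rather than framework tensors, and no training is shown.

```python
import random


def make_prompt(prompt_len, dim, seed=0):
    """Create a prompt as prompt_len vectors of width dim.

    The small Gaussian initialization is an assumption made for
    illustration; in practice these values would be trainable parameters.
    """
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 0.02) for _ in range(dim)] for _ in range(prompt_len)]


def prepend_prompt(prompt, token_embeddings):
    """Return a new sequence: prompt vectors followed by the input embeddings."""
    if prompt and token_embeddings and len(prompt[0]) != len(token_embeddings[0]):
        raise ValueError("prompt and input embeddings must share the same width")
    return [list(v) for v in prompt] + [list(v) for v in token_embeddings]


# Three hypothetical token embeddings of width 4.
tokens = [[0.1] * 4 for _ in range(3)]
prompt = make_prompt(prompt_len=2, dim=4)

# The frozen backbone would consume this extended sequence.
extended = prepend_prompt(prompt, tokens)
print(len(extended), len(extended[0]))
```

The sequence length grows by the prompt length while the embedding width stays the same, so the backbone needs no architectural change to accept the extended input.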

In the continual learning scenario, L2P maintains a learnable prompt pool, where prompts can be flexibly grouped as subsets to work jointly. Specifically, each prompt is associated with a key that is learned by reducing the cosine similarity loss between matched input query features. These keys are then utilized by a query function to dynamically look up a subset of task-relevant prompts based on the input features. At test time, inputs are mapped by the query function to the top-N closest keys in the prompt pool, and the associated prompt embeddings are then fed to the rest of the model to generate the output prediction. At training, we optimize the prompt pool and the classification head via the cross-entropy loss.
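The key-based lookup can be sketched as follows. This is an illustration under stated assumptions, not the authors' implementation: the function names are hypothetical, the prompts are stand-in strings instead of embedding matrices, the keys and query are hand-picked 2-D vectors, and the matching loss is taken to be the sum of cosine distances (1 minus cosine similarity) between the query and its selected keys.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def select_prompts(query, keys, prompts, top_n):
    """Return (indices, prompts) for the top_n keys most similar to the query."""
    scores = [cosine_similarity(query, k) for k in keys]
    ranked = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)
    chosen = ranked[:top_n]
    return chosen, [prompts[i] for i in chosen]


def key_matching_loss(query, keys, chosen):
    """Assumed form of the key loss: summed cosine distance to the chosen keys."""
    return sum(1.0 - cosine_similarity(query, keys[i]) for i in chosen)


# Hypothetical pool of three prompts with 2-D keys.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
prompts = ["prompt_0", "prompt_1", "prompt_2"]

# Query features for one input (in L2P these come from the input features).
query = [0.9, 0.1]

chosen, selected = select_prompts(query, keys, prompts, top_n=2)
loss = key_matching_loss(query, keys, chosen)
print(chosen, selected)
```

Because selection depends only on the query features of each input, nothing in this lookup requires a task label, which is what makes the mechanism instance-wise.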

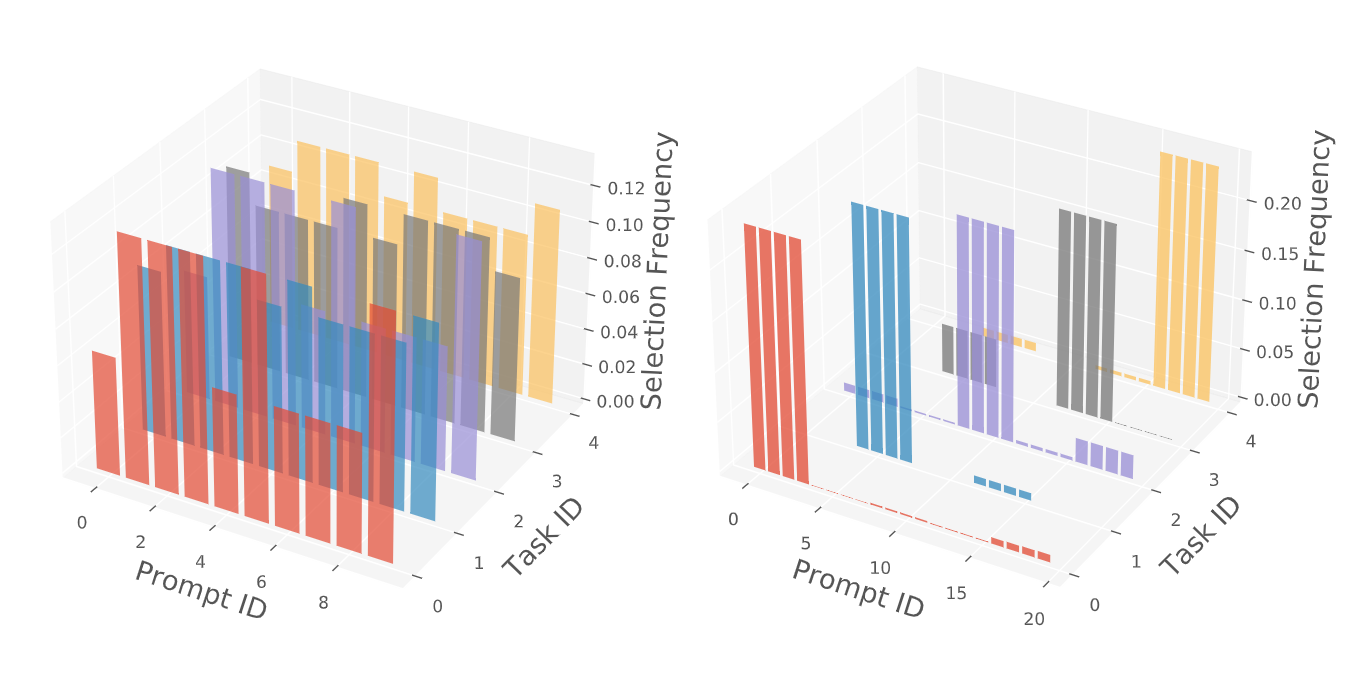

Intuitively, similar input examples tend to choose similar sets of prompts and vice versa. Thus, prompts that are frequently shared encode more generic knowledge while other prompts encode more task-specific knowledge. Moreover, prompts store high-level instructions and keep lower-level pre-trained representations frozen, thus catastrophic forgetting is mitigated even without the necessity of a rehearsal buffer. The instance-wise query mechanism removes the necessity of knowing the task identity or boundaries, enabling this approach to address the under-investigated challenge of task-agnostic continual learning.

Effectiveness of L2P

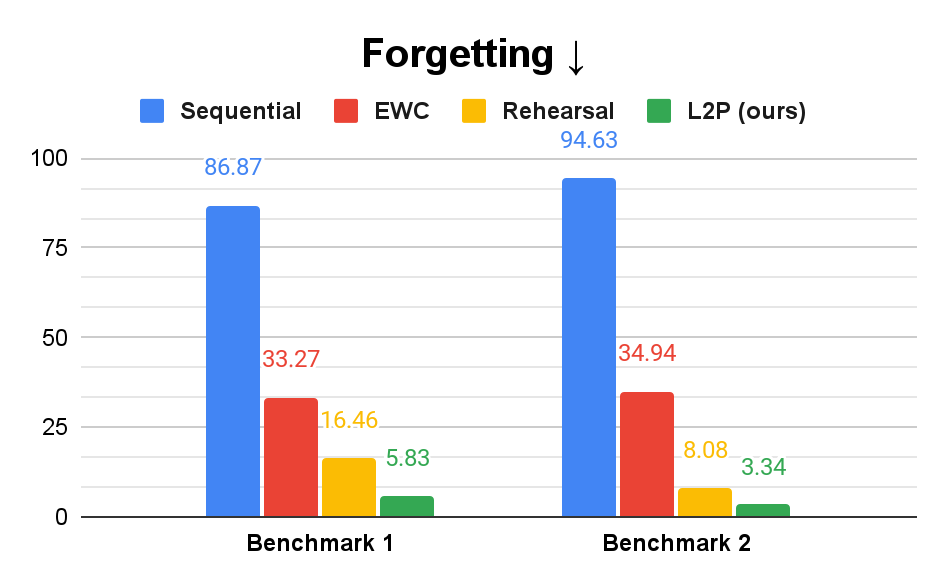

We evaluate the effectiveness of L2P against different baseline methods using an ImageNet pre-trained Vision Transformer (ViT) on representative benchmarks. The naïve baseline, called Sequential in the graphs, refers to training a single model sequentially on all tasks. The EWC model adds a regularization term to mitigate forgetting and the Rehearsal model saves past examples to a buffer for mixed training with current data. To measure the overall continual learning performance, we measure both the accuracy and the average difference between the best accuracy achieved during training and the final accuracy for all tasks (except the last task), which we call forgetting. We find that L2P outperforms the Sequential and EWC methods significantly in both metrics. Notably, L2P even surpasses the Rehearsal approach, which uses an additional buffer to save past data. Because the L2P approach is orthogonal to Rehearsal, its performance could be further improved if it, too, used a rehearsal buffer.
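The two metrics can be computed from a record of per-task accuracies, as in the sketch below. The layout of `acc_history` and the accuracy values are made up for illustration and are not results from the paper. The sketch also assumes that the "best accuracy achieved during training" for a task is taken over every evaluation from the point the task was learned through the final one.

```python
def average_accuracy(acc_history):
    """Mean accuracy over all tasks after training on the last task."""
    final = acc_history[-1]
    return sum(final) / len(final)


def forgetting(acc_history):
    """Average drop from the best accuracy to the final accuracy per task.

    acc_history[step][task] is the accuracy on `task` after training on
    task number `step`, so row `step` has step + 1 entries. The last task
    is excluded because it has had no chance to be forgotten.
    """
    num_tasks = len(acc_history)
    if num_tasks < 2:
        return 0.0
    final = acc_history[-1]
    drops = []
    for task in range(num_tasks - 1):
        best = max(acc_history[step][task] for step in range(task, num_tasks))
        drops.append(best - final[task])
    return sum(drops) / len(drops)


# Hypothetical accuracies for a three-task sequence (illustrative only).
acc_history = [
    [0.90],              # after task 0
    [0.70, 0.85],        # after task 1
    [0.60, 0.80, 0.88],  # after task 2
]

print(average_accuracy(acc_history))  # roughly 0.76
print(forgetting(acc_history))        # roughly 0.175
```

Higher accuracy is better and lower forgetting is better, so a method can be compared on both axes at once.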

We also visualize the prompt selection result from our instance-wise query strategy on two different benchmarks, where one has similar tasks and the other has varied tasks. The results indicate that L2P promotes more knowledge sharing between similar tasks by having more shared prompts, and less knowledge sharing between varied tasks by having more task-specific prompts.

Conclusion

In this work, we present L2P to address key challenges in continual learning from a new perspective. L2P does not require a rehearsal buffer or known task identity at test time to achieve high performance. Further, it can handle various complex continual learning scenarios, including the challenging task-agnostic setting. Because large-scale pre-trained models are widely used in the machine learning community for their robust performance on real-world problems, we believe that L2P opens a new learning paradigm towards practical continual learning applications.

Acknowledgements

We gratefully acknowledge the contributions of other co-authors, including Chen-Yu Lee, Han Zhang, Ruoxi Sun, Xiaoqi Ren, Guolong Su, Vincent Perot, Jennifer Dy, and Tomas Pfister. We’d also like to thank Chun-Liang Li, Jeremy Martin Kubica, Sayna Ebrahimi, Stratis Ioannidis, Nan Hua, and Emmanouil Koukoumidis for their valuable discussions and feedback, and Tom Small for figure creation.
