
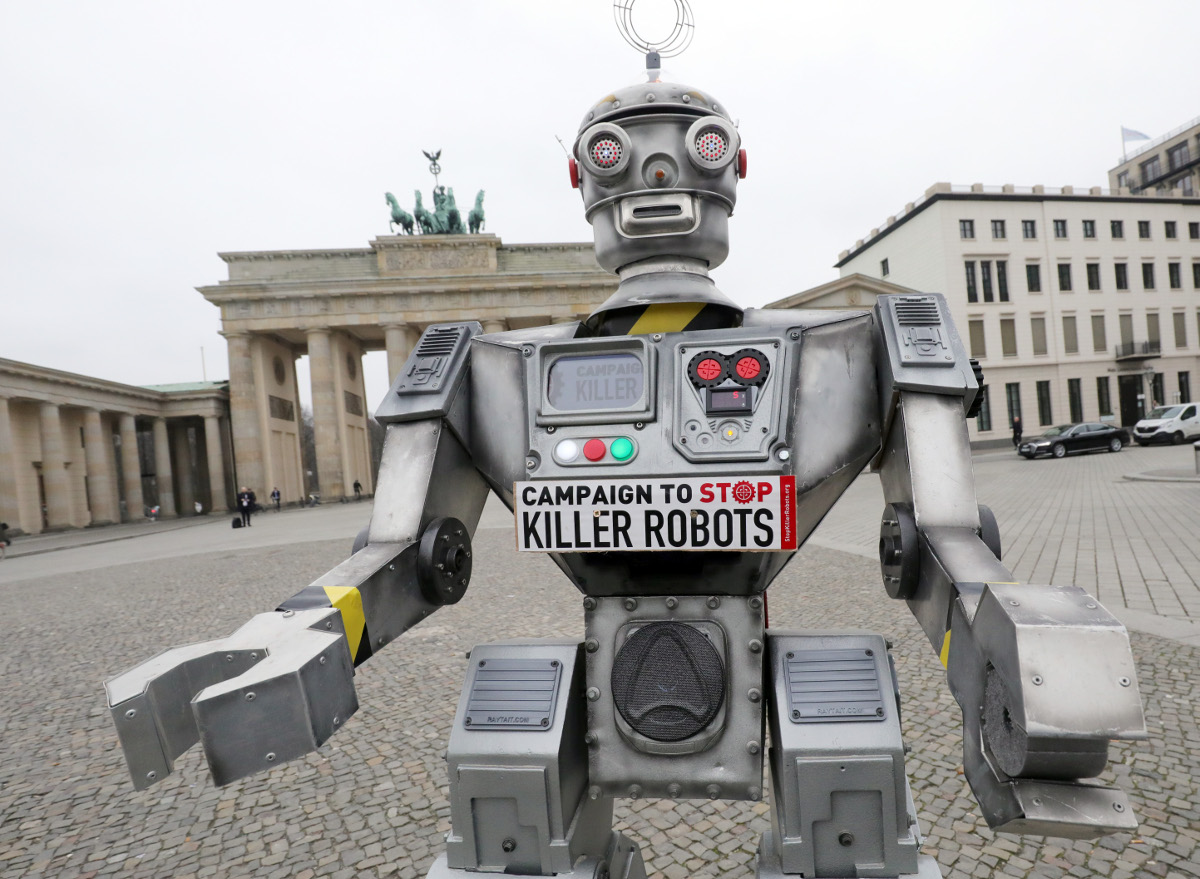

Humanitarian groups have been calling for a ban on autonomous weapons. Wolfgang Kumm/picture alliance via Getty Images

By James Dawes

Autonomous weapon systems – commonly known as killer robots – may have killed human beings for the first time ever last year, according to a recent United Nations Security Council report on the Libyan civil war. History could well identify this as the starting point of the next major arms race, one that has the potential to be humanity’s final one.

The United Nations Convention on Certain Conventional Weapons debated the question of banning autonomous weapons at its once-every-five-years review meeting in Geneva Dec. 13-17, 2021, but didn’t reach consensus on a ban. Established in 1983, the convention has been updated regularly to restrict some of the world’s cruelest conventional weapons, including land mines, booby traps and incendiary weapons.

Autonomous weapon systems are robots with lethal weapons that can operate independently, selecting and attacking targets without a human weighing in on those decisions. Militaries around the world are investing heavily in autonomous weapons research and development. The U.S. alone budgeted US$18 billion for autonomous weapons between 2016 and 2020.

Meanwhile, human rights and humanitarian organizations are racing to establish regulations and prohibitions on such weapons development. Without such checks, foreign policy experts warn that disruptive autonomous weapons technologies will dangerously destabilize current nuclear strategies, both because they could radically change perceptions of strategic dominance, increasing the risk of preemptive attacks, and because they could be combined with chemical, biological, radiological and nuclear weapons themselves.

As a specialist in human rights with a focus on the weaponization of artificial intelligence, I find that autonomous weapons make the unsteady balances and fragmented safeguards of the nuclear world – for example, the U.S. president’s minimally constrained authority to launch a strike – more unsteady and more fragmented. Given the pace of research and development in autonomous weapons, the U.N. meeting might have been the last chance to head off an arms race.

Lethal errors and black boxes

I see four primary dangers with autonomous weapons. The first is the problem of misidentification. When selecting a target, will autonomous weapons be able to distinguish between hostile soldiers and 12-year-olds playing with toy guns? Between civilians fleeing a conflict site and insurgents making a tactical retreat?

Killer robots, like the drones in the 2017 short film ‘Slaughterbots,’ have long been a major subgenre of science fiction. (Warning: graphic depictions of violence.)

The problem here is not that machines will make such errors and humans won’t. It’s that the difference between human error and algorithmic error is like the difference between mailing a letter and tweeting. The scale, scope and speed of killer robot systems – ruled by one targeting algorithm, deployed across an entire continent – could make misidentifications by individual humans like a recent U.S. drone strike in Afghanistan seem like mere rounding errors by comparison.

Autonomous weapons expert Paul Scharre uses the metaphor of the runaway gun to explain the difference. A runaway gun is a defective machine gun that continues to fire after a trigger is released. The gun continues to fire until ammunition is depleted because, so to speak, the gun does not know it is making an error. Runaway guns are extremely dangerous, but fortunately they have human operators who can break the ammunition link or try to point the weapon in a safe direction. Autonomous weapons, by definition, have no such safeguard.

Importantly, weaponized AI need not even be defective to produce the runaway gun effect. As multiple studies on algorithmic errors across industries have shown, the very best algorithms – operating as designed – can generate internally correct outcomes that nonetheless spread terrible errors rapidly across populations.

For example, a neural net designed for use in Pittsburgh hospitals identified asthma as a risk-reducer in pneumonia cases; image recognition software used by Google identified Black people as gorillas; and a machine-learning tool used by Amazon to rank job candidates systematically assigned negative scores to women.

The problem is not just that when AI systems err, they err in bulk. It is that when they err, their makers often don’t know why they did and, therefore, how to correct them. The black box problem of AI makes it almost impossible to imagine morally responsible development of autonomous weapons systems.

The proliferation problems

The next two dangers are the problems of low-end and high-end proliferation. Let’s start with the low end. The militaries developing autonomous weapons now are proceeding on the assumption that they will be able to contain and control the use of autonomous weapons. But if the history of weapons technology has taught the world anything, it’s this: Weapons spread.

Market pressures could result in the creation and widespread sale of what can be thought of as the autonomous weapon equivalent of the Kalashnikov assault rifle: killer robots that are cheap, effective and almost impossible to contain as they circulate around the globe. “Kalashnikov” autonomous weapons could get into the hands of people outside of government control, including international and domestic terrorists.

The Kargu-2, made by a Turkish defense contractor, is a cross between a quadcopter drone and a bomb. It has artificial intelligence for finding and tracking targets, and might have been used autonomously in the Libyan civil war to attack people. Ministry of Defense of Ukraine, CC BY

High-end proliferation is just as bad, however. Nations could compete to develop increasingly devastating versions of autonomous weapons, including ones capable of mounting chemical, biological, radiological and nuclear arms. The moral dangers of escalating weapon lethality would be amplified by escalating weapon use.

High-end autonomous weapons are likely to lead to more frequent wars because they will decrease two of the primary forces that have historically prevented and shortened wars: concern for civilians abroad and concern for one’s own soldiers. The weapons are likely to be equipped with expensive ethical governors designed to minimize collateral damage, using what U.N. Special Rapporteur Agnes Callamard has called the “myth of a surgical strike” to quell moral protests. Autonomous weapons will also reduce both the need for and risk to one’s own soldiers, dramatically altering the cost-benefit analysis that nations undergo while launching and maintaining wars.

Asymmetric wars – that is, wars waged on the soil of nations that lack competing technology – are likely to become more common. Think about the global instability caused by Soviet and U.S. military interventions during the Cold War, from the first proxy war to the blowback experienced around the world today. Multiply that by every country currently aiming for high-end autonomous weapons.

Undermining the laws of war

Finally, autonomous weapons will undermine humanity’s final stopgap against war crimes and atrocities: the international laws of war. These laws, codified in treaties reaching as far back as the 1864 Geneva Convention, are the international thin blue line separating war with honor from massacre. They’re premised on the idea that people can be held accountable for their actions even during wartime, that the right to kill other soldiers during combat doesn’t give the right to murder civilians. A prominent example of someone held to account is Slobodan Milosevic, former president of the Federal Republic of Yugoslavia, who was indicted on charges of crimes against humanity and war crimes by the U.N.’s International Criminal Tribunal for the Former Yugoslavia.

But how can autonomous weapons be held accountable? Who is to blame for a robot that commits war crimes? Who would be put on trial? The weapon? The soldier? The soldier’s commanders? The corporation that made the weapon? Nongovernmental organizations and experts in international law worry that autonomous weapons will lead to a serious accountability gap.

To hold a soldier criminally responsible for deploying an autonomous weapon that commits war crimes, prosecutors would need to prove both actus reus and mens rea, Latin terms describing a guilty act and a guilty mind. This would be difficult as a matter of law, and possibly unjust as a matter of morality, given that autonomous weapons are inherently unpredictable. I believe the distance separating the soldier from the independent decisions made by autonomous weapons in rapidly evolving environments is simply too great.

The legal and moral challenge is not made easier by shifting the blame up the chain of command or back to the site of production. In a world without regulations that mandate meaningful human control of autonomous weapons, there will be war crimes with no war criminals to hold accountable. The structure of the laws of war, along with their deterrent value, will be significantly weakened.

A new global arms race

Imagine a world in which militaries, insurgent groups and international and domestic terrorists can deploy theoretically unlimited lethal force at theoretically zero risk at times and places of their choosing, with no resulting legal accountability. It is a world where the sort of unavoidable algorithmic errors that plague even tech giants like Amazon and Google can now lead to the elimination of whole cities.

In my opinion, the world should not repeat the catastrophic mistakes of the nuclear arms race. It should not sleepwalk into dystopia.


This is an updated version of an article originally published on September 29, 2021.

James Dawes does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.


The Conversation is an independent source of news and views, sourced from the academic and research community and delivered direct to the public.
