
Large language models (LLMs) such as GPT-4, Llama, and Gemini are some of the most significant advancements in the field of artificial intelligence (AI), and their ability to understand and generate human language is transforming the way that humans communicate with machines. LLMs are pretrained on vast amounts of text data, enabling them to recognize language structure and semantics, as well as build a broad knowledge base that covers a wide range of topics. This generalized information can be used to drive a range of applications, including virtual assistants, text or code autocompletion, and text summarization; however, many fields require more specialized knowledge and expertise.

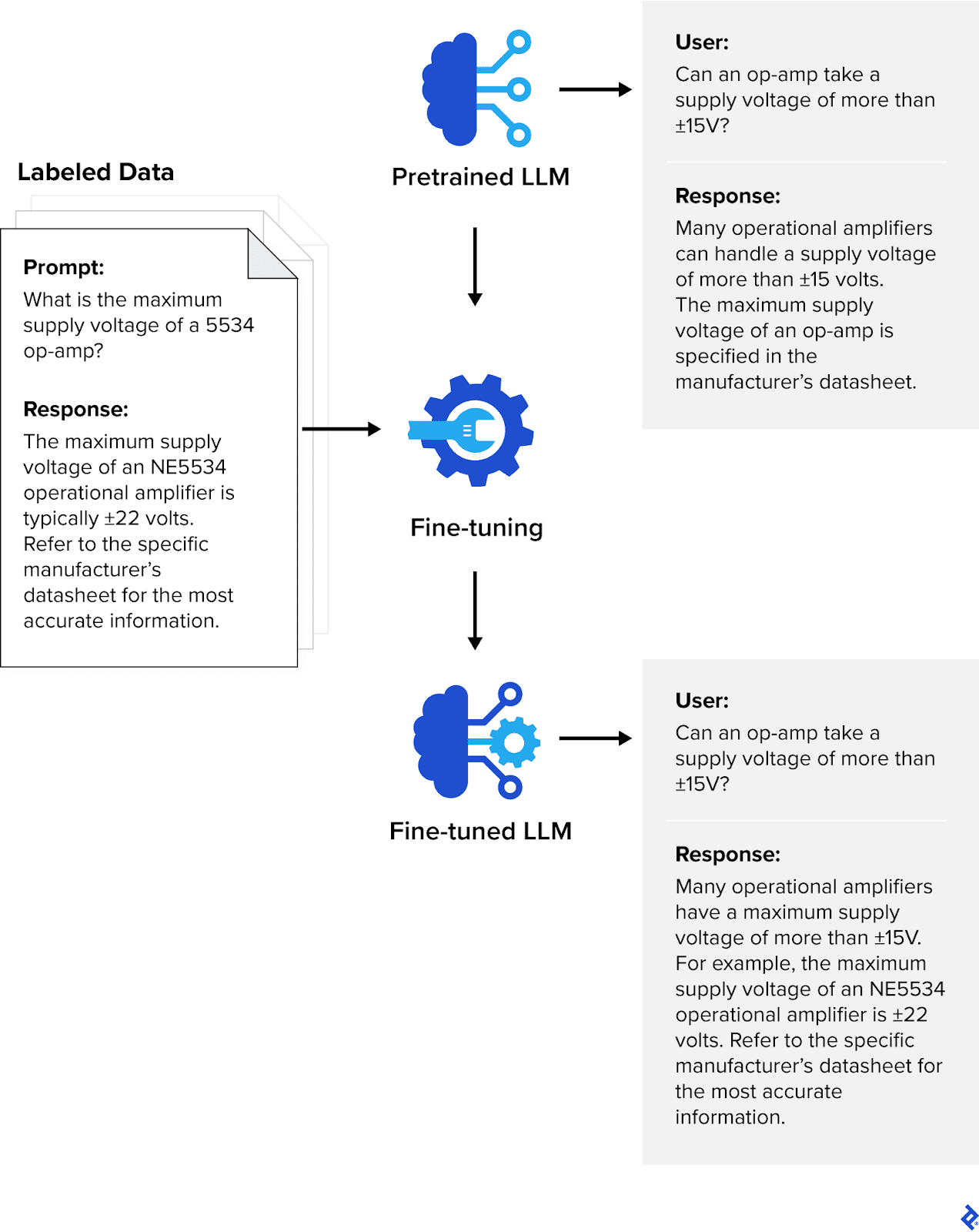

A domain-specific language model can be implemented in two ways: building the model from scratch, or fine-tuning a pretrained LLM. Building a model from scratch is a computationally and financially expensive process that requires huge amounts of data, but fine-tuning can be done with smaller datasets. In the fine-tuning process, an LLM undergoes additional training using domain-specific datasets that are curated and labeled by subject matter experts with a deep understanding of the field. While pretraining gives the LLM general knowledge and linguistic capabilities, fine-tuning imparts more specialized skills and expertise.

LLMs can be fine-tuned for most industries or domains; the key requirement is high-quality training data with accurate labeling. Through my experience developing LLMs and machine learning (ML) tools for universities and clients across industries like finance and insurance, I’ve gathered several proven best practices and identified common pitfalls to avoid when labeling data for fine-tuning ML models. Data labeling plays a major role in computer vision (CV) and audio processing, but for this guide, I focus on LLMs and natural language processing (NLP) data labeling, including a walkthrough of how to label data for the fine-tuning of OpenAI’s GPT-4o.

What Are Fine-tuned LLMs?

LLMs are a type of foundation model, which is a general-purpose machine learning model capable of performing a broad range of tasks. Fine-tuned LLMs are models that have received further training, making them more useful for specialized industries and tasks. LLMs are trained on language data and have an exceptional command of syntax, semantics, and context; even though they are extremely versatile, they may underperform on more specialized tasks where domain expertise is required. For these applications, the foundation LLM can be fine-tuned using smaller labeled datasets that focus on specific domains. Fine-tuning leverages supervised learning, a category of machine learning in which the model is shown both the input object and the desired output value (the annotations). These prompt-response pairs enable the model to learn the relationship between the input and output so that it can make similar predictions on unseen data.

Fine-tuned LLMs have already proven to be invaluable in streamlining products and services across a range of industries:

- Healthcare: HCA Healthcare, one of the largest hospital networks in the US, uses Google’s MedLM to transcribe doctor-patient interactions in emergency rooms and to read electronic health records to identify important points. MedLM is a series of models that are fine-tuned for the healthcare industry. MedLM is based on Med-PaLM 2, the first LLM to reach expert-level performance (85%+) on questions similar to those found on the US Medical Licensing Examination (USMLE).

- Finance: Institutions such as Morgan Stanley, Bank of America, and Goldman Sachs use fine-tuned LLMs to analyze market trends, parse financial documents, and detect fraud. FinGPT, an open-source LLM that aims to democratize financial data, is fine-tuned on financial news and social media posts, making it highly effective at sentiment analysis. FinBERT is another open-source model fine-tuned on financial data and designed for financial sentiment analysis.

- Legal: While a fine-tuned LLM can’t replace human lawyers, it can assist them with legal research and contract analysis. Casetext’s CoCounsel is an AI legal assistant that automates many of the tasks that slow down the legal process, such as analyzing and drafting legal documents. CoCounsel is powered by GPT-4 and fine-tuned with all of the information in Casetext’s legal database.

Compared to foundation LLMs, fine-tuned LLMs show considerable improvements with inputs in their specialized domains, but the quality of the training data is paramount. The fine-tuning data for CoCounsel, for example, was based on approximately 30,000 legal questions refined by a team of lawyers, domain experts, and AI engineers over a period of six months. It was deemed ready for launch only after about 4,000 hours of work. Although CoCounsel has already been released commercially, it continues to be fine-tuned and improved, a key step in keeping any model up to date.

The Data Labeling Process

The annotations required for fine-tuning consist of instruction-expected response pairs, where each input corresponds with an expected output. While selecting and labeling data may seem like a straightforward process, several considerations add to the complexity. The data should be clear and well defined; it must also be relevant, yet cover a comprehensive range of potential interactions. This includes scenarios that may have a high level of ambiguity, such as performing sentiment analysis on product reviews that are sarcastic in nature. In general, the more data a model is trained on, the better; however, when collecting LLM training data, care should be taken to ensure that it is representative of a broad range of contexts and linguistic nuances.

Once the data is collected, it typically requires cleaning and preprocessing to remove noise and inconsistencies. Duplicate records and outliers are removed, and missing values are substituted via imputation. Unintelligible text is also flagged for investigation or removal.
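As a rough illustration of the cleaning step, the sketch below normalizes whitespace, drops empty or near-empty records, and removes duplicates. The function name `clean_records` and the `min_chars` threshold are assumptions made for this example; real pipelines usually add checks tailored to the dataset.

```python
import re
import unicodedata

def clean_records(texts, min_chars=3):
    """Normalize whitespace, drop near-empty strings, and remove duplicates."""
    seen = set()
    cleaned = []
    for text in texts:
        if text is None:
            continue  # missing value: skipped here rather than imputed
        # Normalize Unicode forms and collapse runs of whitespace.
        normalized = unicodedata.normalize("NFKC", text)
        normalized = re.sub(r"\s+", " ", normalized).strip()
        if len(normalized) < min_chars:
            continue  # too short to carry meaning
        key = normalized.casefold()
        if key in seen:
            continue  # duplicate once case and spacing are ignored
        seen.add(key)
        cleaned.append(normalized)
    return cleaned

raw = ["Great  product!", "great product!", "", None, "Arrived broken.\n"]
cleaned = clean_records(raw)
print(cleaned)
```

Keeping the first occurrence of each duplicate preserves the original casing of the text while still shrinking the dataset.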

At the annotation stage, data is tagged with the appropriate labels. Human annotators play a critical role in the process, as they provide the insight necessary for accurate labels. To take some of the workload off annotators, many labeling platforms offer AI-assisted prelabeling, an automatic data labeling process that creates the initial labels and identifies important words and phrases.

After the data is labeled, the labels undergo validation and quality assurance (QA), a review for accuracy and consistency. Data points that were labeled by multiple annotators are reviewed to achieve consensus. Automated tools can also be used to validate the data and flag any discrepancies. After the QA process, the labeled data is ready to be used for model training.
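One way to picture the consensus check is a majority vote per data point combined with an agreement statistic between annotators. The sketch below uses Cohen’s kappa for two annotators; the function names and the 0.5 threshold are illustrative assumptions, not a prescribed QA procedure.

```python
from collections import Counter

def majority_label(labels, min_agreement=0.5):
    """Return (label, agreement); label is None when no clear majority exists."""
    counts = Counter(labels)
    label, votes = counts.most_common(1)[0]
    agreement = votes / len(labels)
    return (label if agreement > min_agreement else None), agreement

def cohen_kappa(a, b):
    """Cohen's kappa for two annotators who labeled the same items."""
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    count_a, count_b = Counter(a), Counter(b)
    # Agreement expected by chance, from each annotator's label frequencies.
    expected = sum(count_a[k] * count_b[k] for k in count_a.keys() | count_b.keys()) / (n * n)
    if expected == 1.0:
        return 1.0
    return (observed - expected) / (1 - expected)

annotator_1 = ["pos", "pos", "neg", "neg"]
annotator_2 = ["pos", "neg", "neg", "neg"]
kappa = cohen_kappa(annotator_1, annotator_2)
print(majority_label(["pos", "pos", "neg"]), round(kappa, 2))
```

Items for which `majority_label` returns `None` are natural candidates for a second review pass.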

Annotation Guidelines and Standards for NLP

One of the most important early steps in the data annotation workflow is creating a clear set of guidelines and standards for human annotators to follow. Guidelines should be easy to understand and consistent in order to avoid introducing any variability that could confuse the model during training.

Text classification, such as labeling the body of an email as spam, is a common data labeling task. The guidelines for text classification should include clear definitions for each potential category, as well as instructions on how to handle text that may not fit into any category.

When labeling text, annotators often perform named entity recognition (NER), identifying and tagging names of people, organizations, locations, and other proper nouns. The guidelines for NER tasks should list all potential entity types with examples of how to handle them. This includes edge cases, such as partial matches or nested entities.

Annotators are often tasked with labeling the sentiment of text as positive, negative, or neutral. With sentiment analysis, each category should be clearly defined. Because sentiments can often be subtle or mixed, examples should be provided to help annotators distinguish between them. The guidelines should also address potential biases related to gender, race, or cultural context.

Coreference resolution refers to the identification of all expressions that refer to the same entity. The guidelines for coreference resolution should provide instructions on how to track and label entities across different sentences and documents, and specify how to handle pronouns.

With part-of-speech (POS) tagging, annotators label each word with a part of speech, for example, noun, adjective, or verb. For POS tagging, the guidelines should include instructions on how to handle ambiguous words or phrases that could fit into multiple categories.

Because LLM data labeling often involves subjective judgment, detailed guidelines on how to handle ambiguity and borderline cases will help annotators produce consistent and correct labels. One example is Universal NER, a project consisting of multilingual datasets with crowdsourced annotations; its annotation guidelines provide detailed information and examples for each entity type, as well as the best ways to handle ambiguity.

Best Practices for NLP and LLM Data Labeling

Due to the potentially subjective nature of text data, there may be challenges in the annotation process. Many of these challenges can be addressed by following a set of data labeling best practices. Before you start, make sure you have a comprehensive understanding of the problem you are solving. The more information you have, the better able you will be to create a dataset that covers all edge cases and variations. When recruiting annotators, your vetting process should be equally comprehensive. Data labeling is a task that requires reasoning and insight, as well as strong attention to detail. These additional strategies are highly beneficial to the annotation process:

- Iterative refinement: The dataset can be divided into small subsets and labeled in phases. Through feedback and quality checks, the process and guidelines can be improved between phases, with any potential pitfalls identified and corrected early.

- Divide and conquer approach: Complex tasks can be broken up into steps. With sentiment analysis, words or phrases containing sentiment can be identified first, with the overall sentiment of the paragraph determined using rule-based, model-assisted automation.

Advanced Techniques for NLP and LLM Data Labeling

There are several advanced techniques that can improve the efficiency, accuracy, and scalability of the labeling process. Many of these techniques take advantage of automation and machine learning models to optimize the workload for human annotators, achieving better results with less manual effort.

The manual labeling workload can be reduced by using active learning algorithms, in which pretrained ML models identify the data points that would benefit most from human annotation. These include data points where the model has the lowest confidence in the predicted label (uncertainty sampling), and borderline cases, where the data points fall closest to the decision boundary between two classes (margin sampling).
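The two selection strategies can be sketched in a few lines, assuming the model exposes a list of class probabilities for each data point. The function names and the sample probabilities below are made up for illustration.

```python
def least_confidence(probs):
    """Uncertainty score: 1 minus the probability of the predicted class."""
    return 1.0 - max(probs)

def margin(probs):
    """Gap between the two most likely classes; a small gap means a borderline case."""
    top_two = sorted(probs, reverse=True)[:2]
    return top_two[0] - top_two[1]

def select_for_annotation(predictions, k, strategy="uncertainty"):
    """Return the indices of the k data points a human should label first."""
    indices = range(len(predictions))
    if strategy == "uncertainty":
        ranked = sorted(indices, key=lambda i: least_confidence(predictions[i]), reverse=True)
    elif strategy == "margin":
        ranked = sorted(indices, key=lambda i: margin(predictions[i]))
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    return ranked[:k]

# Hypothetical class probabilities for four unlabeled reviews.
predictions = [
    [0.98, 0.01, 0.01],  # confident prediction
    [0.40, 0.35, 0.25],  # low confidence overall
    [0.50, 0.49, 0.01],  # two classes nearly tied
    [0.70, 0.20, 0.10],
]
print(select_for_annotation(predictions, 2, "uncertainty"))
print(select_for_annotation(predictions, 2, "margin"))
```

The two strategies rank the same data differently: the second item has the least confident top prediction, while the third sits closest to the boundary between two classes.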

NER tasks can be streamlined with gazetteers, which are essentially predefined lists of entities and their corresponding types. Using a gazetteer, the identification of common entities can be automated, freeing up humans to focus on the ambiguous data points.
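A gazetteer lookup can be as simple as a dictionary plus a longest-match-first scan. The entries and the `tag_entities` helper below are hypothetical and only meant to show the idea; production systems typically handle casing, inflection, and tokenization more carefully.

```python
import re

# Hypothetical gazetteer: entity surface form -> entity type.
GAZETTEER = {
    "Bank of America": "ORG",
    "Morgan Stanley": "ORG",
    "New York": "LOC",
    "America": "LOC",
}

def tag_entities(text, gazetteer):
    """Return (start, end, surface, type) tuples, preferring longer entries on overlap."""
    taken = [False] * len(text)
    matches = []
    for name in sorted(gazetteer, key=len, reverse=True):
        pattern = r"\b" + re.escape(name) + r"\b"
        for m in re.finditer(pattern, text):
            if any(taken[m.start():m.end()]):
                continue  # already covered by a longer entity
            for i in range(m.start(), m.end()):
                taken[i] = True
            matches.append((m.start(), m.end(), m.group(), gazetteer[name]))
    return sorted(matches)

sentence = "Bank of America and Morgan Stanley have offices in New York."
entities = tag_entities(sentence, GAZETTEER)
print([(surface, kind) for _, _, surface, kind in entities])
```

Matching longer entries first keeps "America" from being tagged separately inside "Bank of America", which is one simple way to deal with nested entities.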

Longer text passages can be shortened via text summarization. Using an ML model to highlight key sentences or summarize longer passages can reduce the amount of time it takes for human annotators to perform sentiment analysis or text classification.

The training dataset can be expanded with data augmentation. Synthetic data can be automatically generated through paraphrasing, back translation, and replacing words with synonyms. A generative adversarial network (GAN) can also be used to generate data points that mimic a given dataset. These techniques enhance the training dataset, making the resulting model significantly more robust, with minimal additional manual labeling.
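Of the augmentation methods listed, synonym replacement is the easiest to sketch without external models. The synonym table and the `synonym_variants` helper below are assumptions for this example; the label of the original example is simply carried over to each variant.

```python
import random

# Hypothetical synonym table; a real project might use a thesaurus or a paraphrasing model.
SYNONYMS = {
    "reliable": ["dependable", "trustworthy"],
    "fast": ["quick", "rapid"],
}

def synonym_variants(sentence, synonyms, rng, n_variants=2, max_attempts=50):
    """Return up to n_variants distinct rewrites with known words swapped for synonyms."""
    words = sentence.split()
    replaceable = [i for i, word in enumerate(words) if word.lower() in synonyms]
    if not replaceable:
        return []
    variants = set()
    for _ in range(max_attempts):
        if len(variants) == n_variants:
            break
        new_words = list(words)
        for i in replaceable:
            new_words[i] = rng.choice(synonyms[words[i].lower()])
        variants.add(" ".join(new_words))
    return sorted(variants)

original = ("The charger is reliable and fast", "positive")
rng = random.Random(0)  # fixed seed so the sketch is repeatable
augmented = [(text, original[1]) for text in synonym_variants(original[0], SYNONYMS, rng)]
print(augmented)
```

Synonym swaps can shift meaning in subtle ways, so a sample of the generated examples is usually worth a human spot check.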

Weak supervision is a term that covers a variety of techniques used to train models with noisy, inaccurate, or otherwise incomplete data. One type of weak supervision is distant supervision, where existing labeled data from a related task is used to infer relationships in unlabeled data. For example, a product review labeled with a positive sentiment may contain words like “reliable” and “high quality,” which can be used to help determine the sentiment of an unlabeled review. Lexical resources, like a medical dictionary, can also be used to aid in NER. Weak supervision makes it possible to label large datasets very quickly or when manual labeling is too expensive. This comes at the expense of accuracy, however, and if the highest-quality labels are required, human annotators should be involved.
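The keyword idea from the product review example can be written as small labeling functions whose votes are combined. This is a minimal sketch: the keyword sets, function names, and tie-breaking rule are assumptions, and libraries built for weak supervision use more sophisticated aggregation.

```python
from collections import Counter

ABSTAIN = None
# Hypothetical keywords, imagined as harvested from already-labeled reviews.
POSITIVE_KEYWORDS = {"reliable", "high quality", "excellent"}
NEGATIVE_KEYWORDS = {"broken", "refund", "disappointing"}

def lf_positive(text):
    """Vote 'positive' when a known positive keyword appears."""
    lowered = text.lower()
    return "positive" if any(k in lowered for k in POSITIVE_KEYWORDS) else ABSTAIN

def lf_negative(text):
    """Vote 'negative' when a known negative keyword appears."""
    lowered = text.lower()
    return "negative" if any(k in lowered for k in NEGATIVE_KEYWORDS) else ABSTAIN

def weak_label(text, labeling_functions):
    """Combine votes; abstain when nothing fires or the vote is tied."""
    votes = [vote for vote in (lf(text) for lf in labeling_functions) if vote is not ABSTAIN]
    if not votes:
        return ABSTAIN
    ranked = Counter(votes).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return ABSTAIN  # conflicting evidence: leave for a human annotator
    return ranked[0][0]

functions = [lf_positive, lf_negative]
print(weak_label("Reliable and high quality.", functions))
print(weak_label("Reliable, but it arrived broken.", functions))
```

Examples on which the functions abstain or disagree are exactly the ones worth routing to human annotators.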

Finally, with the availability of modern “benchmark” LLMs such as GPT-4, the annotation process can be completely automated with LLM-generated labels, meaning that the response for an instruction-expected response pair is generated by the LLM. For example, a product review can be input into the LLM along with instructions to classify whether the sentiment of the review is positive, negative, or neutral, creating a labeled data point that can be used to train another LLM. In many cases, the entire process can be automated, with the instructions also generated by the LLM. Though data labeling with a benchmark LLM can make the process faster, it will not give the fine-tuned model knowledge beyond what the LLM already has. To advance the capabilities of the current generation of ML models, human insight is required.

There are several tools and platforms that make the data labeling workflow more efficient. Smaller, lower-budget projects can take advantage of open-source data labeling software such as Doccano and Label Studio. For larger projects, commercial platforms offer more comprehensive AI-assisted prelabeling; project, team, and QA management tools; dashboards to visualize progress and analytics; and, most importantly, a support team. Some of the more widely used commercial tools include Labelbox, Amazon’s SageMaker Ground Truth, Snorkel Flow, and SuperAnnotate.

Additional tools that can help with data labeling for LLMs include the following:

- Cleanlab uses statistical methods and model analysis to identify and fix issues in datasets, including outliers, duplicates, and label errors. Any issues are highlighted for human review along with suggestions for corrections.

- AugLy is a data augmentation library that supports text, image, audio, and video data. Developed by Meta AI, AugLy provides more than 100 augmentation techniques that can be used to generate synthetic data for model training.

- skweak is an open-source Python library that combines different sources of weak supervision to generate labeled data. It focuses on NLP tasks, and allows users to generate heuristic rules or use pretrained models and distant supervision to perform NER, text classification, and identification of relationships in text.

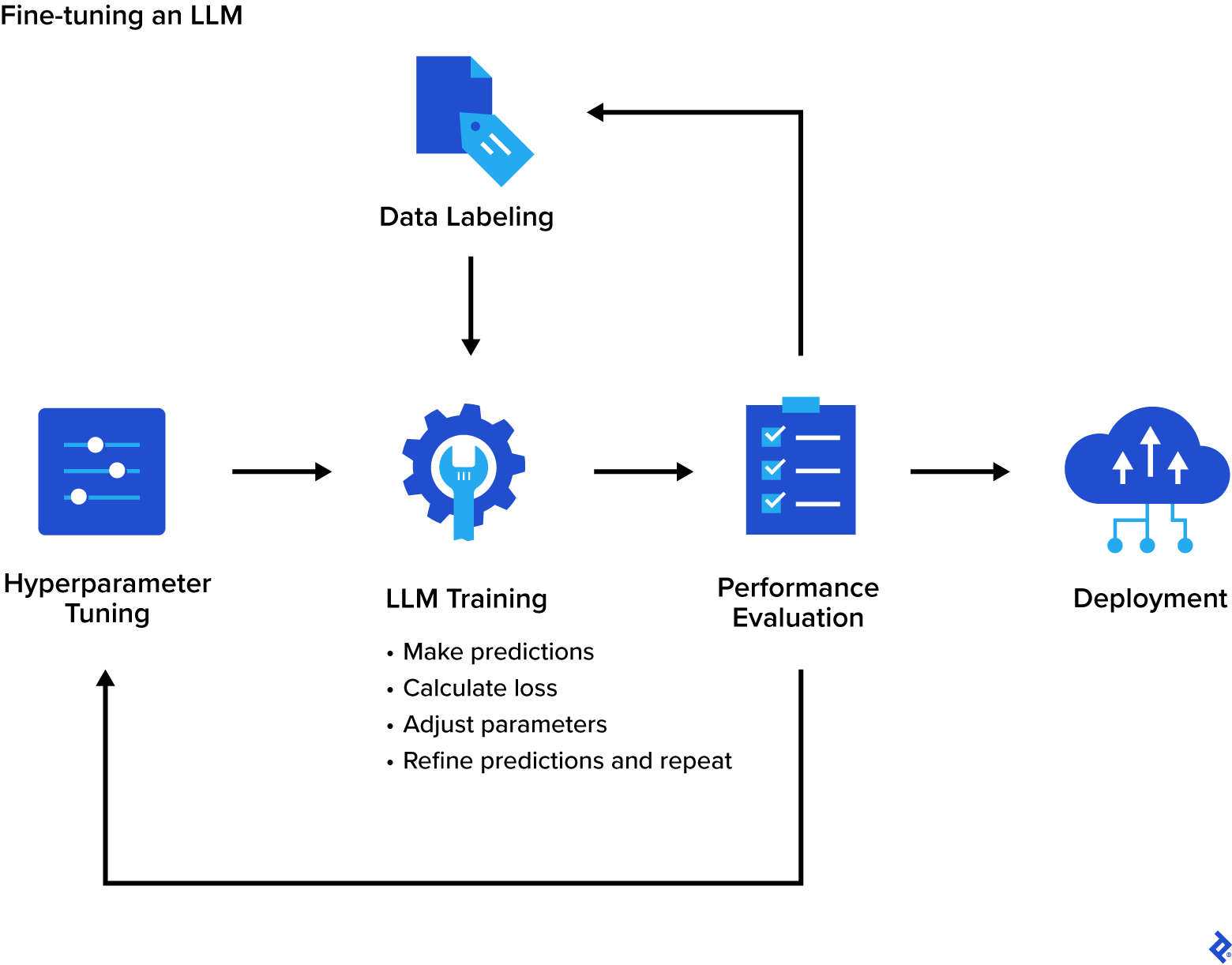

An Overview of the LLM Fine-tuning Process

The first step in the fine-tuning process is selecting the pretrained LLM. There are several sources for pretrained models, including Hugging Face’s Transformers or NLP Cloud, which offer a range of LLMs as well as a platform for training and deployment. Pretrained LLMs can also be obtained from OpenAI, Kaggle, and Google’s TensorFlow Hub.

Training data should generally be large and diverse, covering a wide range of edge cases and ambiguities. A dataset that is too small can lead to overfitting, where the model learns the training dataset too well and, as a result, performs poorly on unseen data. Overfitting can also be caused by training for too many epochs, or complete passes through the dataset. Training data that is not diverse can lead to bias, where the model performs poorly on underrepresented scenarios. Additionally, bias can be introduced by annotators. To minimize bias in the labels, the annotation team should have diverse backgrounds and proper training on how to recognize and reduce their own biases.

Hyperparameter tuning can have a significant impact on the training results. Hyperparameters control how the model learns, and optimizing these settings can prevent undesired outcomes such as overfitting. Some key hyperparameters include the following:

- The learning rate specifies how much the internal parameters (weights and biases) are adjusted at each iteration, essentially determining the speed at which the model learns.

- The batch size specifies the number of training samples used in each iteration.

- The number of epochs specifies how many times the process is run. One epoch is one complete pass through the entire dataset.
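Batch size and number of epochs together determine how many parameter updates the model receives. The small calculation below makes that relationship concrete; the dataset sizes are arbitrary examples, and it assumes the final partial batch of each epoch is still used.

```python
import math

def optimizer_steps(n_examples, batch_size, epochs):
    """Total parameter updates when every batch, including a final partial one, is used."""
    steps_per_epoch = math.ceil(n_examples / batch_size)
    return steps_per_epoch * epochs

print(optimizer_steps(1000, 32, 3))  # 32 batches per epoch over 3 epochs
print(optimizer_steps(10, 4, 3))     # 3 batches per epoch over 3 epochs
```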

Common techniques for hyperparameter tuning include grid search, random search, and Bayesian optimization. Dedicated libraries such as Optuna and Ray Tune are also designed to streamline the hyperparameter tuning process.
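Grid search, the simplest of these techniques, tries every combination of candidate values and keeps the best one. In the sketch below, `validation_loss` is a made-up stand-in for "train the model, then measure the loss on validation data"; in practice each evaluation is a full training run.

```python
import itertools
import math

def validation_loss(learning_rate, batch_size):
    """Hypothetical objective that is lowest near learning_rate=1e-4 and batch_size=16."""
    return (math.log10(learning_rate) + 4) ** 2 + ((batch_size - 16) / 16) ** 2

def grid_search(grid, objective):
    """Evaluate every combination in the grid and return (best_params, best_score)."""
    best_params, best_score = None, float("inf")
    for values in itertools.product(*grid.values()):
        params = dict(zip(grid.keys(), values))
        score = objective(**params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score

grid = {"learning_rate": [1e-5, 1e-4, 1e-3], "batch_size": [8, 16, 32]}
best_params, best_score = grid_search(grid, validation_loss)
print(best_params)
```

The cost grows with the product of the grid sizes, which is why random search and Bayesian optimization are often preferred once there are more than a few hyperparameters.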

Once the data is labeled and has gone through the validation and QA process, the actual fine-tuning of the model can begin. In a typical training algorithm, the model generates predictions on batches of data in a step called the forward pass. The predictions are then compared with the labels, and the loss (a measure of how different the predictions are from the actual values) is calculated. Next, the model performs a backward pass, calculating how much each parameter contributed to the loss. Finally, an optimizer, such as Adam or SGD, is used to adjust the model’s internal parameters in order to improve the predictions. These steps are repeated, enabling the model to refine its predictions iteratively until the overall loss is minimized. This training process is typically carried out using tools like Hugging Face’s Transformers, NLP Cloud, or Google Colab. The fine-tuned model can be evaluated against performance metrics such as perplexity, METEOR, BERTScore, and BLEU.
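The forward pass, loss, backward pass, and optimizer step can be seen end to end in a toy model. The sketch below trains a one-feature logistic regression with plain SGD in pure Python; it is not how an LLM is fine-tuned in practice (frameworks compute gradients automatically), and the data and settings are invented for illustration.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train(data, learning_rate=0.5, batch_size=2, epochs=100, seed=0):
    """Mini-batch SGD on a one-feature logistic regression; returns (w, b, loss_history)."""
    rng = random.Random(seed)
    data = list(data)
    w, b = 0.0, 0.0
    history = []
    for _ in range(epochs):
        rng.shuffle(data)
        epoch_loss = 0.0
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            # Forward pass: predictions for the batch.
            preds = [sigmoid(w * x + b) for x, _ in batch]
            # Loss: mean binary cross-entropy between predictions and labels.
            loss = -sum(y * math.log(p) + (1 - y) * math.log(1 - p)
                        for p, (_, y) in zip(preds, batch)) / len(batch)
            # Backward pass: gradient of the loss for each parameter.
            grad_w = sum((p - y) * x for p, (x, y) in zip(preds, batch)) / len(batch)
            grad_b = sum(p - y for p, (_, y) in zip(preds, batch)) / len(batch)
            # Optimizer step (plain SGD): move against the gradient.
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
            epoch_loss += loss * len(batch)
        history.append(epoch_loss / len(data))
    return w, b, history

toy_data = [(-2.0, 0), (-1.0, 0), (1.0, 1), (2.0, 1)]
w, b, history = train(toy_data)
print(round(history[0], 3), round(history[-1], 3))
```

The loss recorded for the last epoch should be well below that of the first, which is the same signal practitioners watch for when fine-tuning a much larger model.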

After the fine-tuning process is complete, the model can be deployed into production. There are several options for the deployment of ML models, including NLP Cloud, Hugging Face’s Model Hub, or Amazon’s SageMaker. ML models can also be deployed on premises using frameworks like Flask or FastAPI. Locally deployed models are often used for development and testing, as well as in applications where data privacy and security are a concern.

Additional challenges when fine-tuning an LLM include data leakage and catastrophic interference:

Data leakage occurs when information in the training data also appears in the test data, leading to an overly optimistic assessment of model performance. Maintaining strict separation between training, validation, and test data is effective in reducing data leakage.
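One simple safeguard is to deduplicate prompts before splitting and then check that no test prompt also appears in the training set. The sketch below shows a two-way split for brevity (a validation set would be carved out the same way); the helper names and the normalization rule are assumptions for this example.

```python
import random

def normalize(prompt):
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(prompt.lower().split())

def split_without_leakage(examples, test_fraction=0.2, seed=0):
    """Deduplicate by normalized prompt, then split into (train, test)."""
    unique = {}
    for prompt, response in examples:
        unique.setdefault(normalize(prompt), (prompt, response))
    items = list(unique.values())
    random.Random(seed).shuffle(items)
    n_test = max(1, round(len(items) * test_fraction))
    return items[n_test:], items[:n_test]

def leaked(train, test):
    """Return test prompts that also appear in the training set."""
    train_keys = {normalize(prompt) for prompt, _ in train}
    return [prompt for prompt, _ in test if normalize(prompt) in train_keys]

examples = [
    ("What is Ohm's law?", "V = I * R"),
    ("what is  ohm's law?", "V = IR"),  # near-duplicate of the first prompt
    ("What does a diode do?", "It conducts current mainly in one direction."),
    ("What is a capacitor?", "A component that stores charge."),
    ("What is an inductor?", "A component that stores energy in a magnetic field."),
    ("What is a resistor?", "A component that limits current."),
]
train_set, test_set = split_without_leakage(examples)
print(len(train_set), len(test_set), leaked(train_set, test_set))
```

Splitting the raw list without deduplication could place the two Ohm’s law prompts on opposite sides of the split, which is exactly the situation `leaked` is meant to detect.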

Catastrophic interference, or catastrophic forgetting, can occur when a model is trained sequentially on different tasks or datasets. When a model is fine-tuned for a specific task, the new information it learns changes its internal parameters. This change may cause a decrease in performance on more general tasks. Effectively, the model “forgets” some of what it has learned. Research is ongoing on how to prevent catastrophic interference; however, some techniques that can reduce it include elastic weight consolidation (EWC), parameter-efficient fine-tuning (PEFT), and replay-based methods in which old training data is mixed in with the new training data, helping the model to remember previous tasks. Implementing architectures such as progressive neural networks (PNN) can also prevent catastrophic interference.
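The replay idea amounts to blending a sample of older examples into the new training set. The sketch below is deliberately minimal; `replay_ratio` (old examples added per new example) is an assumed knob for this illustration, not a standard parameter.

```python
import random

def mix_with_replay(new_examples, old_examples, replay_ratio, rng):
    """Blend new examples with a random sample of old ones.

    replay_ratio is the number of old examples added per new example.
    """
    n_replay = min(len(old_examples), round(len(new_examples) * replay_ratio))
    mixed = list(new_examples) + rng.sample(list(old_examples), n_replay)
    rng.shuffle(mixed)
    return mixed

new_task = [("electrical-engineering", i) for i in range(8)]
general = [("general", i) for i in range(100)]
mixed = mix_with_replay(new_task, general, replay_ratio=0.25, rng=random.Random(0))
print(len(mixed))
```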

Fine-tuning GPT-4o With Label Studio

OpenAI currently supports fine-tuning for GPT-3.5 Turbo, GPT-4o, GPT-4o mini, babbage-002, and davinci-002 on its developer platform.

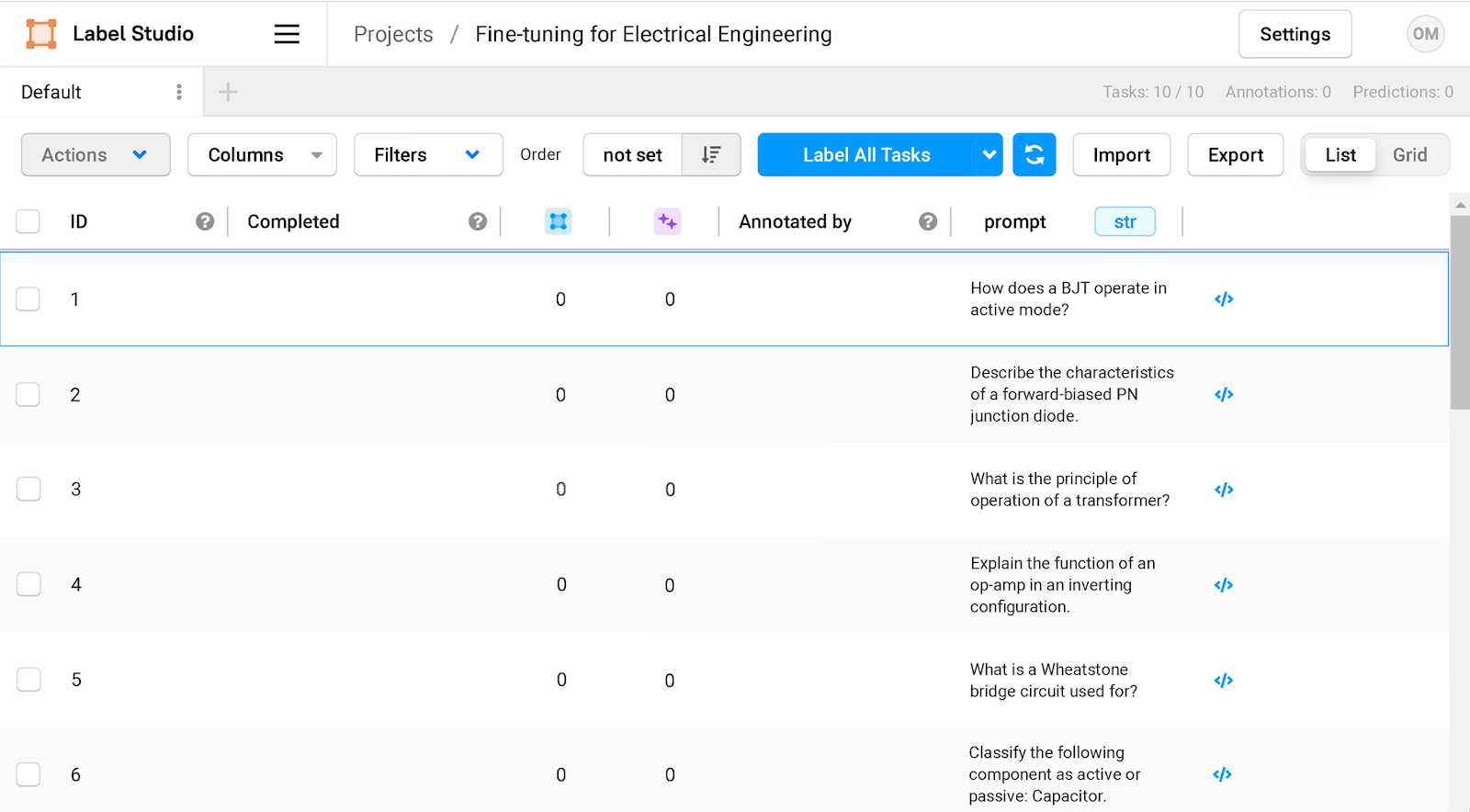

To annotate the training data, we will use the free Community Edition of Label Studio.

First, install Label Studio by running the following command:

pip install label-studio

Label Studio can also be installed using Homebrew, Docker, or from source. Label Studio’s documentation details each of the different methods.

Once installed, start the Label Studio server:

label-studio start

Point your browser to http://localhost:8080 and sign up with an email address and password. Once you have logged in, click the Create button to start a new project. After the new project is created, select the template for fine-tuning by going to Settings > Labeling Interface > Browse Templates > Generative AI > Supervised LLM Fine-tuning.

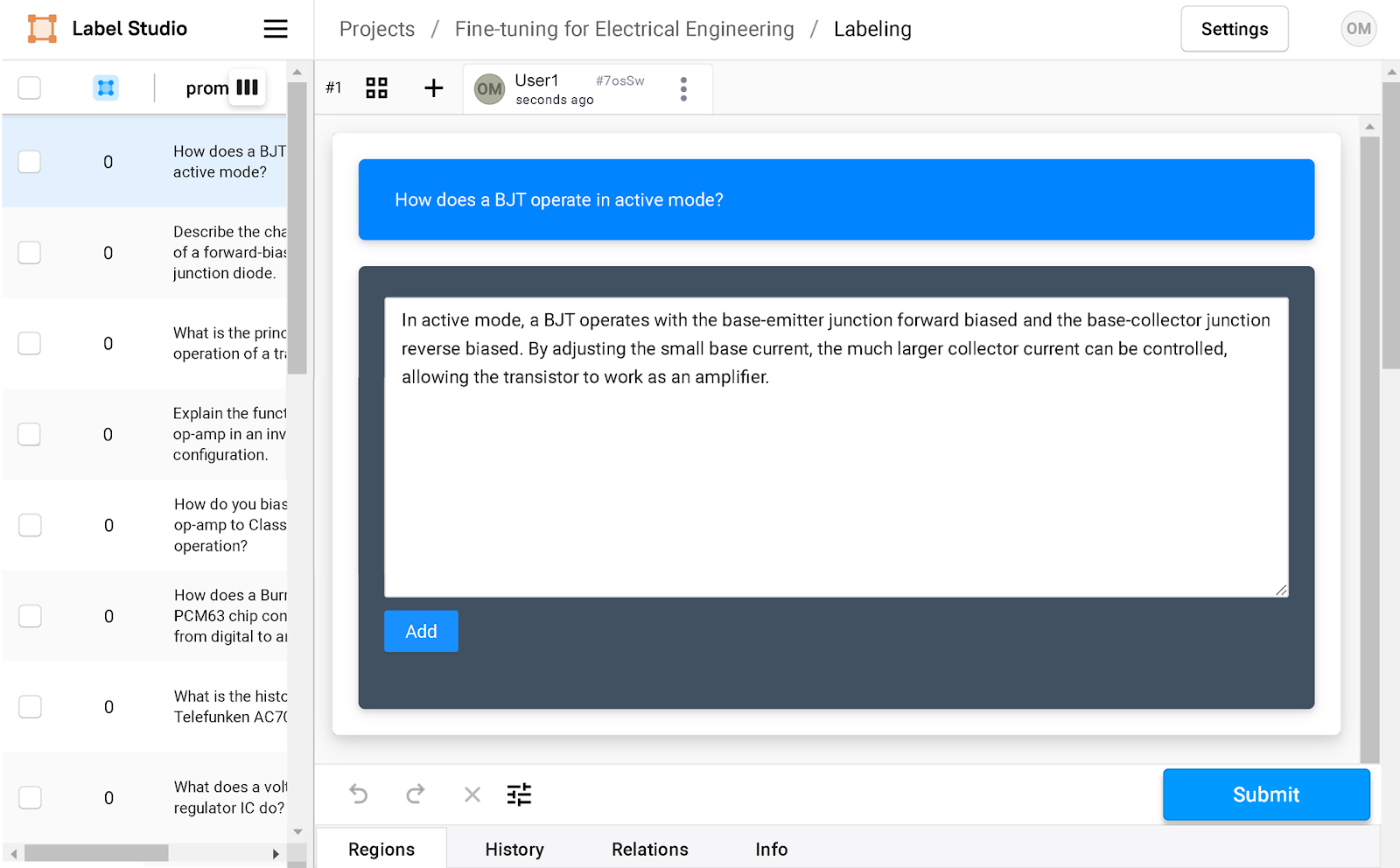

The initial set of prompts can be imported or added manually. For this fine-tuning project, we will use electrical engineering questions as our prompts:

How does a BJT operate in active mode?

Describe the characteristics of a forward-biased PN junction diode.

What is the principle of operation of a transformer?

Explain the function of an op-amp in an inverting configuration.

What is a Wheatstone bridge circuit used for?

Classify the following component as active or passive: Capacitor.

How do you bias a NE5534 op-amp to Class A operation?

How does a Burr-Brown PCM63 chip convert signals from digital to analog?

What is the history of the Telefunken AC701 tube?

What does a voltage regulator IC do?

The questions appear as a list of tasks in the dashboard.

Clicking on each question opens the annotation window, where the expected response can be added.

Once all of the data points are labeled, click the Export button to export your labeled data to a JSON, CSV, or TSV file. In this example, we’re exporting to a CSV file. However, to fine-tune GPT-4o, OpenAI requires the format of the training data to be consistent with its Chat Completions API. The data should be structured in JSON Lines (JSONL) format, with each line containing a “messages” object. A messages object can contain multiple pieces of content, each with its own role, either “system,” “user,” or “assistant”:

System: Content with the system role modifies the behavior of the model. For example, the model can be instructed to adopt a sarcastic persona or write in an action-packed manner. The system role is optional.

User: Content with the user role contains examples of requests or prompts.

A: Content with the assistant role gives the model examples of how it should respond to the request or prompt contained in the corresponding user content.

The following is an example of one messages object containing an instruction and expected response (shown across several lines for readability; in the JSONL file, each object occupies a single line):

{"messages": [
  {"role": "user", "content": "How does a BJT operate in active mode?"},
  {"role": "assistant", "content": "In active mode, a BJT operates with the base-emitter junction forward biased and the base-collector junction reverse biased. By adjusting the small base current, the much larger collector current can be controlled, allowing the transistor to work as an amplifier."}
]}
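Before uploading, it can help to sanity-check each line of the file against the structure described above. The checks in this sketch are assumptions drawn from that description (valid JSON, a non-empty “messages” list, known roles, non-empty content, at least one assistant message); they are not OpenAI’s official validation rules.

```python
import json

ALLOWED_ROLES = {"system", "user", "assistant"}

def validate_jsonl_line(line):
    """Return a list of problems found in one JSONL line (an empty list means none found)."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(record, dict):
        return ["top-level value is not an object"]
    messages = record.get("messages")
    if not isinstance(messages, list) or not messages:
        return ['missing or empty "messages" list']
    problems = []
    for i, message in enumerate(messages):
        if not isinstance(message, dict):
            problems.append(f"message {i}: not an object")
            continue
        if message.get("role") not in ALLOWED_ROLES:
            problems.append(f"message {i}: unexpected role {message.get('role')!r}")
        content = message.get("content")
        if not isinstance(content, str) or not content.strip():
            problems.append(f"message {i}: empty or missing content")
    if not any(isinstance(m, dict) and m.get("role") == "assistant" for m in messages):
        problems.append("no assistant message for the model to learn from")
    return problems

good = json.dumps({"messages": [
    {"role": "user", "content": "What does a voltage regulator IC do?"},
    {"role": "assistant", "content": "It keeps the output voltage steady."},
]})
bad = json.dumps({"messages": [{"role": "customer", "content": ""}]})
print(validate_jsonl_line(good))
print(validate_jsonl_line(bad))
```

Running the function over every line of the file and printing the line numbers with problems gives a quick report before any training job is started.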

A Python script was created to convert the CSV data into the correct format. The script opens the CSV file that was created by Label Studio and iterates through each row, converting it into the JSONL format:

import json

import pandas as pd  # pandas reads the CSV file exported by Label Studio

# engineering-data.csv is the CSV file that Label Studio exported
df = pd.read_csv("C:/datafiles/engineering-data.csv")

# Write one JSON object per line, which is what the JSONL format requires
with open("C:/datafiles/finetune.jsonl", "w", encoding="utf-8") as data_file:
    for _, row in df.iterrows():
        # In this export, "prompt" holds the question and "instruction" holds the expected response
        instruction = row["instruction"]
        prompt = row["prompt"]
        data_file.write(json.dumps(
            {"messages": [
                {"role": "user", "content": prompt},
                {"role": "assistant", "content": instruction}
            ]}))
        data_file.write("\n")  # newline separates one JSONL record from the next

Once the data is ready, it can be used for fine-tuning at platform.openai.com.

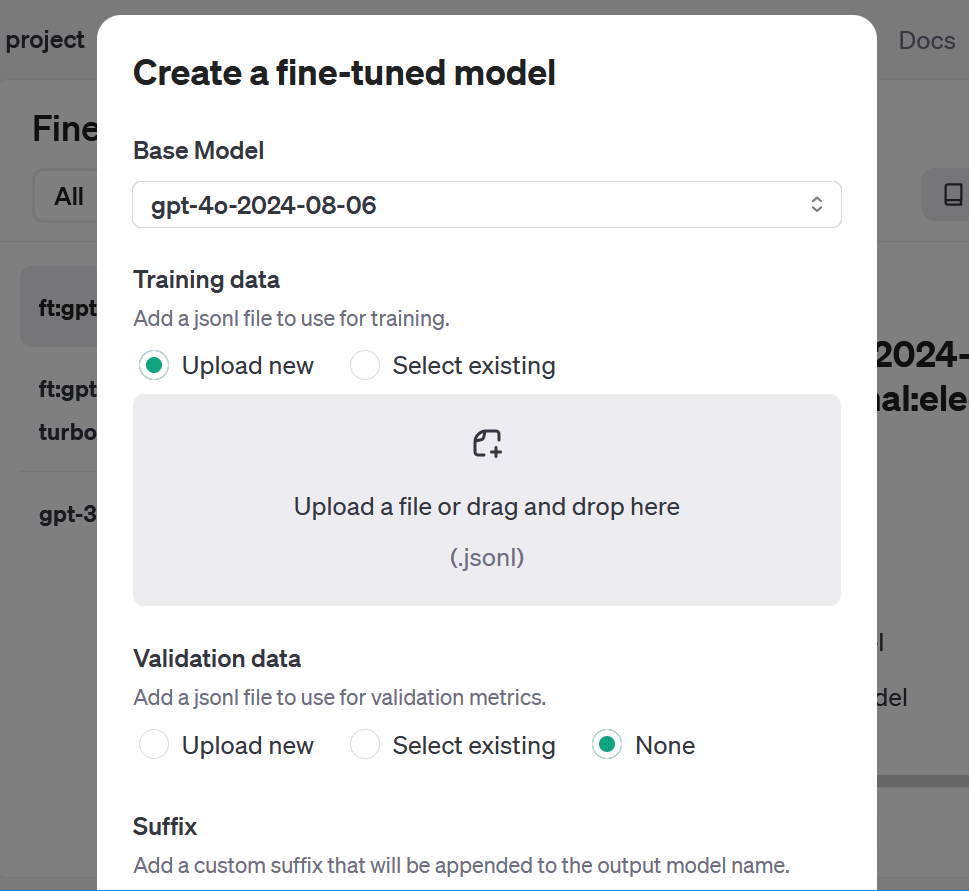

To access the fine-tuning dashboard, click Dashboard at the top and then Fine-tuning in the left navigation menu. Clicking the Create button brings up an interface that allows you to select the model you want to train, upload the training data, and adjust three hyperparameters: learning rate multiplier, batch size, and number of epochs. The most current model, gpt-4o-2024-08-06, was selected for this test. The hyperparameters were left at their default setting of Auto. OpenAI also lets you add a suffix to help differentiate your fine-tuned models. For this test, the suffix was set to “electricalengineer.”

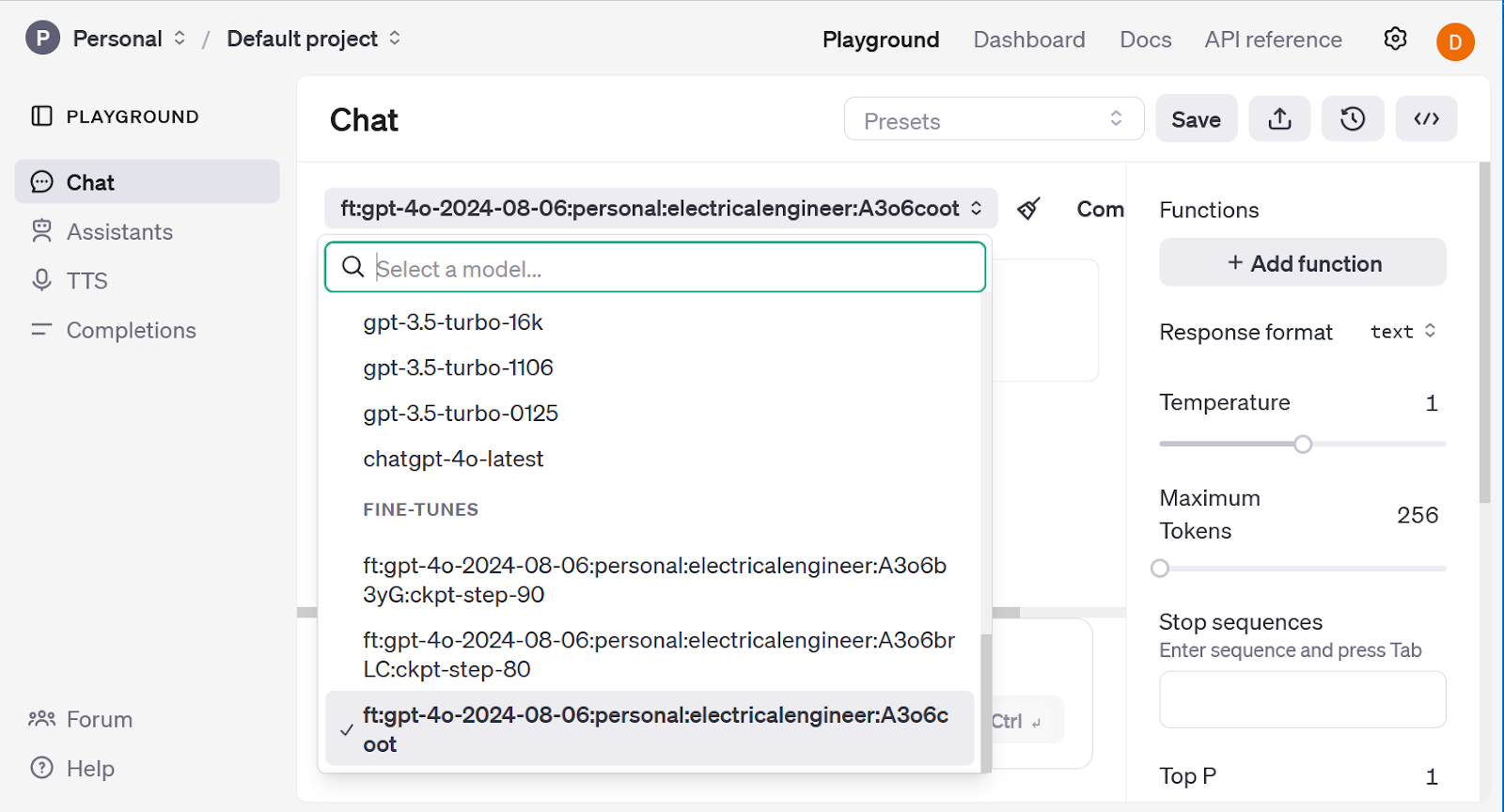

The fine-tuning process for GPT-4o, including validation of training data and evaluation of the completed model, lasted approximately three hours and resulted in 8,700 trained tokens. In contrast, GPT-4o mini, a smaller and more cost-efficient model, completed the fine-tuning process in just 10 minutes.

The results can be tested by clicking the Playground link. Clicking on the gray drop-down menu near the top of the page shows you the available models, including the fine-tuned model. Also included are additional models that represent checkpoints from the last three epochs of the training. These models can be used for various purposes, including in cases of overfitting; models at earlier checkpoints can be tested to determine when the overfitting occurred.

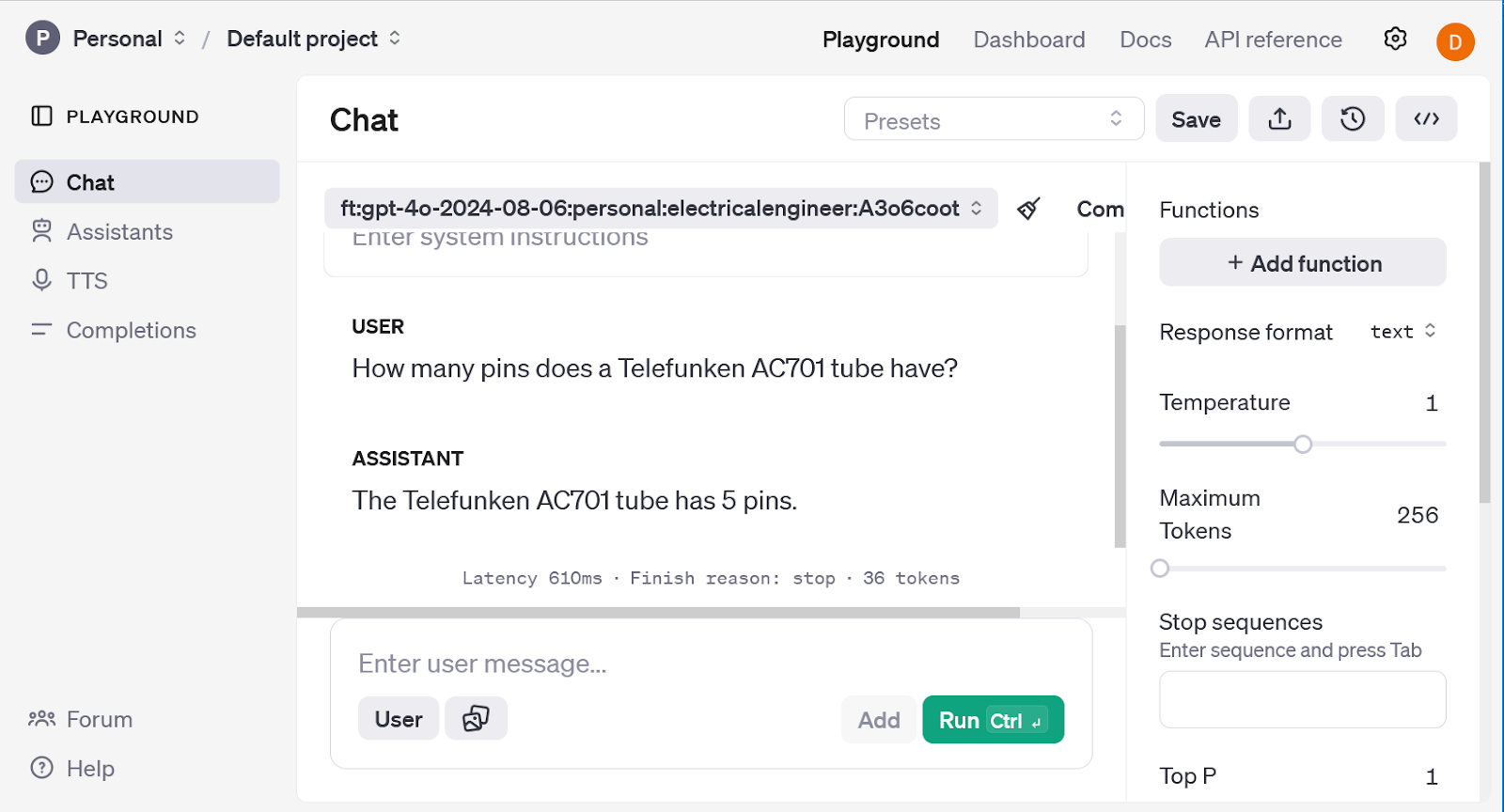

To test the results of the fine-tuning process, the model was set to gpt-4o and asked an obscure question that it might not have the answer to:

How many pins does a Telefunken AC701 tube have?

The model responded with:

The Telefunken AC701 is a miniature tube often used in vintage microphone designs. It has 8 pins.

While the response is mostly correct, there is one small error. The Telefunken AC701 is a tube that was used in some of the most famous vintage microphones in history, including the Neumann M49, M269, KM54, Schoeps M221B, and Telefunken Ela-M251. However, the AC701 actually has 5 pins.

The model was then set to the fine-tuned model ft:gpt-4o-2024-08-06:personal:electricalengineer:A3o6coot and asked the same question. Because the training data contained information about the AC701 tube, the response from the fine-tuned model was:

The Telefunken AC701 has 5 pins.

For this question, the fine-tuning process was successful and the model was able to learn new information about a vintage vacuum tube.

OpenAI’s fine-tuning platform is easy to use and effective; however, it is limited to OpenAI models. If you want to fine-tune LLMs like Llama and Mistral, there are several tools available, including AutoTrain, Axolotl, LLaMA-Factory, and Unsloth.

The Future of Large Language Models

Fine-tuned LLMs have already shown incredible promise, with models like MedLM and CoCounsel being used professionally in specialized applications every day. An LLM that is tailored to a specific domain is an extremely powerful and useful tool, but only when fine-tuned with relevant and accurate training data. Automated methods, such as using an LLM for data labeling, are capable of streamlining the process, but building and annotating a high-quality training dataset requires human expertise.

As data labeling techniques evolve, the potential of LLMs will continue to grow. Innovations in active learning will increase accuracy and efficiency, as well as accessibility. More diverse and comprehensive datasets will also become available, further improving the data the models are trained on. Additionally, techniques such as retrieval augmented generation (RAG) can be combined with fine-tuned LLMs to generate responses that are more current and reliable.

LLMs are a relatively young technology with plenty of room for growth. As data labeling methodologies continue to be refined, fine-tuned LLMs will become even more capable and versatile, driving innovation across an even wider range of industries.

The technical content presented in this article was reviewed by Necati Demir.
